What Is Muse Spark? Meta's New AI Model

Meta launched Muse Spark from its new Superintelligence Labs. Here's what it does, what's confirmed, and what builders should watch.

Four tabs. That’s how many I had open on Tuesday night — one for each AI assistant I rotate through during a normal work week. Wednesday morning I woke up to a fifth name in my feed. Muse Spark. Meta’s new model, live immediately, built by a team that didn’t exist a year ago.

Hey, it’s Dora here! My first instinct wasn’t excitement. It was: do I need to open a fifth tab? This piece documents what I found after spending a day pulling apart the confirmed facts, the gaps, and the things that matter if you’re building anything on top of AI right now.

What Muse Spark Is — and Where It Comes From

Meta Superintelligence Labs: the New Unit Led by Alexandr Wang

Muse Spark is the first model out of Meta Superintelligence Labs (MSL), the AI unit led by Alexandr Wang, who joined Meta nine months ago after co-founding Scale AI. Meta created this lab in response to criticism that its prior AI models underperformed, and CEO Mark Zuckerberg subsequently recruited AI researchers from OpenAI, Anthropic, and Google. The investment behind it is not small — Meta spent $14.3 billion acquiring a 49% nonvoting stake in Scale AI to bring Wang on board as its first-ever chief AI officer.

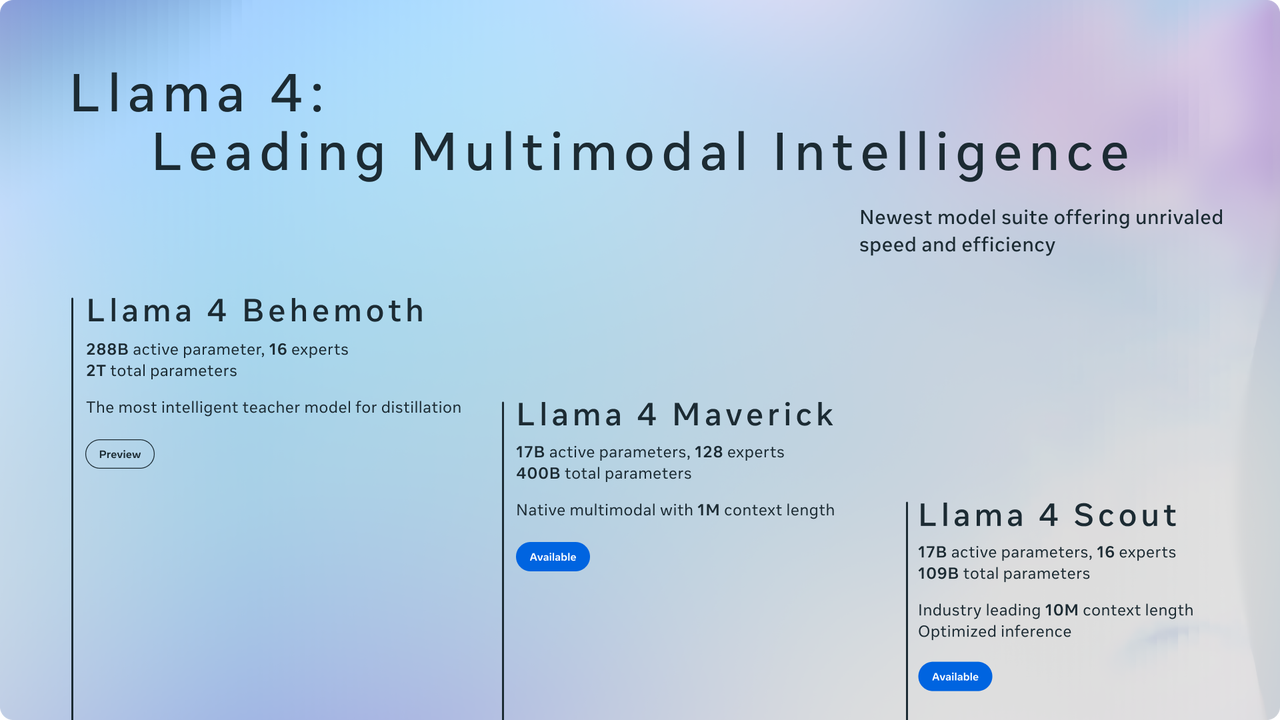

The Llama Problem: Why Meta Rebuilt from Scratch

If you followed the Llama 4 launch last April, you already know the backstory. Llama 4 was widely criticized as a dud, and Meta was later caught using specialized, unreleased model versions fine-tuned for specific tasks to inflate benchmark scores. That credibility hit is the context for everything Muse Spark is trying to do. MSL rebuilt Meta’s AI stack from the ground up over the past nine months, calling it the fastest development cycle they’ve ever run.

Code-Named Avocado, Built in 9 Months

Internally code-named Avocado, Muse Spark is the first model in Meta’s new Muse series. Meta describes it as deliberately small and fast — their technical blog states that improved training techniques enabled them to create smaller models matching older midsize Llama 4 performance for an order of magnitude less compute.

That efficiency claim is worth watching. It’s not about raw benchmark dominance. It’s about cost structure.

What Muse Spark Can Actually Do

Instant Mode vs Thinking Mode: When Each Applies

Muse Spark runs in tiered reasoning modes. Instant mode handles casual, fast-turnaround queries — the kind you’d throw at an assistant ten times a day. Thinking mode adds step-by-step reasoning for more complex tasks: legal document analysis, nutritional breakdowns from photos, multi-step math. Users of the Meta AI app can alternate between modes depending on the sophistication of their prompts.

Multimodal Understanding: Image, Audio, Text Input → Text and Interactive Output

The model accepts voice, text, and image inputs, but it does not generate images, audio, or video. That’s an important distinction. “Multimodal” here means perception, not generation. Snap a photo, speak a question, paste a screenshot: Muse Spark processes all of those. But what comes back is text, including code that renders as interactive elements (websites, dashboards, games), not images or video.

Meta built strong multimodal perception into Muse Spark so the assistant can see and understand what you’re looking at, not just read what you type. Their example: photograph an airport snack shelf and get a protein-ranked breakdown without reading labels.

Visual STEM, Visual Coding, Mini-Games: Confirmed Interactive Output Capabilities

This is the part most coverage is underplaying. Muse Spark can generate custom interactive websites, dashboards, and mini-games straight from a natural language prompt — what Meta calls “visual coding.” Their official blog post describes building retro arcade games, flight simulators, and party planning dashboards from a single sentence. The model also handles visual STEM questions that can lead to interactive experiences like creating fun minigames or troubleshooting home appliances.

This isn’t image generation. It’s code generation with a visual output layer. Different category, different use cases.

Multi-Subagent Coordination for Complex Requests

Muse Spark can launch multiple subagents in parallel to tackle a question — for instance, planning a family trip where one agent drafts the itinerary, another compares destinations, and a third finds kid-friendly activities, all simultaneously. I haven’t tested this myself yet. The architecture is interesting; the real-world reliability is unverified.
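To make the fan-out pattern concrete, here is a minimal sketch of parallel subagents in plain Python. Everything in it is my own illustration: `run_subagent`, `fan_out`, and the role names are hypothetical stand-ins, not Meta's API, and the "model call" is a stub that just sleeps. It shows only the general shape of launching several workers at once and collecting their results, not how Muse Spark implements it.

```python
import asyncio

# Hypothetical role names taken from the family-trip example above.
ROLES = ["itinerary", "destination comparison", "kid-friendly activities"]


async def run_subagent(role: str, request: str) -> str:
    """Stand-in for one subagent. A real system would call a model here."""
    await asyncio.sleep(0.01)  # placeholder for network / inference latency
    return f"[{role}] draft for: {request}"


async def fan_out(request: str, roles: list[str]) -> dict[str, str]:
    """Start every subagent concurrently and wait for all of them."""
    results = await asyncio.gather(*(run_subagent(r, request) for r in roles))
    # gather() preserves argument order, so results line up with roles.
    return dict(zip(roles, results))


plan = asyncio.run(fan_out("family trip", ROLES))
for text in plan.values():
    print(text)
```

The stub always succeeds, which is exactly the part I can't vouch for in the real product: a production version would need timeouts, retries, and a step that reconciles conflicting subagent answers.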

Contemplating Mode: Confirmed Upcoming, No Timeline

Meta plans to roll out a “Contemplating” mode that allows the model to tackle more complex problems by coordinating a group of AI agents for parallel reasoning, according to TechCrunch. Wang stated on X that Contemplating mode is competitive with other extreme reasoning models such as Gemini Deep Think and GPT Pro. No public timeline. The Artificial Analysis benchmark data shows early Contemplating scores of 50.2% on Humanity’s Last Exam, but this was tested under conditions Meta provided, not independently replicated at scale.

What Muse Spark Is Not

Not a Standalone Image/Video Generation Model

I want to be blunt here because I’ve already seen this conflated in multiple articles. Muse Spark does not generate images or video. Meta’s Vibes AI video feature in the Meta AI app currently uses AI models from third parties such as Black Forest Labs, and Meta only plans for Muse Spark to “eventually” power it. As of launch, if you’re generating video through Meta AI, that’s not Muse Spark doing the work.

Not Open Weights — a Deliberate Pivot from Llama Strategy

Unlike Meta’s previous Llama models, which were released as open-weight models anyone could download, modify, and run, Muse Spark is proprietary. Meta has said they “hope to open-source future versions,” and Axios reported that an open-source release is planned. But right now, the weights are closed. For teams that built on Llama’s openness, that’s a meaningful shift.

Not a Public API (Private Preview Only, Select Partners)

Meta is offering Muse Spark in private preview via API to select partners only. No public API pricing, no announced timeline for general access. If you’re a builder hoping to integrate this, you’re waiting.

Where It’s Available Today

meta.ai and Meta AI App: Live as of April 8, 2026

Muse Spark currently powers the Meta AI app and the meta.ai website, with a new look rolling out alongside the model upgrade. All modes are free to use, though Meta may impose rate limits.

WhatsApp, Instagram, Facebook, Messenger, AI Glasses: Rolling Out

Muse Spark will be rolling out to WhatsApp, Instagram, Facebook, Messenger, and AI glasses in the coming weeks.

API: Private Preview to Select Partners Only

No public access. No pricing. This is where my data ends.

Performance Context

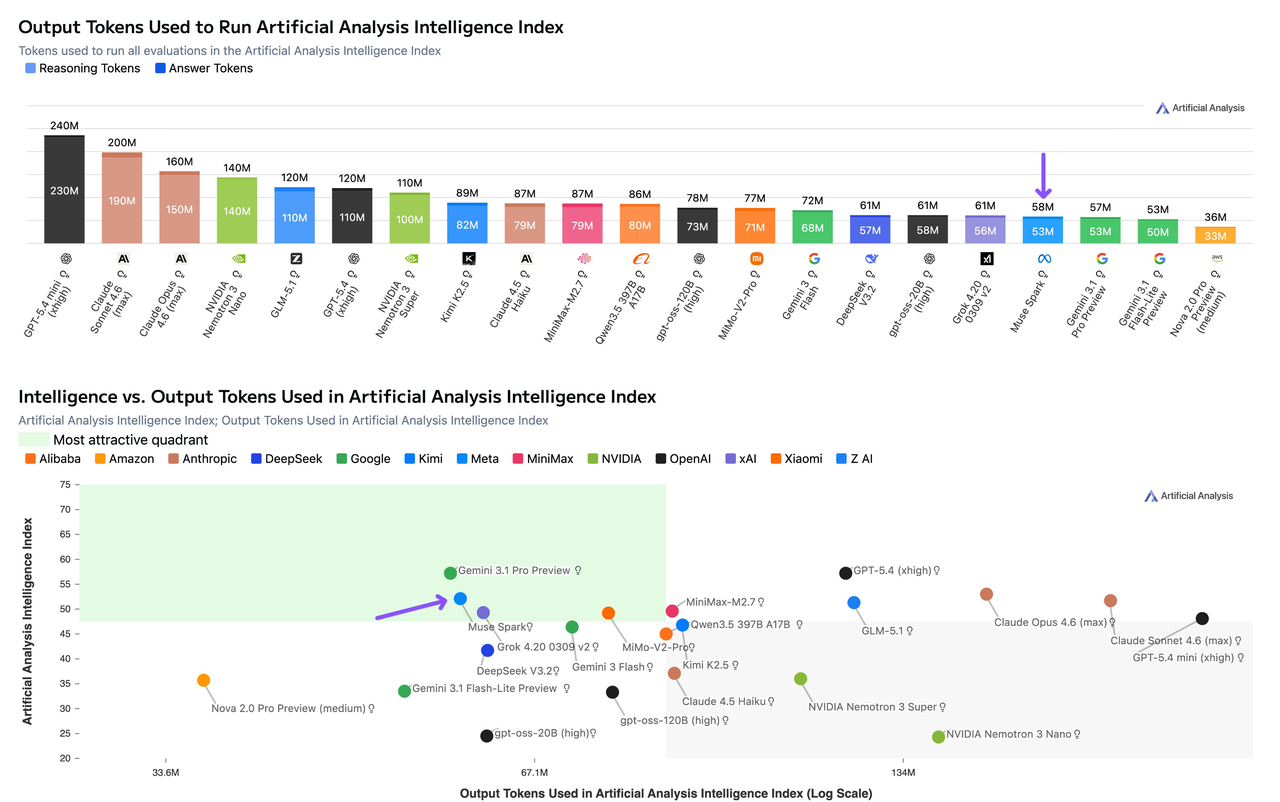

Artificial Analysis Intelligence Index: 52

Muse Spark scores 52 on the Artificial Analysis Intelligence Index, placing it in the top 5, behind Gemini 3.1 Pro Preview (57), GPT-5.4 (57), and Claude Opus 4.6 (53). An important caveat: Artificial Analysis was given early access by Meta to independently benchmark the model. Independent, yes. But on Meta’s terms and timeline.

For context on how far Meta has moved: Llama 4 Maverick and Scout scored 18 and 13 respectively on the same index. That’s roughly a 3x jump over Maverick and a 4x jump over Scout.

One number that caught my attention: Muse Spark used just 58 million output tokens to complete the full evaluation, compared to Claude Opus 4.6’s 157 million and GPT-5.4’s 120 million. Token efficiency at that scale isn’t a footnote — it’s a cost story.
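If you want to check the ratios behind the index and token numbers yourself, the arithmetic is short. The figures below are the ones quoted in this article; the variable names are mine, and output-token count is only a rough proxy for cost, since no API pricing has been announced.

```python
# Figures as quoted in this article (Artificial Analysis evaluation run).
output_tokens_millions = {"Muse Spark": 58, "Claude Opus 4.6": 157, "GPT-5.4": 120}
index_scores = {"Muse Spark": 52, "Llama 4 Maverick": 18, "Llama 4 Scout": 13}

baseline = "Muse Spark"

# How many times more output tokens each rival used than Muse Spark.
token_ratio = {
    model: round(tokens / output_tokens_millions[baseline], 2)
    for model, tokens in output_tokens_millions.items()
    if model != baseline
}

# How many times higher Muse Spark scores than each Llama 4 model.
score_jump = {
    model: round(index_scores[baseline] / score, 2)
    for model, score in index_scores.items()
    if model != baseline
}

print(token_ratio)  # {'Claude Opus 4.6': 2.71, 'GPT-5.4': 2.07}
print(score_jump)   # {'Llama 4 Maverick': 2.89, 'Llama 4 Scout': 4.0}
```

So the rivals used roughly 2x to 2.7x the output tokens for the same evaluation. Whether that translates into a matching price gap depends on per-token pricing nobody outside Meta has seen yet.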

Meta’s Stated Areas of Current Gap

Meta openly acknowledges performance gaps in long-horizon agentic systems and coding workflows. The VentureBeat analysis confirms this: Muse Spark trails significantly on coding benchmarks like Terminal-Bench and on agentic task evaluations. If your workflow is code-heavy, this isn’t your model. Not yet.

Privacy and Data Considerations

Meta Account Login Required

Muse Spark users need to log in with an existing Meta account such as Facebook or Instagram. There’s no anonymous access path.

Meta’s Data Policy: What Users Should Know

Axios noted that Meta’s privacy policy sets few limits on how the company can use any data shared with its AI system. Meta doesn’t explicitly say that personal information from a Facebook or Instagram account will be used by the AI, but it’s likely, considering that Meta generally trains on public user data and has positioned Muse Spark as a personal superintelligence product.

If you’re evaluating this for any workflow involving sensitive inputs — client data, health questions, internal documents — read the Meta Privacy Policy before you type anything into that box. That’s not a warning. It’s a workflow step.

FAQ

Is Muse Spark free to use?

Yes. All modes of the model are free through meta.ai and the Meta AI app, though Meta may impose rate limits.

Is Muse Spark open source?

No. Muse Spark is proprietary, though Meta has expressed “hope to open-source future versions of the model.” This is a break from the Llama strategy.

Can Muse Spark generate images or video?

No. Muse Spark handles text, image, and voice inputs and produces text and interactive outputs (websites, mini-games, dashboards). The Vibes video feature currently relies on third-party models, such as those from Black Forest Labs.

When will Muse Spark API be publicly available?

No confirmed date. It’s currently in private preview for select partners only. Meta has signaled intent to offer broader API access but hasn’t committed to a timeline.

How does Muse Spark compare to GPT-5.4 and Gemini?

On the Artificial Analysis Intelligence Index, Muse Spark (52) trails GPT-5.4 (57), Gemini 3.1 Pro (57), and Claude Opus 4.6 (53). It leads on health benchmarks and multimodal vision, but falls behind on coding and agentic tasks. The comparison depends entirely on your use case.

I’ll keep watching how the Contemplating mode performs once it’s publicly available, and whether the API opens up in a way that’s actually usable for third-party builders. Right now Muse Spark is interesting for what it signals about Meta’s direction — but for most builder workflows, it’s not something you can integrate yet. That could change fast. Or it might not. Run it yourself when the API drops. That’ll tell you more than anything I say.
