GPT Image 2 Pricing in 2026: What Teams Pay

Understand GPT Image 2 pricing in 2026, including per-image costs, token-based image pricing, edit costs, and what teams should budget for production use.

I rebuilt my pricing spreadsheet three times last week. Each time, the per-image number came out different, and each time I was using the same model — gpt-image-2. The problem wasn’t the math. The problem was that I kept treating GPT Image 2 like it had a price tag, when what it actually has is a billing system. There’s a meaningful gap between those two things, and that gap is where most team budgets quietly start leaking.

I’m Dora. I spend more time than I’d like reading pricing pages. Most of what I write comes out of trying to answer a single question for a product team or a finance partner: what is this actually going to cost us at our volume? This piece is the version of that answer I wish I’d had two weeks ago, when a designer on my team asked me whether we could afford to generate 200 ad variants a week and I said “probably” — which turned out to be a 40% miss in either direction depending on the day.

If you’re trying to put a number next to “image generation” in a 2026 budget line, here’s how the model actually bills, where the costs hide, and what teams in production tend to pay.

How GPT Image 2 Pricing Works

Per-image pricing vs token-based image pricing

GPT Image 2 is sold two ways, and conflating them is the single fastest way to blow up your forecast.

The first way is the consumer-facing one: ChatGPT Plus at $20/month, Pro at $200/month, or the free tier with rate limits. That’s flat. You don’t think about it per image. You hit a usage cap or you don’t.

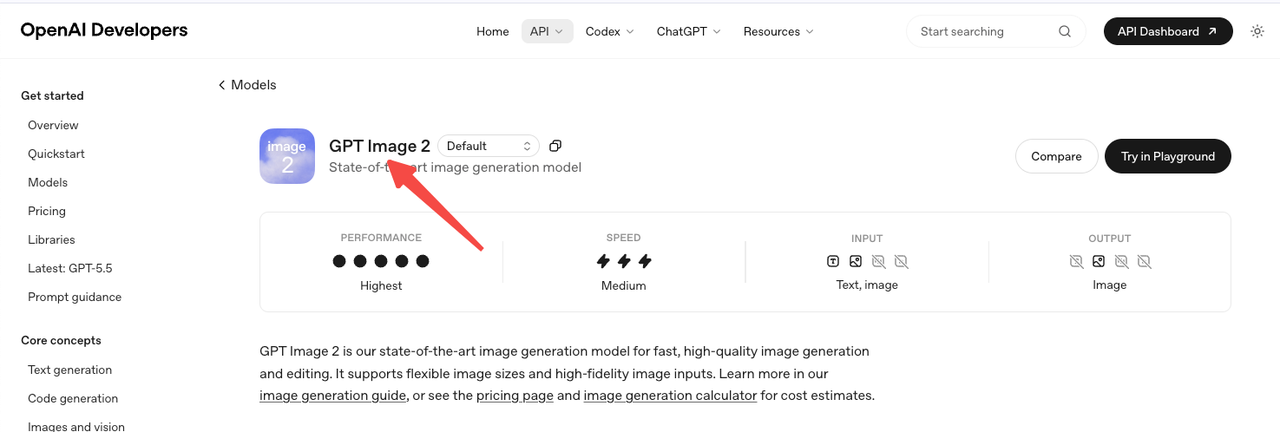

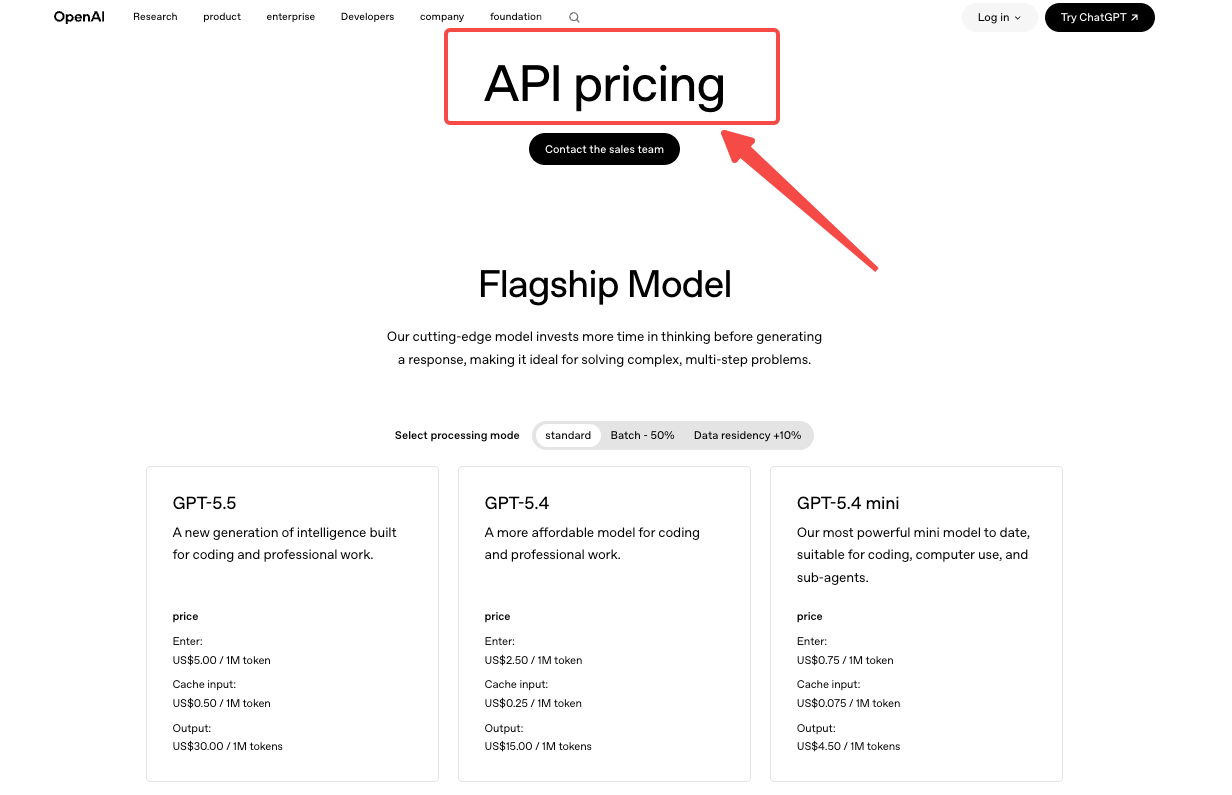

The second way is the API, and the API is token-based, not per-image. According to the OpenAI API pricing page, gpt-image-2 charges $8.00 per million image input tokens, $2.00 per million cached image input tokens, $30.00 per million image output tokens, plus $5.00 per million text input tokens. The “per-image” numbers you see quoted in articles — $0.006, $0.053, $0.211 — are estimates derived from the calculator, not list prices.

That distinction matters because token consumption isn’t constant. It moves with size, quality, edits, and how clean your prompt is. A team that thinks it’s paying $0.05 per image often finds out, three weeks in, that its actual blended cost is $0.11.
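
To make the token math concrete, here is a minimal sketch of how one request’s cost falls out of the four rates quoted above. The rates come from this article; the token counts in the example are invented for illustration, and `request_cost` is my own helper name, not part of any OpenAI SDK.

```python
# Rates quoted in this article, in USD per million tokens.
RATES_PER_MILLION = {
    "text_input": 5.00,
    "image_input": 8.00,
    "cached_image_input": 2.00,
    "image_output": 30.00,
}


def request_cost(tokens: dict) -> float:
    """Return the USD cost of one request from token counts per category."""
    return sum(
        RATES_PER_MILLION[kind] * count / 1_000_000
        for kind, count in tokens.items()
    )


# Hypothetical token counts, for illustration only.
example_tokens = {"text_input": 200, "image_output": 1_500}
print(f"${request_cost(example_tokens):.4f}")
```

Swap in the token counts from your own usage data and the “per-image” figure becomes whatever your requests actually consume.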

What changes with size and quality settings

Quality is the biggest lever. Per the calculator referenced in the OpenAI image generation guide, a 1024×1024 output runs roughly $0.006 at low quality, $0.053 at medium, and $0.211 at high. That’s a 35x spread on the same canvas size. Switch to 1024×1536 (portrait) and the same quality tiers come in around $0.005, $0.041, and $0.165. That is about 17% to 23% cheaper despite the larger canvas, which is counterintuitive until you accept it.

Size doesn’t move the bill predictably either. 4K via third-party hosts like fal.ai climbs to about $0.41 per image at high quality. Those are real numbers, but they’re not “official OpenAI” numbers — different surface, different contract.
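
If you want to check the spread yourself, a small lookup table is enough. This is a sketch built only from the estimates quoted in this section; the dictionary layout and function names are my own.

```python
# Per-image estimates quoted in this article (USD), keyed by size, then quality.
ESTIMATES = {
    "1024x1024": {"low": 0.006, "medium": 0.053, "high": 0.211},
    "1024x1536": {"low": 0.005, "medium": 0.041, "high": 0.165},
}


def quality_spread(size: str) -> float:
    """Ratio of the high-quality estimate to the low-quality one."""
    tiers = ESTIMATES[size]
    return tiers["high"] / tiers["low"]


def portrait_discount(quality: str) -> float:
    """Fraction by which the portrait estimate undercuts the square one."""
    square = ESTIMATES["1024x1024"][quality]
    portrait = ESTIMATES["1024x1536"][quality]
    return 1 - portrait / square


print(f"square spread: {quality_spread('1024x1024'):.1f}x")
for quality in ("low", "medium", "high"):
    print(f"{quality}: portrait is {portrait_discount(quality):.0%} cheaper")
```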

The Real Cost Drivers Teams Miss

Edit inputs, retries, and large output sizes

Here’s the part the pricing page doesn’t shout about, and it cost me two days of confusion.

When you send an edit request — meaning you’re passing in a reference image — gpt-image-2 processes that input at high fidelity. You can’t turn that off. The official image generation guide is explicit: the input_fidelity parameter is locked, and every reference image is billed at the high-fidelity input rate regardless of what you set quality to on the output.

Practical translation: if your workflow looks like “generate, then edit four times until it’s right,” your real cost per finished asset is closer to 2–3x the quoted per-image number. A team doing product mockups, character consistency work, or ad variants — anyone who iterates on uploaded references — is in this bucket whether they realize it or not.

Retries compound the same way. A medium-quality image at $0.053 is cheap. The same prompt run five times because the first four came out wrong is $0.265, and you only kept one. Failure rate is a real line item that nobody puts on their pricing comparison.
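
The retry arithmetic is simple enough to sketch. The first helper is plain multiplication; the second assumes each attempt succeeds independently with the same probability, which is a simplification of how prompts actually behave, so treat it as a rough planning number. Both function names are mine.

```python
def cost_per_kept_image(per_image_cost: float, attempts: float) -> float:
    """Cost of one image you keep, given how many attempts it took."""
    return per_image_cost * attempts


def expected_attempts(success_rate: float) -> float:
    """Average attempts per keeper, assuming independent attempts."""
    if not 0 < success_rate <= 1:
        raise ValueError("success_rate must be in (0, 1]")
    return 1 / success_rate


# The example from the text: five runs at the medium estimate, one keeper.
print(f"${cost_per_kept_image(0.053, 5):.3f}")

# A hypothetical 70% keep rate at the same price.
print(f"${cost_per_kept_image(0.053, expected_attempts(0.7)):.3f}")
```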

Draft generation vs final asset generation

The cleanest cost-control move I’ve found is to separate exploration from delivery. Use low quality for ideation — at $0.006 per image, you can run 30 prompt variations for under twenty cents. Then re-render only the winners at high. According to The Decoder’s coverage of the launch, the model’s quality jump over GPT Image 1.5 is real but uneven across sizes, which makes “draft cheap, finalize expensive” actually viable rather than a marketing line.

The other lever: Batch API cuts both input and output token rates by 50% if you can tolerate up to 24 hours of latency. For weekly content runs, that’s free money. For real-time product flows, it’s not an option.
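
Here is a rough sketch of what “draft cheap, finalize expensive” does to a single exploration run, using the 1024×1024 estimates quoted earlier. Applying the 50% Batch discount to a per-image estimate assumes the whole estimate is token-priced, which is my simplification, and the function name is hypothetical.

```python
LOW, HIGH = 0.006, 0.211   # 1024x1024 estimates quoted in this article (USD)
BATCH_DISCOUNT = 0.5       # Batch API halves token rates, per the text


def draft_then_finalize(n_drafts: int, n_finals: int, batch_finals: bool = False) -> float:
    """Cost of drafting at low quality, then re-rendering winners at high."""
    final_rate = HIGH * (BATCH_DISCOUNT if batch_finals else 1.0)
    return n_drafts * LOW + n_finals * final_rate


all_high = 30 * HIGH                                        # every variation at high
staged = draft_then_finalize(30, 3)                         # 30 drafts, 3 winners
staged_batch = draft_then_finalize(30, 3, batch_finals=True)

print(f"all high: ${all_high:.2f}")
print(f"staged: ${staged:.2f}")
print(f"staged + batch finals: ${staged_batch:.2f}")
```

The three-winner count is an arbitrary example; the gap between the first line and the other two is the part worth noticing.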

Budgeting GPT Image 2 for Team Workflows

I don’t trust monthly cost projections that come from a calculator alone. The math I actually run looks like this:

- Base per-image cost at the quality and size you’ll ship in production

- Multiply by your retry multiplier, meaning attempts per kept image (mine sits around 1.4x for medium quality, 1.8x for high)

- Add edit overhead if reference images are part of the loop — budget another 30–60% on top

- Subtract Batch savings for any workload that doesn’t need a synchronous response

A small marketing team running 200 medium-quality social images per month, with light editing, lands somewhere around $15–$25/month in raw API cost. The same volume at high quality with heavy iteration runs $80–$140/month. Neither number is the calculator number, and neither shows up on the pricing page. They’re what I see when I export billing.

The point isn’t the specific figures — your workflow will move them. The point is that “per-image cost” as published is a starting line, not a finish line.
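
The four-step math above fits in one function. This is a sketch of my spreadsheet logic, not an official formula: the parameter names are mine, and `batch_share` (the fraction of calls that can go through Batch) is an assumption you would set from your own workload.

```python
def monthly_estimate(
    images: int,
    base_cost: float,
    retry_multiplier: float,
    edit_overhead: float = 0.0,
    batch_share: float = 0.0,
    batch_discount: float = 0.5,
) -> float:
    """Estimate monthly API spend in USD.

    images           -- finished images you need per month
    base_cost        -- per-image estimate at your shipping quality and size
    retry_multiplier -- attempts per kept image (e.g. 1.4)
    edit_overhead    -- extra fraction for reference-image edits (e.g. 0.3)
    batch_share      -- fraction of calls that can tolerate Batch latency
    """
    cost = images * base_cost * retry_multiplier * (1 + edit_overhead)
    return cost * (1 - batch_share * batch_discount)


# 200 medium images, light editing (30% overhead), all synchronous.
medium_light = monthly_estimate(200, 0.053, 1.4, edit_overhead=0.3)

# 200 high images, heavy editing (60% overhead), all synchronous.
high_heavy = monthly_estimate(200, 0.211, 1.8, edit_overhead=0.6)

print(f"medium, light edits: ${medium_light:.2f}")
print(f"high, heavy edits: ${high_heavy:.2f}")
```

With my multipliers plugged in, both results land inside the ranges I quoted from billing, which is about as much validation as a sketch like this deserves.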

When Direct Pricing Is Simple Enough and When It Is Not

For occasional use, ChatGPT Plus at $20/month is probably the right answer and you can stop reading. The math doesn’t favor the API until you’re past roughly 400 medium-quality images a month, or building image generation into a product where subscription access doesn’t apply.
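
The 400-image figure is close to the volume at which raw API spend at the medium estimate crosses the $20 subscription price. A quick sketch of that crossover, where the function name and the optional retry multiplier are my own additions:

```python
import math


def crossover_volume(subscription_price: float, per_image_cost: float,
                     retry_multiplier: float = 1.0) -> int:
    """Smallest monthly image count whose API cost reaches the subscription price."""
    effective_cost = per_image_cost * retry_multiplier
    return math.ceil(subscription_price / effective_cost)


plain = crossover_volume(20.0, 0.053)
with_retries = crossover_volume(20.0, 0.053, retry_multiplier=1.4)

print(f"no retries: {plain} images/month")
print(f"with 1.4x retries: {with_retries} images/month")
```

Retries pull the crossover down, so it is worth running this with your own multiplier rather than the calculator number.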

Where direct pricing stops being simple: any workflow with reference image inputs, any product flow with unpredictable user volume, anything involving Thinking mode (which adds variable reasoning token overhead that isn’t published as a clean per-image figure), and anything you plan to host through a third party like fal.ai’s gpt-image-2 endpoint, where pricing is provider-owned and structurally different from OpenAI’s billing.

For anyone in those buckets, the honest budgeting move is a one-week pilot with cost logging on every call. The calculator is fine for sanity-checking. It’s not fine for committing a quarter of spend.
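
A pilot log does not need to be fancy. Below is a minimal sketch that writes one CSV row per call and projects a month from a week of data. The column names are my own choice, the costs in the example are the per-image estimates from this article rather than real billing data, and the 4.3 weeks-per-month factor is the same rough one I use elsewhere in this piece. It writes to an in-memory buffer so it runs as-is; in practice you would open a file instead.

```python
import csv
import io
from datetime import datetime, timezone

FIELDS = ["timestamp", "quality", "size", "is_edit", "cost_usd"]


def log_call(writer: csv.DictWriter, quality: str, size: str,
             is_edit: bool, cost_usd: float) -> None:
    """Append one API call to the cost log."""
    writer.writerow({
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "quality": quality,
        "size": size,
        "is_edit": int(is_edit),
        "cost_usd": f"{cost_usd:.6f}",
    })


def total_cost(log_text: str) -> float:
    """Sum the cost column of a CSV log."""
    return sum(float(row["cost_usd"]) for row in csv.DictReader(io.StringIO(log_text)))


def project_monthly(weekly_cost: float, weeks_per_month: float = 4.3,
                    buffer: float = 0.0) -> float:
    """Scale one week of spend to a month, with an optional safety buffer."""
    return weekly_cost * weeks_per_month * (1 + buffer)


log_buffer = io.StringIO()
writer = csv.DictWriter(log_buffer, fieldnames=FIELDS)
writer.writeheader()
log_call(writer, "medium", "1024x1024", False, 0.053)
log_call(writer, "high", "1024x1024", True, 0.211)

week = total_cost(log_buffer.getvalue())
print(f"week: ${week:.3f}, projected month: ${project_monthly(week):.2f}")
```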

FAQ

How much does GPT Image 2 cost per image?

There isn’t one number. Via the API, a 1024×1024 image at low quality runs about $0.006, medium about $0.053, and high about $0.211. Those are estimates from OpenAI’s calculator, not list prices. Actual cost depends on size, edits, and retries.

Are edits more expensive than fresh generations?

Yes, often substantially. gpt-image-2 always processes reference images at high fidelity, which adds image input tokens to every edit request. Edit-heavy workflows can run 2–3x the per-image baseline.

What settings increase cost fastest?

In order: quality tier (35x spread from low to high), output size at the top end (4K via third parties hits ~$0.41/image), and reference image inputs on edits. The output_format and compression settings don’t move the bill.

How should teams estimate monthly spend?

Run a one-week pilot at the quality and size you’ll actually ship. Log every call. Multiply weekly cost by 4.3 (roughly the number of weeks in an average month) and add a buffer for retries. The gpt-image-2 model page points to the official calculator, which is fine for planning but undercounts edit overhead.

Can I cut costs without changing quality?

Yes — Batch API halves token rates if you can tolerate 24-hour latency, and cached text inputs drop from $5.00 to $1.25 per million tokens when prompts are reused. Both stack.

Conclusion

GPT Image 2 isn’t expensive. It’s just billed in a way that punishes anyone who treats it as a fixed-price product. The per-image numbers in the headlines are real, but they describe one specific request — synchronous, no edits, no retries, mid-range size. The bill you actually pay is shaped by the workflow you actually run.

The one habit worth building: log a real week of calls before you commit to a budget. The calculator will get you in the right zip code. Your billing export will tell you the street address.
