Is There a Muse Spark API? Status and Alternatives

The Muse Spark API is in private preview for select partners only. Here's the current status, what Meta has confirmed, and what developers can use today.

Hello, my friends. I’m Dora. I went looking for the Muse Spark API docs on launch day. Found nothing. Tried again three days later. Still nothing. If you’re building anything that touches a model API and you saw the Muse Spark benchmarks drop, you probably did the same thing — and landed on the same empty page.

This piece documents what Meta has actually confirmed about Muse Spark API access, what remains unconfirmed, and which alternatives have production-ready endpoints right now. No speculation dressed up as analysis.

Current API Status (As of Publication)

Private preview only — to partners Meta chose

Meta’s official announcement on April 8, 2026 states they are “offering the model in private preview via API to select partners.” That sentence is doing a lot of heavy lifting. “Select partners” means Meta picked them. Not the other way around.

The technical blog on ai.meta.com phrases it slightly differently — “opening a private API preview to select users” — but the outcome is identical. If you weren’t already in a conversation with Meta’s partnerships team, you’re not in this preview.

No public API. No confirmed timeline.

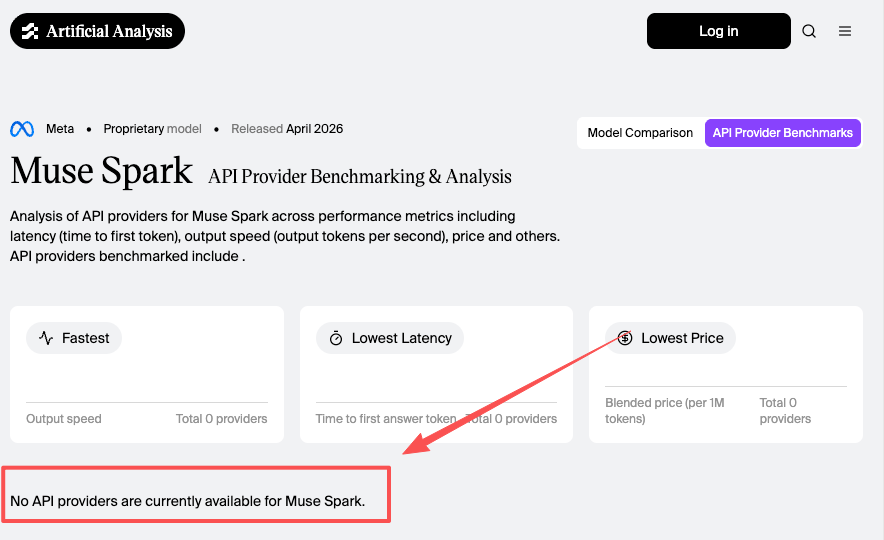

This is the part that matters for anyone writing integration code. As of mid-April 2026, Artificial Analysis lists zero API providers for Muse Spark. No endpoint documentation. No pricing page. No rate limit disclosures. No model card with a published context window figure (sources cite both 262K and 1M; Meta hasn’t clarified).

If you’re building a production integration, you’re waiting. That’s not editorial — it’s the current state of affairs.

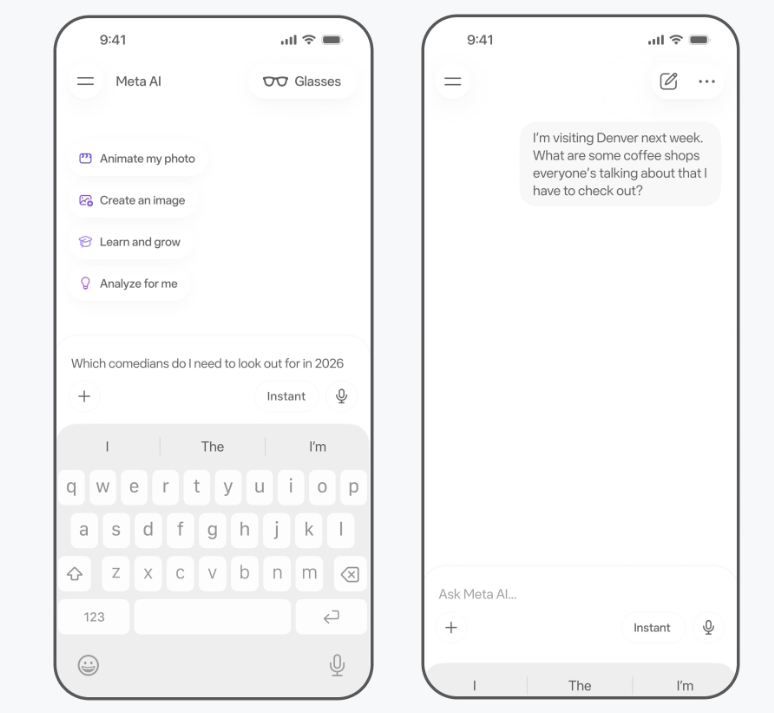

meta.ai and the Meta AI app: accessible, but not an API

You can use Muse Spark right now through meta.ai or the Meta AI app. Free. Requires a Meta account (Facebook or Instagram login). The model runs in Instant and Thinking modes through the chat interface, with a Contemplating mode rolling out later.

But a chat UI isn’t an API. You can’t call it programmatically, you can’t control parameters, you can’t integrate it into a pipeline. For evaluation purposes it’s useful. For production planning it’s irrelevant.

What Meta Has and Hasn’t Said About API Access

“Select partners” — what this actually implies

Meta hasn’t published eligibility criteria. No application form. No waitlist page. CNBC reported that Meta “plans to eventually offer paid API access to a wider audience at a later date,” and that Meta itself declined to comment beyond the initial announcement. “Eventually” and “at a later date” aren’t timelines. They’re placeholders.

The practical read: if you’re an enterprise partner already working with Meta on ad tech or platform integrations, you might have a path. If you’re an independent developer or a startup, there’s nothing to apply for right now.

No public application portal confirmed

I checked. There’s no developer signup, no waitlist form, no “request access” button on any Meta property tied to Muse Spark. This is different from how OpenAI and Anthropic handled their launches — both had public API access within days of announcement.

Open-source future versions: promised, not scheduled

Mark Zuckerberg posted on Threads that Meta plans to “release increasingly advanced models” including open-source ones. The official blog says Meta “hopes to open-source future versions of the model.” Hope isn’t a commitment. Axios reported plans for an open-source release, but details are absent. Given that Muse Spark is a sharp departure from Meta’s entire Llama strategy — closed weights, no local deployment, no self-hosting — treating this promise as actionable would be premature.

What the API Is Expected to Support (Based on Confirmed Info Only)

Multimodal input: text, image, audio

This is confirmed. Muse Spark accepts text, image, and audio inputs natively. Meta describes it as “built from the ground up to integrate visual information across domains and tools.” Output is text-only for now. The model handles visual STEM questions, entity recognition, chart reading, and image-based Q&A.

For API callers, multimodal input is table stakes in April 2026. Claude, Gemini, and GPT-5.4 all accept image input. Audio input support varies — but the point is that multimodal alone doesn’t differentiate Muse Spark at the API level.

Thinking mode via API: likely, not confirmed

The chat interface exposes Instant and Thinking modes. A Contemplating mode (multi-agent parallel reasoning) is rolling out. Whether these modes will be individually addressable via API parameters — the way Anthropic exposes extended thinking or OpenAI offers reasoning effort levels — hasn’t been stated.

I’d expect it. But expecting and confirming are different things.

What remains unconfirmed

Pricing. Per-token rates. Rate limits. Endpoint format. Authentication method. Context window (the 262K vs 1M discrepancy is still unresolved). Data retention policy for API inputs. Batch processing support. Whether it’ll be available through aggregators like OpenRouter. None of this has been published.

What Developers Can Use Right Now

If you need a frontier model API today — not next month, not “eventually” — here’s what’s actually available with published docs, pricing, and stable endpoints.

Claude Opus 4.6 / Sonnet 4.6 via Anthropic API

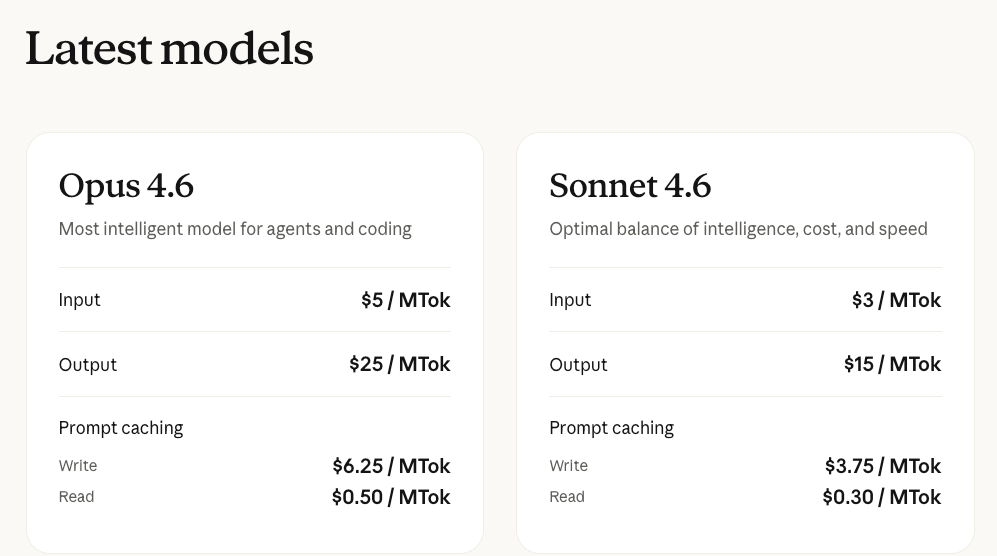

Opus 4.6 sits at $5/$25 per million tokens (input/output). Sonnet 4.6 at $3/$15. Both ship with a 1M context window at standard pricing — no surcharge until you cross 200K tokens. Prompt caching cuts repeat-context costs by up to 90%. Batch API gives a flat 50% discount for async workloads.
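To make those rates concrete, here is a rough cost sketch. The per-million rates and the 50% batch discount are the ones quoted above. The dictionary keys are just labels (not API model IDs), the workload size is hypothetical, and I'm assuming the batch discount applies equally to input and output tokens and that every request stays under 200K tokens. Caching is left out because "up to 90%" isn't a fixed number.

```python
# Rates in USD per million tokens (input, output), as quoted in this article.
# Keys are labels for this sketch, not official API model IDs.
RATES = {
    "opus-4.6": (5.00, 25.00),
    "sonnet-4.6": (3.00, 15.00),
}
BATCH_DISCOUNT = 0.50  # flat 50% for async batch workloads


def estimate_cost(model: str, input_tokens: int, output_tokens: int,
                  batch: bool = False) -> float:
    """Estimate the USD cost of a workload from aggregate token counts."""
    in_rate, out_rate = RATES[model]
    cost = (input_tokens * in_rate + output_tokens * out_rate) / 1_000_000
    # Assumption: the batch discount applies to both input and output tokens.
    if batch:
        cost *= 1 - BATCH_DISCOUNT
    return round(cost, 2)


if __name__ == "__main__":
    # Hypothetical workload: 10M input tokens and 2M output tokens in total.
    for name in RATES:
        standard = estimate_cost(name, 10_000_000, 2_000_000)
        batched = estimate_cost(name, 10_000_000, 2_000_000, batch=True)
        print(f"{name}: ${standard} standard, ${batched} batched")
```

For that hypothetical workload, Opus comes out at $100 standard and $50 batched, and Sonnet at $60 and $30.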

Where it’s strong: long-document processing, careful writing output, coding (80.8% on SWE-Bench Verified — still the top mark), and complex reasoning tasks. Artificial Analysis ranks it behind only GPT-5.4 on their Intelligence Index.

Where it’s not: if your workload is latency-sensitive at scale, Opus is slower than the lighter models. Sonnet 4.6 is the better fit there. The pricing is mid-tier — not the cheapest, not the most expensive.

Gemini 3.1 Pro via Vertex AI / Google AI Studio

$2/$12 per million tokens for contexts under 200K. Doubles above that threshold. Currently in preview — stable GA pricing may shift slightly. Supports text, image, audio, and video input. That last one matters. Gemini 3.1 Pro is the only frontier model in this tier that accepts video natively.

ARC-AGI-2 score of 77.1% is the highest in its class. Coding performance is competitive (80.6% SWE-Bench Verified). Thinking levels give you fine-grained cost control — Medium handles most tasks without paying for High.

The catch: preview status means the API contract could change before GA. For production code, pin your model version and test against updates.

GPT-5.4 via OpenAI API

$2.50/$15 per million tokens at standard context. Above 272K tokens, input pricing doubles. 1M+ context window. Released March 5, 2026 — the most battle-tested of the current frontier models by deployment volume.
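The long-context threshold is easy to miss when budgeting, so here is a small sketch of how it changes a single request's cost. The rates and the 272K threshold come from the paragraph above. Two things are my assumptions: that the doubled input rate applies to the whole prompt once it crosses the threshold (rather than only to the tokens above it), and that the output rate stays the same.

```python
INPUT_RATE = 2.50    # USD per million input tokens, standard context
OUTPUT_RATE = 15.00  # USD per million output tokens
LONG_CONTEXT_THRESHOLD = 272_000  # input tokens


def request_cost(input_tokens: int, output_tokens: int) -> float:
    """Estimate the USD cost of one request, including the long-context bump."""
    in_rate = INPUT_RATE
    # Assumption: crossing the threshold doubles the rate for the entire prompt.
    if input_tokens > LONG_CONTEXT_THRESHOLD:
        in_rate *= 2
    return (input_tokens * in_rate + output_tokens * OUTPUT_RATE) / 1_000_000


if __name__ == "__main__":
    print(request_cost(200_000, 4_000))  # under the threshold
    print(request_cost(400_000, 4_000))  # over the threshold
```

Under these assumptions, doubling the prompt from 200K to 400K tokens takes the request from about $0.56 to about $2.06, so it can pay to trim or chunk context that sits just above the line.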

Benchmark leader on OSWorld (75%, above the human expert baseline), strong on coding and knowledge work. The GPT-5.4 Pro tier exists at $30/$180 per million tokens for extreme reasoning — most workloads don’t need it.

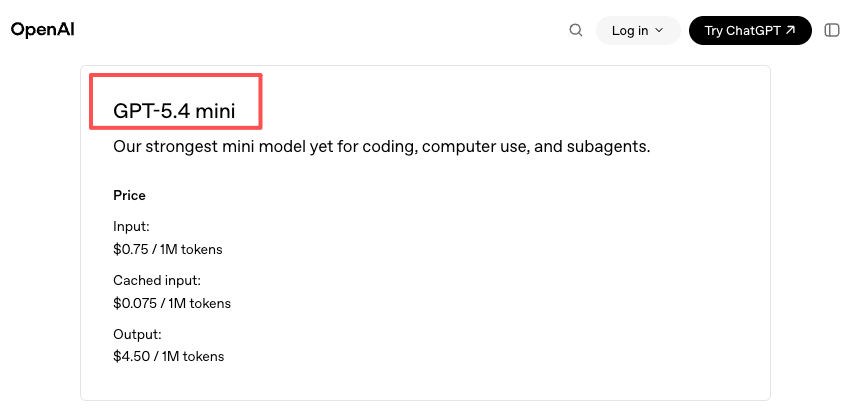

Mini variant ($0.75/$4.50) handles routine tasks at a fraction of the cost. For tiered architectures — route hard problems to 5.4, simple ones to Mini — it’s a practical setup.
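A tiered setup like that can be sketched in a few lines. Everything about the routing rule here is hypothetical: the keyword list, the length cutoff, and the tier names (which are labels, not confirmed API model IDs). Only the per-million rates come from this article. In practice you would swap the heuristic for whatever difficulty signal your own traffic gives you.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Tier:
    name: str           # label for this sketch, not an official model ID
    input_rate: float   # USD per million input tokens
    output_rate: float  # USD per million output tokens


FULL = Tier("gpt-5.4", 2.50, 15.00)
MINI = Tier("gpt-5.4-mini", 0.75, 4.50)

# Hypothetical signals that a prompt needs the larger model.
HARD_HINTS = ("prove", "refactor", "debug", "multi-step")


def pick_tier(prompt: str, max_easy_chars: int = 2000) -> Tier:
    """Send long prompts or prompts with 'hard' keywords to the full model."""
    text = prompt.lower()
    if len(prompt) > max_easy_chars or any(hint in text for hint in HARD_HINTS):
        return FULL
    return MINI


if __name__ == "__main__":
    print(pick_tier("Summarize this email in two sentences.").name)
    print(pick_tier("Debug this race condition in the worker pool.").name)
```

The point of keeping the decision in one small function is that the rule can change without touching the code that actually calls the model.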

Why monitoring the Muse Spark private preview is still worth doing

Muse Spark scored 52 on Artificial Analysis’s Intelligence Index — behind Claude Opus 4.6, GPT-5.4, and Gemini 3.1 Pro, but ahead of Claude Sonnet 4.6. The model is token-efficient: 58M output tokens for the full evaluation suite, compared to 120M for GPT-5.4 and 157M for Opus 4.6. If that efficiency carries to API pricing, Muse Spark could be competitive on cost-per-quality.
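Here is what that "if" looks like as arithmetic. The token counts are the Artificial Analysis figures quoted above and the output rates are the ones listed earlier in this article. This is a deliberately narrow sketch: it counts output tokens only, ignores input cost, and assumes every evaluation token is billed at the standard output rate. The break-even figure is a hypothetical ceiling, not a predicted Muse Spark price.

```python
# Output tokens (in millions) used on the full evaluation suite.
EVAL_OUTPUT_TOKENS_M = {"muse-spark": 58, "gpt-5.4": 120, "opus-4.6": 157}

# Published output rates in USD per million tokens. Muse Spark has none yet.
OUTPUT_RATE = {"gpt-5.4": 15.00, "opus-4.6": 25.00}


def output_spend(model: str) -> float:
    """Output-token spend in USD to run the evaluation suite once."""
    return EVAL_OUTPUT_TOKENS_M[model] * OUTPUT_RATE[model]


def break_even_rate(rival: str) -> float:
    """Output rate at which Muse Spark's spend would equal the rival's."""
    return output_spend(rival) / EVAL_OUTPUT_TOKENS_M["muse-spark"]


if __name__ == "__main__":
    for rival in OUTPUT_RATE:
        print(f"{rival}: ${output_spend(rival):,.0f} on output, "
              f"Muse Spark break-even ${break_even_rate(rival):.2f}/M")
```

On these numbers the suite costs about $1,800 in output tokens on GPT-5.4 and $3,925 on Opus 4.6. Muse Spark would match GPT-5.4's output spend at roughly $31 per million output tokens and Opus 4.6's at roughly $68, so any price below those would make it cheaper on this one measure.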

The multimodal perception benchmarks are also notable — second-highest vision score among frontier models (80.5% MMMU-Pro). For workflows heavy on image understanding, chart analysis, or health-related queries, this model has a specific niche.

None of that helps you today. But if you’re designing a multi-model architecture, leaving a slot for Muse Spark when the API opens is reasonable. Just don’t block your roadmap on it.

FAQ

Is the Muse Spark API free?

Unknown. The chat interface at meta.ai is free. Meta has said it plans to offer paid API access eventually. No pricing has been announced. Don’t assume the API will be free — or that it won’t be. There’s literally no information to base either assumption on.

Can I apply for the private preview?

Not through any public channel. No application portal, waitlist, or signup form exists as of April 13, 2026. The private preview appears to be invitation-only through Meta’s existing partner relationships.

Will Muse Spark be on OpenRouter or other aggregators?

Not confirmed. OpenRouter, Together AI, and similar platforms haven’t listed Muse Spark. Given that Meta is keeping this closed for now, third-party availability likely depends on when — and how — Meta opens the API beyond select partners.

How long will the private preview phase last?

Meta hasn’t said. For reference, OpenAI’s GPT-5.4 went from announcement to public API on the same day. Anthropic’s Claude 4.6 models followed a similar pattern. Meta’s approach here is slower and more controlled. Could be weeks, could be months.

What should I do to prepare for when the Muse Spark API opens?

Keep your model routing flexible. If your architecture already supports switching between Claude, Gemini, and GPT endpoints, adding Muse Spark later is a configuration change, not a rewrite. Test your use case against the meta.ai chat interface to see if the model’s strengths align with what you need. And watch Meta’s developer blog — that’s where the announcement will come when it comes.
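One way to make "a configuration change, not a rewrite" concrete is a small provider registry with an empty slot. This is a minimal sketch, not a recommended library: `ProviderConfig`, `REGISTRY`, and `choose` are names I made up, and every URL and model ID below is a placeholder, since Meta hasn't published either for Muse Spark.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ProviderConfig:
    name: str
    base_url: Optional[str] = None  # None until an endpoint is published
    model: Optional[str] = None     # None until a model ID is published

    @property
    def ready(self) -> bool:
        """A provider is callable only once both fields are filled in."""
        return self.base_url is not None and self.model is not None


# All URLs and model IDs here are placeholders, not real values.
REGISTRY = {
    "claude": ProviderConfig("claude", "https://claude.example.invalid", "pinned-claude-id"),
    "gemini": ProviderConfig("gemini", "https://gemini.example.invalid", "pinned-gemini-id"),
    "gpt": ProviderConfig("gpt", "https://gpt.example.invalid", "pinned-gpt-id"),
    "muse-spark": ProviderConfig("muse-spark"),  # reserved slot, nothing to call yet
}


def choose(preference: list[str]) -> ProviderConfig:
    """Return the first provider in preference order that is ready to call."""
    for name in preference:
        cfg = REGISTRY.get(name)
        if cfg is not None and cfg.ready:
            return cfg
    raise LookupError("no configured provider is ready")


if __name__ == "__main__":
    # Muse Spark is preferred but not ready, so the router falls through.
    print(choose(["muse-spark", "claude"]).name)
```

With this shape, the day an endpoint and model ID exist you fill in two fields on the `muse-spark` entry, and the same `choose` call starts returning it.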
