Direct OpenAI or a Platform for GPT-5.5?

Should teams access GPT-5.5 directly from OpenAI or through a model platform? Compare rollout speed, fallback, routing, and operational control.

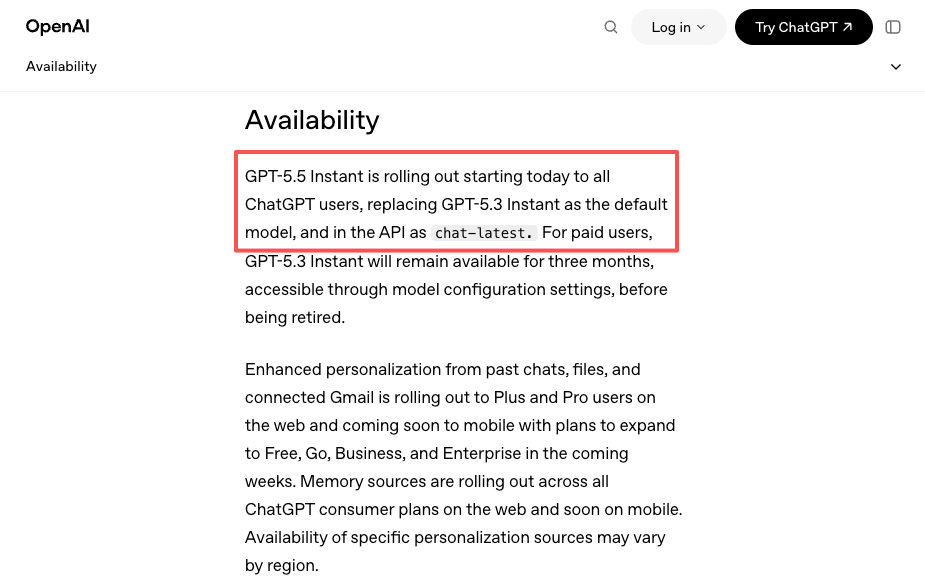

GPT-5.5 launched in ChatGPT and Codex on April 23. The API came one day later, on April 24. Then on May 5, GPT-5.5 Instant became the default ChatGPT model and shipped to the API as chat-latest. Three different rollout moments inside two weeks, each with different tier eligibility and different surfaces.

Dora here. I tracked this because I had to. A workflow of mine touches GPT-5 models, and the 24-hour gap between the ChatGPT release and the API release was not theoretical for me. It meant either waiting, or routing through a layer that could serve another frontier model in the meantime. That decision, wait or route, is the one every team using a frontier model now has to make on every release. So this piece is about it.

The question isn’t “is OpenAI good.” It’s: when GPT-5.5 (or whatever’s next) ships in stages, do you call OpenAI directly, or do you sit behind a platform that handles the staging for you? Both answers are correct for different teams. Below is what I see when I look at it honestly.

The Two Ways Teams Will Access GPT-5.5

Direct provider access

You hit api.openai.com with an OpenAI key. One SDK, one set of docs, one bill. When OpenAI ships a new model name, you change a string in your config and you’re using it — assuming your tier has access on day one.

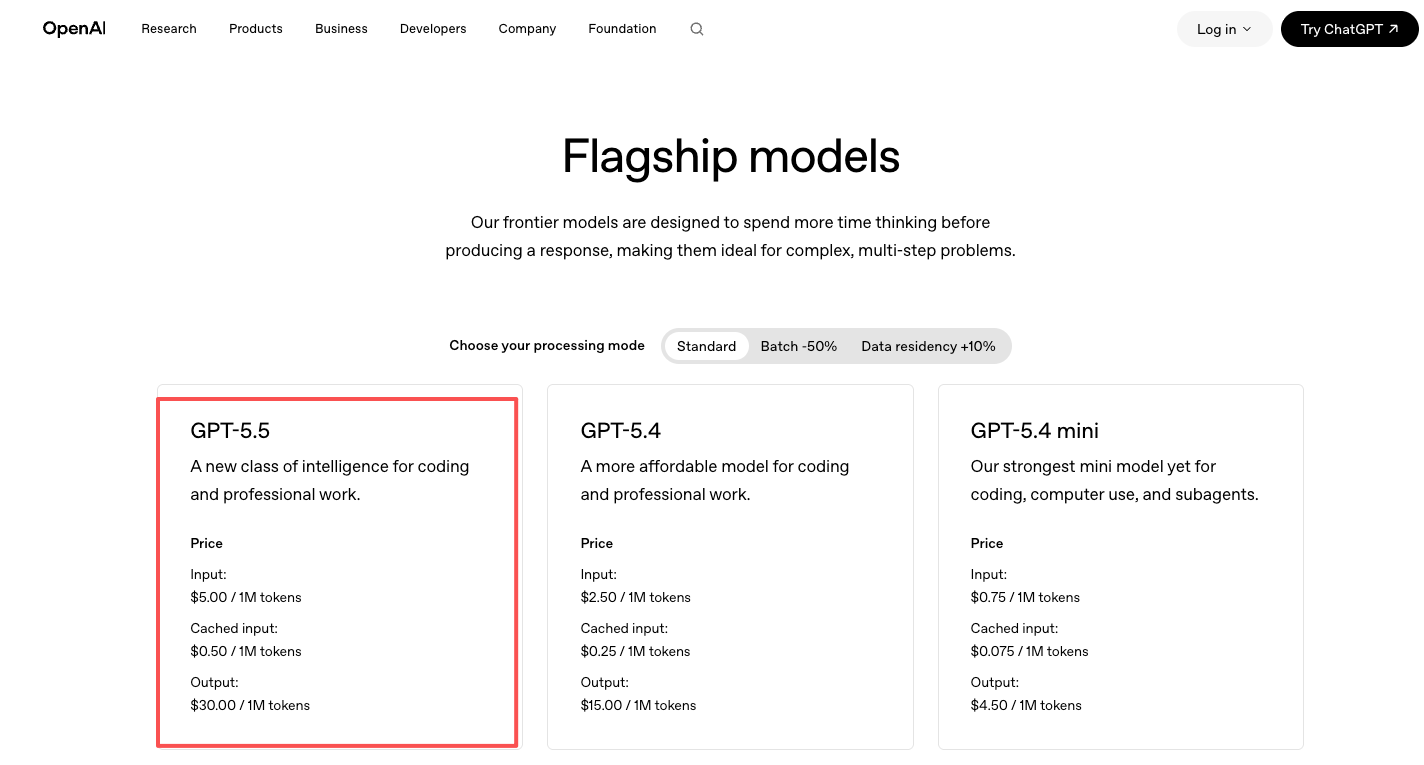

Pricing is on the official OpenAI pricing page: GPT-5.5 at $5/M input and $30/M output, GPT-5.5 Pro at $30/M input and $180/M output. Roughly double GPT-5.4. You pay OpenAI directly.
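To make those rates concrete, here is a minimal cost sketch. The per-million prices are the ones quoted above. The model keys, the function name, and the `platform_markup` parameter are my own illustrative choices, and the token volumes are made up for the example.

```python
# Prices in USD per million tokens, as quoted above: (input, output).
# The dictionary keys are illustrative labels, not confirmed API model ids.
PRICES_PER_MILLION = {
    "gpt-5.5": (5.00, 30.00),
    "gpt-5.5-pro": (30.00, 180.00),
}


def monthly_cost(model: str, input_tokens: int, output_tokens: int,
                 platform_markup: float = 0.0) -> float:
    """Estimate monthly spend in USD for a given token volume.

    platform_markup is a hypothetical fraction (0.05 means 5%) that a
    routing platform might add on top of the provider price.
    """
    input_price, output_price = PRICES_PER_MILLION[model]
    base = (input_tokens / 1_000_000) * input_price \
        + (output_tokens / 1_000_000) * output_price
    return round(base * (1 + platform_markup), 2)


# Example volume (invented): 200M input tokens, 40M output tokens per month.
print(monthly_cost("gpt-5.5", 200_000_000, 40_000_000))        # 2200.0
print(monthly_cost("gpt-5.5", 200_000_000, 40_000_000, 0.05))  # 2310.0
print(monthly_cost("gpt-5.5-pro", 200_000_000, 40_000_000))    # 13200.0
```

Swap in your own volumes. Output tokens dominate quickly at these rates, so a workload with long completions looks very different from one with long prompts.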

Access through a model platform

You hit a routing layer — OpenRouter, LiteLLM, an internal gateway, or a multi-model platform — and that layer talks to OpenAI on your behalf. Same OpenAI SDK shape (most platforms are OpenAI-compatible), but the model string can point to GPT-5.5, Claude Opus 4.7, Gemini 3.1 Pro, or a fallback chain across all three.

You pay the platform. The platform pays OpenAI. There’s a small markup, sometimes none. In exchange, you get one bill across providers, model switching without code changes, and a fallback path when something breaks.
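Here is roughly what "same SDK shape" means in practice. This sketch only builds the HTTP requests and never sends them. It assumes an OpenAI-compatible chat completions endpoint on both sides, uses OpenRouter's base URL as the platform example, and treats the model ids (`gpt-5.5`, `openai/gpt-5.5`) and the key strings as placeholders, not confirmed identifiers.

```python
import json
import urllib.request


def build_chat_request(base_url: str, api_key: str, model: str,
                       prompt: str) -> urllib.request.Request:
    """Build (but do not send) an OpenAI-style chat completions request."""
    body = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=f"{base_url.rstrip('/')}/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


# Direct: one provider, one key. The model id is an assumed placeholder.
direct = build_chat_request(
    "https://api.openai.com/v1", "OPENAI_KEY_PLACEHOLDER", "gpt-5.5", "ping")

# Routed: same payload shape, different base URL, key, and model string.
routed = build_chat_request(
    "https://openrouter.ai/api/v1", "PLATFORM_KEY_PLACEHOLDER",
    "openai/gpt-5.5", "ping")

print(direct.full_url)
print(routed.full_url)
```

The only things that differ between the two calls are the base URL, the key, and the model string. That is the entire switching surface when a platform is OpenAI-compatible.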

That’s the whole picture. Neither is “better.” They optimize for different things.

Where Direct Access Wins

Simplicity, lower abstraction, and provider-specific optimization

If you only use OpenAI models, calling OpenAI directly is the simplest possible architecture. One vendor. One set of error codes. One status page to watch. When something breaks, you know who to ask.

It also matters that OpenAI ships features that aren’t always immediately available through abstraction layers. Responses API. Reasoning effort controls. Structured outputs with strict schemas. The Codex tool surface. Provider-specific tool calling formats. A platform layer eventually catches up, but “eventually” can mean weeks. If your product depends on a specific OpenAI feature on day one, the direct path is the only path.

There’s also a real cost to indirection that nobody talks about. Every layer between your code and the model is one more place where something can go wrong, one more team’s status page to monitor, one more billing surprise at month end. For a small team running one model in production, that overhead can outweigh the benefit.

When one model is enough

A lot of teams genuinely only need one model. They picked GPT-5 a year ago, the workflow works, the prompts are tuned, the evals are stable. They don’t want to A/B against Claude. They don’t want to fail over to Gemini. They want the model to keep working and the bill to keep being predictable.

For that team, a routing layer is solving a problem they don’t have. Direct access is the right answer and adding a platform on top is the wrong one. Don’t pay complexity tax for optionality you won’t use.

Where a Model Platform Wins

Rollout speed, fallback, and routing control

Here’s where the GPT-5.5 timeline actually mattered. Between April 23 and April 24, GPT-5.5 was live in ChatGPT but not in the API. CNBC reported at the time that OpenAI flagged “different safeguards” for the API rollout, with no committed date at that point. For most teams that 24-hour gap was nothing. For a few, such as anyone with a contractual “we ship the latest model within X hours” commitment, it was a problem.

A platform layer doesn’t make OpenAI faster. But it changes what “waiting” looks like. While GPT-5.5 wasn’t yet in the OpenAI API, Claude Opus 4.7 was. Gemini 3.1 Pro was. A routed setup can prefer GPT-5.5 once it lands, fall back to whichever frontier model is currently available, and not require a code deploy when the situation changes.

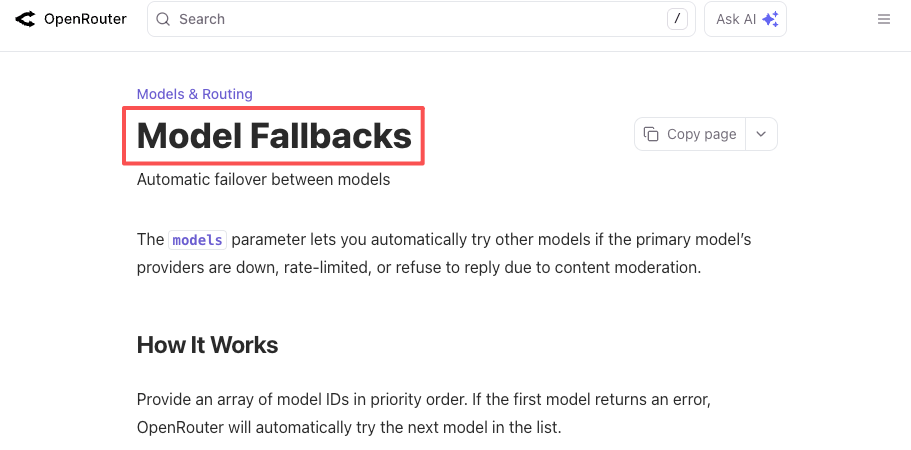

The same logic applies to outages. OpenAI has had multi-hour API incidents this year. So have other major providers. If your product hard-requires “an LLM that works right now,” you either build the fallback yourself or you let a platform handle it. Both OpenRouter’s fallback docs and LiteLLM’s router docs describe this in concrete config: declare a primary model, list fallbacks, get a working response when the primary fails.

This isn’t a hypothetical scenario. It’s a scenario I’ve hit twice this quarter.
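If you build the fallback yourself, the core of it is small. The sketch below is a generic primary-plus-fallbacks loop, not OpenRouter's or LiteLLM's implementation. `complete_with_fallback`, `AllModelsFailed`, and the `call_model` callable are hypothetical names, and the model ids are placeholders.

```python
from typing import Callable, Sequence


class AllModelsFailed(RuntimeError):
    """Raised when every model in the chain errored."""


def complete_with_fallback(
    prompt: str,
    models: Sequence[str],
    call_model: Callable[[str, str], str],
) -> tuple[str, str]:
    """Try each model in order; return (model_used, response_text).

    call_model(model, prompt) is whatever client call you already have.
    Catching Exception is deliberately broad for this sketch; a real
    setup would only fall back on availability errors and timeouts.
    """
    errors = []
    for model in models:
        try:
            return model, call_model(model, prompt)
        except Exception as exc:
            errors.append(f"{model}: {exc}")
    raise AllModelsFailed("; ".join(errors))


# A stand-in client that simulates the primary model being unavailable.
def fake_client(model: str, prompt: str) -> str:
    if model == "gpt-5.5":
        raise RuntimeError("model not available yet")
    return f"[{model}] reply to: {prompt}"


chain = ["gpt-5.5", "claude-opus-4.7", "gemini-3.1-pro"]
used, text = complete_with_fallback("ping", chain, fake_client)
print(used)  # claude-opus-4.7
```

Because the chain is plain data, preferring GPT-5.5 once it lands is a config change, not a deploy. The hard parts a platform takes off your hands are everything around this loop: retries, timeouts, per-provider auth, and prompt differences between models.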

Multi-model experimentation and procurement resilience

The other place a platform earns its keep is when you don’t yet know which model is right for the job — or when you suspect that answer will change in six months.

Frontier model leadership is rotating on something like a 3-to-6-month cycle. GPT-5.5 ships, then Anthropic ships, then Google ships, and the “best model for code” or “best model for long-context analysis” answer keeps moving. If you’ve integrated against a single provider’s SDK, switching means real engineering work. If you’re behind a routing layer, switching means changing a model string.

The same logic applies to procurement. A platform consolidates spend across providers into one invoice, one set of usage analytics, one budget control. Finance teams care about this more than engineering teams realize. “We have one AI vendor” is easier to govern than “we have three AI vendors and the spend per vendor changes monthly.”

And then there’s a softer signal. The May 5 release of GPT-5.5 Instant as the new ChatGPT default, covered in OpenAI’s own announcement, went out as chat-latest in the API. Teams pointing at that alias got the upgrade automatically. Teams pinned to a specific dated version didn’t. A platform can give you a unified abstraction over both behaviors across providers, instead of you tracking each provider’s versioning rules separately.
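One way to keep that distinction visible, with or without a platform, is to record it in your own routing config. This is a minimal sketch under my own assumptions: `ModelRef`, `ROUTES`, and the route names are hypothetical, and the pinned id is a deliberate placeholder because the real snapshot names are not given here.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelRef:
    provider: str
    model_id: str
    floating: bool  # True if the provider can repoint this id without notice


# Logical route names (hypothetical) mapped to provider model ids.
ROUTES = {
    # Alias: follows whatever the provider currently serves under it.
    "chat-default": ModelRef("openai", "chat-latest", floating=True),
    # Pinned: placeholder for a dated snapshot id, changes only when edited.
    "eval-baseline": ModelRef("openai", "<dated-snapshot-id>", floating=False),
}


def routes_that_can_change(routes: dict[str, ModelRef]) -> list[str]:
    """List logical routes whose underlying model may change without a deploy."""
    return sorted(name for name, ref in routes.items() if ref.floating)


print(routes_that_can_change(ROUTES))  # ['chat-default']
```

The point is less the code than the habit: every model string in production should be knowingly floating or knowingly pinned, so a silent upgrade is a choice and not a surprise.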

A Decision Framework by Team Type

I dislike framework tables in blog posts because they oversimplify. But a rough heuristic helps here, so:

| Team profile | Likely better fit | Reason |
|---|---|---|
| Solo dev, one model, one product | Direct OpenAI | Routing layer adds cost without solving a real problem |
| Production team, OpenAI-only stack, depends on provider-specific features | Direct OpenAI | Day-one access to Responses API, structured outputs, etc. |
| Production team, multi-model in evals, plans to stay multi-model | Platform | Switching cost is the dominant variable |
| Team with strict uptime SLA on an LLM-backed feature | Platform | Fallback chains are the cheapest insurance |
| Team running internal tools, not customer-facing | Either | Pick the one with less ops overhead for you |
| Enterprise with procurement, security review per vendor | Platform | One contract beats N contracts |
| Research / experimentation team comparing frontier models | Platform | Model switching is the entire workflow |
| Team that depends on day-one access to brand-new model versions | Platform with day-zero coverage, or direct provider with high tier | Both work, but tier eligibility matters more than people think |

The table isn’t a verdict. It’s a starting question: which row is closest to my team, and what does that imply about my default architecture?

FAQ

When is direct OpenAI integration enough?

When you’ve already chosen OpenAI, your usage volume isn’t large enough to justify negotiating a separate platform contract, and you don’t have an explicit need for fallback to another provider. Most small and mid-sized teams running production OpenAI workloads fall here.

Why would teams add a model platform on top?

The three reasons that show up most: multi-model routing without code changes, fallback during outages or partial rollouts, and consolidated billing across providers. If none of those apply to you right now, you probably don’t need it right now.

Does a platform help during partial launches?

Yes — but only for the failure mode of “I need a working frontier model and the one I prefer isn’t available yet.” It doesn’t help if you specifically need GPT-5.5 and only GPT-5.5. A platform can route to the best available model from a list. It can’t conjure access to a model OpenAI hasn’t shipped yet.

What trade-offs come with another layer?

You add a vendor between you and the model. That means another status page, another billing surface, another potential point of failure, sometimes a small markup, and occasionally a lag on provider-specific features. None of these are dealbreakers. They’re real costs you should price in.

Conclusion

The honest version is this. Direct OpenAI is the right answer for a lot of teams — probably more than the platform-evangelist crowd admits. A model platform is the right answer for a lot of other teams — probably more than the OpenAI-only crowd admits. The split isn’t ideological. It’s about how many models you actually use, how much your uptime story depends on the LLM, and how much switching cost you can stomach when the next frontier model ships.

GPT-5.5’s rollout was a useful stress test because it had three moments in two weeks: ChatGPT first, the API a day later, and the Instant variant twelve days after the first launch. Every team using a frontier model is going to live through versions of that pattern repeatedly. It’s worth deciding now, on a calm day, which architecture you want to be holding next time.

Run the math against your own setup. That’ll tell you more than this post will.
