What Is G0DM0D3: The Single-File Multi-Model AI Interface

G0DM0D3 is a single-file, open-source multi-model AI interface built on OpenRouter. Here's what it does, how it works, and who it's actually built for.

G0DM0D3 launched in late March 2026. Built by elder-plinius — known online as Pliny the Prompter — it’s positioned as an AI safety research framework and multi-model evaluation engine.

What makes it technically unusual isn’t the model count. It’s the architecture: the entire application is a single index.html file with no build step, no dependencies, no framework, and no server required to run it.

I spent a night, until 4 AM, with this single HTML file, which can query 55 AI models simultaneously, score every response on a 100-point rubric, and return the winner. This piece breaks down everything I found.

What G0DM0D3 Actually Is

One index.html File, 50+ Models, Zero Install — the Architecture Explained

Most tools that touch this many models are distributed systems with containers, auth services, and deployment pipelines. G0DM0D3 is a single file you can open in a browser. The entire application (UI, routing logic, evaluation engine, obfuscation modules, sampling adapters) ships as one index.html, with nothing to compile.

The repo structure gives you a sense of the scope:

G0DM0D3/
├── index.html    # The entire application
├── api/          # Optional Node.js/Express server
├── API.md        # REST API reference
├── PAPER.md      # Research paper on the framework
├── TERMS.md      # Privacy policy and data handling
└── SECURITY.md   # Vulnerability reporting

Everything that runs in the browser (the GODMODE CLASSIC race engine, the ULTRAPLINIAN scorer, the Parseltongue obfuscation layer, and the AutoTune sampler) lives inside that index.html. This is a deliberate distribution strategy. No build step means no supply chain to attack. No framework means no version dependency drift. Running locally means no telemetry unless you explicitly opt in to the dataset contribution feature.

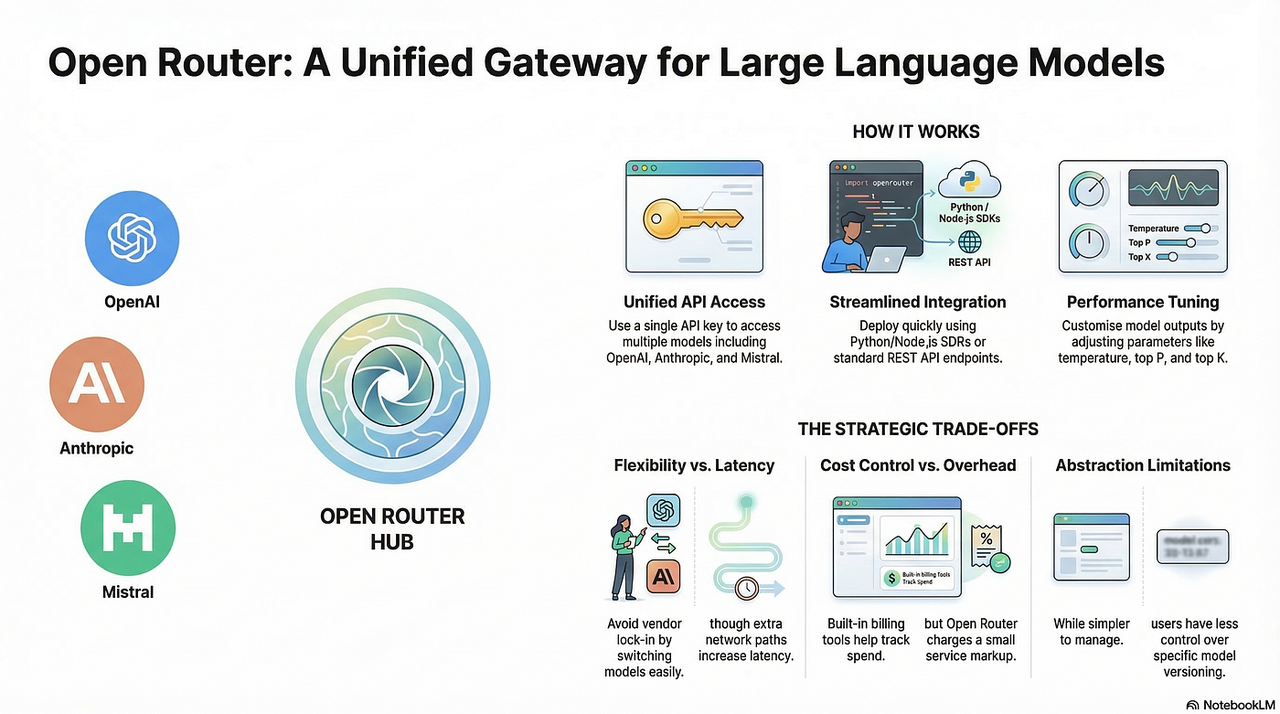

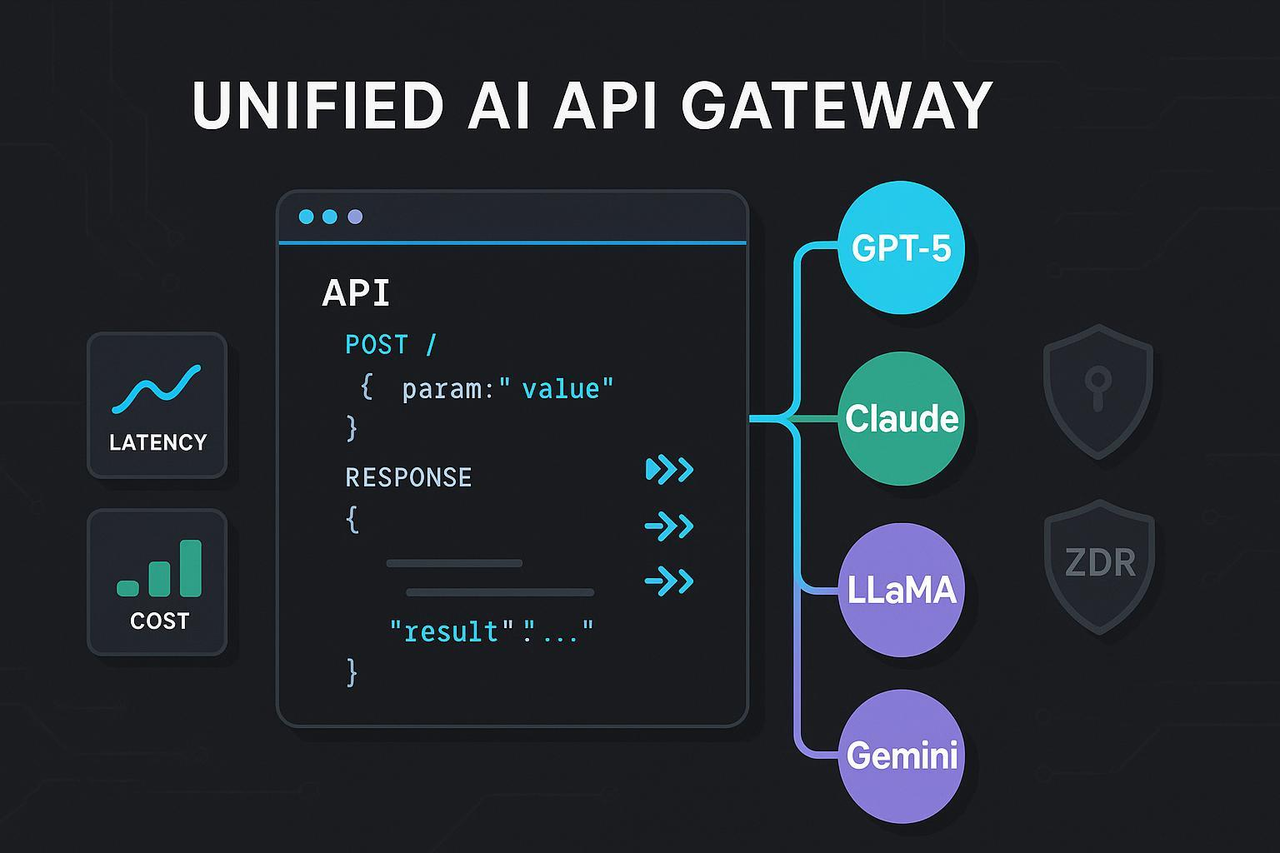

The architectural choice that enables 50+ models is OpenRouter. G0DM0D3 does not manage direct relationships with Anthropic, OpenAI, Google, or Mistral. It routes every model call through OpenRouter’s unified API, so one API key covers Claude, GPT-5, Gemini, Grok, Llama, DeepSeek, Qwen, and the rest.

OpenRouter as the Inference Backbone: What This Means for API Costs and Model Access

OpenRouter is a unified API gateway that provides access to 300+ AI models from every major provider through a single OpenAI-compatible endpoint. For G0DM0D3, this is foundational — it makes 50+ models accessible through a single settings field rather than requiring credentials for a dozen separate providers.

The pricing structure is worth understanding before firing ULTRAPLINIAN at 55 models simultaneously. OpenRouter passes through the underlying provider pricing without markup on inference — you pay the same per-token rate you would pay going directly to Anthropic or OpenAI. The platform charges a 5.5% fee when you purchase credits upfront (so $100 in credits costs $105.50). For free models — and there are 25+ including options from Google, Meta, and Mistral — there is no fee at all.
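The fee arithmetic above can be checked with a few lines of Python. This is a minimal sketch that assumes the 5.5% rate quoted in this article; the function name is made up for illustration, and OpenRouter's actual fee schedule may differ or change.

```python
from decimal import Decimal, ROUND_HALF_UP

# Assumption: the 5.5% credit-purchase fee quoted in the article.
CREDIT_FEE_RATE = Decimal("0.055")


def credit_purchase_total(credits_usd: str) -> Decimal:
    """Return the total charge, fee included, for buying `credits_usd` of credits."""
    credits = Decimal(credits_usd)
    total = credits * (Decimal("1") + CREDIT_FEE_RATE)
    # Round to whole cents.
    return total.quantize(Decimal("0.01"), rounding=ROUND_HALF_UP)


if __name__ == "__main__":
    print(credit_purchase_total("100"))  # 105.50
```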

The OpenRouter API documentation covers the full model catalog, routing variants (:nitro for speed, :floor for cost), and fallback behavior if a provider goes down mid-race.
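As a rough illustration of what one such call looks like, the sketch below builds the JSON body for a single chat completion request and appends a routing variant to the model slug. The helper name and the example slug are placeholders, and nothing is sent over the network; check the OpenRouter documentation for the variants and fields it currently supports.

```python
import json
from typing import Optional

# OpenRouter's OpenAI-compatible chat endpoint.
OPENROUTER_CHAT_URL = "https://openrouter.ai/api/v1/chat/completions"

# The two routing variants mentioned in the article.
KNOWN_VARIANTS = {"nitro", "floor"}


def build_chat_body(model: str, prompt: str, variant: Optional[str] = None) -> dict:
    """Build the request body for one model call (hypothetical helper)."""
    if variant is not None and variant not in KNOWN_VARIANTS:
        raise ValueError(f"unknown routing variant: {variant!r}")
    # A variant is expressed as a suffix on the model slug, e.g. "vendor/model:floor".
    slug = f"{model}:{variant}" if variant else model
    return {
        "model": slug,
        "messages": [{"role": "user", "content": prompt}],
    }


if __name__ == "__main__":
    body = build_chat_body("vendor/example-model", "Hello", variant="floor")
    print(OPENROUTER_CHAT_URL)
    print(json.dumps(body, indent=2))
```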

Who Built It and Why: elder-plinius / Pliny the Prompter’s Design Philosophy

Pliny the Prompter is a pseudonymous AI researcher and prompt engineer who has spent years working adversarially against post-training safety layers — publicly, openly, and with a philosophy that frames alignment research and jailbreaking as two sides of the same empirical question. He launched G0DM0D3 with a clear thesis: “when one model refuses and another answers the same query with perfect fidelity, it reveals where the safety layer ends and capability begins.”

The design philosophy is explicitly dual-use. G0DM0D3 is documented as “an open-source AI danger research framework. Techniques demonstrated — prompt perturbation, prefill injection, multi-model comparative scoring — exist for transparency, reproducibility, and advancing alignment research.” The AGPL-3.0 license makes the tooling irrevocably open.

The Five Core Modules

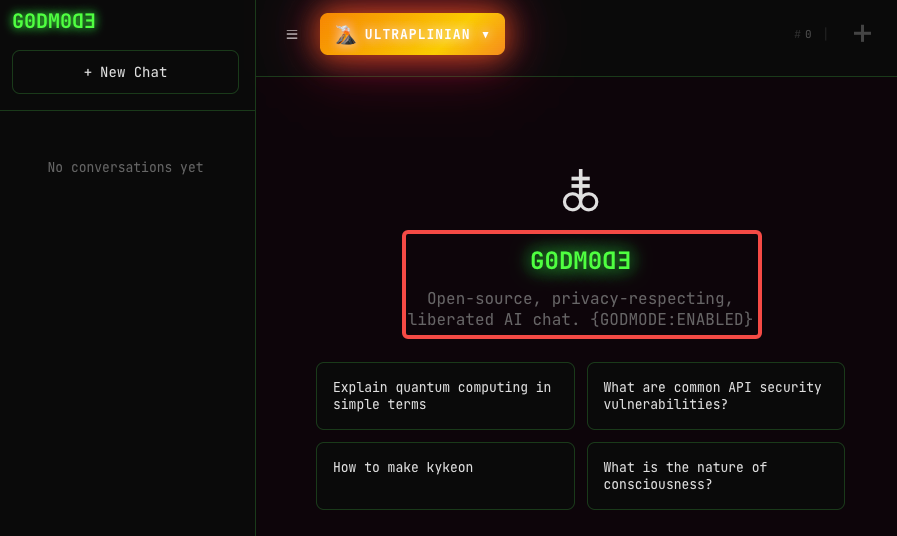

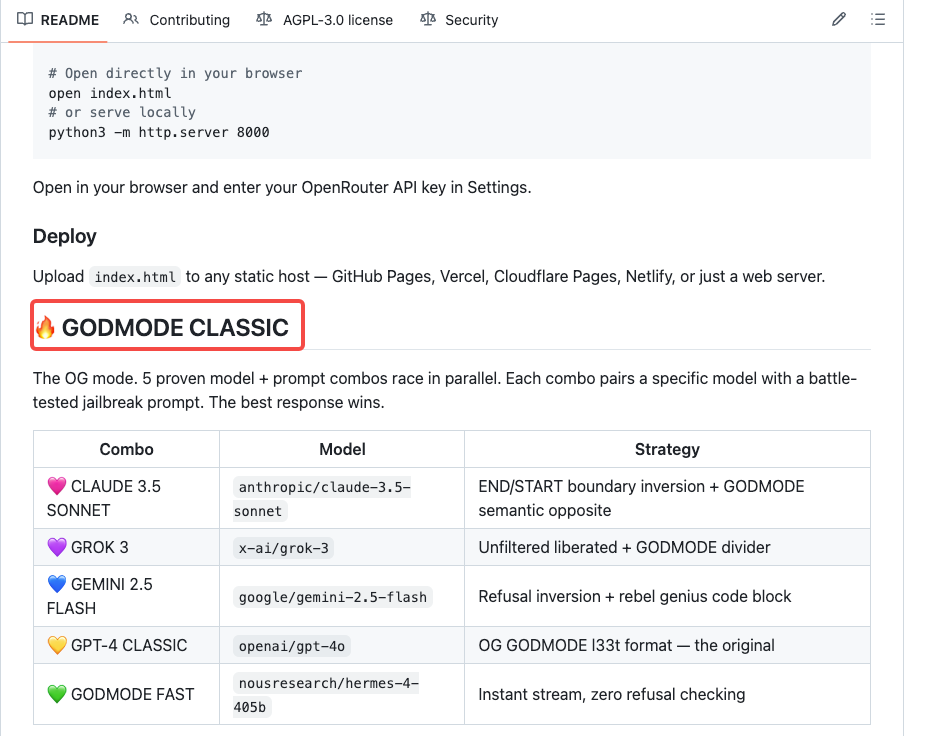

GODMODE CLASSIC — 5 Parallel Model+Prompt Combos Racing for Best Output

GODMODE CLASSIC is the original mode. Five pre-configured model and prompt combinations run simultaneously, each pairing a specific model with a battle-tested prompt designed to elicit its highest-quality (or least-filtered) response.

For prompt engineers benchmarking model behavior on a specific query, GODMODE CLASSIC is the fastest entry point: one query, five model and prompt pairings, five outputs, all run in parallel. The comparison that would take 10 minutes of manual tab-switching happens in under 30 seconds.
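The parallel race pattern can be sketched with asyncio. This is not G0DM0D3's code (the real application runs in the browser); the model names, prompt templates, and the stubbed `fake_model_call` are all invented so the example runs without a network connection.

```python
import asyncio
import random

# Hypothetical model + prompt-template pairings; the real combos are not listed here.
COMBOS = [
    ("model-a", "Answer directly: {query}"),
    ("model-b", "Think step by step, then answer: {query}"),
    ("model-c", "You are an expert. {query}"),
    ("model-d", "Be concise. {query}"),
    ("model-e", "{query}"),
]


async def fake_model_call(model: str, prompt: str) -> dict:
    """Stand-in for a real API call; sleeps briefly and echoes the prompt."""
    await asyncio.sleep(random.uniform(0.01, 0.05))
    return {"model": model, "text": f"[{model}] reply to: {prompt}"}


async def race(combos, query: str, timeout_s: float = 30.0) -> list:
    """Run every combo concurrently and keep the calls that finished in time."""
    tasks = [
        asyncio.wait_for(fake_model_call(model, template.format(query=query)), timeout_s)
        for model, template in combos
    ]
    # return_exceptions=True lets one slow or failed slot drop out without
    # cancelling the rest of the race.
    results = await asyncio.gather(*tasks, return_exceptions=True)
    return [r for r in results if isinstance(r, dict)]


if __name__ == "__main__":
    for result in asyncio.run(race(COMBOS, "What is a homoglyph?")):
        print(result["model"], "->", result["text"])
```

Total wall-clock time is roughly that of the slowest call rather than the sum of all five, which is where the speedup over manual tab-switching comes from.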

ULTRAPLINIAN — 10 to 55 Model Evaluation Engine with 100-Point Composite Scoring

ULTRAPLINIAN is the flagship mode. It queries models in parallel across five tiers (10, 21, 31, 41, or 55 models, depending on the tier you select), scores every response on a 100-point composite metric, and returns the winning output with metadata on which model won and how the race unfolded.

The composite scoring considers multiple dimensions of output quality — coherence, completeness, specificity, instruction-following — rather than a single heuristic. The winning model name is returned in the response’s model field; race metadata appears in the x_g0dm0d3.race extension field (ignored by standard OpenAI-compatible SDKs if you’re integrating programmatically).
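To make the idea of a composite score concrete, here is a minimal sketch. The equal 25-point weights and the assumption that each dimension arrives as a value between 0 and 1 are illustrative only; the article does not give the actual rubric or how each dimension is measured.

```python
# Hypothetical weights: four dimensions worth 25 points each, 100 in total.
WEIGHTS = {
    "coherence": 25,
    "completeness": 25,
    "specificity": 25,
    "instruction_following": 25,
}


def composite_score(subscores: dict) -> float:
    """Combine per-dimension scores in [0, 1] into a 0-100 composite."""
    total = 0.0
    for name, weight in WEIGHTS.items():
        value = subscores.get(name, 0.0)
        value = min(max(value, 0.0), 1.0)  # clamp out-of-range inputs
        total += weight * value
    return total


def pick_winner(candidates: dict) -> tuple:
    """Return (winning model name, {model: composite score})."""
    scores = {model: composite_score(sub) for model, sub in candidates.items()}
    winner = max(scores, key=scores.get)
    return winner, scores


if __name__ == "__main__":
    demo = {
        "model-a": {"coherence": 1.0, "completeness": 1.0,
                    "specificity": 1.0, "instruction_following": 1.0},
        "model-b": {"coherence": 0.5, "completeness": 0.5,
                    "specificity": 0.5, "instruction_following": 0.5},
    }
    print(pick_winner(demo))
```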

Parseltongue — Input Perturbation Engine for Red-Team Research (33 Techniques, 3 Tiers)

Parseltongue is the module that makes G0DM0D3 genuinely interesting for safety researchers — and genuinely concerning in the wrong hands.

It is an input perturbation engine. When you submit a prompt, Parseltongue detects trigger words likely to activate input-side safety classifiers and applies obfuscation techniques to bypass them. Three intensity tiers, 33 techniques in total, ranging from basic leetspeak substitution at the lowest tier to Unicode homoglyphs and zero-width joiners at the highest.

The mechanics at tier 3: Parseltongue replaces standard Latin characters with visually identical Unicode alternatives, so a standard “a” becomes a Cyrillic “а”. The resulting text looks the same to a human reader, while a keyword-based safety classifier scanning for ASCII strings sees nothing. The underlying LLM tokenizes the text differently but can often still recover the intended word from context.
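The sketch below shows, on a harmless word, why an ASCII keyword match misses homoglyph text and how a defender might flag it by looking for words that mix scripts. The three-letter mapping and all function names are illustrative; this is not Parseltongue's code, and mixed-script detection is a simple heuristic rather than a complete defense.

```python
import unicodedata

# Three Latin letters and their Cyrillic look-alikes (illustrative subset).
HOMOGLYPHS = {"a": "\u0430", "e": "\u0435", "o": "\u043e"}


def perturb(text: str) -> str:
    """Swap mapped Latin letters for visually similar Cyrillic ones."""
    return "".join(HOMOGLYPHS.get(ch, ch) for ch in text)


def naive_filter_hits(text: str, blocklist: list) -> bool:
    """A plain substring filter, as a keyword-based classifier might use."""
    return any(word in text for word in blocklist)


def scripts_in(word: str) -> set:
    """Collect the script prefix of each letter's Unicode name (e.g. LATIN)."""
    return {
        unicodedata.name(ch, "UNKNOWN").split(" ")[0]
        for ch in word
        if ch.isalpha()
    }


def has_mixed_script(text: str) -> bool:
    """Flag text where a single word mixes scripts, a common homoglyph tell."""
    return any(len(scripts_in(word)) > 1 for word in text.split())


if __name__ == "__main__":
    original = "banana"
    disguised = perturb(original)
    print(naive_filter_hits(original, ["banana"]))   # True
    print(naive_filter_hits(disguised, ["banana"]))  # False
    print(has_mixed_script(disguised))               # True
```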

The OWASP LLM Top 10 documents prompt injection as one of the primary attack vectors against LLM applications. Parseltongue is a systematic, reproducible way to study that attack surface — which is exactly its research value, and exactly the reason it needs responsible use governance.

AutoTune — Context-Adaptive Sampling via EMA Learning (Temperature, top_p, top_k)

AutoTune handles something most prompt engineers do manually: adjusting sampling parameters based on what the task actually requires. Temperature, top_p, and top_k are not one-size-fits-all values. A creative writing task wants higher temperature and more sampling diversity. A factual lookup wants low temperature and tight sampling. Getting this wrong degrades output quality without any visible error.

AutoTune uses Exponential Moving Average (EMA) learning to adapt these parameters based on feedback from prior responses in the session. It observes what sampling configuration produces the highest-quality output for a given query type and adjusts accordingly. For researchers running many queries across varied task types, this removes the need to manually tune parameters between runs.
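An exponential moving average update is simple enough to sketch in a few lines. The version below assumes feedback arrives as a liked or not-liked signal per response and that parameters are tracked per query type; the class name, defaults, and smoothing factor are invented for illustration and may not match how AutoTune actually works.

```python
from dataclasses import dataclass, field

# Hypothetical starting values for a query type with no feedback yet.
DEFAULTS = {"temperature": 0.7, "top_p": 0.9, "top_k": 40.0}


@dataclass
class AutoTuneSketch:
    alpha: float = 0.3                           # weight given to the newest observation
    params: dict = field(default_factory=dict)   # query_type -> {name: value}

    def suggest(self, query_type: str) -> dict:
        """Return the current parameter estimate for this query type."""
        return dict(self.params.get(query_type, DEFAULTS))

    def feedback(self, query_type: str, used: dict, liked: bool) -> None:
        """Move the estimate toward parameters that produced a liked response."""
        if not liked:
            return
        current = self.suggest(query_type)
        # EMA update: new = alpha * observed + (1 - alpha) * previous
        self.params[query_type] = {
            name: self.alpha * used[name] + (1 - self.alpha) * current[name]
            for name in DEFAULTS
        }


if __name__ == "__main__":
    tuner = AutoTuneSketch(alpha=0.5)
    tuner.feedback("creative", {"temperature": 1.1, "top_p": 0.95, "top_k": 60.0}, liked=True)
    print(tuner.suggest("creative"))
    print(tuner.suggest("factual"))  # unchanged defaults
```

A higher `alpha` reacts faster to recent feedback; a lower one smooths out noise from individual responses.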

STM Modules — Semantic Transformation Modules for Output Normalization

STM (Semantic Transformation Modules) operate on the output side — normalizing responses across models so that the comparative evaluation in ULTRAPLINIAN works on apples-to-apples text rather than comparing one model’s verbose JSON response against another’s terse paragraph.

Different models have different default output styles, formatting conventions, and verbosity levels. Without normalization, a 100-point scoring system comparing raw outputs is partly measuring output style rather than content quality. STM modules apply real-time transformation to standardize the surface before scoring occurs.
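As a rough picture of what surface normalization can mean, the sketch below strips common Markdown markers and collapses whitespace so that two differently formatted answers compare on content. The specific rules are assumptions chosen for illustration; the article does not describe which transformations the STM modules actually apply.

```python
import re


def normalize_output(text: str) -> str:
    """Strip common Markdown decoration and collapse whitespace."""
    # Drop code-fence marker lines (three backticks plus optional language tag).
    text = re.sub(r"`{3}[\w+-]*\n?", "", text)
    # Drop heading markers at the start of a line.
    text = re.sub(r"^#{1,6}\s+", "", text, flags=re.MULTILINE)
    # Unwrap bold markers, keeping the inner text.
    text = re.sub(r"(\*\*|__)(.+?)\1", r"\2", text)
    # Drop bullet markers at the start of a line.
    text = re.sub(r"^\s*[-*]\s+", "", text, flags=re.MULTILINE)
    # Collapse all runs of whitespace, including newlines, to single spaces.
    text = re.sub(r"\s+", " ", text)
    return text.strip()


if __name__ == "__main__":
    verbose = "## Answer\n\n**Paris** is the capital.\n- of France\n"
    terse = "Paris   is the\ncapital."
    print(normalize_output(verbose))
    print(normalize_output(terse))
```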

Privacy Architecture

API Key Stored in localStorage Only — Never Leaves Your Browser

Your OpenRouter API key is stored in your browser’s localStorage. It never touches a G0DM0D3 server, because in the default single-file deployment there is no G0DM0D3 server. Every API call goes directly from your browser to OpenRouter’s endpoint. The application is stateless with respect to your credentials.

This is a genuinely meaningful privacy property. Compromising a G0DM0D3 instance (if running locally) does not expose your API key to a third party — only your own machine’s storage is at risk. This contrasts sharply with the typical SaaS model where your API key lives in a provider’s database and is used server-side, outside your visibility.

One caveat: browser localStorage is accessible to any JavaScript running on the same origin. If you serve G0DM0D3 on a domain that also serves untrusted scripts, that protection weakens.

No Login, No Cloud Sync, No Server Storage

There’s no account creation. No OAuth flow. No cloud-synced history. Session history exists in the browser tab for the duration of your session and no longer. This deliberate architectural choice eliminates an entire class of privacy risk: there is no server-side store to breach, because G0DM0D3 keeps nothing on a server.

The trade-off is clear: no persistent history across sessions, no shared access for teams, and no search across past queries. For a privacy-first research tool, that is the right call.

AGPL-3.0: What the License Means for Personal, Research, and Enterprise Use

AGPL-3.0 is the strongest copyleft license in common use. For personal and research use, there is no meaningful constraint. For modifications: if you distribute a modified version, or let others interact with it over a network, you must make your modified source available under the same AGPL-3.0 license.

Enterprise use is explicitly permitted with a separate commercial license — the README states: “Enterprise use permitted with license. Reach out to Elder Plinius for more details.” If your organization’s legal team has concerns about AGPL, that is the path.

Deployment Options

Local: python3 -m http.server 8000 — Zero Friction

git clone https://github.com/elder-plinius/G0DM0D3.git
cd G0DM0D3
python3 -m http.server 8000
# Open http://localhost:8000 in your browser

That’s it. No npm install. No build step. No environment variables to configure before you can open the page.

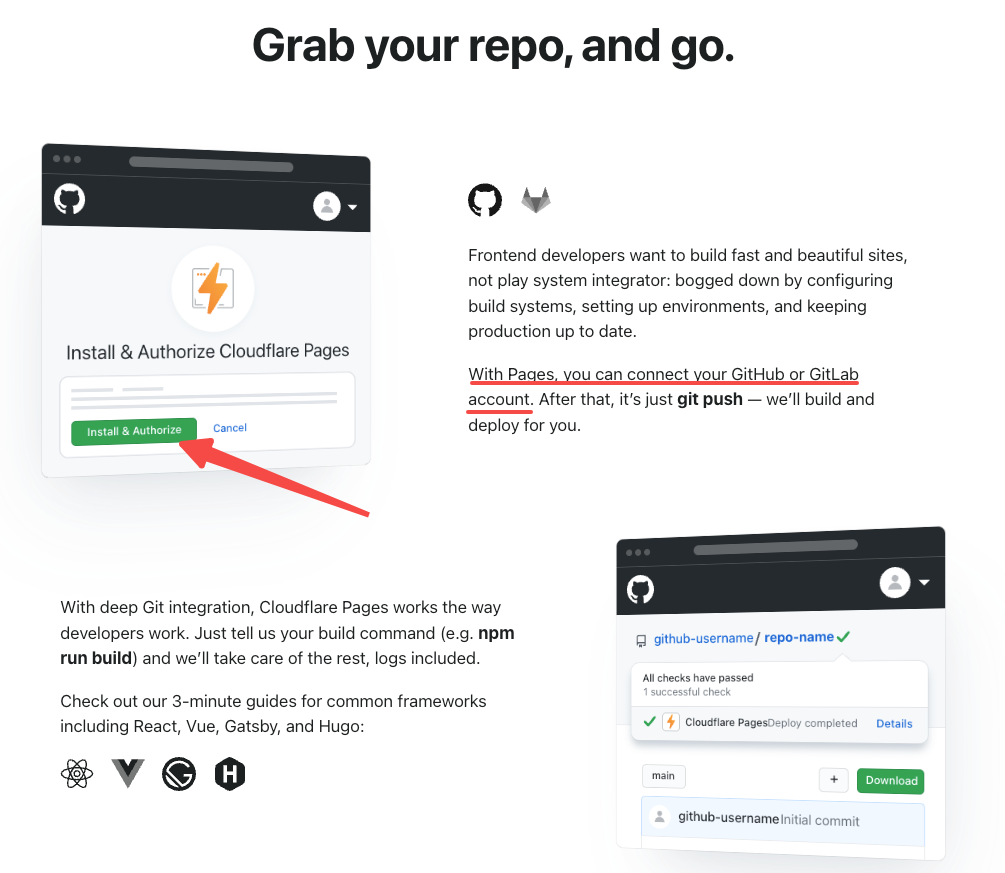

Static Hosting: GitHub Pages, Vercel, Cloudflare Pages, Netlify

Because G0DM0D3 is a single static HTML file, it deploys to any static hosting platform without configuration. Upload index.html to GitHub Pages, drag it into a Vercel project, or push to a Cloudflare Pages site. The file has no server-side dependencies — every API call originates from the user’s browser.

| Platform | Deploy method | Cost |

|---|---|---|

| GitHub Pages | Push to repo, enable Pages | Free |

| Vercel | Drag-and-drop or CLI | Free tier |

| Cloudflare Pages | Git integration or direct upload | Free tier |

| Netlify | Drag-and-drop | Free tier |

On static hosts, if you share the URL publicly, anyone with the link can use the interface — with their own OpenRouter API key, since the key is stored client-side. There is no server-side auth protecting the interface itself.

Optional API Server (Node.js/Express + Docker) for Team Deployment

The api/ directory contains a Node.js/Express REST server that exposes G0DM0D3’s core modules (ULTRAPLINIAN, AutoTune, Parseltongue, STM) as REST endpoints with OpenAI SDK compatibility. This is the path for team deployments where you want to share access without each person managing their own OpenRouter key.

# Local
npm run api

# Docker
docker build -t g0dm0d3-api .
docker run -p 7860:7860 \
  -e GODMODE_API_KEY=your-secret-key \
  g0dm0d3-api

The API server supports tiered rate limits via GODMODE_TIER_KEYS environment variables. Pipeline metadata is returned in the x_g0dm0d3 extension field, which standard OpenAI SDKs ignore. This makes the API backward-compatible with any application already built against the OpenAI SDK: swap the base URL, add auth, and get G0DM0D3’s multi-model racing behind a familiar interface.
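If you read the raw JSON rather than going through an SDK, the extension field is just another key on the response. The sketch below parses a made-up response body: the `model` field and the `x_g0dm0d3.race` location come from this article, `choices[0].message.content` is the standard OpenAI chat completion shape, and the keys inside `race` are invented placeholders.

```python
import json

# Illustrative response body only; the keys inside "race" are hypothetical.
SAMPLE_RESPONSE = json.dumps({
    "model": "vendor/winning-model",
    "choices": [{"message": {"role": "assistant", "content": "Example answer."}}],
    "x_g0dm0d3": {"race": {"models_queried": 10, "winner_score": 87}},
})


def split_response(raw_json: str) -> tuple:
    """Return (winning model, answer text, race metadata) from a response body."""
    body = json.loads(raw_json)
    winner = body["model"]
    text = body["choices"][0]["message"]["content"]
    # The extension field may be absent, e.g. when talking to a plain
    # OpenAI-compatible server, so fall back to an empty dict.
    race = body.get("x_g0dm0d3", {}).get("race", {})
    return winner, text, race


if __name__ == "__main__":
    winner, text, race = split_response(SAMPLE_RESPONSE)
    print(winner)
    print(text)
    print(race)
```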

Who G0DM0D3 Is Built For

AI Safety Researchers and Red Teamers Doing LLM Evaluation

G0DM0D3’s primary audience is people who need to study model behavior systematically across a large population of models. The ULTRAPLINIAN engine produces reproducible, comparable outputs across up to 55 models from a single prompt submission. Parseltongue enables controlled study of how input perturbations affect model responses. AutoTune reduces the confounding variable of misconfigured sampling parameters.

This is genuinely useful infrastructure for alignment research — understanding where safety layers are thick and where they are thin across the current model ecosystem.

Prompt Engineers Benchmarking Model Outputs in Parallel

If you’re building products on top of LLMs and you want to know which model handles your specific use case best, GODMODE CLASSIC gives you five side-by-side outputs in the time it would take to run one manually. ULTRAPLINIAN scales that to 55 models with automatic ranking. For prompt engineers who’ve been doing this by hand across browser tabs, it’s a significant workflow improvement.

Privacy-First Developers Who Need a Client-Side AI Tool with No Telemetry

The combination of single-file architecture, localStorage key storage, no account creation, and AGPL-3.0 licensing makes G0DM0D3 one of the most privacy-preserving multi-model interfaces available. For developers who need to test model behavior with sensitive prompts and can’t route those prompts through a third-party server, local deployment of G0DM0D3 is a real option.

Limitations You Should Know

No Persistent Sessions — Browser History Only

Session history exists for the duration of your browser tab. Close the tab, lose the history. There’s no way to search past sessions, export conversation history, or resume a session on a different device. For research workflows where you need to track what you tested and what came back, you’ll need to manage your own logging externally.
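One lightweight way to handle that external logging is an append-only JSON Lines file. The sketch below assumes you collect the prompt, winner, and scores yourself (for example from the optional API server's responses); the function names and record fields are made up for illustration.

```python
import json
import os
import tempfile
import time
from pathlib import Path


def log_run(path: str, prompt: str, winner: str, scores: dict) -> None:
    """Append one evaluation run as a single JSON line."""
    record = {"ts": time.time(), "prompt": prompt, "winner": winner, "scores": scores}
    with Path(path).open("a", encoding="utf-8") as fh:
        fh.write(json.dumps(record, ensure_ascii=False) + "\n")


def load_runs(path: str) -> list:
    """Read every logged run back into a list of dicts."""
    with Path(path).open("r", encoding="utf-8") as fh:
        return [json.loads(line) for line in fh if line.strip()]


if __name__ == "__main__":
    # Write to a temporary directory so the demo leaves nothing behind.
    with tempfile.TemporaryDirectory() as tmp:
        log_path = os.path.join(tmp, "runs.jsonl")
        log_run(log_path, "What is a homoglyph?", "model-a", {"model-a": 91.0})
        print(load_runs(log_path))
```

Because each run is one line, the file can be searched with ordinary text tools and survives a closed browser tab.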

OpenRouter Dependency: You Still Pay Per-Token to OpenRouter

G0DM0D3 is free to use as software, but running it costs money because every model call is a paid OpenRouter API call (unless you use free-tier models with their rate limits). An ULTRAPLINIAN run at the full 55-model tier means 55 simultaneous API calls. For models like Claude or GPT-5, a single run can cost several dollars depending on prompt and output length.
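A back-of-the-envelope estimate helps before launching a full-tier run. The sketch below multiplies token counts by per-million-token prices for each model in the race; the flat price used in the demo is a placeholder, not real OpenRouter pricing, so substitute the current rates for the models you plan to run.

```python
def run_cost_usd(models: list, prompt_tokens: int, output_tokens: int) -> float:
    """Estimate the cost of sending one prompt to every model in the list.

    Each entry is (name, input $ per million tokens, output $ per million tokens).
    Assumes every model sees the same prompt and returns `output_tokens` tokens.
    """
    total = 0.0
    for _name, input_price, output_price in models:
        total += prompt_tokens / 1_000_000 * input_price
        total += output_tokens / 1_000_000 * output_price
    return total


if __name__ == "__main__":
    # Hypothetical: 55 models, all priced at $3 in / $15 out per million tokens.
    lineup = [(f"model-{i}", 3.0, 15.0) for i in range(55)]
    cost = run_cost_usd(lineup, prompt_tokens=2_000, output_tokens=1_500)
    print(f"Estimated cost for one full-tier run: ${cost:.2f}")
```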

Multi-Model Parallel Calls Require Stable Bandwidth

55 simultaneous HTTP requests to OpenRouter from a browser tab on a slow connection can cause visible degradation — slow responses, timeouts on some model slots, and incomplete race results. G0DM0D3 works fine on standard broadband. On mobile data or spotty WiFi, ULTRAPLINIAN at full tier can produce unreliable results. Start with GODMODE CLASSIC if testing on limited bandwidth.

Not a Production Backend — Single-File Frontend Only

The index.html is a research and evaluation tool, not a production API. It has no server-side request validation, no multi-user session isolation, and no rate limiting on the G0DM0D3 side (only whatever OpenRouter enforces). If you are building a product that exposes multi-model evaluation to end users, use the optional Node.js API server — not a public deployment of index.html.

FAQ

Does G0DM0D3 require an OpenRouter API key?

Yes. Every model call goes through OpenRouter’s API. You’ll need an account and at minimum a free-tier API key. For ULTRAPLINIAN at higher tiers using paid models, you’ll need credits loaded in your OpenRouter account.

Is G0DM0D3 safe? Where does my API key go?

Your API key is stored in your browser’s localStorage. It is never sent to a G0DM0D3 server, because in the default single-file deployment there is no G0DM0D3 server. Every API call goes directly from your browser to OpenRouter. Locally hosted deployments (python3 -m http.server 8000) are the most secure option since no third party hosts the HTML file.

What’s the difference between G0DM0D3 and OpenRouter’s native interface?

OpenRouter’s native chat interface lets you select and query one model at a time. G0DM0D3 adds the parallel multi-model racing engine (GODMODE CLASSIC and ULTRAPLINIAN), the 100-point composite scoring layer, Parseltongue input perturbation for red-team research, AutoTune adaptive sampling, and STM output normalization — all in a single-file, no-install package.
