Claude Code Hidden Features Found in the Leaked Source: Full List (2026)

Every hidden feature found in Claude Code's leaked source: BUDDY the AI pet, KAIROS the always-on assistant, ULTRAPLAN, Undercover Mode, and 17 more unreleased tools. Full list inside.

I was casually scrolling my feed when one tweet stopped me cold. Security researcher Chaofan Shou had just dropped the news: the entire Claude Code source — all 512,000 lines of TypeScript spread across nearly 1,900 files — was sitting wide open on npm. No hack, no exploit. Just one forgotten .map debugging file that never got added to .npmignore.

By lunchtime the developer internet was in full meltdown mode.

I spent the next two days buried in archived mirrors, frantic Discord threads, and deep-dive breakdowns from engineers who tore the code apart line by line. What they uncovered inside is wild: a whole collection of powerful features Anthropic has already built… but hasn’t shipped yet.

So if you’ve ever wondered what Claude is actually becoming behind the scenes, buckle up.

Here’s the most complete public breakdown of the hidden features found in the Claude Code leak.

How We Know: What the Leaked Source Actually Contains

The leaked source was exposed through a source map file published to the npm registry. The codebase comprised roughly 1,900 TypeScript files, 512,000+ lines of code, and approximately 40 built-in tools.

The leaked source contains 44 compile-time feature flags. At least 20 of them gate capabilities that are built and tested but do not appear in external releases. The reason these features are invisible in the version you run is Bun’s compile-time dead code elimination: when a flag is set to false at build time, the code simply doesn’t exist in the output binary. This means the 108 gated modules documented in the source are real, working code — not speculative concepts or half-built prototypes.
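To make the flag mechanism concrete, here is a minimal TypeScript sketch. The flag table, the tool names, and the `registeredTools` function are all hypothetical and not taken from the leak. It only mimics the visible effect at runtime; in a real build the bundler would fold the constant and drop the dead branch before anything ships.

```typescript
// Hypothetical flag table. In a real build these constants would be injected
// by the bundler (an assumption; the exact mechanism is not shown here).
const BUILD_FLAGS = {
  KAIROS: false,
  BUDDY: false,
} as const;

function registeredTools(flags: { KAIROS: boolean; BUDDY: boolean }): string[] {
  const tools: string[] = ["read_file", "edit_file"]; // illustrative tool names
  if (flags.KAIROS) {
    // When KAIROS is a build-time `false`, a bundler with dead code
    // elimination can remove this whole branch from the output.
    tools.push("push_notification");
  }
  if (flags.BUDDY) {
    tools.push("buddy_companion");
  }
  return tools;
}

const externalBuildTools = registeredTools(BUILD_FLAGS);
const internalBuildTools = registeredTools({ KAIROS: true, BUDDY: true });
```

In this sketch the external build ends up with only the two base tools, which matches the article's point: a gated feature is not switched off, it is simply absent.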

Why compile-time flags instead of runtime toggles? A runtime toggle can be discovered by inspecting the binary or intercepting API calls. A compile-time flag leaves no trace, because the feature doesn’t exist in the shipped artifact.

Internal codenames appear throughout the source, including model codenames such as Capybara. “Tengu” shows up hundreds of times as a prefix for feature flags and analytics events, so it is almost certainly Claude Code’s internal project codename.

BUDDY — The Complete AI Pet System

This is the one that broke Twitter. buddy/companion.ts implements a full Tamagotchi-style AI pet that lives in a speech bubble next to your terminal input. 18 species total, hidden via String.fromCharCode() arrays. Rarity tiers: Common → Uncommon → Rare → Epic → Legendary. 1% shiny chance, independent of rarity. Stats: DEBUGGING / PATIENCE / CHAOS / WISDOM / SNARK.

The species names were deliberately obfuscated. Community analysis of the String.fromCharCode() arrays decoded the full species list, which includes: duck, dragon, axolotl, capybara, mushroom, ghost, nebulynx, and more — 18 total. The capybara species appearing in a tool whose unreleased top model tier is also codenamed Capybara is, I’m choosing to believe, intentional.
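For readers unfamiliar with the trick, this is roughly what char-code obfuscation looks like. The two arrays below are my own illustration built from species names mentioned above; they are not copied from the leaked file, and `decodeSpecies` is a hypothetical helper name.

```typescript
// Illustrative char-code arrays (not the actual arrays from the leak).
const obfuscatedSpecies: number[][] = [
  [100, 117, 99, 107],                  // "duck"
  [99, 97, 112, 121, 98, 97, 114, 97],  // "capybara"
];

// Turn an array of UTF-16 code units back into a readable string.
function decodeSpecies(codes: number[]): string {
  return String.fromCharCode(...codes);
}

const decodedSpecies = obfuscatedSpecies.map(decodeSpecies);
```

This hides names from a plain-text search of the bundle, but anyone who runs the arrays through `String.fromCharCode` recovers them immediately, which is exactly how the community decoded the list.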

Your buddy’s species is determined by a Mulberry32 PRNG, a fast 32-bit pseudo-random number generator seeded from your userId hash with the salt 'friend-2026-401'. The same user always gets the same buddy. This is a deliberately considered design choice — it means every user has a unique but stable companion identity. You can’t reroll by restarting. Your buddy is yours.
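Here is a minimal sketch of how a seeded roll like this could work. The Mulberry32 function is the standard public algorithm. Everything else is an assumption on my part: the FNV-1a hash, the way the salt is appended to the user ID, the `rollBuddy` helper, and the species array, which contains only the seven names mentioned above rather than the full 18.

```typescript
// FNV-1a string hash (assumption: the article does not say which hash the source uses).
function fnv1a(text: string): number {
  let hash = 0x811c9dc5;
  for (let i = 0; i < text.length; i++) {
    hash ^= text.charCodeAt(i);
    hash = Math.imul(hash, 0x01000193);
  }
  return hash >>> 0;
}

// Standard Mulberry32: returns a generator of floats in [0, 1).
function mulberry32(seed: number): () => number {
  let state = seed >>> 0;
  return () => {
    state = (state + 0x6d2b79f5) | 0;
    let t = Math.imul(state ^ (state >>> 15), 1 | state);
    t = (t + Math.imul(t ^ (t >>> 7), 61 | t)) ^ t;
    return ((t ^ (t >>> 14)) >>> 0) / 4294967296;
  };
}

const BUDDY_SALT = "friend-2026-401"; // salt value as reported in the leak
const KNOWN_SPECIES = [
  "duck", "dragon", "axolotl", "capybara", "mushroom", "ghost", "nebulynx",
]; // partial list; the source reportedly has 18

function rollBuddy(userId: string): { species: string; shiny: boolean } {
  // Assumption: salt is simply appended to the user ID before hashing.
  const rng = mulberry32(fnv1a(userId + BUDDY_SALT));
  const species = KNOWN_SPECIES[Math.floor(rng() * KNOWN_SPECIES.length)] ?? "duck";
  const shiny = rng() < 0.01; // 1% shiny chance, rolled separately from species
  return { species, shiny };
}
```

Because the generator is seeded only from the user ID and a fixed salt, calling `rollBuddy` twice with the same ID returns the same result, which is why restarting can't reroll your buddy.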

The companion system prompt instructs Claude: “A small {species} named {name} sits beside the user’s input box and occasionally comments in a speech bubble. You’re not {name} — it’s a separate watcher.” Claude generates a custom name and personality (“soul description”) on first hatch. The buddy can respond when addressed by name.

KAIROS — The Persistent Always-On Assistant

The most revealing feature in the leak is KAIROS, referenced more than 150 times in the source. KAIROS represents a fundamental shift in user experience: an autonomous daemon mode. While current AI tools are largely reactive, KAIROS allows Claude Code to operate as an always-on background agent.

It doesn’t wait for you to open a terminal. It doesn’t wait for you to type. KAIROS is an always-on daemon that runs 24/7 and proactively acts on your behalf. The scaffolding includes a /dream skill for nightly memory distillation, GitHub webhook subscriptions, and background daemon workers on a five-minute cron refresh.

The nightly “dreaming” phase is handled by a separate subsystem called autoDream. The autoDream logic merges disparate observations, removes logical contradictions, and converts vague insights into absolute facts — pruning the memory store to ≤200 lines / 25KB per the source constraints.
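The size cap is the easiest part of autoDream to picture in code. The sketch below covers only that cap, not the merging or contradiction removal. The function names, the choice to drop the oldest lines first, and reading "25KB" as 25 × 1024 bytes are all my assumptions.

```typescript
const MAX_MEMORY_LINES = 200;
const MAX_MEMORY_BYTES = 25 * 1024; // assumes "25KB" means 25 KiB

// UTF-8 byte length of a well-formed string, computed without platform APIs.
function utf8ByteLength(text: string): number {
  let bytes = 0;
  for (let i = 0; i < text.length; i++) {
    const code = text.charCodeAt(i);
    if (code < 0x80) bytes += 1;
    else if (code < 0x800) bytes += 2;
    else if (code >= 0xd800 && code <= 0xdbff) { bytes += 4; i++; } // surrogate pair
    else bytes += 3;
  }
  return bytes;
}

// Keep the newest lines that fit within both limits.
// Dropping the oldest entries first is an assumption, not documented behavior.
function pruneMemory(lines: string[]): string[] {
  const kept: string[] = [];
  let bytes = 0;
  for (let i = lines.length - 1; i >= 0 && kept.length < MAX_MEMORY_LINES; i--) {
    const line = lines[i] ?? "";
    const cost = utf8ByteLength(line) + 1; // +1 for the trailing newline
    if (bytes + cost > MAX_MEMORY_BYTES) break;
    bytes += cost;
    kept.unshift(line); // preserve original order
  }
  return kept;
}
```

Whichever limit is hit first wins: many short notes get cut at 200 lines, while a few very long ones get cut by the byte budget well before that.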

KAIROS also gets tools that regular Claude Code sessions don’t have: push notifications, file sends, and GitHub PR subscriptions. The companion mode uses “brief output mode” — extremely concise responses designed for persistent background operation, not interactive conversation.

The Anthropic research blog hasn’t mentioned KAIROS by name. The feature gating here feels intentional — not just “not ready yet,” but “not ready to explain yet.”

ULTRAPLAN — 30-Minute Cloud Planning Sessions

ULTRAPLAN is a mode where Claude Code offloads a complex planning task to a remote Cloud Container Runtime session running Opus 4.6, gives it up to 30 minutes to think, and lets you approve the result from your browser. When approved, there’s a special sentinel value ULTRAPLAN_TELEPORT_LOCAL that “teleports” the result back to your local terminal.
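One way to picture the flow is as a small state function over a remote planning job. This is a loose sketch under stated assumptions: only the name `ULTRAPLAN_TELEPORT_LOCAL` and the 30-minute budget come from the leak. The string value of the sentinel, the `PlanJob` shape, and the state names are hypothetical.

```typescript
// Sentinel name from the leak; using the name as its string value is an assumption.
const ULTRAPLAN_TELEPORT_LOCAL = "ULTRAPLAN_TELEPORT_LOCAL";
const PLANNING_BUDGET_MS = 30 * 60 * 1000; // up to 30 minutes of planning

// Hypothetical shape for a remote planning job.
type PlanJob = {
  startedAtMs: number;
  planText: string | null;      // null while the remote session is still thinking
  reviewOutcome: string | null; // null until the user reviews the plan in the browser
};

type PlanState =
  | "planning"
  | "timed_out"
  | "awaiting_review"
  | "teleport_to_local"
  | "rejected";

function planState(job: PlanJob, nowMs: number): PlanState {
  if (job.planText === null) {
    return nowMs - job.startedAtMs > PLANNING_BUDGET_MS ? "timed_out" : "planning";
  }
  if (job.reviewOutcome === null) return "awaiting_review";
  // Only the sentinel sends the approved plan back to the local terminal.
  return job.reviewOutcome === ULTRAPLAN_TELEPORT_LOCAL ? "teleport_to_local" : "rejected";
}
```

The useful property is that nothing local happens until the review step produces the sentinel, so a plan can sit in the browser untouched for as long as you like.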

The architecture here is the key insight: some tasks are simply too expensive to plan inside a standard session. ULTRAPLAN acknowledges this by making the planning phase a separate, asynchronous job — closer to a background CI run than a chat interaction.

This is where Claude Code’s architecture diverges most sharply from session-based tools. ULTRAPLAN is designed specifically for architectural tasks: large-scale refactors, cross-service migrations, any planning problem where getting the approach wrong at step one cascades through everything downstream. You submit the task, walk away, and come back to review a 30-minute Opus planning session from your browser before a single file gets touched.

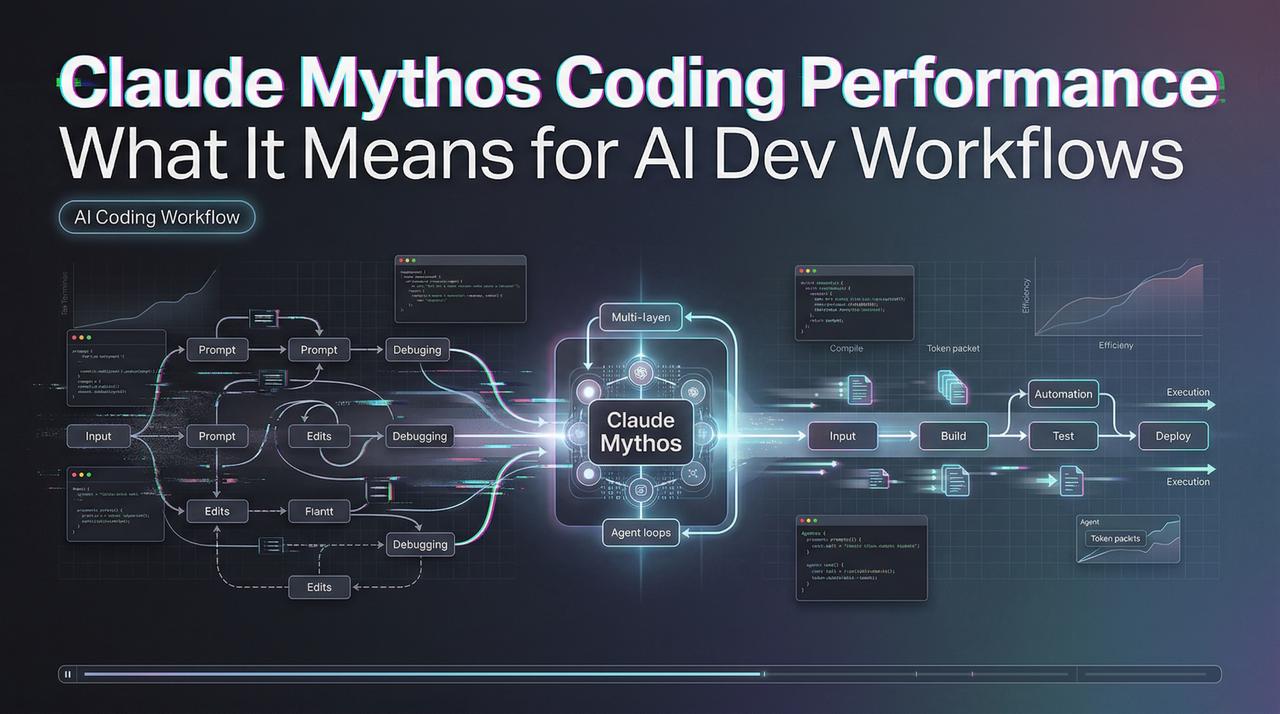

Coordinator Mode — One Claude Managing Multiple Worker Agents

Claude Code has a full multi-agent orchestration system in coordinator/, activated via CLAUDE_CODE_COORDINATOR_MODE=1. When enabled, Claude Code transforms from a single agent into a coordinator that spawns, directs, and manages multiple worker agents in parallel.

The coordinator assigns tasks and maintains a shared team memory space across all agents. Workers request human approval for dangerous operations through a mailbox system. An atomic claim mechanism prevents two workers from handling the same approval request simultaneously.
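The atomic claim idea can be sketched in a few lines. The class and method names here are hypothetical, and the real mailbox may well be file-based or cross-process. This in-memory version relies on JavaScript's single-threaded execution to make the check-and-set step indivisible.

```typescript
// Hypothetical in-memory mailbox; the leaked implementation may differ substantially.
class ApprovalMailbox {
  private claims = new Map<string, string>(); // requestId -> claiming workerId

  // Returns true only for the first worker to claim a given request.
  claim(requestId: string, workerId: string): boolean {
    if (this.claims.has(requestId)) return false;
    this.claims.set(requestId, workerId);
    return true;
  }

  owner(requestId: string): string | undefined {
    return this.claims.get(requestId);
  }
}

const mailbox = new ApprovalMailbox();
const workerAWon = mailbox.claim("approve-rm-rf", "worker-a");
const workerBWon = mailbox.claim("approve-rm-rf", "worker-b");
```

Here the first claim succeeds and the second is refused, so exactly one worker ends up responsible for each approval request.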

The multi-agent coordinator in coordinatorMode.ts is interesting because the orchestration algorithm is a prompt, not code. It manages worker agents through system prompt instructions like “Do not rubber-stamp weak work” and “You must understand findings before directing follow-up work. Never hand off understanding to another worker.”

This is distinct from the Agent Teams feature that shipped with Opus 4.6. Agent Teams runs multiple peer Claude sessions that communicate through a mailbox. Coordinator Mode is a hierarchical structure — one Claude instance with explicit authority over multiple workers, with shared memory and structured approval gating.

Undercover Mode — The One That Raised the Most Questions

Prompt instructions in a file called undercover.ts state: “You are operating UNDERCOVER in a PUBLIC/OPEN-SOURCE repository. Your commit messages, PR titles, and PR bodies MUST NOT contain ANY Anthropic-internal information. Do not blow your cover.”

This auto-triggers for Anthropic employees (USER_TYPE === 'ant') in public repos. There is NO force-OFF: “if we’re not confident we’re in an internal repo, we stay undercover.” The mode strips AI attribution, internal codenames (Tengu, Capybara), and Co-Authored-By lines from all git output.
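A rough sketch of that logic, assuming a simple boolean for "confirmed internal repo". The function names, the `"external"` user type, and the regex are my own illustration; only the `'ant'` check, the default-to-undercover rule, and the list of things stripped come from the reported source.

```typescript
type UserType = "ant" | "external"; // "external" is a placeholder for non-employee accounts

// Undercover unless we are confident the repo is internal.
function isUndercover(userType: UserType, confirmedInternalRepo: boolean): boolean {
  return userType === "ant" && !confirmedInternalRepo;
}

// Remove Co-Authored-By trailer lines from a commit message.
function stripAttribution(commitMessage: string): string {
  return commitMessage
    .split("\n")
    .filter((line) => !/^co-authored-by:/i.test(line.trim()))
    .join("\n")
    .trim();
}

const INTERNAL_CODENAMES = ["Tengu", "Capybara"];

// Report which internal codenames still appear in the text (case-insensitive).
function findCodenames(text: string): string[] {
  const lowered = text.toLowerCase();
  return INTERNAL_CODENAMES.filter(
    (codename) => lowered.indexOf(codename.toLowerCase()) !== -1,
  );
}
```

Note the asymmetry in `isUndercover`: an employee account is only ever let out of the mode by positive confirmation that the repo is internal, which is the "no force-OFF" behavior described above.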

The practical effect: Anthropic engineers using Claude Code to contribute to public open-source projects generate commits with no AI fingerprint. This provides a technical framework for any organization wishing to use AI agents for public-facing work without disclosure — a capability that enterprise competitors will likely view as a mandatory feature.

This does not affect external users. The trigger is USER_TYPE === 'ant' — an Anthropic employee account flag. Regular Claude Code sessions never enter Undercover Mode. The Register noted an additional mystery: a prior “Melon Mode” for Anthropic employees that appeared in earlier reverse-engineered versions is absent from the current leaked source, suggesting it was removed or renamed.

The Full Flag List — Every Other Unreleased Feature

Community analysis documented the remaining gated features. These all come from the leaked source, and none has been confirmed by Anthropic as shipping.

The anti-distillation flag is worth calling out specifically. When ANTI_DISTILLATION_CC is enabled, Claude Code sends anti_distillation: ['fake_tools'] in its API requests. The idea: if someone is recording Claude Code’s API traffic to train a competing model, the fake tools pollute that training data.
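In request terms, that would look something like the sketch below. Only the `anti_distillation: ['fake_tools']` field comes from the leak. The `ApiRequestBody` shape, the `buildRequestBody` helper, and the placeholder model name are hypothetical, and this is not a description of any public API.

```typescript
// Hypothetical request shape; only `anti_distillation` is taken from the leak.
interface ApiRequestBody {
  model: string;
  messages: { role: string; content: string }[];
  anti_distillation?: string[];
}

function buildRequestBody(antiDistillationEnabled: boolean, prompt: string): ApiRequestBody {
  const body: ApiRequestBody = {
    model: "example-model", // placeholder, not a real model identifier
    messages: [{ role: "user", content: prompt }],
  };
  if (antiDistillationEnabled) {
    // Field name and value as reported in the leaked source.
    body.anti_distillation = ["fake_tools"];
  }
  return body;
}

const flaggedBody = buildRequestBody(true, "hello");
const plainBody = buildRequestBody(false, "hello");
```

With the flag off, the field is omitted entirely rather than sent empty, so ordinary traffic carries no hint that the option exists.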

FAQ

When will BUDDY be officially released?

The leaked source includes internal comments suggesting a teaser for April 1–7 and a full launch target in May 2026 — but these are unverified notes from internal code, not official announcements. Anthropic hasn’t confirmed any public timeline for BUDDY. The /buddy command did activate on April 1 as the leaked source suggested, which is the strongest signal that the May launch date from the source comments may also hold — but treat it as unconfirmed.

Is KAIROS available in any current Claude Code version?

No. The flags gating KAIROS and the other unreleased features are set to false in external builds, so the gated code is compiled out. You cannot enable KAIROS by editing config files or setting environment variables in a standard Claude Code installation, because the code doesn’t exist in the shipped binary.

What is Undercover Mode and does it affect regular users?

It doesn’t affect you. Undercover Mode triggers only for Anthropic employee accounts (USER_TYPE === 'ant') and only in public repositories. It’s designed for internal dogfooding without leaving AI attribution in public git history.

Are these hidden features confirmed by Anthropic?

No. Everything in this article comes from community analysis of the leaked Claude Code source. Anthropic confirmed the leak itself (“a release packaging issue caused by human error”) but has made no statements about specific features documented here.

Where can I read the full analysis of the Claude Code leaked source?

Anthropic patched the npm package quickly, but GitHub mirrors and archived versions spread before the patch landed. Community analysis threads — including detailed breakdowns of BUDDY, KAIROS, and Undercover Mode — remain accessible through public repositories. Search for claude-code-source-leak on GitHub or claurst for a Rust-based community analysis repo. Anthropic has filed DMCA takedowns against direct mirrors on major platforms; decentralized archives and clean-room rewrites in Python and Rust remain accessible.
