How to Use Seedance 2.0 via API: Async Jobs, Retries, and Result Handling

Hello, everybody. Dora is coming. You know, I kept nudging a long-running task in the Seedance 2.0 API and catching myself alt-tabbing to check if it finished. Not broken, just a low-grade drag. Over a few days, I ran a handful of real jobs (content transforms and batch extractions) and paid attention to the parts that actually changed how my day felt.

What follows is the handful of patterns that made the work steadier: how I submit, track, and collect results: how I package inputs: what I retry (and what I don’t): and the basic guardrails that kept me from tripping over keys, costs, and logs. If you’re already juggling APIs, this will feel familiar, on purpose.

API job lifecycle (submit → status → result)

I tried to keep the Seedance 2.0 API simple in my head: three moves, submit, check status, fetch result. When I actually treated it that way, the mental load dipped.

Submit: I send a job with a clear, self-contained payload and a client-generated idempotency key (more on that later). I write down, in code comments for myself, what I consider “done.” Not a philosophical thing: just the exact shape of success (e.g., JSON with fields X, Y, Z: a checksum that matches: no partials).

Status: I stopped thinking of status as one thing. I bucket it:

- In-progress (safe to poll)

- Blocked (needs my action, usually bad input)

- Terminal (succeeded or permanently failed)

That tiny split changed how I checked. If it’s in-progress, I back off and wait. If it’s blocked, I fix inputs. If it’s terminal, I move on. I don’t over-interpret intermediate labels.

Result: When a job finishes, I pull outputs in a format I can trust later, usually JSON with a stable schema and a simple content hash. If the API supports webhooks, I still keep polling as a fallback. Webhooks are great until a firewall rule or queue glitch eats one. Polling is boring and reliable.

Two small field notes:

- Early runs didn’t save time. After a few iterations, I noticed they saved attention. Fewer “did that finish?” checks, more “I’ll see it when it’s truly done.”

- I avoid chaining jobs inside the API when I can help it. One job, one result. If I need fan-out or dependency logic, I keep that in my system. It makes blame and retries cleaner.

If you’re building around this, a simple state machine helps. No drama, just a few enum states and clear transitions. It’s not fancy, but it absorbs edge cases without turning into spaghetti.

Payload design (text + reference packaging)

Most of my friction came from payloads. Not failures, just mismatches. When I lifted the structure a bit, things clicked.

I stopped sending giant text blobs inline when I didn’t have to. Instead, I:

- Send concise text instructions and parameters inline.

- Pass large artifacts (docs, media, prior outputs) by reference, signed URLs or object keys, with versioned identifiers.

This split made retries safer and reduced upload churn. It also made logs saner: I could see what changed between runs without scrolling through megabytes of content. If the Seedance 2.0 API needs both text and references, I keep them under a single “input” object with clear names. Future me appreciates not hunting for stray fields.

Validating inputs before submit

Before I send anything, I run three checks locally:

- Shape: Does the payload match my own schema? Required fields present, types correct, enums valid. I use a JSON Schema validator for this.

- References: Do URLs resolve and meet size/type rules? I preflight HEAD requests and attach content-length and checksum when available.

- Expectations: Are the parameters consistent with the kind of job I’m asking for? If I say “summarize,” I don’t also pass “full_transcript=true.” Silly, but it happens.

These checks don’t make errors disappear: they move them to the cheapest place to fix, before network hops, before rate limits, before I’m reading logs at midnight.

Reliability patterns

After a week of steady use, most of my headaches came from retries I couldn’t reason about. The cure was simple patterns I could explain to a teammate in a sentence.

I split failures into two piles:

- Safe to retry (transient network issues, 5xx, timeouts before server work starts)

- Don’t retry blindly (validation errors, quota exceeded, unknown states)

Once I did that, the rest fell into place.

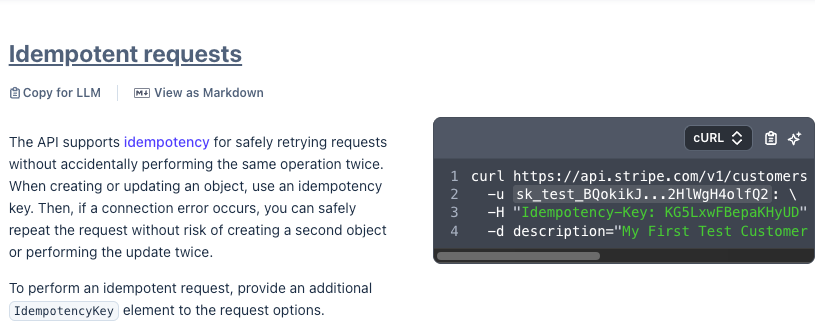

Idempotency keys + safe retries

I add a unique idempotency key to each job submission. The server should treat repeats with the same key as the same request. In practice, I assume I might not know whether a request reached the server. So I make retries safe by design.

What helped:

- Derive the key from stable inputs (e.g., a UUID plus a hash of the normalized payload) so accidental duplicates collide on purpose.

- Store the key and the intended effect with a short TTL on my side. If I lose a response, I can confidently retry.

- Treat non-idempotent operations (like “start and bill”) as idempotent at the client boundary. Either the server enforces it or I avoid automatic retries.

If you want a solid mental model, the way payments APIs handle this is clear. Stripe’s idempotency keys docs are concise and worth a skim, even if you’re not moving money.

Timeouts, backoff, and retry caps

I keep three numbers close by: request timeout, initial backoff, and max attempts.

My default shape looks like this:

- Timeouts: conservative but not stingy. Long enough for typical server work, short enough to avoid zombie sockets. If a job is truly long-running, I prefer a quick submit call and separate polling.

- Backoff: exponential with jitter. Jitter matters. Without it, synchronized retries behave like a small DDoS.

- Caps: hard limits on total retries and total wall-clock time per job. After I hit a cap, I surface a human-friendly error and stop. No quiet thrashing.

In practice, these numbers changed twice: once after the first day (too aggressive), and once after I saw a pattern of short spikes around the hour mark (I added more jitter). None of it was fancy. It just made the system feel calmer.

Observability (logs, failure buckets, cost monitoring)

I don’t chase full tracing unless I need it. For Seedance 2.0 API work, three views were enough:

- Request logs with correlation IDs: I tag each submit, status, and result call with the same correlation ID. When something goes sideways, I can follow one job end to end without guesswork. OpenTelemetry’s semantic conventions are a helpful nudge if you’re setting this up fresh.

- Failure buckets: I group failures by cause (validation, auth, quota, timeout, 5xx, schema mismatch). Buckets make trends visible. If “quota” suddenly grows teeth on Mondays, I plan around it instead of firefighting.

- Cost lens: I log estimated cost per job, inputs, outputs, retries included, and roll that up weekly. The point isn’t precision: it’s feeling the slope. A simple percentile view (P50, P95) shows if a few outliers are quietly eating the budget.

A small note on alerts: I keep them boring. No fireworks, just thresholds that map to action: “failure bucket > X for Y minutes” or “cost P95 jumps > Z% week over week.” I’d rather notice late than live in false positives. The energy saved pays off elsewhere.

Security & compliance basics (keys, user content handling)

Nothing fancy here, and that’s kind of the point. The basics do most of the work.

- Keys: I keep API keys out of code and rotate them on a schedule. Per-environment keys, least privilege if scopes exist, and no sharing across teams. If the API supports short-lived tokens, I use them.

- User content: I don’t log raw user data. I log hashes, sizes, and references. If I need samples for debugging, I scrub or redact first, with a clear retention timer.

- Data handling: I tag every job with a tenant or user ID and carry that tag into logs and storage. It’s mundane, but it keeps access checks from turning into folklore.

- Storage: Results land in a bucket or database with server-side encryption and tight ACLs. Audit trails matter more than cleverness here.

- Compliance posture: If a team needs SOC 2 or GDPR comfort, I write down exactly what goes where, who can see it, and for how long. No promises in the dark. When in doubt, I check the vendor’s security page and data-processing terms instead of guessing.

The test for me is simple: could I explain this setup to a privacy-minded colleague without hand-waving? If not, I haven’t simplified it enough.

One last note

I came in looking for speed. What I got was steadiness. The Seedance 2.0 API didn’t remove steps: it made them predictable. That was enough to make the work feel lighter. I’m still watching how costs trend over a month, and whether my buckets hold up under new job types. Quiet questions, but good ones. Do you think so?