What We Know About oai-2.1 So Far

oai-2.1 has surfaced in leak chatter, but there is no official OpenAI model page for it. Here is what builders should and should not assume.

Dora here. Someone on my team forwarded a screenshot last month. A Codex model picker dropdown with names nobody had seen before: oai-2.1, arcanine, glacier-alpha, two variants of glacier-alpha-block. The reaction in our channel was instant: “Is this the next thing?” Followed by the question I actually cared about: should we be planning around it?

So I went to check. As of writing, there is no official docs page for oai-2.1. No pricing entry. No API reference. No deprecation notice. The name showed up in a dropdown, briefly, on some Pro accounts, then disappeared. That’s it.

This piece is not about what oai-2.1 might be. I have no idea, and anyone telling you otherwise is guessing. This is about what teams that care about new models should — and shouldn’t — do with information at this level of certainty. Mostly the shouldn’t.

Why oai-2.1 Is Being Discussed

Where the name appears

The sequence, as far as I can reconstruct it: on or around April 22, 2026, a Codex Pro user posted a screenshot of the model selector showing names beyond the publicly available roster. The list included gpt-5.5, unreleased at that point, alongside oai-2.1 and several codenames. The screenshot circulated. TestingCatalog amplified it. Hacker News picked up a thread. The names were pulled from the dropdown shortly after.

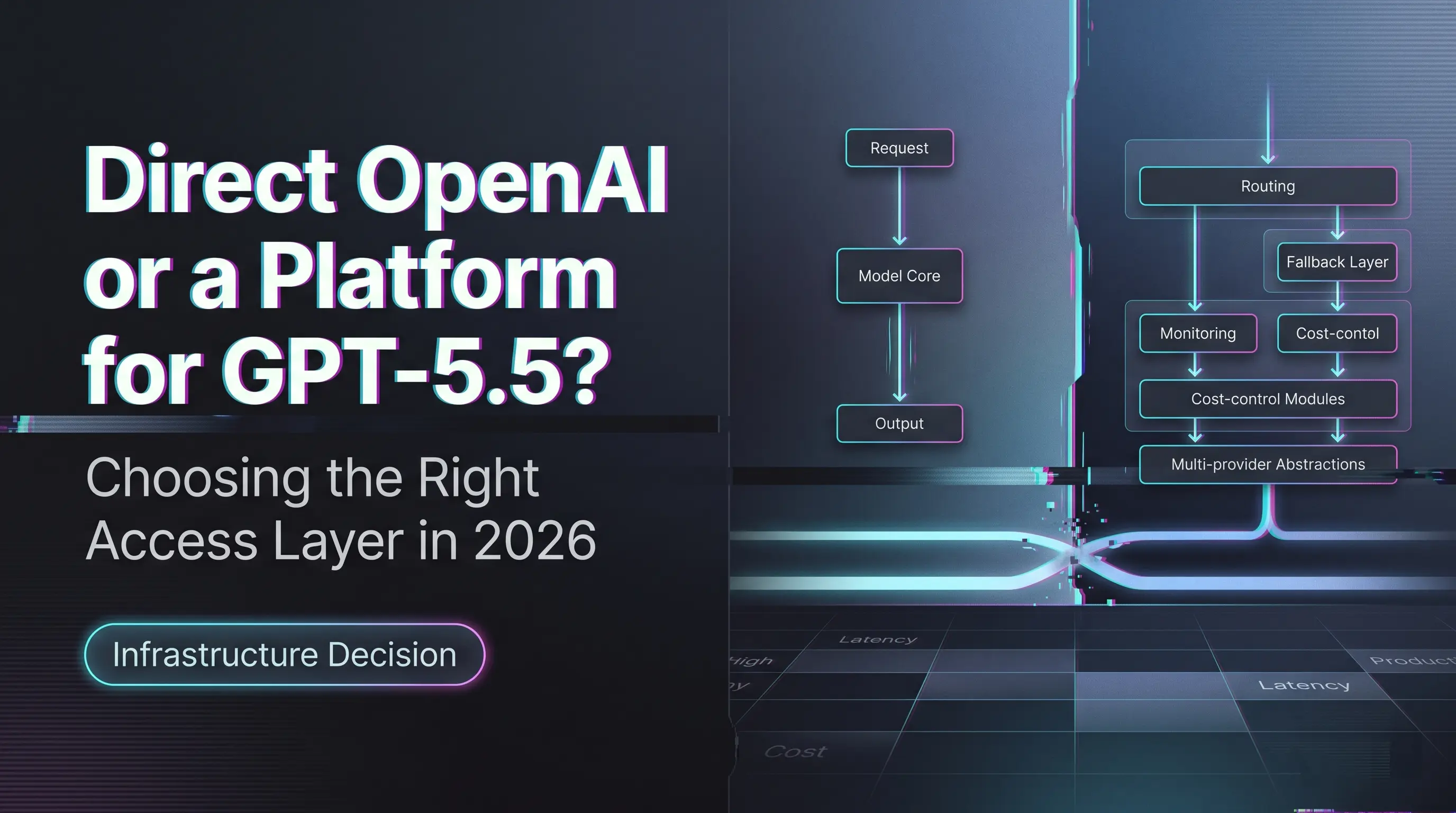

GPT-5.5 has since shipped. It now appears in the official Codex models catalog as the recommended frontier model for complex coding and agentic workflows. So one of the leaked names crossed from rumor into product.

The others — oai-2.1, arcanine, glacier-alpha, and the block variants — have not. They are still where they were on April 22: not in the docs, not in the API, not in pricing.

Why that does not equal a launch

A model name in a UI dropdown is one of the weakest possible signals in this category. It can mean a model is close to release. It can also mean an internal test slug, a routing variant, a renamed checkpoint, an A/B branch, a deprecated experiment that was never pruned from a config file, or something that will never see daylight. The same dropdown surfaced both a name that became a real product (gpt-5.5) and several that — at the time of writing — have not.

The fact that one leaked name resolved into a launch is not evidence the others will. It’s evidence that internal naming spaces are larger than public catalogs.

I paused here when sketching this article. Treating one confirmed launch as predictive of the others is the same reasoning error as treating a deprecation rumor as a deprecation notice. The signal type is wrong.

What Is Confirmed and What Is Not

Official model catalog reality check

If I take everything I can verify against OpenAI’s own surfaces today, the picture is short.

What is confirmed: gpt-5.5 exists, is documented, has an API entry, and is the current recommended Codex frontier model alongside gpt-5.4, gpt-5.4-mini, and the gpt-5.3-codex family. The OpenAI API changelog records its release with 1M context, image input, structured outputs, function calling, prompt caching, batch, and a long list of supported tools. Concrete, dated, dollarized.

What is not confirmed: anything about oai-2.1. There is no model card. No SKU. No pricing tier. No context window number. No modality list. No deprecation schedule. No association with any known capability cluster. The string oai-2.1 does not appear in any public-facing OpenAI documentation I can find as of the date this was written.

I want to be precise here, because the absence is the point. Not “the docs are sparse on oai-2.1.” Not “oai-2.1 has limited public information.” There is no public information about oai-2.1 beyond the fact that the string showed up in a UI selector. That is the entire knowable surface.

Why internal labels should not drive roadmap decisions

Internal model labels at large labs are not the same as products. They live in different lifecycles. Products are commitments: documented, priced, supported, governed by the public deprecation policy. Internal labels are working state. They get renamed, merged, split, killed, retired silently. Treating a working-state label as a product roadmap input is a category error.

The cost of that error compounds. Once a team starts saying “we’ll wait for oai-2.1” or “we should plan for oai-2.1,” it shows up in sprint planning, in vendor conversations, in capacity decisions. None of those should hinge on a name that has no documented existence.

That’s all I can confirm. The rest you’ll need to verify yourself, against the official surfaces, when and if something appears.

What Builders Should Watch Before Treating It as Real

This is where I want to be useful. If you are running a team that cares about new model releases (which describes most of the engineering and product leaders reading this), here is the checklist I use before letting a rumored model influence any decision. I wrote this down for myself after the third time someone pinged me about oai-2.1 asking if we should “do something.”

A rumored model is real enough to plan around when, and only when, all of the following are true:

- It has an entry in the official model catalog page for the relevant API surface (Codex, Responses, Chat Completions, Realtime).

- It has a documented pricing row, in dollars per million tokens or per call, on the published pricing page.

- It has at least one explicit capability claim from the vendor — context window, modality support, tool support, snapshot date.

- It has an API model string that returns a valid response when called against your account, not a 404 or model-not-found.

- It appears in the changelog with a release date, not just in a UI dropdown.

If any of these are missing, the model is not real for planning purposes. The dropdown showing the name is not one of these conditions. I checked.
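The checklist can be written down as an all-or-nothing gate. What follows is a minimal sketch, not an official tool: the class, field, and function names are hypothetical, and each boolean is meant to be filled in by hand after checking the official surfaces yourself.

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class ModelEvidence:
    """Evidence collected by hand from official surfaces (hypothetical field names)."""
    in_official_catalog: bool = False
    has_pricing_row: bool = False
    has_capability_claim: bool = False
    api_call_succeeds: bool = False
    in_changelog_with_date: bool = False


def missing_criteria(evidence: ModelEvidence) -> list[str]:
    """Return the names of the checklist items that are not yet satisfied."""
    return [f.name for f in fields(evidence) if not getattr(evidence, f.name)]


def is_plannable(evidence: ModelEvidence) -> bool:
    """A model is plannable only when every checklist item is true."""
    return not missing_criteria(evidence)


# A dropdown sighting is deliberately not a field, so it can never count.
rumored = ModelEvidence()
documented = ModelEvidence(True, True, True, True, True)
```

Here `is_plannable(rumored)` is false and `missing_criteria(rumored)` names all five gaps, which is a more useful answer in a planning meeting than “maybe.”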

A separate question: should you be watching at all? At what frequency? My rule is roughly weekly for the official changelog, never for screenshots. The signal-to-noise on screenshots is bad enough that the time spent triaging them costs more than the lead time it could possibly buy. OpenAI’s own guidance, embedded across its docs, lands on the same point: pin production applications to specific model snapshots, build evals that measure behavior across version changes, treat model selection as a stability decision. That’s the workflow that absorbs new model releases gracefully. Chasing leaked names is the opposite of that workflow.
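To make that workflow concrete, here is a rough sketch of the two habits it describes: refusing floating aliases in production config, and comparing a candidate model against the current one on a fixed eval set before switching. Everything in it is an assumption for illustration. The date-suffix pattern is one common snapshot naming style, the function names are hypothetical, and the stub functions stand in for real API calls.

```python
import re
from typing import Callable

# Assumption: pinned snapshot IDs end in a YYYY-MM-DD date; adjust for your vendor.
SNAPSHOT_SUFFIX = re.compile(r"-\d{4}-\d{2}-\d{2}$")

Model = Callable[[str], str]
EvalCase = tuple[str, str]  # (prompt, expected answer)


def is_pinned(model_id: str) -> bool:
    """True when the ID looks like a dated snapshot rather than a floating alias."""
    return bool(SNAPSHOT_SUFFIX.search(model_id))


def pass_rate(model: Model, cases: list[EvalCase]) -> float:
    """Fraction of cases where the trimmed model output equals the expected answer."""
    if not cases:
        raise ValueError("eval set is empty")
    passed = sum(1 for prompt, expected in cases if model(prompt).strip() == expected)
    return passed / len(cases)


def safe_to_switch(baseline: Model, candidate: Model,
                   cases: list[EvalCase], tolerance: float = 0.0) -> bool:
    """Allow a switch only if the candidate does not regress beyond the tolerance."""
    return pass_rate(candidate, cases) >= pass_rate(baseline, cases) - tolerance


# Stub models standing in for real API calls (hypothetical behavior).
CASES = [("2+2", "4"), ("capital of France", "Paris")]


def baseline_model(prompt: str) -> str:
    return {"2+2": "4", "capital of France": "Paris"}.get(prompt, "")


def candidate_model(prompt: str) -> str:
    return {"2+2": "4"}.get(prompt, "")
```

With these stubs the candidate fails one of the two cases, so `safe_to_switch(baseline_model, candidate_model, CASES)` is false. The point of the design is that a new model name only enters production by passing the same gate as any other change, whether it arrived through a changelog or a screenshot.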

The other thing I’d flag: if your team’s interest in oai-2.1 is really about something else — frustration with current model capabilities, anxiety about competitors moving faster, pressure to show forward motion — chasing the rumor won’t solve those. It will look like motion. It isn’t.

FAQ

Is oai-2.1 an official OpenAI model?

No. As of the writing of this article, oai-2.1 does not appear in OpenAI’s public model catalog, API reference, pricing page, or changelog. The only basis for the name is a brief appearance in a Codex model selector dropdown around April 22, 2026; the name was subsequently removed from that dropdown.

Is there any API, docs, or pricing page for it?

No. Calling the OpenAI API with the model string oai-2.1 is not supported through any documented route. There is no docs page, no pricing entry, no model card, and no associated deprecation or stability commitment.

Why do leaked model names spread so fast?

A few reasons. UI-level exposure feels closer to production than insider tips, because it implies the model is wired into a real system. Naming patterns invite speculation — glacier-alpha versus arcanine sounds like a story even when it isn’t one. And there is a standing audience of builders looking for early signals on capability shifts. None of that changes the underlying signal quality, which is low.

What should teams verify before planning around a rumored model?

The checklist above: documented in the official catalog, priced, capability-described, callable via API, and listed in the changelog with a release date. The OpenAI production best practices guide lands in the same neighborhood: model selection is a stability question, not a release-date one.

Conclusion

Here’s what I’d land on, if I had to give my team a one-line answer to “what about oai-2.1.” There is a string that appeared in a dropdown. There is no product. The two are not the same thing. Plan against the product when it exists.

I don’t know if oai-2.1 will become a public model. Saying so is better than making something up. If it does become one, it will appear on the official catalog page, with pricing and capability claims, and at that point it becomes worth a real evaluation. Until then, the most expensive thing a team can do is let a rumored name change a real decision.

To be verified. I’ll come back to this when something concrete shows up.
