
Hunyuan I2V turns a reference image and a text prompt into a short, coherent video clip. It is served through a ready-to-use REST inference API with no cold starts and affordable pricing.
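The summary above mentions a REST inference API but does not give the endpoint or authentication scheme. The sketch below uses only Python's standard library to build, without sending, a POST request for one run. The model id comes from this README. The base URL `https://api.wavespeed.ai/api/v3`, the `{base}/{model_id}` path pattern, Bearer-token auth and the `WAVESPEED_API_KEY` variable name are all assumptions; check the official API reference before relying on them.

```python
import json
import os
import urllib.request

# ASSUMPTION: base URL, path pattern and auth header are not stated in this
# README; verify them against the official API reference.
API_BASE = "https://api.wavespeed.ai/api/v3"  # assumed base URL
MODEL_ID = "wavespeed-ai/hunyuan-video/i2v"   # model id from this README


def build_request(payload: dict, api_key: str, base: str = API_BASE) -> urllib.request.Request:
    """Build (but do not send) a JSON POST request for one image-to-video run."""
    body = json.dumps(payload).encode("utf-8")
    return urllib.request.Request(
        url=f"{base}/{MODEL_ID}",
        data=body,
        method="POST",
        headers={
            "Content-Type": "application/json",
            # Assumed Bearer-token auth; the real header may differ.
            "Authorization": f"Bearer {api_key}",
        },
    )


if __name__ == "__main__":
    req = build_request(
        {"image": "https://example.com/portrait.png", "prompt": "Slow push-in, subtle fog"},
        # Hypothetical environment variable name.
        api_key=os.environ.get("WAVESPEED_API_KEY", "demo-key"),
    )
    print(req.get_method(), req.full_url)
    # Sending it would be urllib.request.urlopen(req); left out on purpose.
```

Keeping request construction separate from sending makes it easy to inspect or log the exact body before spending a paid run.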


Hunyuan Video I2V — wavespeed-ai/hunyuan-video/i2v

Hunyuan Video I2V is an image-to-video model that turns a single reference image into a short animated clip guided by a text prompt. Upload an image to lock in subject, composition, and style, then describe the action and camera behavior you want. The model is well-suited for cinematic motion, character-driven beats, and atmospheric scenes where you want the “still” to come alive with coherent movement.

Key capabilities

- Image-to-video generation from one reference image

- Prompt-driven motion: actions, expressions, environment changes, camera movement

- Stable composition anchored to the input image

- Good for cinematic, dramatic, and stylized shots

- Supports duration and inference-step controls for quality vs. speed tradeoffs

- Multiple output sizes (e.g., 1280×720)

Use cases

- Animate key art, posters, and character portraits into short clips

- Cinematic micro-stories: close-up → reveal, slow push-ins, mood-heavy scenes

- Atmosphere and VFX-style motion: rain, fog, embers, neon flicker, drifting particles

- Social content loops from a single still image

- Rapid previsualization for scenes before full video production

Pricing

| Output | Price |
|---|---|
| Per run | $0.40 |

At $0.40 per run, $10 covers 25 runs.
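A small budgeting helper follows from the table, assuming the flat $0.40 price applies to every run regardless of duration, steps or size (the table lists no other tiers). It uses `Decimal` so currency arithmetic stays exact; the function names are illustrative.

```python
from decimal import Decimal

PRICE_PER_RUN = Decimal("0.40")  # USD per run, from the pricing table above


def cost_for_runs(runs: int) -> Decimal:
    """Total cost in USD for a given number of runs."""
    if runs < 0:
        raise ValueError("runs must be non-negative")
    return PRICE_PER_RUN * runs


def runs_for_budget(budget_usd: Decimal) -> int:
    """Number of whole runs a budget covers (a partial run is not possible)."""
    return int(budget_usd // PRICE_PER_RUN)


if __name__ == "__main__":
    print(cost_for_runs(25))               # 10.00
    print(runs_for_budget(Decimal("10")))  # 25
```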

Inputs

- image (required): reference image to anchor subject and style

- prompt (required): action + camera directions

Parameters

- duration: clip length in seconds

- num_inference_steps: number of sampling steps (more steps often improve coherence and detail, at the cost of longer generation time)

- seed: random seed (-1 for a random seed; set a fixed value for reproducible results)

- size: output resolution (e.g., 1280×720)
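The inputs and parameters above can be collected into one request body. This is a sketch under the assumption that the JSON keys match the parameter names listed here. The README gives no defaults or valid ranges for `duration`, `num_inference_steps` or `size`, so the helper omits those keys unless you pass them, and its range checks are only basic sanity checks, not the service's real limits.

```python
from typing import Any, Optional


def build_payload(
    image: str,
    prompt: str,
    duration: Optional[float] = None,
    num_inference_steps: Optional[int] = None,
    seed: int = -1,
    size: Optional[str] = None,
) -> dict[str, Any]:
    """Collect the documented inputs and parameters into a JSON-ready dict.

    Defaults for duration, num_inference_steps and size are not documented,
    so those keys are left out unless explicitly passed.
    """
    # Required inputs: the reference image and the action/camera prompt.
    if not image:
        raise ValueError("image is required")
    if not prompt.strip():
        raise ValueError("prompt is required")
    # Basic sanity checks; the service's actual limits are not documented here.
    if duration is not None and duration <= 0:
        raise ValueError("duration must be a positive number of seconds")
    if num_inference_steps is not None and num_inference_steps < 1:
        raise ValueError("num_inference_steps must be at least 1")
    if seed < -1:
        raise ValueError("seed must be -1 (random) or a non-negative integer")

    payload: dict[str, Any] = {"image": image, "prompt": prompt.strip(), "seed": seed}
    if duration is not None:
        payload["duration"] = duration
    if num_inference_steps is not None:
        payload["num_inference_steps"] = num_inference_steps
    if size is not None:
        # ASSUMPTION: the exact size string the API expects ("1280*720",
        # "1280x720", ...) is not stated in the README; passed through as-is.
        payload["size"] = size
    return payload


if __name__ == "__main__":
    # Quality-vs-speed comparison: hold the seed fixed so only the step count
    # changes between runs. The image URL and step values are hypothetical.
    for steps in (20, 30, 40):
        print(build_payload(
            image="https://example.com/key-art.png",
            prompt="Slow cinematic push-in, subtle fog, smooth motion",
            num_inference_steps=steps,
            seed=1234,
        ))
```

Fixing the seed while sweeping `num_inference_steps` is one way to see the quality-vs-speed tradeoff directly, since the step count is then the only thing that changes between clips.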

Prompting guide (I2V)

Write prompts like a director’s brief:

- Subject: who/what is on screen

- Action: what changes over time (gestures, expression, environment)

- Camera: push-in, pull-back, pan, tilt, handheld vs. locked-off

- Mood/lighting: candlelight, moonlight, neon, fog, rim light

- Motion constraints: “subtle movement”, “no shaky camera”, “smooth dolly”
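One way to apply the director's-brief structure consistently is to keep the five components separate and join them in a fixed order. The sketch below is illustrative: the `DirectorBrief` class and its field names are hypothetical, and the joining style (subject and action as sentences, then comma-separated camera, mood and constraint directives) imitates the example prompt rather than any documented format.

```python
from dataclasses import dataclass, field


@dataclass
class DirectorBrief:
    """Hypothetical container for the five prompt components in the guide."""
    subject: str
    action: str
    camera: str = ""
    mood: str = ""
    constraints: list[str] = field(default_factory=list)

    def to_prompt(self) -> str:
        # Subject and action read as full sentences.
        sentences = [s.strip().rstrip(".") for s in (self.subject, self.action) if s.strip()]
        # Camera, mood/lighting and motion constraints are short comma-joined directives.
        directives = [
            d.strip().rstrip(".,")
            for d in (self.camera, self.mood, *self.constraints)
            if d.strip()
        ]
        text = ". ".join(sentences)
        if directives:
            text = (text + ". " if text else "") + ", ".join(directives)
        return text + "." if text else ""


if __name__ == "__main__":
    brief = DirectorBrief(
        subject="A lighthouse on a cliff",
        action="Waves crash below",
        camera="slow pull-back",
        mood="moonlight, drifting fog",
        constraints=["no shaky camera"],
    )
    print(brief.to_prompt())
    # A lighthouse on a cliff. Waves crash below. slow pull-back, moonlight, drifting fog, no shaky camera.
```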

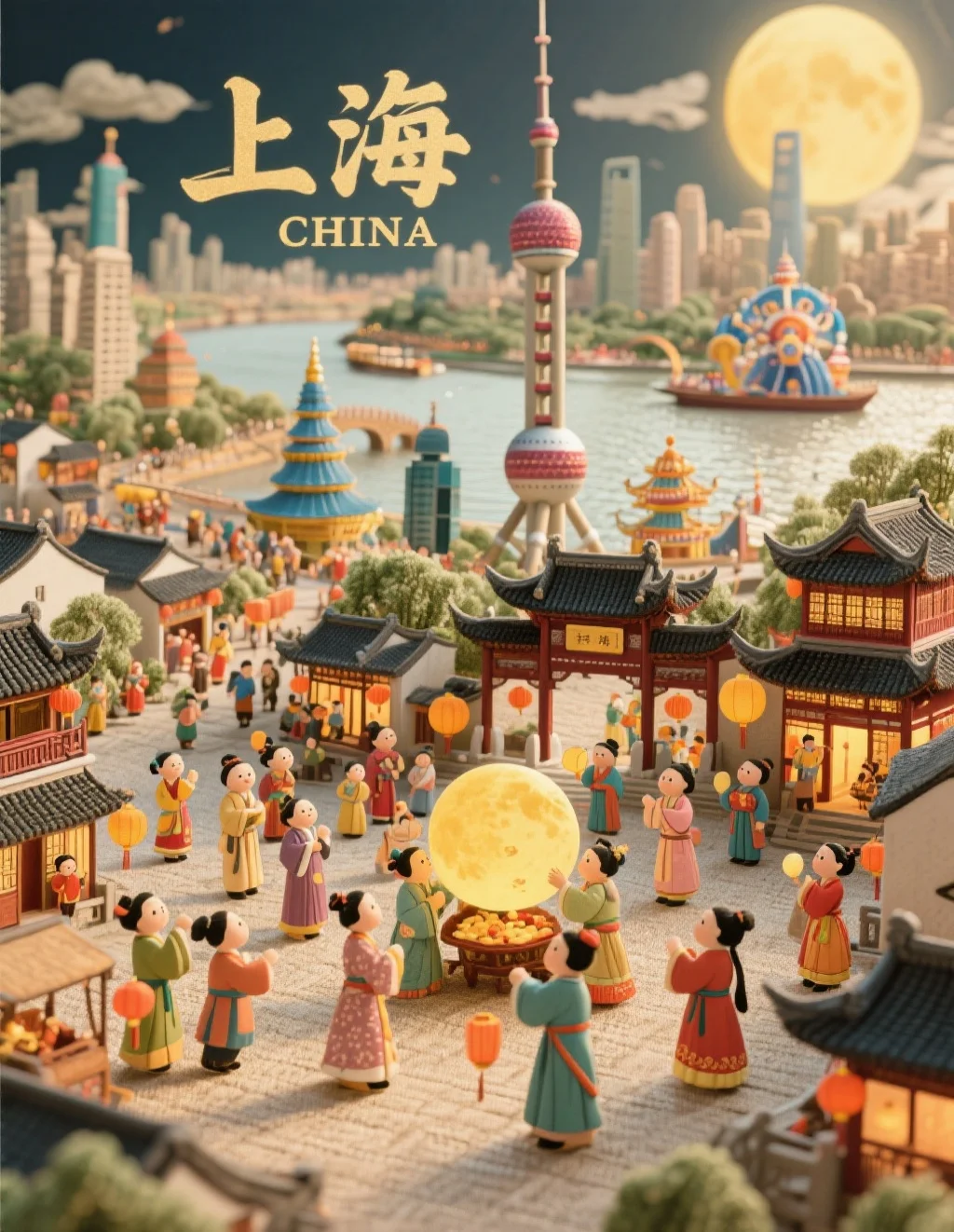

Example prompts

- A pale vampire woman stands at a candlelit window, crimson eyes glowing. She slowly raises her hand and taps long nails against the glass. Her expression shifts from seductive to dangerous as bats flutter past outside. Slow cinematic push-in, soft candle flicker, subtle fog, smooth motion, dramatic lighting.
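With `seed=-1` every run uses a random seed, so a take you like cannot be reproduced unless its seed is known. A simple workaround is to draw explicit seeds on the client and record them. The sketch below builds a small batch of requests for the example prompt that differ only in seed. The image URL is hypothetical, the JSON keys are assumed to match the README parameter names, and the 0 to 2**31-1 seed range is an assumption, since the README does not state the valid range.

```python
import random

# The example prompt above, verbatim.
EXAMPLE_PROMPT = (
    "A pale vampire woman stands at a candlelit window, crimson eyes glowing. "
    "She slowly raises her hand and taps long nails against the glass. "
    "Her expression shifts from seductive to dangerous as bats flutter past outside. "
    "Slow cinematic push-in, soft candle flicker, subtle fog, smooth motion, dramatic lighting."
)


def seed_batch(image: str, prompt: str, n: int, rng_seed: int = 0) -> list[dict]:
    """Return n payloads sharing image and prompt but with distinct explicit seeds.

    ASSUMPTION: seeds in 0..2**31-1 are accepted by the service.
    """
    rng = random.Random(rng_seed)  # local RNG so the batch itself is repeatable
    seeds = rng.sample(range(2**31), n)  # sampling without replacement gives distinct seeds
    return [{"image": image, "prompt": prompt, "seed": s} for s in seeds]


if __name__ == "__main__":
    # Submit each payload, then keep the seed of the clip you prefer.
    for payload in seed_batch("https://example.com/vampire-window.png", EXAMPLE_PROMPT, n=4):
        print(payload["seed"])
```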