claw-code Pricing and Access in 2026: What You Actually Pay

claw-code is open source, but using it isn't free. Here's a full breakdown of actual costs vs. Claude Code's subscription in 2026.

If you landed here after reading about the Claude Code source leak, you’re probably trying to figure out whether claw-code — the open-source rewrite that went from zero to 100K GitHub stars overnight — is a viable cost alternative for your team.

The honest answer is: it depends entirely on how you count the costs. “Open source” doesn’t mean free. Let me break down the actual numbers.

claw-code Is Open Source — But What Does That Actually Mean for Cost?

Licensing and Distribution Status

claw-code is an open-source AI coding agent framework built in Rust and Python — a clean-room rewrite of the Claude Code agent harness architecture, created by Sigrid Jin after the March 2026 source code leak. The project reimplements core architectural patterns — including the tool system, query engine, multi-agent orchestration, and memory management — without copying any proprietary source code.

The legal status here matters for your procurement decision. Anthropic’s DMCA takedowns targeted direct mirrors of the leaked source. The clean-room argument is that a Python/Rust reimplementation that copies no proprietary code falls outside the reach of a DMCA takedown, but that question has never been adjudicated in court. As of April 1, 2026, Anthropic had retracted most of its DMCA notices and was focusing enforcement only on repositories that host the leaked source directly.

Bottom line for an engineering manager: claw-code is currently available, actively maintained, and not subject to active takedown. Whether Anthropic might pursue further legal action against clean-room rewrites is unresolved. The current Python workspace is not yet a complete one-to-one replacement for the original system. Rust rewrite work is ongoing with no stable release date published.

What You Still Pay for: API Calls, Infrastructure, Maintenance

This is the cost that kills the “free tool” narrative. claw-code’s harness architecture is provider-agnostic: it supports multiple LLM providers, including Claude, OpenAI, and local models. But the harness doesn’t generate the intelligence; the LLM does. And the LLM costs money regardless of which harness you use to call it.

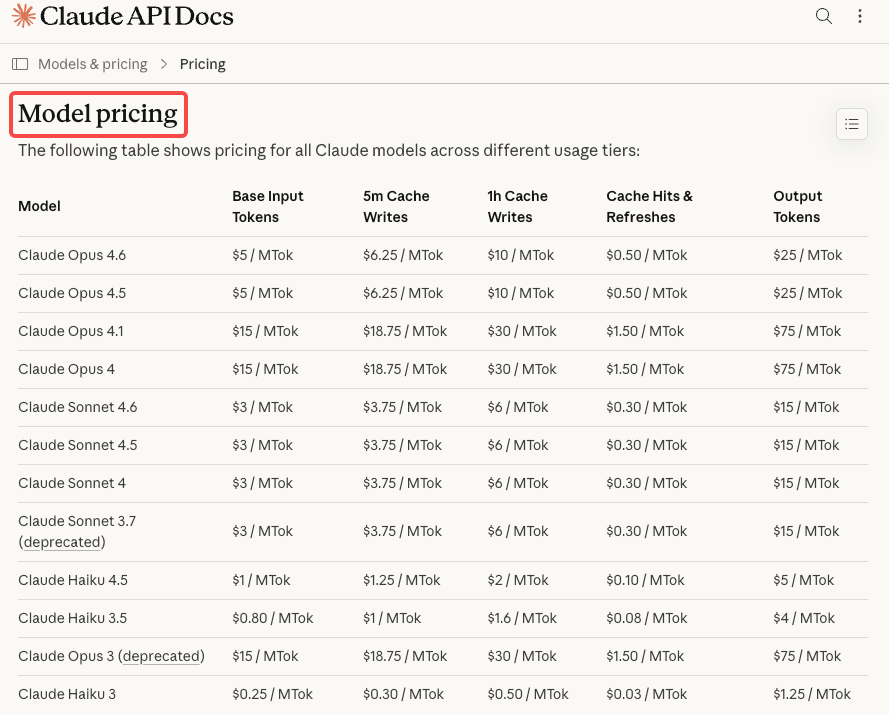

If you route through Anthropic’s API to get Claude-level quality, you pay full API rates: Sonnet 4.6 at $3 input / $15 output per million tokens, Opus 4.6 at $5 input / $25 output per million tokens. There is no subscription tier benefit — you’re on pure pay-as-you-go.
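To see what pure pay-as-you-go means for a single request, here is a minimal Python sketch that prices one call from its token counts. The rates are the per-million-token figures quoted above. The function name `request_cost` and the example token counts are illustrative assumptions. Nothing here is an API that claw-code or Anthropic ships.

```python
# Per-million-token rates (USD) as quoted in this article; check Anthropic's
# current pricing before relying on them.
RATES_PER_MTOK = {
    "sonnet-4.6": {"input": 3.00, "output": 15.00},
    "opus-4.6": {"input": 5.00, "output": 25.00},
}


def request_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Return the USD cost of one API call under pay-as-you-go pricing."""
    rates = RATES_PER_MTOK[model]
    return (
        input_tokens * rates["input"] + output_tokens * rates["output"]
    ) / 1_000_000


# Hypothetical agent turn: a 40K-token context read plus a 2K-token reply.
for model in RATES_PER_MTOK:
    print(model, round(request_cost(model, 40_000, 2_000), 4))
```

At these illustrative sizes, one turn costs about $0.15 on Sonnet 4.6 and $0.25 on Opus 4.6. Agent loops that resend a large context on every turn multiply that figure quickly.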

If you route to a cheaper provider to reduce costs, the capability gap is real. Gemini 3.1 Pro at $2/$12 per million tokens is the closest competitor on coding benchmarks. Even so, Claude Opus 4.6 leads SWE-bench Verified at 80.8%, and the quality difference is measurable on complex multi-file tasks.

Beyond API costs, add: infrastructure if you self-host or run CI/CD pipelines through claw-code; developer time to install, configure, and maintain an experimental codebase; and the cost of debugging issues in a project that explicitly tracks parity gaps with the original via its built-in parity_audit.py. That last point matters — you’re maintaining a tool that is, by design, not yet feature-complete.

Claude Code Pricing in 2026

Subscription Tiers and Token Access

Claude Code pricing works through two separate paths. Subscription plans (Pro at $20/month, Max at $100–200/month) charge a flat monthly price, and usage is capped by rate limits rather than billed per token. API pay-as-you-go charges per token, starting at $3 per million input tokens for Sonnet 4.6.

One critical billing detail that trips teams up: if you have an ANTHROPIC_API_KEY environment variable set on your system, Claude Code will use that API key for authentication instead of your Claude subscription. The result is API usage charges rather than your subscription’s included usage. Developers who think they’re on a flat subscription plan can end up silently billing a pay-as-you-go API account instead.
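One way to catch this before it shows up on an invoice is to check for the variable at the start of a session. The sketch below only inspects the environment and applies the precedence rule described above. The helper name `billing_mode` and the warning text are illustrative assumptions. Treating an empty or whitespace-only value as unset is also an assumption. The authoritative answer is whatever Claude Code itself reports for the active login.

```python
import os
from typing import Mapping, Optional


def billing_mode(env: Optional[Mapping[str, str]] = None) -> str:
    """Guess which billing path Claude Code would use, per the rule above:
    a non-empty ANTHROPIC_API_KEY takes precedence over the subscription login."""
    env = os.environ if env is None else env
    # Assumption: an empty or whitespace-only value is treated as unset.
    if env.get("ANTHROPIC_API_KEY", "").strip():
        return "api"  # usage is billed to the pay-as-you-go API account
    return "subscription"  # usage draws on the plan's included allowance


if __name__ == "__main__":
    if billing_mode() == "api":
        print("Warning: ANTHROPIC_API_KEY is set; usage will bill to the API account.")
    else:
        print("No ANTHROPIC_API_KEY found; subscription usage applies.")
```

Passing an explicit mapping instead of reading `os.environ` keeps the check easy to test and to reuse in a CI preflight step.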

Rate Limits and Usage Caps

Anthropic does not frame Pro and Max as clean public token contracts. The official pages describe usage as variable, shared between Claude chat and Claude Code, and sensitive to message length, codebase size, conversation length, model choice, and current capacity.

In practice: Pro plan users typically have access to approximately 44,000 tokens per 5-hour period, which translates to roughly 10–40 prompts depending on the complexity of the codebase. Agent Teams sessions burn quota roughly 3x faster per agent spawned, which makes the Max 5x tier a near-requirement for anyone running multi-agent workflows.
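The arithmetic behind those figures is simple enough to sketch. Dividing the approximate 44,000-token budget by 10–40 prompts implies roughly 1,100–4,400 tokens per prompt. The function below treats the Agent Teams effect as a flat 3x burn multiplier for each spawned agent, so the effective cost per prompt scales linearly with agent count. That linear model and the name `prompts_per_window` are simplifying assumptions. Anthropic does not publish an exact formula.

```python
import math

# Approximate Pro budget per 5-hour window (reported figure, not a contract).
PRO_WINDOW_TOKENS = 44_000


def prompts_per_window(tokens_per_prompt: int, agents: int = 0,
                       agent_burn: float = 3.0,
                       budget: int = PRO_WINDOW_TOKENS) -> int:
    """Estimate how many prompts fit in one 5-hour window.

    agents=0 models a plain single-session workflow. For Agent Teams, each
    spawned agent is assumed to burn quota `agent_burn` times faster, so the
    effective cost per prompt grows linearly with the agent count.
    """
    multiplier = agent_burn * agents if agents > 0 else 1.0
    return math.floor(budget / (tokens_per_prompt * multiplier))


print(prompts_per_window(4_400))            # heavy prompts, single session
print(prompts_per_window(1_100))            # light prompts, single session
print(prompts_per_window(1_100, agents=3))  # same light prompts, 3-agent team
```

Under this assumed model, the 3-agent team uses the same 44,000 tokens nine times faster, so a 40-prompt window shrinks to 4 prompts. This illustrates why multi-agent work pushes users toward Max.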

Total Cost Comparison: claw-code vs. Claude Code for a Typical Dev Team

Solo Developer

Claude Code (Pro): $20/month flat. Handles focused daily sessions without hitting limits if you’re not running Agent Teams continuously. Clean billing, no infrastructure to manage. Annual: ~$240.

claw-code (via Anthropic API, Sonnet 4.6): According to Anthropic’s own data from March 2026, the average developer spends approximately $6 per day on Claude Code. At API rates, that’s $180/month, nine times the Pro subscription. Even light users (~1–2 hours/day, simpler tasks) might spend $50–80/month via the API, which is still more than Pro’s $20.

Verdict for solo devs: Claude Code Pro wins on cost at every usage level quoted here, as long as its rate limits cover your work. Pay-as-you-go only comes out ahead if your API spend stays under $20/month (about $0.67/day). claw-code’s provider flexibility becomes relevant only if you’re willing to route to a cheaper model (Gemini, Sonnet via OpenRouter) and accept quality trade-offs on complex tasks.
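The solo comparison reduces to one break-even figure: the daily API spend at which pay-as-you-go costs the same as a flat plan. This sketch uses the same 30-day month behind the $6/day to $180/month figure, along with the plan prices quoted above. The function names are illustrative. The sketch also ignores rate limits, which in practice may push heavy Pro users onto Max.

```python
DAYS_PER_MONTH = 30  # same convention as the $6/day -> $180/month figure


def monthly_api_cost(daily_spend: float, days: int = DAYS_PER_MONTH) -> float:
    """Monthly pay-as-you-go cost for a given average daily API spend (USD)."""
    return daily_spend * days


def break_even_daily_spend(plan_price: float, days: int = DAYS_PER_MONTH) -> float:
    """Daily API spend at which pay-as-you-go costs exactly the flat plan price."""
    return plan_price / days


for plan, price in {"Pro": 20, "Max ($100)": 100, "Max ($200)": 200}.items():
    print(f"{plan}: API is cheaper below ${break_even_daily_spend(price):.2f}/day")

print(f"Average developer ($6/day): ${monthly_api_cost(6):.0f}/month via API")
```

By this arithmetic, the $6/day average developer pays more via the API than on Pro or the $100 Max tier, and only slightly less than on the $200 Max tier.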

Small Team (2–5 Devs)

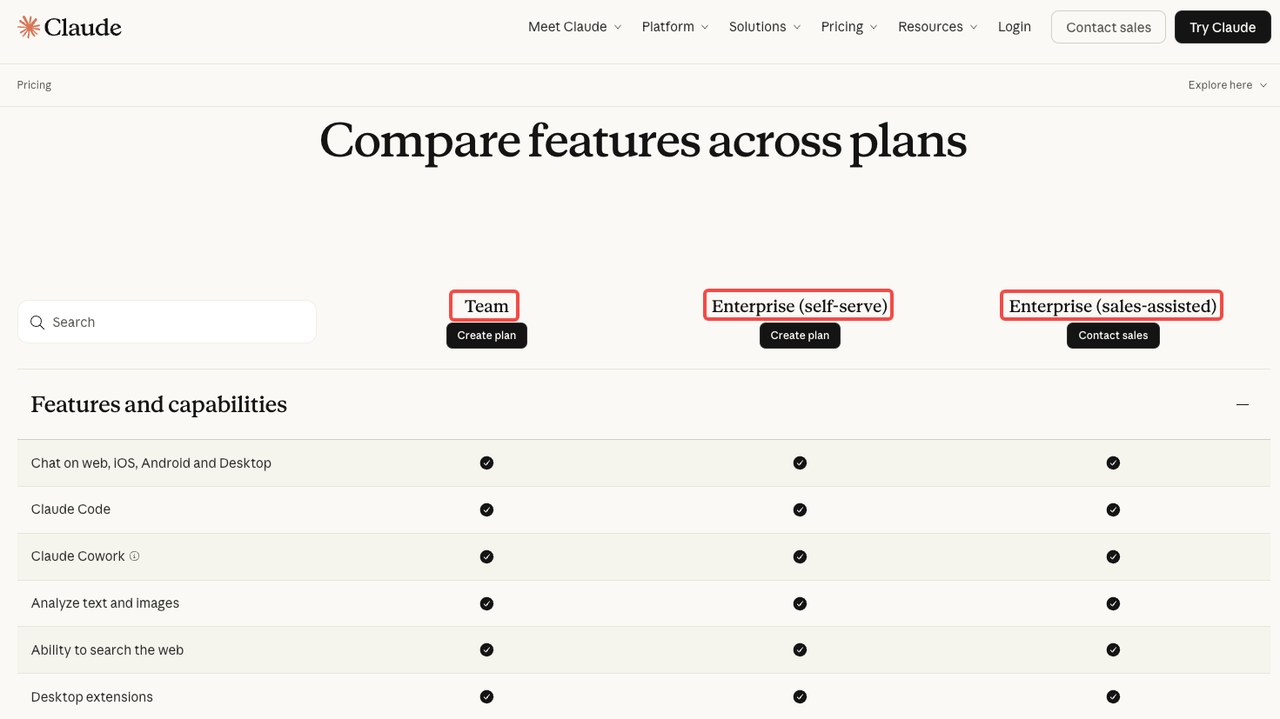

Claude Code (Team Premium): $100/seat/month with a minimum of 5 seats, so the minimum monthly bill is $500 even for a 2–4 person team. Includes shared context, centralized billing, SSO, and Claude Code access for all seats.

claw-code (via API, 3 devs, Sonnet 4.6): At ~$6/day/developer in API costs, 3 developers run ~$540/month. At 5 developers: ~$900/month. Claude Code’s average team cost runs $100–200/developer per month with Sonnet 4.6, with large variance depending on usage intensity.

Hidden claw-code overhead for small teams: Someone on the team owns maintenance, keeps up with the parity gaps documented in parity_audit.py, handles integration issues as the Rust rewrite evolves, and manages provider key rotation. If that’s 2–4 hours/month at a $150/hour loaded engineer cost, add $300–600 to the monthly TCO.
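The small-team comparison can be sketched the same way, with the 5-seat minimum and the maintenance overhead made explicit. All inputs come from the figures above: $6/day per developer, a 30-day month, 2–4 maintenance hours at $150/hour, and $100/seat with a 5-seat minimum. The function names are illustrative. Treating maintenance as a flat monthly add-on is an assumption of this sketch.

```python
TEAM_SEAT_PRICE = 100     # Claude Code Team Premium, USD per seat per month
TEAM_MIN_SEATS = 5        # minimum billable seats
API_DAILY_PER_DEV = 6.0   # average API spend per developer per day (USD)
DAYS_PER_MONTH = 30
ENGINEER_HOURLY = 150     # loaded engineer cost (USD/hour)


def claude_code_team_monthly(devs: int) -> float:
    """Team Premium bill: one seat per dev, but at least 5 seats are billed."""
    return TEAM_SEAT_PRICE * max(devs, TEAM_MIN_SEATS)


def claw_code_monthly(devs: int, maintenance_hours: float) -> float:
    """claw-code TCO: API spend for all devs plus in-house maintenance time."""
    api = API_DAILY_PER_DEV * DAYS_PER_MONTH * devs
    return api + maintenance_hours * ENGINEER_HOURLY


for devs in (3, 5):
    low, high = claw_code_monthly(devs, 2), claw_code_monthly(devs, 4)
    print(f"{devs} devs: Claude Code ${claude_code_team_monthly(devs):.0f} "
          f"vs claw-code ${low:.0f}-${high:.0f}")
```

The seat floor is why raw claw-code API spend ($540) looks close to Claude Code ($500) at 3 developers. Once maintenance time is counted, the gap widens at both team sizes.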

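Verdict for small teams: Raw API costs are comparable, since ~$180/developer via the API sits inside Claude Code’s typical $100–200/developer range, though above the flat $100/seat Team Premium price. On top of that, claw-code carries real maintenance overhead. The multi-provider flexibility is the genuine advantage: routing some tasks to Gemini or local models can cut costs 40–60% on high-frequency, simpler requests.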

Enterprise Evaluation

For enterprises, the legal uncertainty around claw-code is the first line item in the risk register, not a footnote. The legal question of whether a codegen clean-room rebuild constitutes copyright infringement has never been challenged in court. For any company that runs legal review on third-party software (which is most enterprises), claw-code goes on the “requires legal opinion” list before deployment.

Claude Code Enterprise pricing is custom via Anthropic sales, and includes HIPAA readiness, SSO, audit logging, 500K context windows, and compliance APIs. There is no equivalent offering from claw-code — it is a community-maintained harness, not a commercially supported product.

Hidden Costs and Risks

Maintenance Burden of Self-Hosting an Experimental Project

The claw-code Python workspace is not yet a full runtime-equivalent replacement for the original TypeScript system — the Python tree still contains fewer executable runtime slices than the archived source. The Rust rewrite is in progress with no committed timeline. Both facts mean you’re not adopting a stable product — you’re adopting a moving target that requires ongoing attention.

When Claude Code ships a new feature (Agent Teams improvements, new tool integrations, CLAUDE.md changes), claw-code will lag. Your team either waits, contributes upstream, or patches locally. Each of those options has a cost.

For a team that has a dedicated platform engineer who finds harness engineering interesting, this is manageable. For a product team that just wants coding agents to work, it’s a tax on every sprint.

Legal Uncertainty and Its Operational Implications

As of April 1, 2026, Anthropic had partially retracted its original DMCA notice, withdrawing it for all repositories except the original leaked-source clones and 96 specifically listed fork URLs. The clean-room rewrite, claw-code itself, was not targeted. That’s the current state of play.

But “not currently targeted” is not the same as “legally cleared.” If Anthropic decides to pursue action against clean-room rewrites in the future, teams that have built workflows around claw-code face migration costs. The probability is speculative; the cost of migrating a bespoke agent harness mid-project is not.

For teams in regulated industries, financial services, or healthcare: the absence of a commercial support contract, SLA, and official security advisory process is itself a procurement blocker, separate from the copyright question entirely.

FAQ

Is claw-code completely free to use?

The software itself has no license fee. Your actual cost is: Anthropic or other LLM API calls (typically $50–$180+/dev/month at moderate usage), infrastructure if you self-host, and engineering time for maintenance. “Free” applies only to the harness software.

Do I still need an Anthropic API key to use claw-code?

Only if you want Claude models. claw-code supports multiple LLM providers, so you can run it against OpenAI, Gemini, or local models via Ollama with no Anthropic key required. The trade-off is model capability: Opus 4.6 leads on complex coding tasks, and that lead is measurable in production.

Can claw-code connect to other model providers to reduce API costs?

Yes — this is the strongest cost argument for claw-code over Claude Code. You can route simpler high-frequency tasks to Gemini 3.1 Flash ($0.50/$3 per MTok) or GPT-5 Mini ($0.25/$2 per MTok), and reserve Opus calls for complex reasoning tasks. A well-configured hybrid routing strategy can cut API spend 40–60% compared to routing everything through Opus. Claude Code is locked to Anthropic models and cannot do this natively — though enterprise AI gateways like LiteLLM can add multi-provider routing on top of Claude Code.
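Whether you land inside the 40–60% band depends heavily on what share of traffic you can move to the cheaper tier. The sketch below computes a blended cost for a hypothetical router that sends "simple" requests to Gemini 3.1 Flash and everything else to Opus 4.6, using the prices quoted above. The routing rule, the monthly token volumes, and every function name are illustrative assumptions. claw-code’s actual provider configuration may look nothing like this.

```python
# Per-million-token prices (USD) as quoted in this article: (input, output).
PRICES = {
    "opus-4.6": (5.00, 25.00),
    "gemini-3.1-flash": (0.50, 3.00),
}


def cost(model: str, input_mtok: float, output_mtok: float) -> float:
    """USD cost for a volume of tokens, given in millions."""
    price_in, price_out = PRICES[model]
    return input_mtok * price_in + output_mtok * price_out


def route(is_simple: bool) -> str:
    """Hypothetical routing rule: cheap tier for simple work, Opus otherwise."""
    return "gemini-3.1-flash" if is_simple else "opus-4.6"


def hybrid_savings(input_mtok: float, output_mtok: float, simple_share: float) -> float:
    """Fraction saved versus sending every token to Opus 4.6."""
    baseline = cost("opus-4.6", input_mtok, output_mtok)
    hybrid = sum(
        cost(route(is_simple), input_mtok * share, output_mtok * share)
        for is_simple, share in ((True, simple_share), (False, 1 - simple_share))
    )
    return 1 - hybrid / baseline


# Hypothetical monthly workload: 10M input tokens, 2M output tokens.
for share in (0.5, 0.6):
    print(f"{share:.0%} routed to Flash: {hybrid_savings(10, 2, share):.1%} saved")
```

With this workload, routing half of the traffic to Flash saves about 45%, and routing 60% saves about 53%. Because Opus dominates the bill, the savings track almost entirely with how much traffic you can safely classify as simple.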

Does claw-code have rate limits?

The harness itself has no rate limits. The API providers you connect to do. Anthropic’s API has the same rate limits whether you call it via Claude Code or claw-code. If you’re on API pay-as-you-go, there are no 5-hour rolling windows — just provider-level request rate limits by tier.

Is there a hosted version of claw-code?

No confirmed hosted service exists as of April 2026. The claw-code project is self-hosted only. [Note: This may change as the ecosystem develops; verify at the official repository before making decisions based on this.] Claude Code, by contrast, is a commercially supported tool whose model inference runs on Anthropic’s managed infrastructure, so there is nothing to self-host beyond installing the CLI.
