Claude Code Agent Harness Architecture: Key Insights from the Leak

The Claude Code source exposure revealed how a production agentic coding agent is actually built. Key architectural patterns for builders.

I’ve been sitting with this one for a couple of days, trying to figure out what’s actually worth saying.

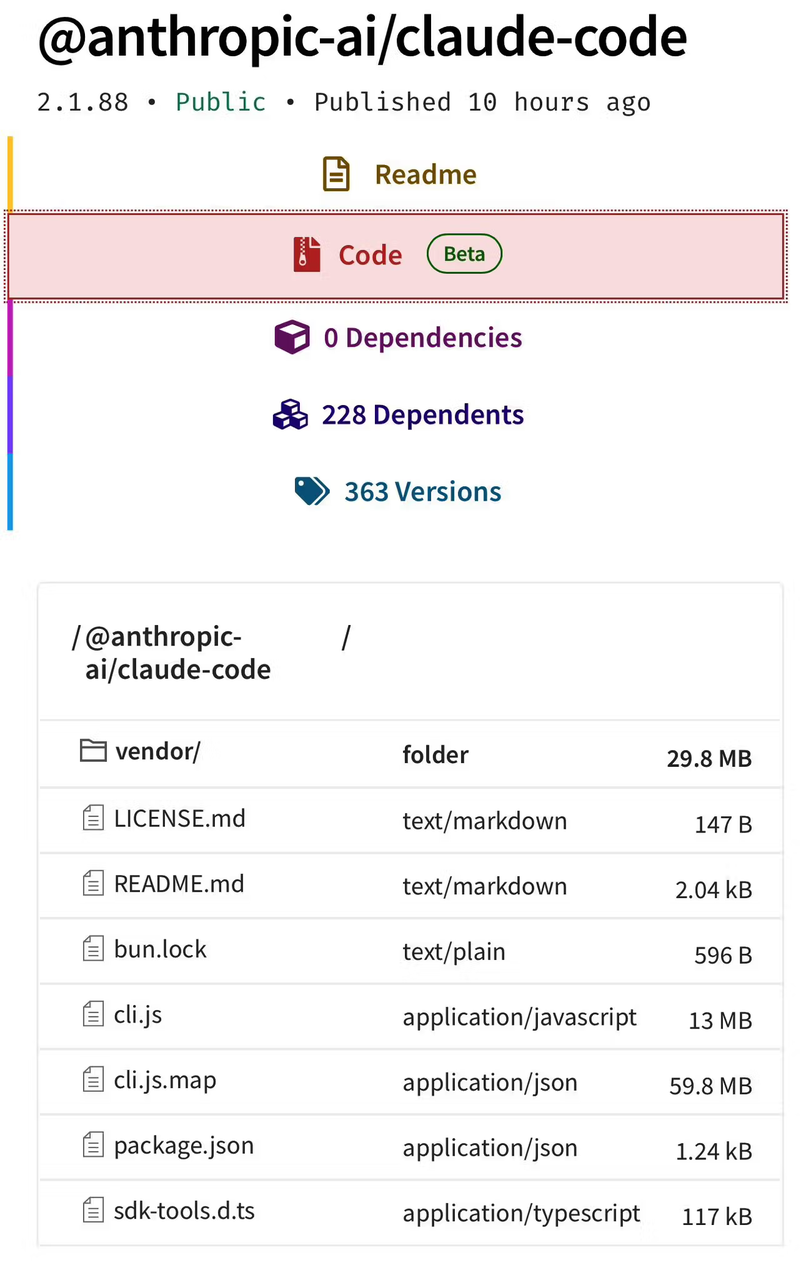

On March 31, 2026, a debug artifact — a source map file — got accidentally bundled into version 2.1.88 of @anthropic-ai/claude-code on npm. Within hours, roughly 512,000 lines of TypeScript were mirrored across GitHub and picked apart by thousands of developers. As Axios reported, Anthropic confirmed it quickly: a packaging error, no customer data exposed, measures incoming.

I’m Dora. What interested me wasn’t the drama. It was the engineering. Because what leaked — the “agentic harness” — is not the Claude model. It’s the scaffolding around it: how it uses tools, manages memory, coordinates subagents, enforces permissions. That’s the part most teams building similar systems get quietly wrong.

Legal note: The leaked code remains Anthropic’s intellectual property. Anthropic has issued DMCA takedowns. This article draws on public analyses and reporting only. If you downloaded or mirrored the source, consult legal counsel before incorporating anything into commercial work. The patterns discussed here are general engineering ideas — not reproductions of proprietary code.

What the Leak Revealed About Production Agent Harness Design

The first thing that caught me off guard was the scale. Claude Code is not a thin wrapper. The codebase spans roughly 1,900 files. QueryEngine.ts alone runs to approximately 46,000 lines. Tool.ts is around 29,000. This is serious software doing things that don’t show up in the UI.

The architecture organizes around three layers: an orchestration loop that manages planning and execution, a permission-gated tool system, and a multi-layer memory architecture. Each was doing things that most open-source harnesses skip.

The Core Orchestration Loop

QueryEngine.ts handles all LLM API calls — prompt construction, streaming, token counting, cost tracking, prompt caching, retry logic. It’s the brain. But the interesting part is what surrounds it.

The orchestration loop includes five distinct strategies for managing context pressure as sessions grow: time-based clearing of old tool results, conversation summarization, session memory extraction, full history summarization, and oldest-message truncation. These aren’t afterthoughts. The source suggests context management is treated as a first-class correctness concern — not something added when things start breaking in production.

This is worth thinking about for anyone building long-running agents. VentureBeat’s analysis called this solving “context entropy” — the tendency for agents to drift or hallucinate as sessions extend. The harness has multiple fallback layers for it.

Multi-Agent Coordination and Worktree Isolation

Claude Code supports three distinct execution models for subagents, as Superframeworks documented in their breakdown:

| Model | How it works | Practical use |

|---|---|---|

| Fork | Byte-identical copy of parent context, hits prompt cache | Parallel work where cache reuse makes parallelism essentially free |

| Teammate | Separate pane in tmux/iTerm, communicates via file-based mailbox | Loose coordination between independent workstreams |

| Worktree | Own git worktree, isolated branch per agent | Exploratory or risky work that shouldn’t touch main context |

The primary orchestrator assigns subtasks and spawns subagents that each run in their own context window with a restricted tool set. Subagents return only their output — not their full working context — to the orchestrator. That isolation is deliberate: risky work can’t contaminate the main agent’s state.

One detail worth noting: the orchestrator’s behavior is defined in natural language in the system prompt, not in branching code logic. Instructions like “Do not rubber-stamp weak work” and “Never hand off understanding to another worker.” Because it’s prompt-driven, you can update orchestration behavior without a redeploy. There are tradeoffs — harder to test formally — but the iteration speed is real.

Permission and Sandboxing Architecture

The tool system has approximately 40 discrete capabilities — file reads, bash execution, web fetches, LSP integration, subagent spawning — each permission-gated independently. The key design: the model decides what to attempt, but the tool system decides what is permitted. Those two things are architecturally separate.

Protected File Lists and Path Traversal Prevention

Every tool carries explicit permission requirements checked before execution. The approval sequence runs in three stages: trust establishment at project load time, a permission check before each tool runs, and explicit user confirmation for high-risk operations like file writes and bash commands. The order matters — CVE-2025-59828, patched earlier in 2025, was a vulnerability where code could execute before directory trust had been established. Trust sequencing is treated as a correctness concern, not just a security checkbox.

Read-only operations run concurrently. Mutating operations run serially to avoid conflicts. That’s a deliberate choice about when parallelism is safe.

Permission Explainer as a Separate LLM Call

The “Auto” permission mode runs a separate LLM classifier call on each proposed action to predict whether the user would approve it. It’s not the same inference that generated the action — it’s a second call specifically to evaluate the action before execution.

Three permission modes exist: Bypass (no checks — fast, dangerous), Allow Edits (auto-allows file edits in working directory), and Auto (the classifier approach). The cost implication of Auto is real. But separating “what should I do” reasoning from “is this allowed” reasoning matters whenever trust boundaries are meaningful.

Memory and Context Management Patterns

This is where I found myself reading the same analyses twice. The memory design doesn’t look like anything most developers would build by default.

Session Persistence and Transcript Storage

Every conversation is saved as JSONL and supports resumption via --continue or --resume. The session memory extracts task specs, file lists, errors, and workflow state across compactions. But the structure around it is what’s interesting — three layers:

- Layer 1 — MEMORY.md: A lightweight index of short pointers (~150 characters each), always loaded into context. A table of contents, not the book.

- Layer 2 — Topic files: Detailed project notes, pulled in on demand, not preloaded.

- Layer 3 — Raw transcripts: Accessed via search only, never loaded wholesale.

The agent is instructed to treat its own memory as a “hint” and verify against the actual codebase before acting. It doesn’t trust what it remembers. That’s a design pattern worth borrowing regardless of what stack you’re building on.

The Kairos Background Daemon and “Dreaming” Mechanism (Feature-Flagged, Not in Production)

Important: Kairos is feature-flagged and compiled to

falsein the external build. It did not ship in the public version of Claude Code. What follows describes what the source suggests about its design — not a live capability.

The flag KAIROS — named after the Ancient Greek concept of “at the right time” — appears over 150 times in the source. Based on the code, it describes a persistent daemon mode where the agent continues working while the user is idle.

A process called autoDream runs as a forked subagent during idle periods. It appears to merge observations, remove logical contradictions, and consolidate notes into cleaner facts. The use of a forked subagent for this maintenance work is a meaningful choice: background tidying runs separately so it can’t corrupt the main agent’s working context.

The concept isn’t unusual — sleep-based memory consolidation is a real thing. Implementing it safely in an always-on agent is a harder engineering problem. We’ll find out how it works in practice when it ships.

What This Means for Teams Building Their Own Agentic Tools

Patterns Worth Studying

Separate permission enforcement from model reasoning. The model deciding what to try and the system deciding what’s allowed should be different layers. A lot of agent harnesses conflate them.

Treat memory as a hint, not ground truth. Having the agent verify against live state before acting reduces a specific class of bugs that surfaces when long-running agents act on stale context.

Design for context pressure from the start. Having five fallback strategies for context overflow isn’t over-engineering if your sessions run longer than 20 minutes. Most harnesses add this while debugging a broken production session.

Prompt-defined orchestration behavior. If coordination logic lives in code, every behavioral tweak needs a deploy. If it lives in a system prompt, you can iterate without one. There are tradeoffs, but the flexibility is real.

What to Avoid Replicating

The scale of this codebase isn’t a feature to copy. QueryEngine.ts at 46,000 lines exists because it handles things most teams don’t need. Starting with that level of abstraction is a good way to build something unmaintainable.

The Bypass permission mode — no checks at all — exists for internal speed. For anything customer-facing or running with broad filesystem access, it’s the kind of shortcut that shows up in incident reports.

FAQ

Q: Is the leaked Claude Code architecture stable to build on?

No, and building on it directly creates legal exposure. The code is Anthropic’s intellectual property. The patterns — permission-gated tools, layered memory, subagent isolation — are general engineering ideas. Reference those, not the implementation.

Q: What is Kairos in Claude Code?

A feature-flagged background daemon mode, not yet shipped. Based on the source, it allows the agent to continue working during user idle time, with an autoDream process for memory consolidation. It was compiled out of the public release.

Q: Does claw-code replicate the full harness?

No. Open-source ports can replicate structural patterns, not the model-specific behavior or tuning. A Python reimplementation of the orchestration logic with a different model is a different product.

Q: What are the main architectural differences between Claude Code and other agentic tools?

The most notable are the three-tier memory system, the three subagent execution models with worktree isolation, and the architectural separation of permission enforcement from model reasoning. Most alternatives handle some of these. Claude Code‘s distinction is that all three are present and documented at production scale.

Q: Is the leaked information legally usable for building commercial tools?

Genuinely unclear — get actual legal counsel. Anthropic has issued DMCA takedowns. Some commentators have noted ambiguity given the DC Circuit’s March 2025 ruling on AI-generated code and copyright. “Legal ambiguity” is not “legally safe.”

The thing that’s stayed with me: the harness is the hard part. Everyone knew Claude was good. What the leak showed is how much careful engineering sits around it — five context management strategies, three subagent models, a permission system with its own LLM call, a memory architecture that actively distrusts itself.

Most teams building agentic tools aren’t at that scale. But the patterns make sense at smaller scales too. Whether your agent’s memory is a hint or a source of truth — that question applies whether you’re running 100 sessions or 100,000.

I’m still thinking about the Kairos design. An agent that tidies its own memory while you’re away, then shows up the next morning with a cleaner picture of where things left off. Whether it works as well as it sounds is something we’ll find out when it ships.

Previous Posts:

- Claude Code architecture Deep Dive: What the Leaked Source Reveals

- Build an AI Creative Pipeline with GLM-5 + WaveSpeed

- How to Use Seedance 2.0 via API: Async Jobs, Retries, and Result Handling

- How to Keep Character Consistency in Seedance 2.0 (Reference Pack + Rules)

- How to Fix Flicker, Jitter, and Temporal Artifacts in Seedance 2.0