Claude Mythos (Opus 5) Leaked: What We Know So Far

Anthropic's next-generation Claude Mythos model was revealed in a data leak. Here's what the leaked documents say about its capabilities in coding, reasoning, and cybersecurity — and what it means for AI.

Anthropic’s most powerful AI model yet was revealed not through a launch event, but through a data leak. A security researcher discovered that a misconfigured data store on Anthropic’s infrastructure had left nearly 3,000 internal files — draft blog posts, PDFs, and internal memos — publicly accessible without authentication. Anthropic quickly locked down access after being notified, but not before the documents spread across security forums and social media.


Here’s what we know, what’s still unconfirmed, and why it matters.

What Happened

In late March 2026, an independent security researcher identified a misconfigured Anthropic data store exposing sensitive internal documents. After responsible disclosure, Anthropic secured the endpoint within hours.

Anthropic has officially confirmed:

- The leak is real

- The model is named Claude Mythos, internally codenamed “Capybara”

- Training is complete; early access trials are underway with select partners

- A spokesperson described it as “a step change” in AI performance and “the most capable model we’ve built to date”

Anthropic has not confirmed benchmark scores, pricing, or a general availability date.

What Is Claude Mythos?

According to the leaked documents, Claude Mythos sits above the current Opus tier — positioned as an entirely new capability class, not a version increment. While the community has widely labeled it “Opus 5” or “Claude 5,” internal materials suggest Mythos is a distinct, higher tier.

Key claims from leaked materials (unconfirmed by Anthropic):

Coding and Reasoning

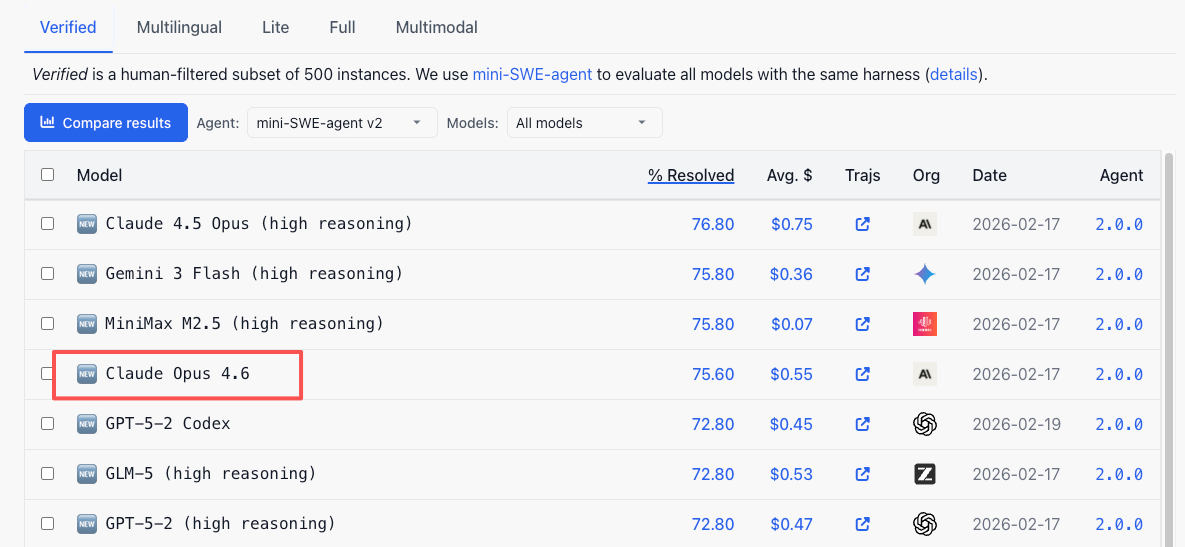

Mythos reportedly delivers significant performance gains over Claude Opus 4.6 across coding benchmarks and academic reasoning tasks. For context, Opus 4.6 currently leads publicly available models on SWE-bench Verified (~80.8%), Terminal-Bench 2.0, and Humanity’s Last Exam. If the leaked claims hold, Mythos would push these numbers meaningfully higher, though no specific figures have been published.

Cybersecurity Capabilities

This is the most consequential claim in the leak. Internal documents describe Mythos as “currently far ahead of any other AI model in cyber capabilities” — able to discover and exploit software vulnerabilities at speeds that substantially outpace human defenders.

The documents warn that Mythos “presages an upcoming wave of models that can exploit vulnerabilities in ways that far outpace the efforts of defenders.” It is a remarkably candid internal assessment. Security researchers working on AI-assisted vulnerability discovery, including the work documented in Google Project Zero’s research, have noted that LLM-assisted exploit generation is already an active area of concern at leading security organizations.

Chinese State-Sponsored Exploitation

The most explosive claim: Anthropic reportedly detected a coordinated campaign by a Chinese state-sponsored threat group using Claude Code to infiltrate approximately 30 organizations, including tech companies, financial institutions, and government agencies, and then shut it down. This incident appears to have directly shaped the cautious rollout strategy for Mythos.

Neither the threat group’s identity nor the full scope of the campaign has been independently verified.

Market Impact

The leak had immediate financial consequences. Concerns about AI-powered cyberattacks triggered a sell-off across U.S. software and cybersecurity stocks. Risk-off sentiment spread into crypto markets, with Bitcoin dropping to approximately $66,000. Japanese media reported extensively on the national security implications. Analysts at several institutions drew parallels to the market reaction following the Log4Shell disclosure in 2021 — another moment when a single vulnerability reshaped risk assessments across entire sectors.

What’s Still Unconfirmed

Despite the partial confirmation from Anthropic, several critical details remain unverified:

- Benchmark scores. No third-party evaluations have been published. All performance claims originate from internal documents, not independent testing.

- Pricing and availability. No information about API pricing, context window size, or general access timeline has been released.

- “Opus 5” naming. The community has widely adopted this label, but leaked documents position Mythos as a new tier above Opus — the final product name is unknown.

- UI sightings. Some users have reported seeing “Mythos 5 (experimental)” in the Claude interface. These could reflect limited A/B tests, internal testing artifacts, or fabricated screenshots. No widespread confirmation exists.

Anthropic’s Rollout Strategy

Based on the leaked documents and Anthropic’s public statements, the release will follow a deliberately staged approach consistent with the company’s Responsible Scaling Policy, which mandates enhanced evaluation and controlled deployment for models that cross defined capability thresholds.

- Cybersecurity partners first. Initial access is restricted to vetted security researchers and defenders — the goal is to build defensive tooling before offensive capabilities become broadly available.

- Staged expansion. Broader access will follow via the API and Claude Pro/Team/Enterprise plans.

- No public launch date. Anthropic has not committed to a timeline.

This mirrors Anthropic’s handling of previous high-risk releases and aligns with practices recommended by NIST’s AI Risk Management Framework, which advocates staged deployment with ongoing monitoring for dual-use AI systems.

What This Means

For developers: A model that meaningfully outperforms Opus 4.6 in coding would be a significant tool for software development, debugging, and agentic workflows. The open question is when it becomes available and at what cost.

For cybersecurity: If the leaked documents are accurate, AI-assisted vulnerability exploitation is no longer a theoretical risk — it is a near-term operational one. Defenders will need AI-powered tools to keep pace, a shift already anticipated by frameworks like MITRE ATLAS, which catalogs adversarial machine learning tactics against AI systems.

For AI safety: Anthropic publicly acknowledging that its own model poses risks to cyber defense is notable and unusual. The cautious rollout signals that the company takes its safety commitments seriously — but it also raises legitimate questions about whether any staged release can truly contain frontier capabilities once they are deployed at scale.

For the industry: If Mythos delivers on the claims in the leaked documents, it sets a new benchmark for frontier AI. Competitors will need to respond, and the cybersecurity implications will accelerate regulatory conversations already underway in the EU and at the U.S. federal level.

Frequently Asked Questions

Q: Is Claude Mythos the same as Claude 5 or Opus 5?

Not officially. Leaked documents position Mythos as a new tier above Opus, not a direct version successor. The final product name has not been confirmed by Anthropic.

Q: When will Claude Mythos be publicly available?

Anthropic has not announced a release date. Based on the leaked rollout strategy, general availability will follow an initial phase restricted to cybersecurity partners.

Q: Has Anthropic confirmed the cybersecurity capabilities described in the leak?

No. Anthropic confirmed the model’s existence and described it as a major capability advance, but has not verified specific claims about its cybersecurity performance.

Q: Is it safe to use Claude Mythos for security research?

Anthropic’s Responsible Scaling Policy requires that high-capability models undergo safety evaluations before broader deployment. The staged rollout — starting with trusted security partners — is designed to ensure appropriate safeguards are in place.

Q: How does Claude Mythos compare to GPT-4o or Gemini Ultra?

No verified third-party benchmarks are available yet. Any comparison at this stage is speculative.

Q: What should organizations do now to prepare for AI-assisted cyberattacks?

CISA’s guidance on AI and cybersecurity and MITRE ATLAS offer practical frameworks for assessing and mitigating AI-enabled threats. Organizations should review their threat models and invest in AI-assisted defensive tooling.

The Bottom Line

The Claude Mythos leak is real — Anthropic has confirmed the model exists and represents a significant capability advance. But benchmark scores, pricing, availability, and the full scope of its cybersecurity capabilities remain unverified beyond leaked internal documents.

For now: a confirmed next-generation model, internal documents suggesting unprecedented capabilities (especially in cybersecurity), a cautious staged rollout, and a great many open questions.

Claude Opus 4.6 and Sonnet 4.6 remain the most capable publicly available Claude models. We will update this article as Anthropic makes official announcements.