Uno AI transforms input images into new visuals guided by text prompts, blending reference images with your creative directions for precise, style-aware edits. It is available through a ready-to-use REST inference API with no cold starts and per-image pricing.


$0.05 per run · ~20 runs per $1

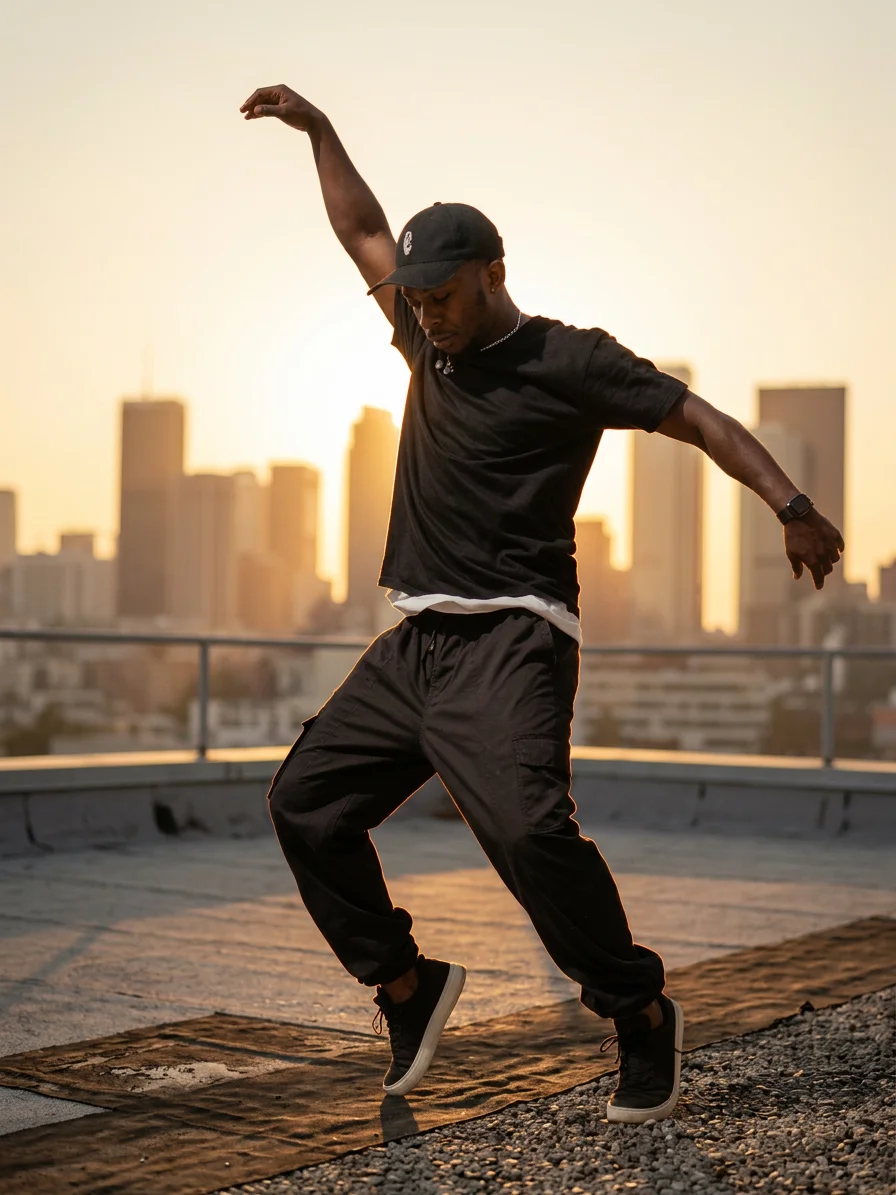


README

UNO – Universal In-Context Diffusion Transformer

UNO is a subject-driven image generation model from Research. It takes a small set of reference images plus a text prompt and synthesizes new scenes where the same subjects re-appear with high identity consistency and strong style control. It works for both single-subject and multi-subject prompts.

What UNO is good at

- Subject-consistent generation: Keep the same person, character, or product recognizable across new scenes and poses.

- Single → multi-subject scenes: Start from one subject or combine several references into a coherent group image.

- Layout & style control: Use the prompt and image_size to steer framing, setting, and visual mood while preserving identity.

- Flexible aspect ratios: Supports portrait, landscape, and square formats suitable for thumbnails, posts, key art, and ads.

Input Parameters

images (required)

1–5 reference images of your subject(s). These define identity, clothing, and overall look.

- Use multiple angles or expressions for better robustness.

- You can mix people, products, or characters, as long as the prompt makes their roles clear.

prompt (required)

Text description of the scene you want to generate, for example:

- “Santa Claus is standing in front of the Christmas tree.”

- “Two cartoon astronauts posing on the moon, product bottle in the center.”

UNO will combine the prompt with your references to place the subjects into the requested scene.

image_size

Controls aspect ratio and framing:

- square_hd – high-res square

- square – standard square

- portrait_4_3, portrait_16_9

- landscape_4_3, landscape_16_9

Choose based on where the image will be used (feed post, story, banner, thumbnail, etc.).
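One way to keep image_size choices consistent across a project is a small lookup from placement to size. The sketch below is illustrative only: the placement names and the specific pairings are assumptions, not recommendations from the model authors, and the helper name pick_image_size is hypothetical. Only the six image_size values come from the list above.

```python
# image_size values as listed in this README.
IMAGE_SIZES = {
    "square_hd", "square",
    "portrait_4_3", "portrait_16_9",
    "landscape_4_3", "landscape_16_9",
}

# Hypothetical placement-to-size pairings; adjust to your own channels.
PLACEMENT_TO_SIZE = {
    "feed_post": "square_hd",
    "story": "portrait_16_9",
    "banner": "landscape_16_9",
    "thumbnail": "landscape_4_3",
}


def pick_image_size(placement: str, default: str = "square_hd") -> str:
    """Return an image_size for a placement, falling back to a default."""
    size = PLACEMENT_TO_SIZE.get(placement, default)
    if size not in IMAGE_SIZES:
        raise ValueError(f"unsupported image_size: {size!r}")
    return size
```

For example, pick_image_size("story") returns "portrait_16_9", and an unknown placement falls back to "square_hd".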

seed

Randomness control:

- Empty / unset → a random seed each time.

- Any integer → reproducible output for the same settings.
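A good result from an unset seed can only be reproduced if the seed is known. The sketch below assumes you choose the seed on the client and record it yourself rather than leaving it empty. The helper name resolve_seed and the 32-bit range are illustrative assumptions, not documented limits of the model.

```python
import random
from typing import Optional


def resolve_seed(seed: Optional[int] = None) -> int:
    """Return the given seed, or draw a fresh one so it can be logged and reused."""
    if seed is None:
        # Assumed range: unsigned 32-bit integers.
        return random.randint(0, 2**32 - 1)
    return int(seed)


seed = resolve_seed()        # new random seed for exploration
fixed = resolve_seed(1234)   # fixed seed for a reproducible rerun
```

Storing the resolved seed alongside the prompt and references makes it possible to rerun the same settings later.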

num_images

Number of images to generate per run (e.g., 1–4). Higher values give more options at once.

num_inference_steps

Number of diffusion steps (e.g., around 20–30 by default):

- Fewer steps → faster, slightly less detailed.

- More steps → slower, more refined and stable.

guidance_scale

Classifier-free guidance strength:

- Lower values → more creative, looser interpretation of the prompt.

- Higher values → closer adherence to the prompt and reference identity.

output_format

File format of the generated images:

- jpeg

- png
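The parameters above can be collected into a single input object before a run. The following is a minimal sketch, assuming the request body uses the parameter names from this README as keys and that reference images are passed as URLs. The function name build_uno_input is hypothetical, and optional parameters that are left unset are omitted so that the service's own defaults apply.

```python
from typing import Any, Dict, Optional, Sequence

IMAGE_SIZES = (
    "square_hd", "square",
    "portrait_4_3", "portrait_16_9",
    "landscape_4_3", "landscape_16_9",
)
OUTPUT_FORMATS = ("jpeg", "png")


def build_uno_input(
    images: Sequence[str],
    prompt: str,
    image_size: Optional[str] = None,
    seed: Optional[int] = None,
    num_images: Optional[int] = None,
    num_inference_steps: Optional[int] = None,
    guidance_scale: Optional[float] = None,
    output_format: Optional[str] = None,
) -> Dict[str, Any]:
    """Validate the documented constraints and return a dict of inputs."""
    if not 1 <= len(images) <= 5:
        raise ValueError("images must contain 1 to 5 reference images")
    if not prompt.strip():
        raise ValueError("prompt is required")
    if image_size is not None and image_size not in IMAGE_SIZES:
        raise ValueError(f"unknown image_size: {image_size!r}")
    if output_format is not None and output_format not in OUTPUT_FORMATS:
        raise ValueError(f"unknown output_format: {output_format!r}")
    if num_images is not None and num_images < 1:
        raise ValueError("num_images must be at least 1")

    payload: Dict[str, Any] = {"images": list(images), "prompt": prompt}
    optional = {
        "image_size": image_size,
        "seed": seed,
        "num_images": num_images,
        "num_inference_steps": num_inference_steps,
        "guidance_scale": guidance_scale,
        "output_format": output_format,
    }
    # Only include values the caller actually set.
    payload.update({k: v for k, v in optional.items() if v is not None})
    return payload
```

A call such as build_uno_input(["https://example.com/santa.png"], "Santa Claus is standing in front of the Christmas tree.", image_size="portrait_4_3", output_format="png") yields a dict with exactly four keys.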

Designed For

- Character & IP creators – Keep mascots or VTuber avatars on-model across many scenes.

- Product & e-commerce teams – Generate consistent hero shots and lifestyle scenes for the same item.

- Brand & marketing – Multi-subject key art where specific people or products must stay recognizable.

- Concept artists – Rapidly explore compositions using a small library of reference looks.

How to Use

- Upload 1–5 images of your subject(s).

- Choose an image_size that matches your target placement (square, portrait, or landscape).

- Write a clear prompt describing the scene, style, and relationships between subjects.

- Optionally set seed, num_images, num_inference_steps, guidance_scale, and output_format.

- Run the model, review the generated images, and iterate by tweaking prompt or references to refine identity and style.
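The steps above might translate into a REST call along the following lines. This is a sketch under stated assumptions: the endpoint URL is a placeholder, the Bearer-token header is an assumed authentication scheme, and the JSON field names simply mirror the parameter names in this README. Check the provider's API reference for the real endpoint, authentication method, and response format.

```python
import json
import urllib.request

# Placeholder endpoint (assumption): replace with the URL from the API docs.
ENDPOINT = "https://api.example.com/v1/uno"


def make_uno_request(api_key, image_urls, prompt, image_size="square_hd", **options):
    """Build (but do not send) a POST request carrying the model inputs."""
    payload = {
        "images": list(image_urls),   # step 1: 1-5 reference images
        "image_size": image_size,     # step 2: target placement
        "prompt": prompt,             # step 3: scene description
    }
    payload.update(options)           # step 4: seed, num_images, and so on
    return urllib.request.Request(
        ENDPOINT,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",  # assumed auth scheme
        },
        method="POST",
    )


request = make_uno_request(
    "YOUR_API_KEY",
    ["https://example.com/santa.png"],
    "Santa Claus is standing in front of the Christmas tree.",
    image_size="portrait_4_3",
    seed=7,
    num_images=2,
)
# Step 5: sending is left out on purpose; urllib.request.urlopen(request)
# would perform the call once ENDPOINT and the key are real.
```

Keeping request construction separate from sending makes it easy to inspect the body while iterating on the prompt or references.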

Pricing

- Each image costs $0.05.

- The total price is $0.05 × num_images.
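The pricing rule is simple enough to check with a few lines of arithmetic. The sketch below uses Decimal to avoid floating-point rounding on currency; the function names are illustrative.

```python
from decimal import Decimal

PRICE_PER_IMAGE = Decimal("0.05")  # USD, from the pricing above


def total_price(num_images: int) -> Decimal:
    """Total cost of one run that generates num_images images."""
    if num_images < 1:
        raise ValueError("num_images must be at least 1")
    return PRICE_PER_IMAGE * num_images


def images_per_budget(budget_usd: str) -> int:
    """How many images a given budget covers."""
    return int(Decimal(budget_usd) // PRICE_PER_IMAGE)
```

For instance, a run with num_images set to 4 costs $0.20, and a $1 budget covers 20 images.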