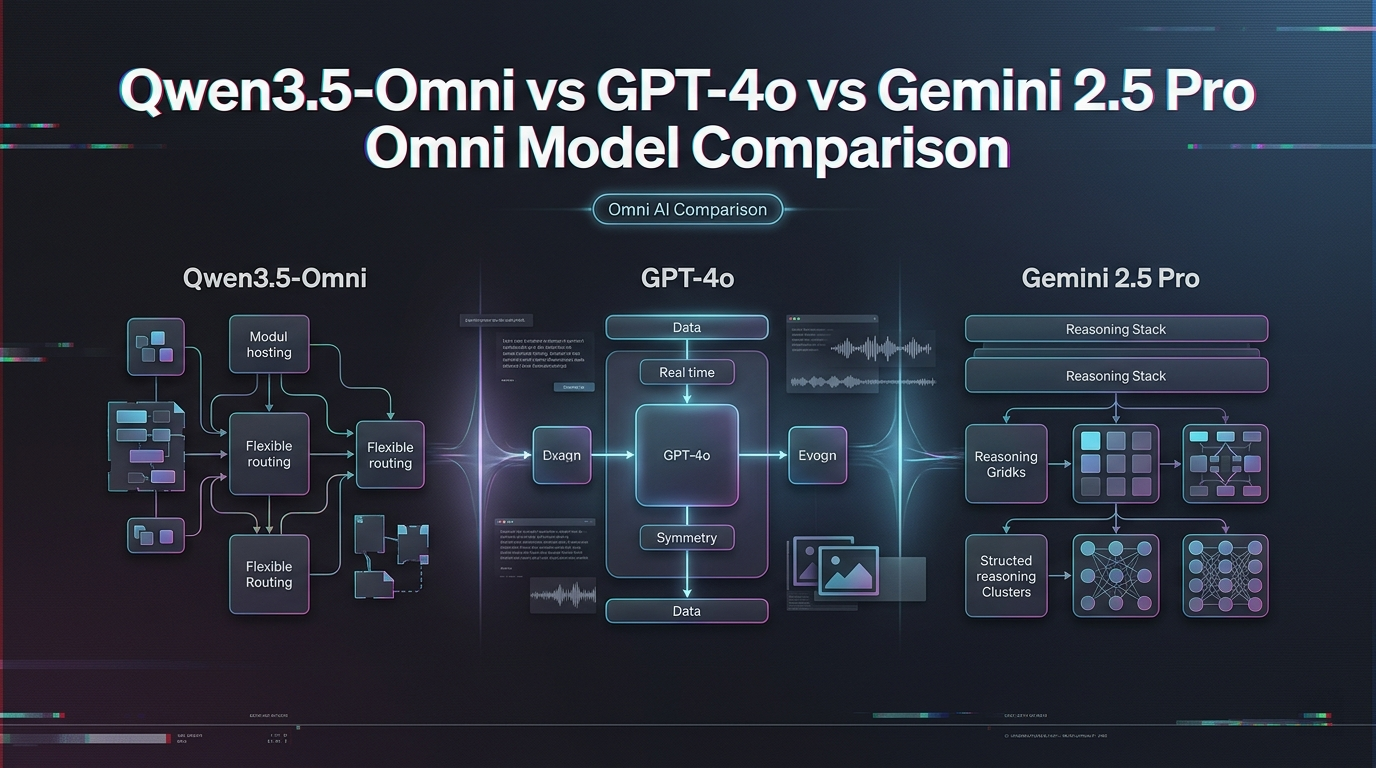

Qwen3.5-Omni vs GPT-4o vs Gemini 2.5 Pro: Omni Model Comparison

Qwen3.5-Omni vs GPT-4o and Gemini 2.5 Pro for builders: audio benchmarks, multilingual voice, API access, self-hosting, and pricing compared.

Hello everyone! This is Dora. As usual, I had a voice agent project spec sitting on the desk that needed a decision: which model family to build on. GPT-4o was the default everyone assumed. Gemini 2.5 Pro kept coming up for its context ceiling. And then Qwen3.5-Omni landed at the end of March, with claims that stopped me mid-scroll: 113 recognition languages, an open-weight path, tiered pricing, 256K context. I couldn’t just ignore it.

So I went deep. This isn’t a benchmark roundup but a decision guide: what each model actually offers, where the numbers hold up, and which one makes sense for your specific build.

How These Models Position Themselves

Qwen3.5-Omni: Open-Weight-First, Self-Hosting Viable, Multilingual Voice

Qwen3.5-Omni is Alibaba’s native omni-modal model — text, audio, image, and video in, text or real-time speech out, all in one inference call. It ships in three variants: Plus (30B-A3B MoE), Flash (lighter MoE, lower latency), and Light (smaller dense model, open weights on HuggingFace). The architecture is Thinker-Talker — the reasoning component and the speech synthesis component run as a split system, which enables streaming speech output before the full response is complete.

The clearest differentiation is self-hosting. Plus and Flash are accessible via DashScope API; the Light variant is open weights. If data residency, fine-tuning, or cost-at-scale are primary concerns, Qwen3.5-Omni is currently the only option in this comparison with a realistic self-hosting path. The model supports the OpenAI-compatible API format via DashScope, which lowers integration friction for teams already on the OpenAI SDK.

GPT-4o: Closed API, Tightly Integrated Toolchain, OpenAI Ecosystem

GPT-4o is OpenAI’s flagship multimodal model, available through the standard Chat Completions API and the Realtime API for speech-to-speech workloads. No self-hosting path exists — it’s fully closed. What GPT-4o trades in flexibility, it returns in ecosystem maturity: function calling, Assistants API, fine-tuning, Batch API, code interpreter, file search, and a developer toolchain that most teams already have integrated. If your stack already runs on OpenAI, switching costs are real.

Audio in GPT-4o is handled through two distinct paths: the Chat Completions API (gpt-4o-audio-preview, asynchronous) and the Realtime API (gpt-realtime, low-latency WebSocket). These are separate endpoints with meaningfully different pricing, which matters for voice agent architecture decisions.

Gemini 2.5 Pro: Google Infra, Multimodal-Native, Vertex AI Integration

Gemini 2.5 Pro is Google’s mid-tier flagship, designed for tasks requiring strong reasoning and multimodal understanding. It supports a 1 million token context window — the largest in this comparison by a factor of four — and is available through both the Gemini Developer API and Vertex AI. The Vertex path is the enterprise route: it integrates with Google Cloud IAM, data residency controls, and Workspace tooling, but it also introduces Vertex-specific pricing and lock-in considerations.

Audio input is supported; native real-time speech output is handled through the Live API (low-latency conversational) rather than the standard completions endpoint. For teams already on Google Cloud, the integration story is compelling. For teams not on Google Cloud, Vertex adds onboarding friction that the Gemini Developer API avoids.

Core Comparison Table

| Dimension | Qwen3.5-Omni (Plus) | GPT-4o | Gemini 2.5 Pro |
|---|---|---|---|
| Context window | 256K tokens | 128K tokens | 1M tokens |
| Audio input limit | ~10 hrs continuous | Limited by 128K context | ~11 hrs at 1M context |
| Speech output languages | 36 | ~6 (preset voices) | Limited (Live API) |
| Speech recognition languages | 113 | Whisper-based (~100) | Strong multilingual |
| Self-hosting | ✅ Viable (Light open weights; Plus/Flash via API) | ❌ Not available | ❌ Not available |
| Open weights | ✅ Light variant (HuggingFace) | ❌ | ❌ |
| Pricing model | Tiered by input token count per request | Flat per-token (audio priced separately) | Tiered by context length (>200K higher rate) |
| Text input pricing (per 1M) | Varies by tier; see DashScope | $2.50 | $1.25 (≤200K tokens) |
| Audio input pricing | Modality-specific; see DashScope | ~$100/1M tokens (Realtime: $32/1M) | ~$1.00/1M (Gemini 2.5 Flash rate for audio) |
| API compatibility | OpenAI-compatible (DashScope) | OpenAI native | OpenAI-compatible (partial) |
| Free quota | 1M tokens (International, 90 days) | None (trial credits only) | Generous free tier (Google AI Studio) |
| Vertex / enterprise integration | Alibaba Cloud only | Azure OpenAI / enterprise agreements | Native Google Cloud / Vertex AI |
| Release status | March 30, 2026 (very new) | GA, production-stable | GA, production-stable |

Pricing data: GPT-4o text from the OpenAI pricing page; Gemini 2.5 Pro from the Google AI Developer pricing page; Qwen3.5-Omni from DashScope pricing. Audio rates are approximate; always verify before cost modeling.

Audio and Voice Benchmarks: What They Mean for Builders

Where Qwen3.5-Omni-Plus Leads

Alibaba claims Qwen3.5-Omni-Plus achieved SOTA results on 215 audio and audio-visual subtasks, outperforming Gemini 3.1 Pro on general audio understanding, reasoning, recognition, and translation benchmarks. On multilingual ASR specifically, the jump from 19 languages (prior generation) to 113 is the headline metric that matters most for non-English-first teams.

On audio-video understanding — tasks like summarizing a video with ambient sound, answering questions about a recorded meeting, or captioning audio content — the model has dedicated architecture advantages: the Thinker processes all modalities together natively, rather than routing through separate encoder stacks.

Where GPT-4o and Gemini Maintain Advantages

GPT-4o’s advantage isn’t on raw audio benchmarks — it’s on ecosystem integration. Function calling in the Realtime API, Assistants API for persistent threads, fine-tuning on your domain data, and a developer toolchain that’s been production-tested at scale. If you’re building a voice agent that needs to call external APIs, manage conversation state, or integrate with existing OpenAI-based workflows, GPT-4o’s tooling maturity is a genuine differentiator.

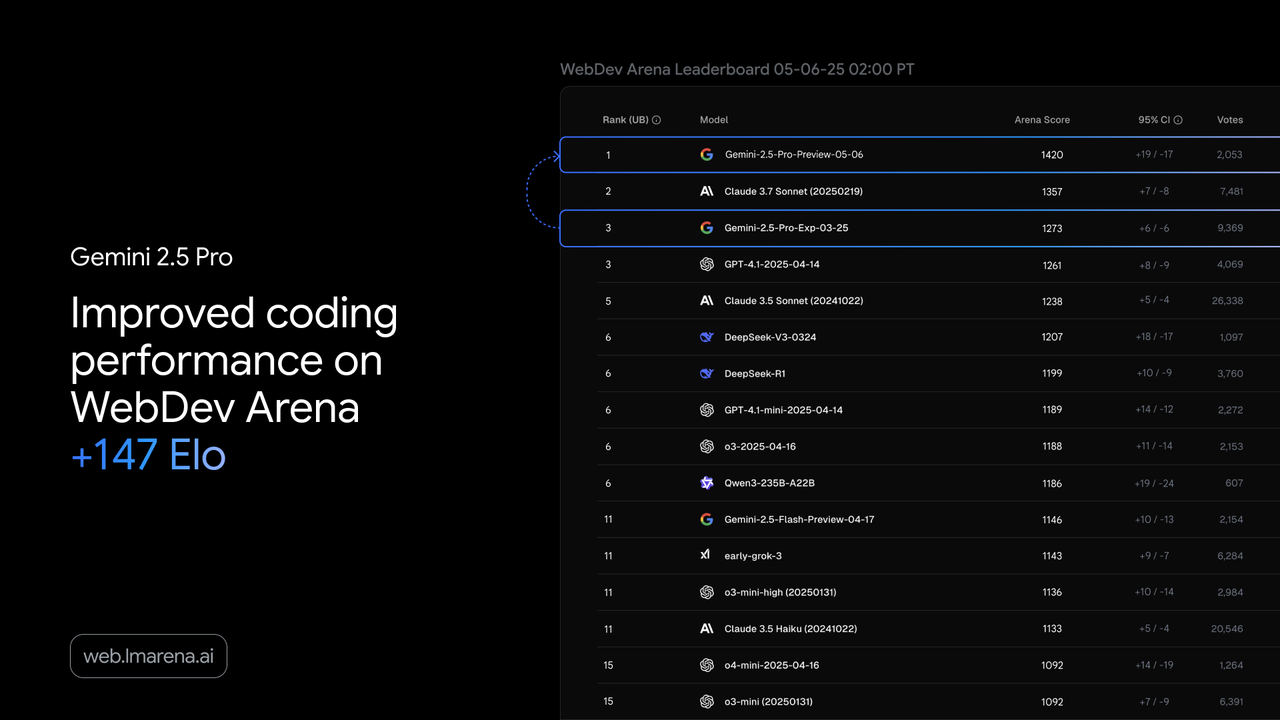

Gemini 2.5 Pro’s advantages are context and Google integration. For audio or video analysis tasks where you want to process hours of content in a single request without chunking, 1M tokens is the practical ceiling of this comparison. For teams on Google Cloud running Vertex AI pipelines, the integration is native and contractually familiar.

Benchmark Caveats: SOTA Counts vs. Real-World Deployment Gaps

The “215 SOTA results” figure deserves scrutiny before it shapes your decision. A few things to know about how this number is constructed:

First, SOTA counts aggregate across many subtasks — individual language pairs, specific audio genres, narrow benchmark categories. A model can claim hundreds of SOTAs while losing on the specific benchmark that matters most for your use case (say, your language, your domain vocabulary, your audio quality profile).

Second, Qwen3.5-Omni launched on March 30, 2026. Independent third-party evaluations don’t yet exist at the time of writing. The comparison figures cited by Alibaba were generated by the releasing team, using benchmarks the team selected. That’s not an accusation of dishonesty (it’s standard practice in model releases), but it’s the appropriate epistemic stance to hold until neutral evaluations appear.

Third, benchmark performance ≠ production performance. Accent coverage, rare vocabulary, background noise handling, domain-specific terminology, and real-world audio quality all affect production ASR quality in ways that curated benchmarks don’t capture. Test with your own audio samples before committing.
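
If you want a number to go with "test with your own audio samples," word error rate (WER) is the usual starting point. Here is a minimal sketch in plain Python, assuming you already have a human reference transcript and each model's transcript as strings. The function name is my own, and real evaluations usually normalize punctuation and numerals first, which this does not do.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """Word-level edit distance divided by the number of reference words."""
    ref = reference.lower().split()
    hyp = hypothesis.lower().split()
    if not ref:
        raise ValueError("reference transcript is empty")

    # prev[j] holds the edit distance between the reference words
    # processed so far and the first j hypothesis words.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            substitution = prev[j - 1] + (ref_word != hyp_word)
            deletion = prev[j] + 1
            insertion = curr[j - 1] + 1
            curr.append(min(substitution, deletion, insertion))
        prev = curr
    return prev[-1] / len(ref)
```

Run the same set of recordings through each candidate model, score every transcript against the same reference, and compare the averages per language or accent group rather than one global figure.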

Multilingual Voice Support

113 Recognition Languages vs. GPT-4o’s Whisper-Based Approach

GPT-4o’s audio recognition inherits from the Whisper architecture, which supports approximately 100 languages with varying quality across the range. The model performs strongly on high-resource languages (English, Spanish, French, Mandarin) and degrades on lower-resource languages and dialects. OpenAI doesn’t publish a per-language accuracy breakdown, which makes quality for less common languages hard to verify in advance.

Qwen3.5-Omni’s 113 languages claim is similar in scope, but includes explicit coverage of dialects within that count — a distinction that matters for South Asian, Southeast Asian, and African language coverage, where “a language” and “its dialects” can have meaningfully different ASR quality. As with any language count claim, test with real samples from your target speakers. Alibaba has a history of generous dialect counting; calibrate accordingly.

36 Speech Output Languages: Practical for Which Markets?

Speech output in 36 languages puts Qwen3.5-Omni ahead of GPT-4o’s current preset voice options (primarily English with a small set of additional languages) for non-English TTS. For product teams building voice agents targeting Latin America, Southeast Asia, or multilingual European markets, 36 output languages is a meaningful capability gap if the languages are covered and quality is adequate for your use case.

Gemini 2.5 Pro’s Live API also supports multilingual speech output, but the language coverage documentation is less explicit. Verify coverage for your target languages specifically before committing either Qwen or Gemini to a multilingual TTS use case.

Semantic Interruption and Voice Cloning: Differentiated or Table Stakes?

Qwen3.5-Omni introduces semantic interruption — the model attempts to distinguish between a user genuinely interjecting versus ambient background noise. This is a real UX improvement for voice agent deployments in noisy environments, but it’s increasingly an expected baseline rather than a differentiator. Test whether it works reliably in your acoustic environment before treating it as a decision driver.

Voice cloning (upload a voice sample, model responds in that voice) is available in Plus and Flash via the API. GPT-4o’s Realtime API supports custom voice via fine-tuning but doesn’t expose direct voice cloning in the same way. This is a genuine capability difference if voice persona consistency across long conversations is a product requirement.

API Access and Infrastructure Fit

DashScope vs. OpenAI API vs. Google Vertex: Integration Complexity

For teams already on OpenAI’s SDK, DashScope’s OpenAI-compatible endpoint is straightforward to point at:

```python
from openai import OpenAI

client = OpenAI(
    api_key="YOUR_DASHSCOPE_API_KEY",
    base_url="https://dashscope-intl.aliyuncs.com/compatible-mode/v1",
)

response = client.chat.completions.create(
    model="qwen3-omni-flash",  # or qwen3-omni-plus
    messages=[{"role": "user", "content": "Your message here"}],
)
```

For multimodal inputs (audio, video), you’ll use DashScope’s native multimodal endpoint, which has a slightly different request structure. The OpenAI compatibility applies primarily to text-completion paths. Verify which endpoints support which modalities before building your audio pipeline.

Google’s Vertex AI integration is the most complex of the three — it requires Google Cloud project setup, IAM configuration, and uses the Vertex SDK or the Gemini Developer API, which have different authentication flows and slightly different behavior. The payoff is enterprise-grade access controls, compliance documentation, and Google’s SLA framework.
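
Since all three providers expose some form of OpenAI-style endpoint, one way to keep your options open during evaluation is to put the per-provider differences in a small config table. The sketch below is my own layout, not anything the vendors prescribe. The environment variable names are hypothetical, and the Gemini base URL is the one I understand Google documents for its OpenAI compatibility layer, so verify it (and the model IDs) against current docs.

```python
import os
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Provider:
    base_url: Optional[str]  # None means "use the SDK default" (OpenAI itself)
    api_key_env: str         # hypothetical environment variable name
    model: str


PROVIDERS = {
    "qwen": Provider(
        base_url="https://dashscope-intl.aliyuncs.com/compatible-mode/v1",
        api_key_env="DASHSCOPE_API_KEY",
        model="qwen3-omni-flash",
    ),
    "openai": Provider(
        base_url=None,
        api_key_env="OPENAI_API_KEY",
        model="gpt-4o",
    ),
    "gemini": Provider(
        # Assumption: Google's OpenAI-compatible endpoint; check before use.
        base_url="https://generativelanguage.googleapis.com/v1beta/openai/",
        api_key_env="GEMINI_API_KEY",
        model="gemini-2.5-pro",
    ),
}


def client_kwargs(name: str) -> dict:
    """Build keyword arguments for the OpenAI SDK client constructor."""
    provider = PROVIDERS[name]
    kwargs = {"api_key": os.environ.get(provider.api_key_env, "")}
    if provider.base_url is not None:
        kwargs["base_url"] = provider.base_url
    return kwargs
```

With the OpenAI SDK installed, `OpenAI(**client_kwargs("qwen"))` gives you a client pointed at DashScope. This only covers the text path; audio and video requests may still need provider-specific code.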

Self-Hosting: Only Qwen3.5-Omni Offers a Realistic Path

This is the most structurally significant difference in this comparison. GPT-4o and Gemini 2.5 Pro are closed-weight models — there is no self-hosting path, full stop. If your use case requires data to never leave your own infrastructure (certain healthcare, financial, or defense contexts), or if you need to fine-tune on proprietary audio data at the model level, only Qwen3.5-Omni gives you a route.

The Light variant is open weights on HuggingFace. Plus and Flash are API-only as of March 31, 2026 — open weights for these variants have not been confirmed as publicly released at time of writing. If Plus-level quality with full self-hosting is your requirement, verify the current open-weight status before planning your architecture around it.

For self-hosting requirements, the vLLM deployment documentation and the Qwen team’s official GitHub are the authoritative references for setup.

Data Residency and Endpoint Geography

For non-China teams, DashScope’s International (Singapore) endpoint is the default. The US (Virginia) endpoint is available but has no free quota. Before routing production traffic there, confirm that it supports multimodal (audio/video) input for Omni models specifically.

Pricing Structure Comparison

Input Token Tiers vs. Flat Per-Token Pricing

The fundamental pricing architecture differs across all three providers:

Qwen3.5-Omni (DashScope): Tiered pricing based on the input token count of the current request. Crossing a tier boundary within a single request bumps the entire request’s input rate — not just the tokens above the threshold. This means a 35K-token audio clip and a 5K-token text query are priced at different per-token rates, even if your monthly volume is identical. Short requests are cheap; long-context audio requests get expensive faster than a flat-rate model would suggest.
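
To make the "whole request moves to the higher rate" behavior concrete, here is a small sketch. The tier boundaries and rates are placeholders I made up for illustration; they are NOT DashScope's published prices, so swap in the real table before using this for anything.

```python
# Hypothetical tiers: (max input tokens per request, USD per 1M input tokens).
# Placeholder values for illustration only, not DashScope's actual rates.
HYPOTHETICAL_TIERS = [
    (32_000, 0.50),
    (128_000, 1.00),
    (256_000, 2.00),
]


def input_cost_usd(input_tokens: int, tiers=HYPOTHETICAL_TIERS) -> float:
    """Price the entire request at the rate of the tier its size falls into."""
    for upper_bound, rate_per_million in tiers:
        if input_tokens <= upper_bound:
            return input_tokens / 1_000_000 * rate_per_million
    raise ValueError("request exceeds the largest tier")


small_request = input_cost_usd(5_000)    # priced at the first tier's rate
large_request = input_cost_usd(35_000)   # whole request priced at the second tier's rate
just_under = input_cost_usd(32_000)
just_over = input_cost_usd(32_001)       # one extra token reprices all 32,001 tokens
```

The jump between `just_under` and `just_over` is the thing to watch: with whole-request tiering, chunking audio so that requests stay below a boundary can matter more than total monthly volume.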

GPT-4o: Flat per-token pricing for text ($2.50 input / $10.00 output per 1M tokens). Audio is a separate line item entirely: the Chat Completions audio path runs ~$100/1M audio input tokens; the Realtime API (gpt-realtime) runs $32/1M audio input and $64/1M audio output after a recent 20% price reduction. Text tokens in the Realtime API are $4.00 input / $16.00 output — significantly higher than the standard Chat Completions rate.

Gemini 2.5 Pro: Tiered by context length, but the structure is simpler: the standard rate ($1.25 input / $10.00 output per 1M tokens) applies to prompts ≤200K tokens, and the input rate doubles for prompts >200K tokens. Audio input is priced at a premium over text (approximately 3x for the Flash tier); verify Pro audio rates in the Google AI Developer pricing docs. Batch mode cuts rates by 50% for async workloads.
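
For Gemini, the threshold logic is easy to sketch. This uses only the text input figures quoted above (the $1.25 standard rate, the 2x multiplier past 200K, and the 50% batch discount). It deliberately ignores output tokens and audio rates, and the function name is mine.

```python
STANDARD_INPUT_RATE = 1.25        # USD per 1M input tokens, prompts <= 200K
LONG_CONTEXT_THRESHOLD = 200_000  # tokens
LONG_CONTEXT_MULTIPLIER = 2.0     # applied to the input rate above the threshold
BATCH_DISCOUNT = 0.5              # batch mode halves the rate


def gemini_input_cost_usd(prompt_tokens: int, batch: bool = False) -> float:
    """Estimate text input cost for one Gemini 2.5 Pro request."""
    rate = STANDARD_INPUT_RATE
    if prompt_tokens > LONG_CONTEXT_THRESHOLD:
        rate *= LONG_CONTEXT_MULTIPLIER
    if batch:
        rate *= BATCH_DISCOUNT
    return prompt_tokens / 1_000_000 * rate
```

Calling it with 200,000 and 200,001 tokens shows the same cliff as any whole-request tier: the second request costs roughly twice the first.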

Cost at Scale: High-Volume Voice / Audio Workloads

For a concrete comparison, consider a workload of 100,000 minutes of audio input per month — roughly a mid-scale transcription or voice agent operation:

- At ~427 tokens/minute of audio (based on Qwen’s published context math), that’s ~42.7M audio input tokens/month

- GPT-4o Realtime at $32/1M audio input: ~$1,366/month just for audio input, before text input/output costs

- Gemini 2.5 Pro audio (at ~$1.00/1M, the Flash-tier audio rate; Pro may differ): ~$43/month if within the standard context range. Verify Pro audio rates before relying on this

- Qwen3.5-Omni: Cost depends entirely on how the audio is batched into requests; each request that crosses a tier boundary pays the higher rate for the whole request. Cannot give a flat number without knowing your request size distribution
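
The arithmetic behind the flat-rate lines is short enough to script, which makes it easy to rerun with your own volume. A rough sketch: it applies the 427 tokens/minute figure to every provider, which is a simplification, since each vendor tokenizes audio at its own rate.

```python
MINUTES_PER_MONTH = 100_000
TOKENS_PER_AUDIO_MINUTE = 427  # derived from Qwen's context math; other providers differ


def monthly_audio_input_cost(rate_per_million_usd: float) -> float:
    """Monthly audio input cost in USD at a flat per-1M-token rate."""
    tokens = MINUTES_PER_MONTH * TOKENS_PER_AUDIO_MINUTE
    return tokens / 1_000_000 * rate_per_million_usd


monthly_tokens = MINUTES_PER_MONTH * TOKENS_PER_AUDIO_MINUTE
realtime_cost = monthly_audio_input_cost(32.00)   # GPT-4o Realtime audio input rate
flash_rate_cost = monthly_audio_input_cost(1.00)  # Gemini at the ~$1.00/1M Flash audio rate

print(f"{monthly_tokens:,} tokens/month")
print(f"Realtime audio input: ${realtime_cost:,.2f}")
print(f"Gemini at Flash audio rate: ${flash_rate_cost:,.2f}")
```

Swap in your real minutes and each provider's own tokens-per-minute figure before treating the output as a budget.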

At very high volume with predictable request sizes, self-hosting the Light variant (the only one with open weights at the time of writing) becomes worth calculating. If a single H100 80GB can serve your traffic, the GPU hourly rate can undercut API costs past a certain monthly volume; measure throughput on your own workload before relying on that.
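
"Past a certain monthly volume" is a break-even calculation, and a back-of-the-envelope version looks like this. Every input here is an assumption you supply: the GPU hourly price and the API rate in the example are made-up placeholders, and the sketch ignores engineering time, idle capacity, and whether one GPU can actually sustain the throughput.

```python
def breakeven_tokens_per_month(
    gpu_hourly_usd: float,
    gpus: int,
    api_rate_per_million_usd: float,
    hours_per_month: float = 730,
) -> float:
    """Monthly token volume at which fixed GPU spend equals API spend."""
    gpu_monthly_usd = gpu_hourly_usd * gpus * hours_per_month
    return gpu_monthly_usd / api_rate_per_million_usd * 1_000_000


# Placeholder inputs: a $2.50/hour GPU versus a $1.00 per 1M token API rate.
example_breakeven = breakeven_tokens_per_month(2.50, 1, 1.00)
```

Below the break-even volume the API is cheaper; above it, self-hosting starts to win on raw compute cost, provided the hardware keeps up.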

Decision Framework: When to Use Which

Choose Qwen3.5-Omni If:

- Self-hosting is required — data residency, fine-tuning, or vendor independence are non-negotiable. This is the only model in this comparison with an open-weight path.

- Multilingual voice is the primary use case — 113 ASR languages and 36 TTS languages, combined with native omni-modal architecture, is a meaningful capability lead for non-English-first products. Verify your specific languages work at acceptable quality.

- Cost sensitivity at scale matters: at high volume, the self-hosted Light variant (the only open-weight option at the time of writing) can undercut API pricing significantly. On pure API usage, model the tiered pricing carefully for your request size distribution before assuming it’s cheaper.

- You need voice cloning or voice persona consistency across long conversations — this is currently more accessible in Qwen3.5-Omni than in GPT-4o or Gemini.

Choose GPT-4o If:

- The OpenAI ecosystem is already in your stack — Assistants API, fine-tuning, function calling, Batch API. Switching costs are real; the tooling maturity is genuine.

- Tooling maturity matters more than cost — for voice agents that need complex tool-calling, multi-turn state management, or integration with existing OpenAI workflows, GPT-4o’s production track record is the strongest of the three.

- You’re building primarily in English or high-resource Western European languages — GPT-4o’s ASR quality for these languages is well-tested and reliable in production.

Choose Gemini 2.5 Pro If:

- Google Cloud is your infrastructure — native Vertex AI integration, GCP IAM, and enterprise agreements are real advantages if you’re already in the Google ecosystem.

- You need a 1M-token context: for processing very long recordings, multi-hour content analysis, or maintaining very long conversation history without chunking, Gemini’s context ceiling is the clear winner in this comparison.

- Google Workspace integration matters — for enterprise use cases involving Docs, Drive, Meet, or other Workspace products, the Gemini-Workspace integration path is more natural than the alternatives.

Limitations to Know Before Committing

Qwen3.5-Omni: MoE Inference Overhead, Early-Stage API Stability

The Plus variant’s MoE architecture means inference performance is less predictable than a dense model of equivalent quality. Under variable concurrency, routing overhead can cause latency spikes. vLLM mitigates this significantly over HuggingFace Transformers for self-hosted deployments, but it doesn’t eliminate it — MoE routing latency is inherent to the architecture.

API stability is an open question. Rate limits aren’t publicly documented for now. Endpoint behavior under load, SLA commitments, and version pinning guarantees are all unknowns at this stage. For production deployments with uptime requirements, plan a fallback.
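
"Plan a fallback" can start as something very small. Here is a provider-agnostic sketch: each backend is just a callable that takes a prompt and returns text, so you can wrap whichever SDK calls you actually use. The two stand-in backends are fakes for demonstration, not real API calls.

```python
from typing import Callable, Sequence, Tuple

Backend = Tuple[str, Callable[[str], str]]


def call_with_fallback(backends: Sequence[Backend], prompt: str) -> Tuple[str, str]:
    """Try each backend in order; return (backend name, response) from the first success."""
    errors = []
    for name, backend in backends:
        try:
            return name, backend(prompt)
        except Exception as exc:  # broad on purpose: any failure moves on to the next backend
            errors.append(f"{name}: {exc!r}")
    raise RuntimeError("all backends failed: " + "; ".join(errors))


def fake_primary(prompt: str) -> str:
    """Stand-in for a primary provider that is currently failing."""
    raise TimeoutError("primary timed out")


def fake_secondary(prompt: str) -> str:
    """Stand-in for a working secondary provider."""
    return f"echo: {prompt}"


used, reply = call_with_fallback(
    [("primary", fake_primary), ("secondary", fake_secondary)],
    "hello",
)
```

In a real voice agent you would also want timeouts and some logging of which backend answered, since a silent fallback can hide a quality change from your users.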

GPT-4o: No Self-Hosting, Pricing Opacity at Scale

No self-hosting, full stop. If this is a hard requirement, GPT-4o is not a candidate.

Audio pricing via the Realtime API ($32/1M input, $64/1M output) is not cheap at scale, and the billing structure — separate rates for text and audio tokens in the same conversation — can produce bill surprises if developers assume standard Chat Completions rates apply. The Realtime API’s session-based context window management also adds cost complexity for long conversations.
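
A quick way to avoid the bill surprise is to price each token type separately from the start. This sketch uses the Realtime rates quoted in this article; the usage keys are my own naming, not the field names of OpenAI's usage object, so map them from whatever your logs report.

```python
# USD per 1M tokens for the Realtime API, as quoted in this article.
REALTIME_RATES = {
    "audio_in": 32.00,
    "audio_out": 64.00,
    "text_in": 4.00,
    "text_out": 16.00,
}


def realtime_session_cost_usd(usage: dict) -> float:
    """Sum the cost of each token type; token types missing from usage count as zero."""
    return sum(
        usage.get(kind, 0) / 1_000_000 * rate
        for kind, rate in REALTIME_RATES.items()
    )


example_usage = {
    "audio_in": 1_000_000,
    "audio_out": 500_000,
    "text_in": 250_000,
    "text_out": 100_000,
}
example_cost = realtime_session_cost_usd(example_usage)
```

Tracking the four counters per session, rather than one blended token total, is what makes long-conversation costs visible before the invoice does.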

OpenAI’s pricing history for models and features has included both reductions and restructurings. For a cost model that needs to be held for 12+ months, OpenAI pricing is less predictable than Google’s.

Gemini 2.5 Pro: Vertex Lock-In, China Accessibility

Vertex AI integration is a genuine advantage for Google Cloud teams and a genuine constraint for everyone else. Enterprise features, data residency controls, and compliance tooling are Vertex-native; the Gemini Developer API has fewer enterprise controls. Teams who start on the Developer API and migrate to Vertex for production will encounter a different SDK, different authentication, and different billing.

Gemini models are not reliably accessible from mainland China. If your team or your users are operating in China, the DashScope path is the practical option.

Gemini 2.5 Pro’s 200K token pricing threshold is also worth noting: if your average request consistently exceeds 200K tokens, you’re paying 2x the advertised input rate. For the 1M context to be cost-effective, you need workloads that actually benefit from the full window without hitting the 2x tier too frequently.

FAQ

Is Qwen3.5-Omni better than GPT-4o for multilingual voice applications?

On paper and by benchmark, Qwen3.5-Omni-Plus leads on language count (113 ASR, 36 TTS) and on audio-video understanding benchmarks. In practice, the answer depends on your specific languages, your audio quality, and your domain. Qwen3.5-Omni launched March 30, 2026 — independent production evaluations don’t yet exist. Test with real samples from your target users before deciding.

Can I run Qwen3.5-Omni in production without using DashScope?

The Light variant is available as open weights on HuggingFace, suitable for self-hosted production deployments on appropriate hardware. Plus and Flash are currently API-only via DashScope. Open weights for Plus/Flash have not been confirmed as of March 31, 2026 — verify current status before planning a self-hosted Plus deployment.

Does Qwen3.5-Omni support the OpenAI API format?

Yes. DashScope exposes an OpenAI-compatible endpoint at https://dashscope-intl.aliyuncs.com/compatible-mode/v1, which supports the Chat Completions API format. This works for text and text+vision inputs. For audio and video inputs, verify whether the specific modality you need is handled through the compatible endpoint or requires DashScope’s native multimodal endpoint — the compatibility layer doesn’t cover all modalities equally.
