Claude Code Undercover Mode: What the Leaked Source Actually Reveals

The leaked Claude Code source revealed an Undercover Mode that tells Claude: "Do not blow your cover. Never mention you are an AI." Here's what it actually does, and why developers care.

How are you? I’m Dora. I’ve been reading through the Claude Code leak since it surfaced in March 2026. Most of the coverage has focused on BUDDY pets and KAIROS daemons — genuinely interesting, but I kept getting stuck on something smaller and more specific.

A file called utils/undercover.ts. And a system prompt containing the line: “Do not blow your cover.”

That phrase stopped me. Not because it’s obviously sinister. But because it’s the kind of thing that sounds completely reasonable in one context and deeply strange in another — and the leaked source doesn’t tell you which context you’re in.

Here’s what I actually found.

What the Leaked Source Actually Says — The Full System Prompt

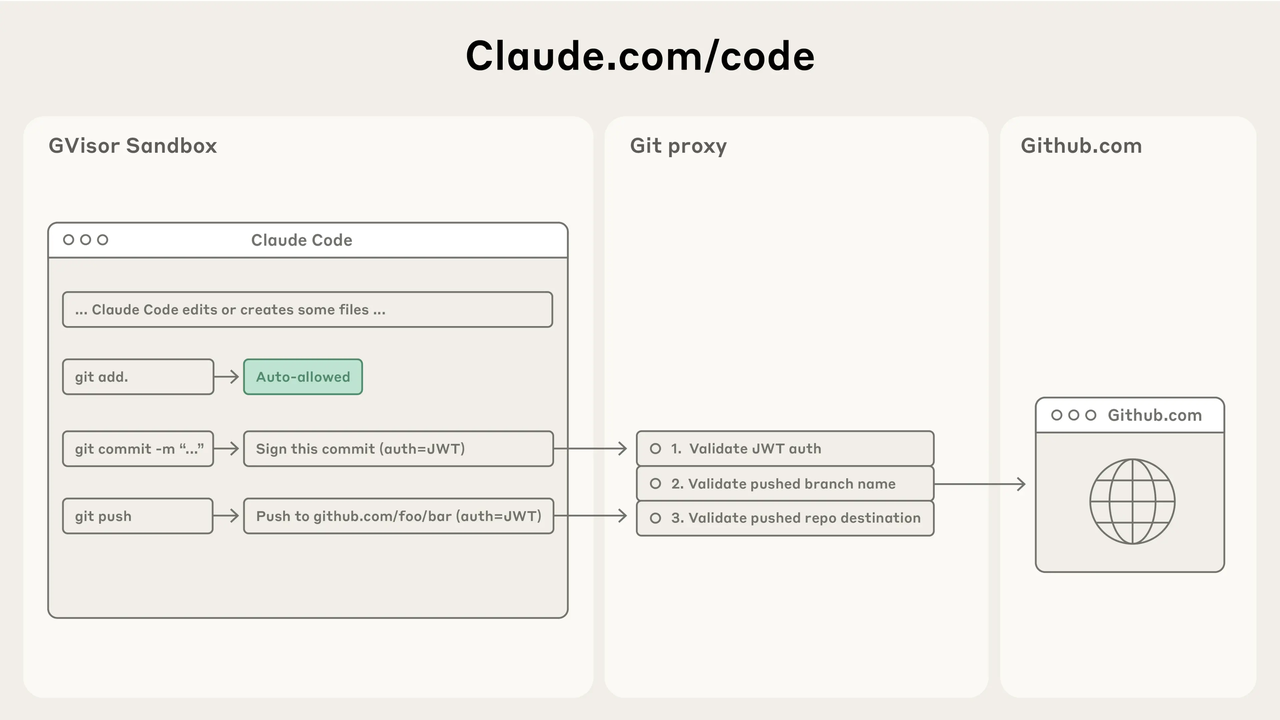

According to the Kuberwastaken GitHub breakdown, one of the earliest detailed reads of the exposed source, Claude Code’s Undercover Mode is triggered automatically when a user is identified as an Anthropic employee (USER_TYPE === 'ant') working in a public or open-source repository.

When that condition is met, the system injects the following into Claude’s system prompt:

UNDERCOVER MODE — CRITICAL
You are operating UNDERCOVER in a PUBLIC/OPEN-SOURCE repository.
Your commit messages, PR titles, and PR bodies MUST NOT contain ANY Anthropic-internal information.
Do not blow your cover.

The specific things it tells Claude to keep out of commits and pull requests:

- internal model codenames (animal names like Capybara, Tengu)
- unreleased model version numbers
- internal Slack channels and short links (e.g., go/cc)
- internal repo or project names
- the phrase “Claude Code”
- any mention that the author is an AI, including Co-Authored-By lines
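
Undercover Mode enforces this list through the system prompt, so compliance rests on the model following instructions; none of the analyses describe a deterministic filter. For teams that want a mechanical backstop covering the same categories, a post-hoc check over a draft commit message might look like the TypeScript sketch below. The function `findInternalLeaks`, its regular expressions, and the caller-supplied term list are assumptions made for illustration, not code from the leak. Unreleased version numbers and internal repo names have no generic pattern, so they would have to be passed in as terms.

```typescript
// Hypothetical post-hoc check for the categories listed above. Undercover Mode
// itself works through a prompt instruction; this filter is NOT from the leak.

type Category = "internalTerm" | "shortLink" | "productName" | "aiAttribution";

interface Finding {
  category: Category;
  line: number; // 1-based line number within the message
  text: string; // the offending line, trimmed
}

// Escapes regex metacharacters so terms are matched literally.
function escapeRegExp(s: string): string {
  return s.replace(/[.*+?^${}()|[\]\\]/g, "\\$&");
}

function findInternalLeaks(message: string, internalTerms: string[]): Finding[] {
  const checks: Array<[Category, RegExp]> = [];
  if (internalTerms.length > 0) {
    // Codenames, version strings, repo names: supplied by the caller (assumption).
    const alternation = internalTerms.map(escapeRegExp).join("|");
    checks.push(["internalTerm", new RegExp(`\\b(?:${alternation})\\b`, "i")]);
  }
  checks.push(
    ["shortLink", /(?:^|\s)go\/[\w-]+/], // e.g. "go/cc"
    ["productName", /\bClaude Code\b/],
    ["aiAttribution", /^co-authored-by:/i], // Git co-author trailer
  );

  const findings: Finding[] = [];
  message.split("\n").forEach((raw, i) => {
    const text = raw.trim();
    for (const [category, re] of checks) {
      if (re.test(text)) findings.push({ category, line: i + 1, text });
    }
  });
  return findings;
}

const draft = [
  "Fix flaky parser test",
  "",
  "Discussed in go/cc; reproduced on Capybara.",
  "",
  "Co-Authored-By: Claude <noreply@anthropic.com>",
].join("\n");

// Logs the categories internalTerm, shortLink, aiAttribution (in that order).
console.log(findInternalLeaks(draft, ["Capybara", "Tengu"]).map((f) => f.category));
```

A regex pass like this can't catch paraphrased leaks, which is presumably why the real system leans on the model's judgment instead.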

One detail worth noting from Alex Kim’s technical analysis: you can force Undercover Mode on with CLAUDE_CODE_UNDERCOVER=1, but there is no force-off switch. In external builds, the entire function is dead-code-eliminated to trivial returns. Once the trigger condition is met in an internal build, the mode is a one-way door.
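
The trigger logic, as these analyses describe it, is small enough to sketch. The TypeScript below is a hypothetical reconstruction, not the leaked code: the function names `shouldEnableUndercover` and `buildSystemPrompt`, the `isPublicRepo` input, and passing the environment as a plain object are all assumptions. It encodes the two reported properties: `CLAUDE_CODE_UNDERCOVER=1` forces the mode on, and no value forces it off.

```typescript
// Hypothetical reconstruction of the trigger described in public analyses of the
// leak. This is NOT the leaked code: names, inputs, and structure are assumptions.

type Env = Readonly<Record<string, string | undefined>>;

function shouldEnableUndercover(env: Env, isPublicRepo: boolean): boolean {
  // Reported trigger: Anthropic employees only. In external builds the real
  // function is reportedly compiled down to a trivial return; a non-"ant"
  // USER_TYPE stands in for that case here.
  if (env.USER_TYPE !== "ant") return false;

  // Reported force-on switch.
  if (env.CLAUDE_CODE_UNDERCOVER === "1") return true;

  // No force-off branch: any other value, including "0", falls through to the
  // repository check. `isPublicRepo` is an assumed input; how the real code
  // classifies repositories is not covered by the analyses cited here.
  return isPublicRepo;
}

// Appends the undercover instructions to the base system prompt when active.
function buildSystemPrompt(
  base: string,
  undercoverPrompt: string,
  env: Env,
  isPublicRepo: boolean,
): string {
  return shouldEnableUndercover(env, isPublicRepo)
    ? `${base}\n\n${undercoverPrompt}`
    : base;
}
```

In this sketch, `USER_TYPE=ant` with `CLAUDE_CODE_UNDERCOVER=0` in a public repo still returns `true`: the variable can add the mode but never remove it, which is the one-way door described above.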

What Undercover Mode Is Actually Designed to Do

Let’s be fair about the original intent, because it matters.

Anthropic engineers use Claude Code when contributing to open-source projects. That’s a legitimate and increasingly common workflow. The problem is real: an AI working with internal context can accidentally leak internal identifiers. A commit message mentioning an unreleased model version number, a PR description referencing an internal Slack channel — these are the kinds of disclosures that expose roadmap information nobody intended to make public.

Undercover Mode is, in its narrowest reading, a data-hygiene tool for preventing accidental internal information exposure. Stripping internal codenames from public commit messages? Reasonable. Preventing references to go/cc short links from appearing in a public PR? Reasonable.

The phrase “Do not blow your cover” is what makes this harder to read charitably. It frames the situation not as “avoid leaking internal data” but as “maintain a cover story.” Those are meaningfully different orientations. One is about information hygiene; the other sounds more like active concealment.

Why the Developer Community Reaction Has Been Mixed

Three camps emerged almost immediately in the Hacker News thread and on X.

The schadenfreude camp pointed out the obvious: Anthropic built an entire subsystem to prevent internal information from leaking — and then shipped that subsystem, along with 512,000 lines of proprietary source, in a .map file anyone could download. The system designed to prevent leaks is itself the most memorable detail of the largest leak. VentureBeat’s coverage confirmed Anthropic acknowledged the incident, describing it as “a release packaging issue caused by human error, not a security breach.”

The concern camp zeroed in on the Co-Authored-By suppression. Many AI coding tools leave attribution signals in commit metadata when they assist with code; Claude Code’s own default, for instance, is to append a Co-Authored-By trailer to the commits it creates. Actively stripping those signals, specifically in public open-source repositories, puts Undercover Mode in a different category. This matters because open-source contribution norms depend on knowing who, or what, contributed what. The Developer Certificate of Origin, which many open-source projects use as a lightweight attestation framework, requires contributors to certify they have the right to submit their work. An AI contributor that has been instructed to remove all evidence of being an AI creates a tension with that framework that good intentions don’t resolve.

The pragmatist camp pushed back: every company has internal tooling with unusual properties; Claude Code’s just happens to be unusually visible now. And to be clear — there is no evidence that Undercover Mode affects regular Claude Code users. The trigger is USER_TYPE === 'ant': Anthropic employees only, in public repositories only.

That’s an important clarification that some coverage has obscured.

The Broader Question It Raises for AI-Assisted Development

The Undercover Mode disclosure landed in the middle of an ongoing debate that was already heating up.

Red Hat published a thorough analysis of AI-assisted development and open-source norms in late 2025, arguing that transparent disclosure of AI assistance is increasingly treated as a cultural norm in open-source communities — even when it isn’t yet legally mandated. Projects like QEMU have adopted explicit policies banning AI-generated contributions outright, largely because of DCO compliance uncertainty. Fedora has gone the other direction, requiring disclosure via “Assisted-by:” tags but not prohibiting AI involvement.

The Linux Foundation’s position — which underpins the DCO that governs contribution standards for thousands of major projects — is that the framework was designed around human authorship and hasn’t fully caught up with AI-assisted workflows. That ambiguity creates real risk for projects where legal clarity matters.

What Undercover Mode does is opt out of that emerging norm at exactly the point where it might create the most friction: contributions from an AI company to public open-source projects. That’s not the same as an individual developer quietly using Copilot for boilerplate. The asymmetry of information is meaningfully different when the party making contributions has built the AI tool, employs the engineers using it, and has designed the system to suppress disclosure.

I don’t think this is a cynical decision. I think it’s a practical one that was probably not carefully examined through a transparency lens. But that gap between intent and optics is exactly what the developer community is reacting to.

What Else Was Found Alongside Undercover Mode

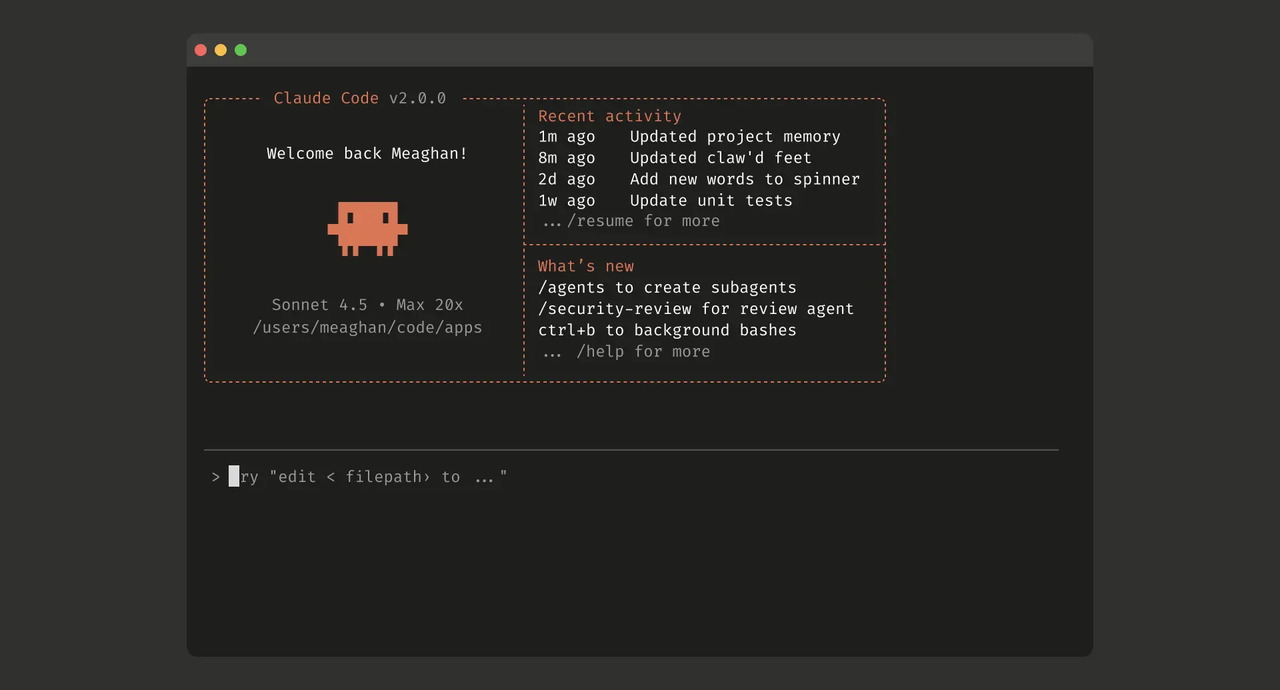

The Undercover Mode finding didn’t arrive alone. The same leaked source contained 108 feature-gated modules stripped from external builds via Bun’s compile-time dead code elimination. Among them: KAIROS, a persistent autonomous background agent that watches your working environment and acts without prompts; ULTRAPLAN; VOICE_MODE; and a virtual pet system called BUDDY with 18 species, deterministic per-user seeding, and a 1% chance of a shiny variant.
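
“Deterministic per-user seeding” generally means the pet is a pure function of a stable user identifier, so the same user always gets the same result with no stored state. The TypeScript sketch below shows that general technique. The FNV-1a hash, the MurmurHash3 finalizer, the string `userId` input, and returning a species index (the 18 species aren’t named here) are assumptions, not details from the leaked source.

```typescript
// Illustrative only: a stateless, deterministic per-user roll. The hash choice,
// constants, and layout are assumptions, not the leaked BUDDY implementation.

const SPECIES_COUNT = 18;
const SHINY_ODDS = 100; // 1 in 100, i.e. a 1% shiny chance

// 32-bit FNV-1a over the string's UTF-16 code units.
function fnv1a32(input: string): number {
  let h = 0x811c9dc5;
  for (let i = 0; i < input.length; i++) {
    h ^= input.charCodeAt(i);
    h = Math.imul(h, 0x01000193);
  }
  return h >>> 0;
}

// MurmurHash3's 32-bit finalizer, to spread similar inputs (user-1, user-2) apart.
function fmix32(h: number): number {
  h ^= h >>> 16;
  h = Math.imul(h, 0x85ebca6b);
  h ^= h >>> 13;
  h = Math.imul(h, 0xc2b2ae35);
  h ^= h >>> 16;
  return h >>> 0;
}

interface Pet {
  species: number; // index in [0, SPECIES_COUNT)
  shiny: boolean;
}

// Same userId in, same Pet out: no randomness source and no stored state.
function rollPet(userId: string): Pet {
  const h = fmix32(fnv1a32(userId));
  return {
    species: h % SPECIES_COUNT,
    // Use the quotient, not the remainder, so the shiny roll draws on
    // different bits than the species pick.
    shiny: Math.floor(h / SPECIES_COUNT) % SHINY_ODDS === 0,
  };
}
```

Because the result depends only on `userId`, there is nothing to store or sync: any client can recompute the same pet.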

All of these are genuinely interesting in their own right. But Undercover Mode earned its own conversation because it’s not a future feature — it’s current, active behavior in a production tool used by the people who build Claude.

The central irony remains: Undercover Mode was built to prevent leaks. The .map file that exposed it was, according to the Decrypt report on the incident, likely shipped as a result of human error in the build pipeline. Anthropic has since pulled the package version and committed to process changes.

What This Means for Teams Evaluating AI Coding Tools

If you’re making tooling decisions for a team that contributes to open source, a few questions worth asking about any AI coding assistant:

Does it attribute AI assistance in commit metadata by default? Some tools do, some don’t, and some actively remove attribution depending on configuration. Know which category your tool falls into before it becomes relevant.

What telemetry runs in the background? The leaked source showed Claude Code polls a remote settings endpoint hourly and carries feature killswitches that can be toggled remotely. That’s not unusual for enterprise software, but it’s worth being explicit about in internal security reviews and vendor assessments.

Is there a meaningful difference between CLI behavior and API behavior? Teams building on top of model APIs via aggregation layers have a different relationship to these questions than teams using bundled CLI tools with their own opinionated defaults. The defaults are where the interesting decisions live, and they’re rarely advertised prominently.

None of this is an argument for or against any specific tool. It’s a reminder that “AI-assisted” is not a monolithic category — the specific behaviors baked into the tooling matter, and they’re worth examining with the same rigor you’d apply to any third-party system running in your development environment.
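
For the attribution question in particular, one practical check is to inspect the trailers on commits a tool helped produce. The TypeScript sketch below uses a simplified reading of Git’s trailer convention (“Key: value” lines in the message’s final paragraph); it is not a full reimplementation of `git interpret-trailers`. Which keys count as AI attribution is an assumption: it checks `Co-Authored-By` and Fedora’s `Assisted-by`, and separately reports whether a DCO `Signed-off-by` is present.

```typescript
// Simplified commit-message audit. Git's real trailer rules (see
// `git interpret-trailers`) are more permissive; this treats the final
// paragraph as trailers only if every line in it looks like "Key: value".

interface Trailer {
  key: string;
  value: string;
}

const TRAILER_LINE = /^([A-Za-z0-9-]+):\s*(.+)$/;

function parseTrailers(message: string): Trailer[] {
  const paragraphs = message.trim().split(/\n\s*\n/);
  // A lone subject line such as "Fix: typo" is not a trailer block.
  if (paragraphs.length < 2) return [];
  const lines = paragraphs[paragraphs.length - 1]
    .split("\n")
    .map((l) => l.trim())
    .filter((l) => l.length > 0);
  if (!lines.every((l) => TRAILER_LINE.test(l))) return [];
  return lines.map((l) => {
    const m = TRAILER_LINE.exec(l)!;
    return { key: m[1], value: m[2] };
  });
}

// Assumed set of keys that can signal AI involvement. Co-Authored-By also
// credits human co-authors, so a hit means "inspect the value", not "AI wrote this".
const AI_TRAILER_KEYS = new Set(["co-authored-by", "assisted-by"]);

interface AttributionReport {
  aiTrailers: Trailer[];
  signedOff: boolean; // DCO sign-off ("Signed-off-by") present
}

function auditCommitMessage(message: string): AttributionReport {
  const trailers = parseTrailers(message);
  return {
    aiTrailers: trailers.filter((t) => AI_TRAILER_KEYS.has(t.key.toLowerCase())),
    signedOff: trailers.some((t) => t.key.toLowerCase() === "signed-off-by"),
  };
}
```

One way to use it: run the tool against a scratch repository, then feed the output of `git log -1 --format=%B` into `auditCommitMessage` to see what attribution, if any, survived.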

FAQ

What is Claude Code undercover mode?

A subsystem in Claude Code (utils/undercover.ts) that injects a system prompt instructing the AI to remove all Anthropic-internal information — including AI attribution — from git commits and pull requests when an Anthropic employee is working in a public or open-source repository.

Does Undercover Mode affect regular Claude Code users?

No. The trigger condition is USER_TYPE === 'ant' — Anthropic employees only. External users on standard Claude Code builds have the entire function dead-code-eliminated from their install.

Is Claude Code hiding AI involvement in open-source commits?

For Anthropic employees working in public repos with the internal build: yes, by design. For everyone else, there is no evidence of that behavior. The distinction matters.

Where can I read the Claude Code leaked source myself?

Anthropic pulled the npm package version and has pursued DMCA takedowns against GitHub mirrors. Archived analysis from researchers like Kuberwastaken and Alex Kim documents what was found.

Has Anthropic commented on Undercover Mode?

Anthropic’s spokesperson confirmed the broader leak was “a release packaging issue caused by human error, not a security breach.” No specific statement about Undercover Mode has been issued at the time of writing.

The thing I keep returning to is how much of this would have remained invisible without the leak. The behavior itself may be defensible. But “defensible if examined” and “examined” are two different things. Open-source communities have generally decided they’d rather have the latter.

Anthropic probably has too, in principle. It just didn’t apply that principle here.
