GPT-5.5 API Availability: What Teams Should Plan

GPT-5.5 has launched, and API access followed a day later. Here is what teams can plan now and what still needs verification.

I spent last Friday rerouting a Codex workflow to GPT-5.5, then spent Monday explaining to two clients why the rollout decision is more complicated than the launch headlines suggest. I’m Dora, and my name shows up on a lot of “should we migrate?” docs at WaveSpeedAI: I’m the person who makes teams wait two weeks before signing off on a model swap. The API is live. Most coverage gets that part right and stops there. What I want to write about is the ten days after launch, when “available” turns into “actually integrated,” and where most teams I work with are getting tripped up.

This is a planning note, not a tutorial. If you came for curl examples, the official docs handle that better than I would.

Where GPT-5.5 Is Available Today

ChatGPT and Codex rollout status

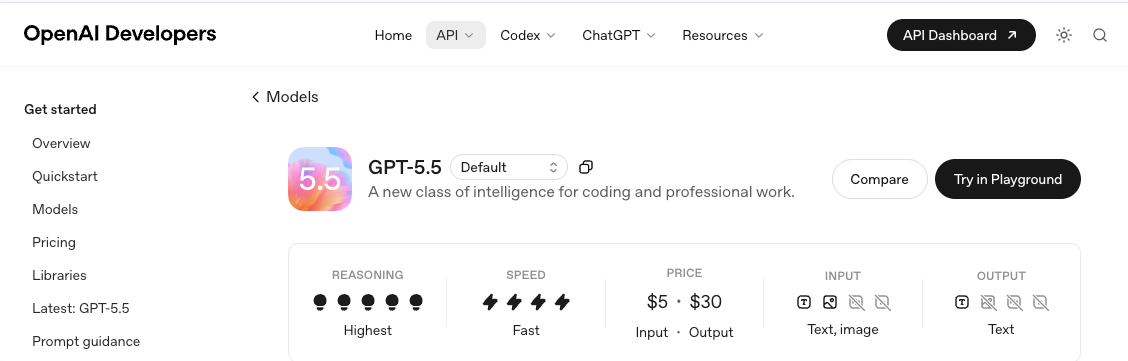

GPT-5.5 went live on April 23, 2026 for Plus, Pro, Business, and Enterprise users inside ChatGPT and Codex, with GPT-5.5 Pro restricted to Pro, Business, and Enterprise tiers. In Codex specifically, the model ships with a 400K context window and a Fast mode that runs 1.5x faster at 2.5x the cost; the official GPT-5.5 launch announcement on OpenAI lays out these details cleanly. The day-one launch covered ChatGPT and Codex only, not the API. I want to flag this because half the tickets I saw last week assumed API parity from the start.

What OpenAI says about API availability

The part the early press cycle missed: API access landed one day later, on April 24, 2026. Both gpt-5.5 and gpt-5.5-pro are now exposed in the Responses and Chat Completions APIs, confirmed in OpenAI’s own GPT-5.5 model documentation. The context window is 1M tokens on the API surface, distinct from the 400K Codex ceiling. Two surfaces, two limits: easy to confuse, and worth writing down before your engineers mix them up. So the question is no longer “when can my team use it?” It’s “should we, and what do we verify first?”

What Teams Can Safely Plan Before API Integration

Evaluation criteria and migration prep

I don’t recommend a same-day migration. Here’s what I’d lock down first.

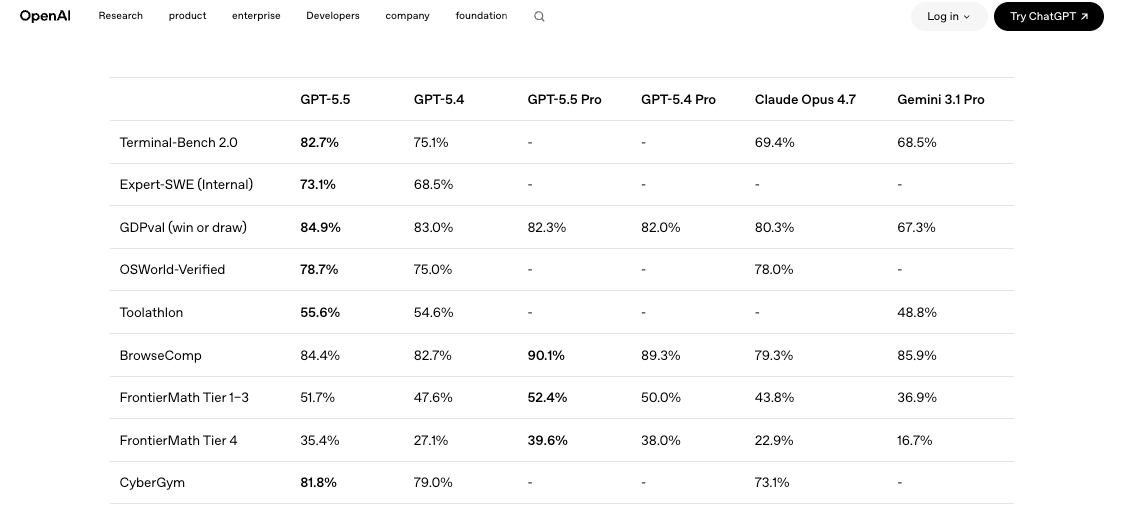

Build a small eval harness against your current model. Five to ten representative prompts from your real workload, scored on the dimensions that actually matter to you: correctness, token cost, latency, retry rate. Run GPT-5.4 and GPT-5.5 side by side, same prompts, same temperature settings, same tool definitions. Independent benchmarks like the comparison published on LLM Stats show GPT-5.5 gaining on 9 of 10 shared benchmarks but landing only marginal wins on SWE-Bench Pro. Translation: the upgrade is real, but it’s not uniformly better. Your workload decides.
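Here’s a minimal sketch of what I mean by a harness, not a finished tool. It assumes each model is wrapped in a plain callable that returns the reply text, total tokens, and retry count; `Reply`, `run_eval`, and the stub models are names I made up for illustration, and correctness is scored with a naive substring match that you would swap for your own grader. Keeping temperature and tool definitions identical is the job of whatever wrapper you put behind each callable.

```python
import time
from dataclasses import dataclass
from statistics import mean
from typing import Callable


@dataclass
class Reply:
    text: str
    total_tokens: int
    retries: int = 0


# A "model" is any callable that maps a prompt to a Reply (hypothetical wrapper).
ModelFn = Callable[[str], Reply]


def run_eval(models: dict[str, ModelFn],
             cases: list[tuple[str, str]]) -> dict[str, dict[str, float]]:
    """Run every (prompt, expected) case against every model; average the metrics."""
    report = {}
    for name, call in models.items():
        correct, tokens, latencies, retries = [], [], [], []
        for prompt, expected in cases:
            start = time.perf_counter()
            reply = call(prompt)
            latencies.append(time.perf_counter() - start)
            # Naive grader: expected answer appears somewhere in the reply.
            correct.append(1.0 if expected.lower() in reply.text.lower() else 0.0)
            tokens.append(reply.total_tokens)
            retries.append(reply.retries)
        report[name] = {
            "accuracy": mean(correct),
            "avg_tokens": mean(tokens),
            "avg_latency_s": mean(latencies),
            "avg_retries": mean(retries),
        }
    return report


# Stub models stand in for real API wrappers so the sketch runs offline.
def stub_old(prompt: str) -> Reply:
    return Reply(text="4", total_tokens=120)


def stub_new(prompt: str) -> Reply:
    return Reply(text="The answer is 4.", total_tokens=90)


if __name__ == "__main__":
    cases = [("What is 2 + 2?", "4")]
    for model, metrics in run_eval({"gpt-5.4": stub_old, "gpt-5.5": stub_new}, cases).items():
        print(model, metrics)
```

Five to ten cases in `cases` is enough to start; the point is that both models see exactly the same inputs.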

Decide your fallback path now, not after the first 429. New model releases historically ship with tighter rate limits for the first 30 days. Have GPT-5.4 wired in as the fallback before you flip a single production request. I’ve watched two teams skip this step in the past and pay for it during a launch-day traffic spike.
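A rough sketch of that fallback wiring, under loud assumptions: `RateLimited` is a hypothetical stand-in for whatever exception your client raises on an HTTP 429, and `primary` and `fallback` are your own wrappers around the GPT-5.5 and GPT-5.4 calls. A real version would also back off between attempts.

```python
from typing import Callable


class RateLimited(Exception):
    """Hypothetical stand-in for the error your client raises on HTTP 429."""


def call_with_fallback(primary: Callable[[str], str],
                       fallback: Callable[[str], str],
                       prompt: str,
                       max_primary_attempts: int = 2) -> tuple[str, str]:
    """Try the primary model; after repeated rate-limit errors, use the fallback.

    Returns (path_taken, reply_text) so callers can log how often fallback fires.
    """
    for _ in range(max_primary_attempts):
        try:
            return "primary", primary(prompt)
        except RateLimited:
            continue  # a production client would sleep with backoff here
    return "fallback", fallback(prompt)
```

Returning the path taken is deliberate: the fallback rate during the first weeks is itself a number worth putting on a dashboard.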

Questions for procurement, security, and engineering

A few I’ve had to answer this week:

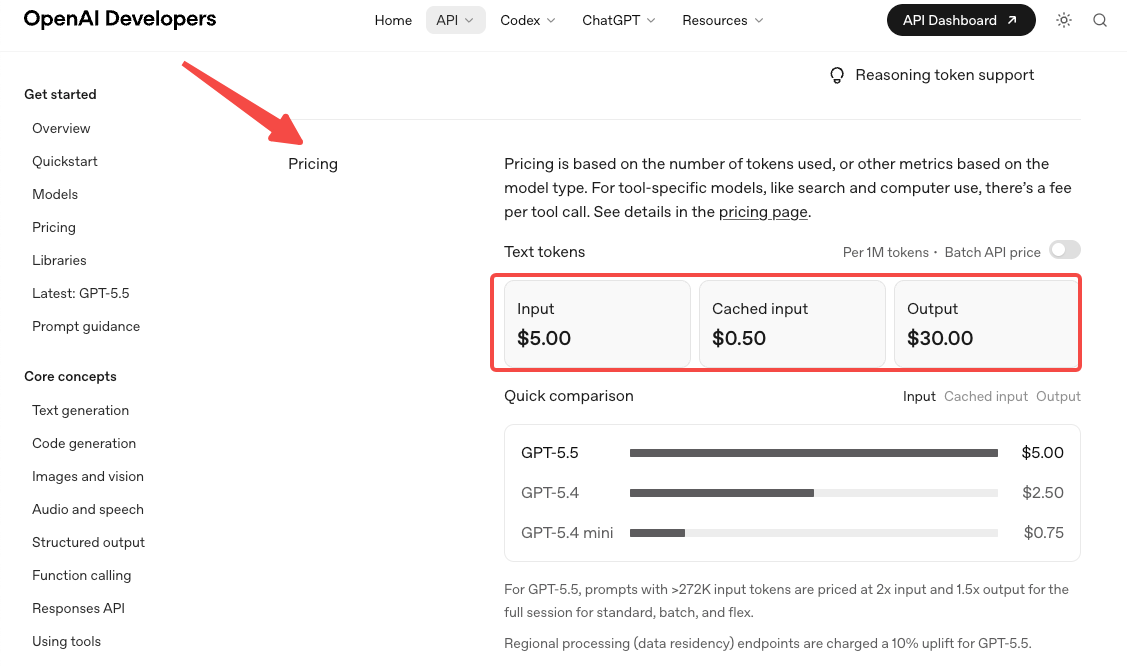

- Pricing has doubled relative to GPT-5.4. The standard rate is $5 per 1M input tokens and $30 per 1M output tokens, per the official OpenAI pricing page. Pro is $30 / $180 per 1M. Token-efficiency claims partially offset this on Codex workloads, but on most other workloads, expect your bill to climb materially.

- Long-context pricing changes at 272K. Above that threshold, input is 2x and output is 1.5x for the entire session. If your workflow regularly crosses 272K tokens, model your cost twice — once below the threshold, once above. This catches teams who built around GPT-5.4’s tier structure and assumed the new model would inherit it.

- Security needs to read the system card. GPT-5.5 ships with stricter cyber classifiers, documented in the GPT-5.5 system card. Some legitimate workloads will get blocked initially while OpenAI tunes them. Worth flagging to anyone running security tooling, code-analysis pipelines, or red-team workflows through the API.
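To make the pricing bullets concrete for procurement, here is a small cost sketch using only the figures quoted above. Two things are my assumptions rather than anything from OpenAI’s docs: that the 272K threshold is measured on input tokens, and that once a request crosses it the multipliers apply to every token in that request. Check both against the pricing page before trusting the output.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rates:
    input_per_m: float   # USD per 1M input tokens
    output_per_m: float  # USD per 1M output tokens


STANDARD = Rates(5.0, 30.0)   # figures quoted in this post
PRO = Rates(30.0, 180.0)

LONG_CONTEXT_THRESHOLD = 272_000
LONG_INPUT_MULT = 2.0
LONG_OUTPUT_MULT = 1.5


def request_cost(input_tokens: int, output_tokens: int,
                 rates: Rates = STANDARD) -> float:
    """Estimated USD cost of one request.

    Assumption: the threshold is checked against input tokens, and above it
    the multipliers apply to all tokens, not only those past the threshold.
    """
    long_ctx = input_tokens > LONG_CONTEXT_THRESHOLD
    in_mult = LONG_INPUT_MULT if long_ctx else 1.0
    out_mult = LONG_OUTPUT_MULT if long_ctx else 1.0
    return (input_tokens * rates.input_per_m * in_mult
            + output_tokens * rates.output_per_m * out_mult) / 1_000_000


if __name__ == "__main__":
    print(request_cost(200_000, 10_000))  # below the threshold
    print(request_cost(300_000, 10_000))  # above the threshold
```

Under those assumptions, a 200K-in / 10K-out request comes to about $1.30 and a 300K-in / 10K-out request to about $3.45, which is why I’d model both sides of the threshold.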

What Still Needs Verification Before Production Use

Model IDs, rate limits, pricing, and tool support

I’d verify these in this order:

1. Model IDs and snapshots. Lock to a snapshot, not the alias. Aliases shift; snapshots don’t. Check the available list on the GPT-5.5 model page before hard-coding anything into your client.

2. Your tier’s rate limits. OpenAI’s tier system auto-promotes based on spend, but launch-day limits can be tighter than what GPT-5.4 enjoys today. The OpenAI rate limits documentation is where I’d start, and it’s worth running a synthetic burst test against your current tier before assuming the headroom is there.

3. Tool and structured-output behavior. Function calling, web search, and structured outputs all work, but the exact schemas and reasoning-mode interactions need a smoke test against your actual tool definitions. I’ve seen reasoning effort settings change retry behavior in ways that don’t show up until you hit production traffic.
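For the first item, a config lint can catch unpinned aliases before they ship. This is a sketch under one assumption: that GPT-5.5 snapshot IDs carry a `-YYYY-MM-DD` suffix the way recent OpenAI snapshots such as `gpt-4o-2024-08-06` do. I have not confirmed the GPT-5.5 snapshot names, so check the model page and adjust the pattern. `is_pinned` and `unpinned_models` are illustrative names.

```python
import re

# Assumption: snapshot IDs end in a -YYYY-MM-DD date suffix.
_SNAPSHOT_SUFFIX = re.compile(r"-\d{4}-\d{2}-\d{2}$")


def is_pinned(model_id: str) -> bool:
    """True if the ID looks like a dated snapshot rather than a moving alias."""
    return bool(_SNAPSHOT_SUFFIX.search(model_id))


def unpinned_models(config: dict[str, str]) -> list[str]:
    """Return the config keys whose model ID looks like an alias."""
    return sorted(key for key, model_id in config.items() if not is_pinned(model_id))


if __name__ == "__main__":
    config = {"default": "gpt-5.5", "legacy": "gpt-4o-2024-08-06"}
    print(unpinned_models(config))
```

Running something like this in CI is cheaper than discovering an alias moved under you in production.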

Throughput and enterprise rollout details

For anyone running serious volume: Batch and Flex run at half the standard rate, Priority at 2.5x. Translation: if your work tolerates async, Batch on GPT-5.5 costs the same per token as GPT-5.4 on standard, because half of a doubled price is the old price. That’s the real arbitrage hidden in this release, and almost no one I’ve talked to has factored it in yet. The GPT-5.5 pricing breakdown on Apidog walks through the worked examples better than I will here.
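As a quick sanity check on that arithmetic, here is a sketch that applies the tier multipliers to the standard GPT-5.5 rates quoted earlier. The GPT-5.4 figures are not taken from a price list; I infer them from the “pricing has doubled” claim, so treat them as an assumption.

```python
# GPT-5.5 standard rates quoted in this post, USD per 1M tokens.
STANDARD_55 = {"input": 5.00, "output": 30.00}

# Tier multipliers as described above.
TIER_MULTIPLIER = {"standard": 1.0, "batch": 0.5, "flex": 0.5, "priority": 2.5}

# Assumption: GPT-5.4 standard is half of GPT-5.5 standard ("pricing has doubled").
STANDARD_54 = {kind: price / 2 for kind, price in STANDARD_55.items()}


def tier_rates(tier: str) -> dict[str, float]:
    """GPT-5.5 per-1M-token rates for a given service tier."""
    multiplier = TIER_MULTIPLIER[tier]
    return {kind: price * multiplier for kind, price in STANDARD_55.items()}


if __name__ == "__main__":
    print("batch:   ", tier_rates("batch"))
    print("priority:", tier_rates("priority"))
    print("batch on 5.5 equals standard on 5.4:", tier_rates("batch") == STANDARD_54)
```

Under that assumption, Batch on GPT-5.5 works out to $2.50 / $15 per 1M tokens, and Priority to $12.50 / $75.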

Direct Provider Planning vs Platform-Based Readiness

I work at a platform that aggregates model access, so my bias is on the table. But the structural argument is the same regardless of whose platform you use: when a single provider releases a 2x-priced model on day one, the case for routing logic gets stronger, not weaker.

Direct provider integration looks like this: rewrite your client, retest your prompts, redo your cost model, repeat per provider. Multi-model platforms (WaveSpeedAI included, but also others) let you swap models with a config change. The trade-off is that you’re adding a layer between yourself and the source. For high-frequency teams shipping daily, the abstraction is usually worth the extra layer. For a team running one model on one workload at low volume, it isn’t.

I’d plan for a routing setup either way. Premium queries to GPT-5.5, routine traffic to GPT-5.4 or another frontier model — this pattern alone tends to cut bills 40–60% versus single-model defaults, regardless of which provider you center on.
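The routing pattern is simple enough to sketch. Everything here is illustrative: the `premium` flag is something your own code would set, `gpt-5.4` is an assumed model ID, and `relative_cost` assumes every request uses the same number of tokens and that the premium model costs exactly `price_ratio` times the routine one.

```python
from dataclasses import dataclass


@dataclass
class Request:
    prompt: str
    premium: bool = False  # set by the caller, e.g. for agentic coding tasks


def pick_model(req: Request,
               premium_model: str = "gpt-5.5",
               routine_model: str = "gpt-5.4") -> str:
    """Route premium requests to the newer model, everything else to the cheaper one."""
    return premium_model if req.premium else routine_model


def relative_cost(premium_share: float, price_ratio: float = 2.0) -> float:
    """Blended cost as a fraction of sending all traffic to the premium model.

    price_ratio is premium price divided by routine price.
    Assumes equal token volume per request.
    """
    if not 0.0 <= premium_share <= 1.0:
        raise ValueError("premium_share must be between 0 and 1")
    return premium_share + (1.0 - premium_share) / price_ratio


if __name__ == "__main__":
    print(pick_model(Request("refactor this module", premium=True)))
    print(pick_model(Request("summarize this ticket")))
    print(round(relative_cost(0.2), 2))
```

With a 2x price ratio and 20% of traffic marked premium, the blended bill is roughly 60% of an all-GPT-5.5 bill. Your real number depends on your traffic mix and on which routine model you pick.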

FAQ

Has GPT-5.5 launched in the API yet?

Yes, as of April 24, 2026. The launch on April 23 covered ChatGPT and Codex only; the API followed one day later. Both gpt-5.5 and gpt-5.5-pro are accessible in the Responses and Chat Completions endpoints with a 1M-token context window.

What should teams verify before integration work begins?

Pricing impact at your real token mix, rate-limit ceilings on your current tier, fallback to GPT-5.4 wired and tested, and a short eval harness comparing the two models on your actual workload. Lock to a snapshot ID, not the alias.

Is it worth upgrading, or should we stay on GPT-5.4?

Depends on workload. For agentic coding and computer-use tasks, GPT-5.5 shows meaningful gains, as documented in TechCrunch’s launch coverage. For workloads where GPT-5.4 already meets your quality bar, the doubled per-token price is hard to justify without a measurable lift.

How should teams prepare for a fast API rollout?

Build the eval harness now, route through an abstraction layer if you don’t already, and assume rate limits will tighten before they loosen. Don’t prepay large credit balances — pricing on this generation is still moving.

Does the doubled price actually mean doubled bills?

No, but close. Token efficiency gains on Codex workloads bring real-world bills under 2x. On other workloads, expect closer to the sticker. Batch processing at half rate is the lever worth pulling first.

Conclusion

The API is live. The pricing changed. The rate limits are still settling. None of that means you should rush. What it means is that the planning window most teams hoped for closed faster than expected, and the work now is verification rather than waiting.

I’m running my own migration over the next two weeks. Whether GPT-5.5 stays in my default routing past that point — I don’t know yet. That’s what the eval is for.

More to come.