GPT-5.4 Leak: What Developers Should Know

Hi, I’m Dora. I wasn’t hunting for a new model. I was just cleaning up a build pipeline when I saw a thread passing around screenshots of a commit that mentioned “GPT 5.4.” No fanfare, just a small line in a pull request. I paused, not because I needed another acronym in my day, but because quiet, accidental hints often say more than splashy launches.

Over the first week of March 2026, I followed the breadcrumbs: cached diffs, developer chatter, and a PR that seemed to come and go. I didn’t test GPT 5.4 (there’s nothing official to test), but I looked closely at what the code appeared to reference and how it was handled. The tone of the trail mattered almost as much as the content.

How GPT-5.4 Surfaced in the Wild

The Codex PR references

I first noticed mentions of GPT 5.4 tied to a short-lived change in a repository that appeared to touch “Codex” contexts, either legacy naming or an internal path that still uses “codex” as a bucket for coding flows. The snippet circulating showed a few things you’d expect around model routing and feature flags, nothing that screamed “launch,” more like plumbing. If you’ve worked around model switches, you know these lines are usually boring and important.

Two things stood out in those snippets: a reference to a “/fast” switch in a chat or agent command layer, and a capability label that read like full-resolution vision. I’m being specific here because it matters. Labels don’t always match reality, but they’re rarely random.

Why the code was quickly removed

The commit didn’t last. From what I saw, the branch got rewritten and the PR’s diff was scrubbed. That’s common when a team lands a reference too early or blends internal and external configs by mistake. In other words: it looked like routine housekeeping under pressure, not a takedown.

I’ve done the same thing on smaller teams: catch a flag I shouldn’t have exposed, force-push, and move on. The speed of the correction suggested someone upstream noticed chatter and decided to close the loop. Not scandal. Just containment.

What force-push deletions usually signal

A force-push doesn’t prove anything glamorous. It overwrites the remote branch with the pusher’s local history, so it usually signals urgency and a desire to restore the repo to a known state. Git’s own docs treat history rewriting as a useful, sharp tool that’s easy to cut yourself with if you’re not careful. If you see a force-push in the middle of a leak, it often means the team treats the leakage as noise rather than a coordinated reveal.

For context (not proof), here’s a neutral reference: Git’s notes on force pushing and rewriting history. Different world, same pattern.

What the Leaked Code Actually Shows

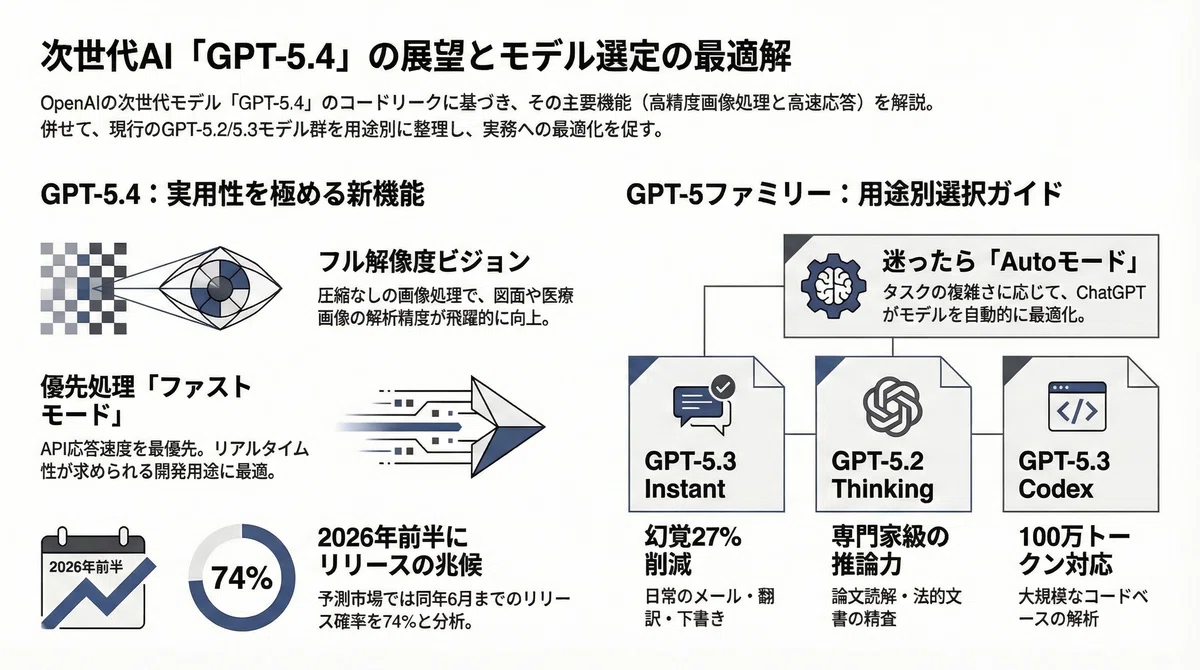

Fast mode command (/fast)

The line that mentioned a “/fast” command read, to me, like a user- or agent-level override. In practical terms, it suggests a mode that trades some depth for speed, a familiar dial in model routers. If this is tied to GPT 5.4, I’d expect faster first tokens, maybe more aggressive caching, maybe looser tool-calling thresholds. Nothing flashy, but useful when you’re inside a loop running dozens of small checks.

This didn’t sound like a wins-the-demo feature. It sounded like something you’d flip on during deployments or CI steps when you care about latency more than eloquence: say, docstring normalization, tiny refactors, or schema diffs that don’t need perfect prose.
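To make the idea concrete, here’s a minimal sketch of how a command layer might treat a leading “/fast” token as a mode override. Everything in it is my own assumption: the `Profile` fields, the token limits, and the `route` function are hypothetical and were not in the snippet I saw.

```python
from dataclasses import dataclass


# Hypothetical profiles: names and values are illustrative only,
# not taken from the leaked snippet or any published API.
@dataclass(frozen=True)
class Profile:
    name: str
    max_output_tokens: int
    allow_tools: bool


DEFAULT = Profile("default", max_output_tokens=4096, allow_tools=True)
FAST = Profile("fast", max_output_tokens=512, allow_tools=False)


def route(message: str) -> tuple[Profile, str]:
    """Return (profile, prompt); a leading '/fast' token selects FAST."""
    parts = message.split(maxsplit=1)
    if parts and parts[0] == "/fast":
        # Strip the command so only the actual request is forwarded.
        prompt = parts[1] if len(parts) > 1 else ""
        return FAST, prompt
    return DEFAULT, message.strip()
```

The point of the sketch is the shape, not the numbers: the override is decided once, at the edge, and everything downstream just reads the profile.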

Full-resolution vision reference

“Full-res vision” is a loaded phrase. In practice, that could mean higher input limits, better handling of dense UI screenshots, or less aggressive downscaling before the model sees pixels. If accurate, this leans toward workflows where fidelity matters: reading actual code in screenshots, reviewing UI states, or extracting structure from diagrams without smudging the details.

I work with a lot of product notes packed into images: mocks with tiny annotations, redlines, that sort of thing. If GPT 5.4 handles those natively at higher resolution, it would remove a quiet but constant tax: the prep step where I crop or re-encode images to get a model to see what I see.
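Here’s the arithmetic behind that tax, as a small sketch. It assumes a pipeline that shrinks an image so its longest side fits a cap before the model sees it; the cap (`max_side`) is a hypothetical parameter, not a documented limit for any model.

```python
def downscale_factor(width: int, height: int, max_side: int) -> float:
    """Scale applied so the longest side fits within max_side (never upscales)."""
    longest = max(width, height)
    return min(1.0, max_side / longest)


def effective_text_height(
    text_px: float, width: int, height: int, max_side: int
) -> float:
    """Approximate pixel height of on-screen text after downscaling."""
    return text_px * downscale_factor(width, height, max_side)
```

With a 2880x1800 screenshot and an assumed 1024-pixel cap, an 11-pixel annotation ends up at roughly 3.9 pixels tall, which is why I crop before uploading. A higher cap would shrink or remove that step.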

Coding agent signals in Codex context

The Codex references felt like scaffolding for coding agents. Not “write me an app” magic, more like the small muscles: tool selection, function calls, retry policies, and fallbacks when a call returns something unexpected. The hints I saw pointed to that layer, not the headline.

If that reading is right, GPT 5.4 may be tuned for agentic coding flows that survive the mess of real repositories: partial tests, flaky environments, mixed dependency managers. Less “genius coder,” more “reliable coworker who doesn’t quit after the second error.” I can work with that.

What GPT-5.4 Is Likely Built For

AI coding workflows

I don’t think GPT 5.4 is chasing novelty. The trail suggests steadier hands on common loops: reading code, making small edits, validating, and trying again without drama. If you ship features around real constraints, that rhythm matters more than one-off brilliance.

A guess, grounded in what I saw and what keeps showing up in teams I work with: GPT 5.4 is probably meant to sit closer to the code, not the slide deck. It likely aims at reasonably fast diffs, consistent doc updates, safer refactors, and pragmatic suggestions that survive actually being run.

Agent loop optimization

Agent loops are fragile for boring reasons: timeouts, tool errors, context drift, and attempts that never converge. The “/fast” hint reads like a way to keep loops brisk, while the vision reference suggests the agent can read what humans actually pass around (screenshots, logs, terminal photos) without extra fuss.

If true, two quality-of-life wins could show up:

- Fewer manual retries: clearer error typing and calmer backoff logic reduce thrash.

- Tighter tool calls: cheaper, quicker hops when a step doesn’t need full reasoning.
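For what “clearer error typing and calmer backoff” can look like in an agent loop today, here’s a minimal sketch. The exception classes and `call_with_backoff` are names I made up for illustration; nothing here comes from the leaked code or from a specific framework.

```python
import random
import time
from typing import Callable, TypeVar

T = TypeVar("T")


class TransientToolError(Exception):
    """Worth retrying: timeouts, rate limits, flaky network."""


class PermanentToolError(Exception):
    """Not worth retrying: bad arguments, missing file, auth failure."""


def call_with_backoff(
    tool: Callable[[], T],
    max_attempts: int = 4,
    base_delay: float = 0.5,
    max_delay: float = 8.0,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Retry only transient failures; anything else propagates at once."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    for attempt in range(1, max_attempts + 1):
        try:
            return tool()
        except TransientToolError:
            if attempt == max_attempts:
                raise  # Out of attempts: surface the last transient error.
            # Exponential backoff with full jitter, capped at max_delay.
            ceiling = min(max_delay, base_delay * 2 ** (attempt - 1))
            sleep(random.uniform(0, ceiling))
    raise AssertionError("unreachable")
```

Splitting errors into two types is what cuts the thrash: a permanent failure stops the loop on the first attempt instead of burning the whole retry budget. The `sleep` parameter is injectable so the loop can be tested without waiting.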

This didn’t save me time at first (tracking a leak never does), but the shape of it felt like reduced mental effort for teams that automate the dull parts of code maintenance.

Developer tooling integrations

The way the references were tucked into routing hints tells me GPT 5.4 might be packaged to slot into existing developer stacks. Think:

- CI/CD hooks that choose speed or depth per step.

- Editor extensions that can read images at higher fidelity without external pre-processing.

- Agent frameworks that rely less on bespoke glue code.

If that’s where GPT 5.4 is going, the value won’t be in a demo: it’ll be in fewer brittle adapters. That’s the kind of upgrade you notice three weeks later when nothing breaks, and you realize you didn’t have to babysit the agent on a Friday.

What We Still Don’t Know

No benchmarks confirmed

I haven’t seen credible benchmarks tied to GPT 5.4. No eval sheets, no standardized tasks, no head-to-heads with current models. Without numbers, all we have are impressions and labels. If and when this lands, I’ll look first for small, practical tests: time-to-fix on a failing test, accuracy on reading dense screenshots, or number of retries per resolved issue.
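Those small tests are easy to score yourself, whatever model you end up pointing them at. Below is a sketch of the bookkeeping, assuming you log one record per attempted issue; the `Run` fields and the `summarize` function are my own hypothetical shape, not a standard eval format.

```python
from dataclasses import dataclass
from statistics import median


@dataclass
class Run:
    issue_id: str
    resolved: bool
    retries: int
    seconds: float  # Wall-clock time spent on this issue.


def summarize(runs: list[Run]) -> dict[str, float]:
    """Resolve rate, retries per resolved issue, and median time-to-fix."""
    resolved = [r for r in runs if r.resolved]
    if not resolved:
        nan = float("nan")
        return {
            "resolve_rate": 0.0,
            "retries_per_resolved": nan,
            "median_seconds_to_fix": nan,
        }
    return {
        "resolve_rate": len(resolved) / len(runs),
        # Retries on failed runs count too: they are effort spent
        # per issue that actually got fixed.
        "retries_per_resolved": sum(r.retries for r in runs) / len(resolved),
        "median_seconds_to_fix": median(r.seconds for r in resolved),
    }
```

I count retries from failed runs in the numerator on purpose. A model that fixes half the issues cleanly and flails on the rest should not look as cheap as one that fixes all of them.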

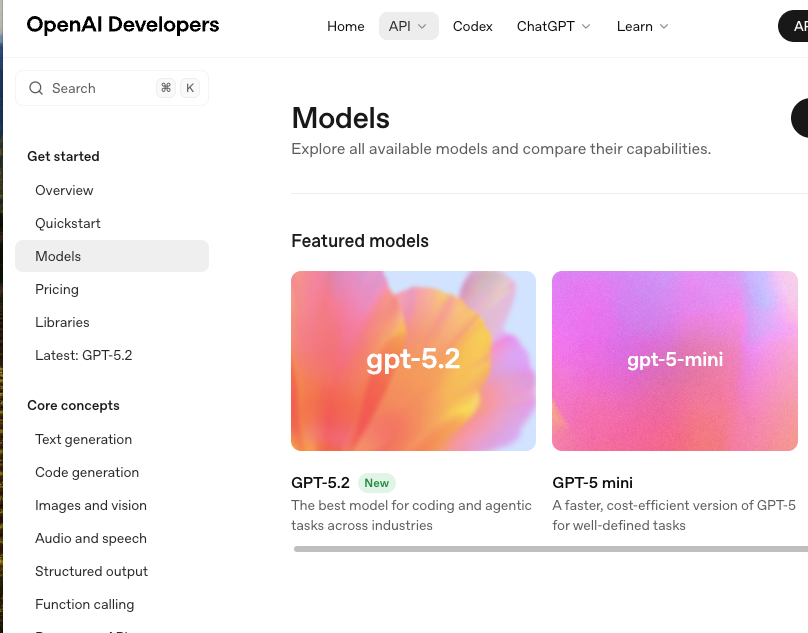

No pricing or API details

There’s nothing public about pricing or quotas. For planning, that matters more than hype. A great model that’s hard to budget doesn’t land in production. If you’re mapping scenarios today, keep placeholders in your spreadsheets and sanity-check against current OpenAI model documentation rather than leaked labels.
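If your spreadsheet lives in code, the same placeholder idea looks something like this. It’s a sketch under my own assumptions: the function name, the per-million-token pricing shape, and the 30-day month are all illustrative, and the prices stay `None` until something is actually published.

```python
from typing import Optional


def monthly_cost(
    calls_per_day: int,
    input_tokens: int,
    output_tokens: int,
    price_in_per_mtok: Optional[float],
    price_out_per_mtok: Optional[float],
    days: int = 30,
) -> Optional[float]:
    """Estimated monthly spend, or None while either price is unknown."""
    if price_in_per_mtok is None or price_out_per_mtok is None:
        # Refuse to guess: an unknown price stays unknown.
        return None
    per_call = (
        input_tokens * price_in_per_mtok + output_tokens * price_out_per_mtok
    ) / 1_000_000
    return per_call * calls_per_day * days
```

Returning `None` instead of a made-up number keeps the gap visible in every downstream total, which is the whole point of a placeholder.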

Release timeline unclear

I don’t have a date, a quarter, or even a season. The fast removal suggests internal motion, not market timing. If GPT 5.4 appears, it may show up quietly in routing tables or as a flag in agent frameworks before any big banner. Or it may change names and never be called “GPT 5.4” in public at all.

Current Status & Disclaimer (model not officially released)

As of early March 2026, GPT 5.4 hasn’t been announced or documented. I’m sharing observations from short-lived code references and the way they were handled, nothing more. This isn’t advice to re-architect anything. If you’re curious, keep an eye on stable docs, not screenshots. And if you do spot another stray reference, take a breath. Most leaks are quieter, and more useful, than the headlines suggest.

I’ll leave it here: the part that caught me wasn’t the version number. It was the small signs of care around loops and latency. If that’s where things are headed, I’m fine with less spectacle.