As generative image models continue evolving, creators want clarity on which tools deliver the best results for real design, marketing, and content workflows. WaveSpeedAI now supports both Seedream 4.5 and Google Nano Banana Pro, two powerful text-to-image models with distinct strengths. This comparison breaks down their capabilities, ideal use cases, and differences so you can select the right model for your project.

To make this the ultimate creative image showdown, we analyze typography, composition, multilingual text, realism, camera control, and consistency—all areas where these models differ significantly.

Core Differences Between Seedream 4.5 and Nano Banana Pro

To understand each model’s role, here is the clearest breakdown of their defining strengths.

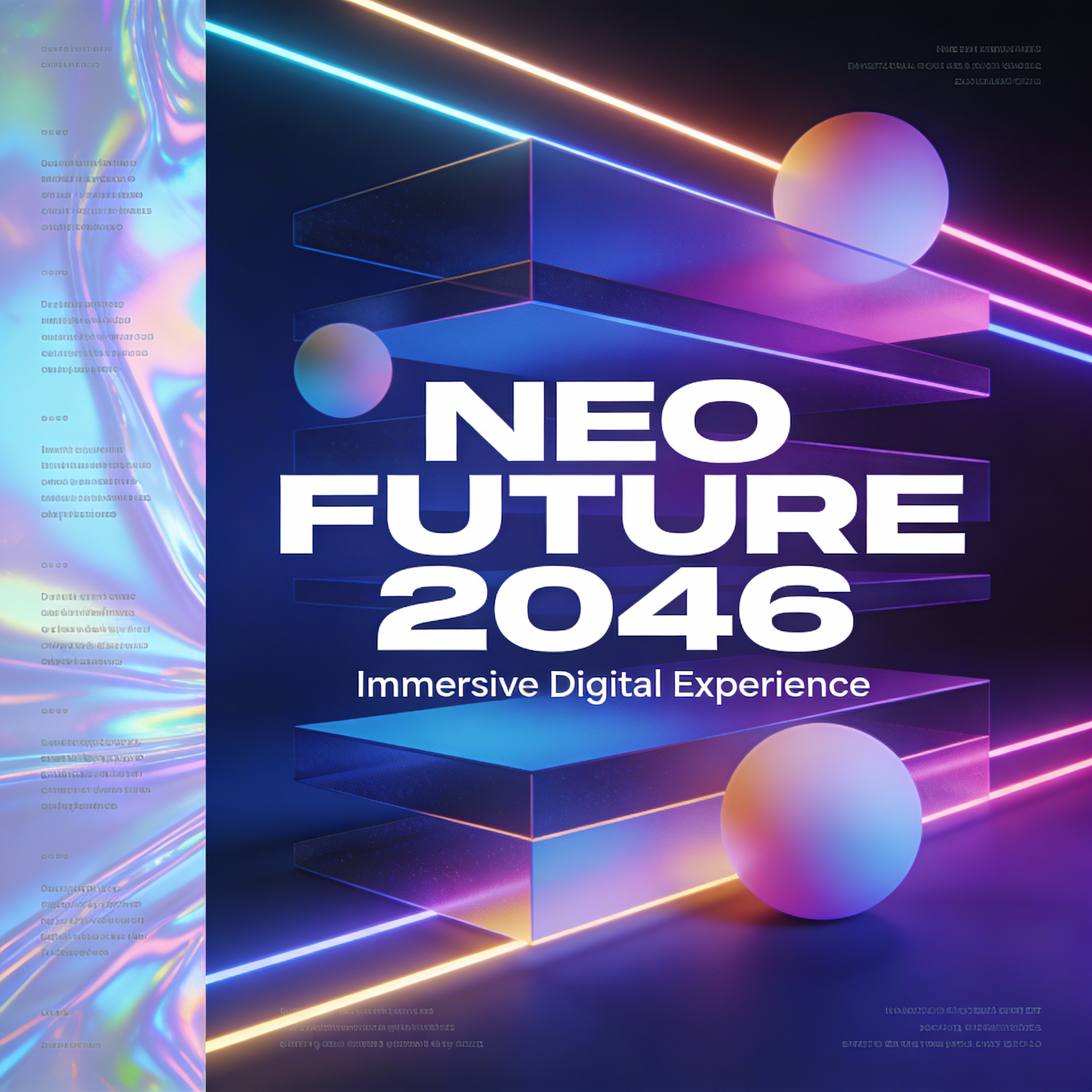

Seedream 4.5 — Design-Focused Strengths

Seedream 4.5 is built for professional graphic design, where layout, typography, and brand clarity are essential.

Key characteristics:

- Top-tier typography with sharp, legible, layout-aware text

- Poster-grade composition with clear hierarchy for title, subtitle, and body text

- High prompt adherence for structured layouts, grid systems, and brand visuals

- High-resolution output up to 4096×4096 for campaigns and commercial assets

- Best suited for posters, banners, KV campaigns, e-commerce hero shots, and UI-style visuals

Seedream 4.5 excels at poster-quality design, typography, and structured visual layouts.

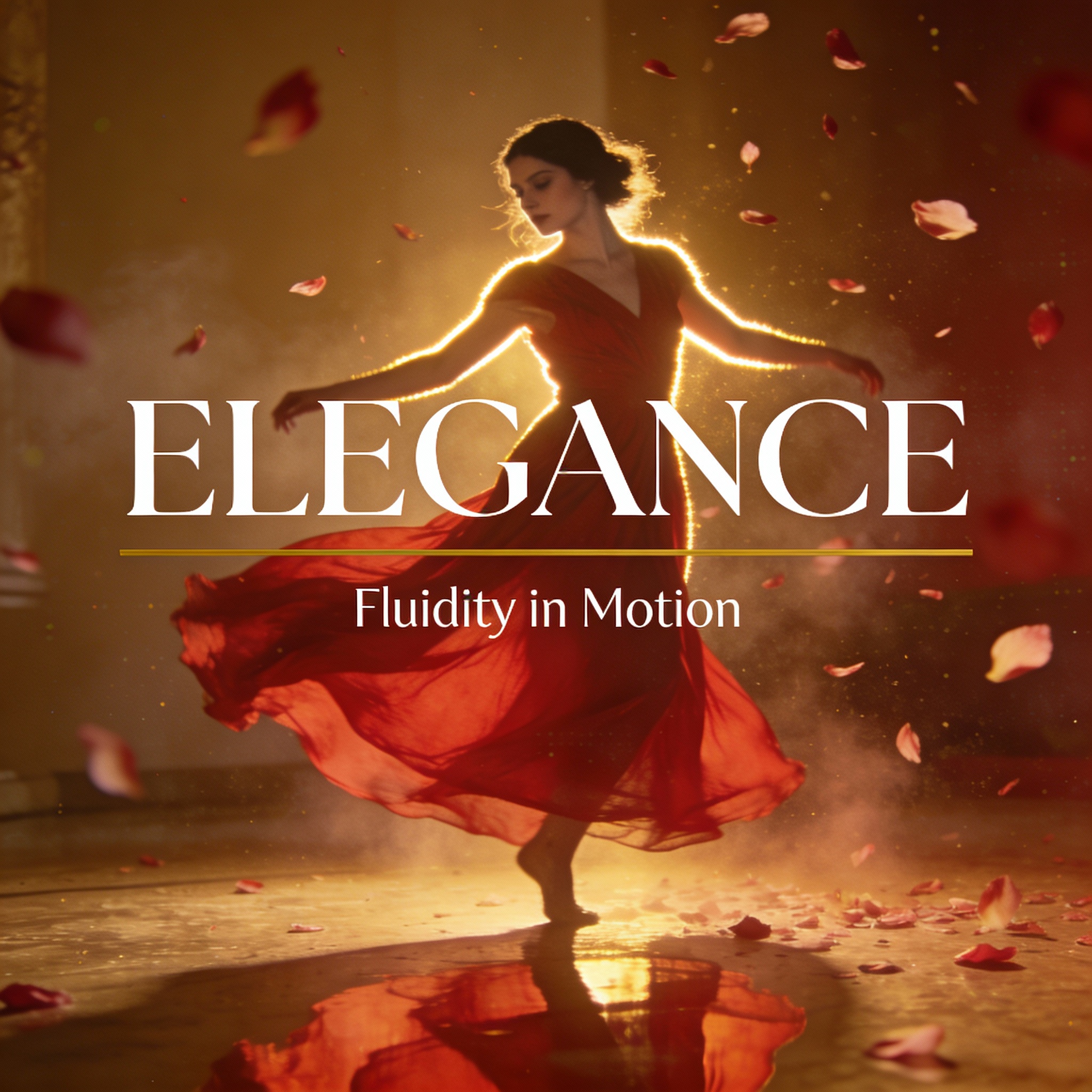

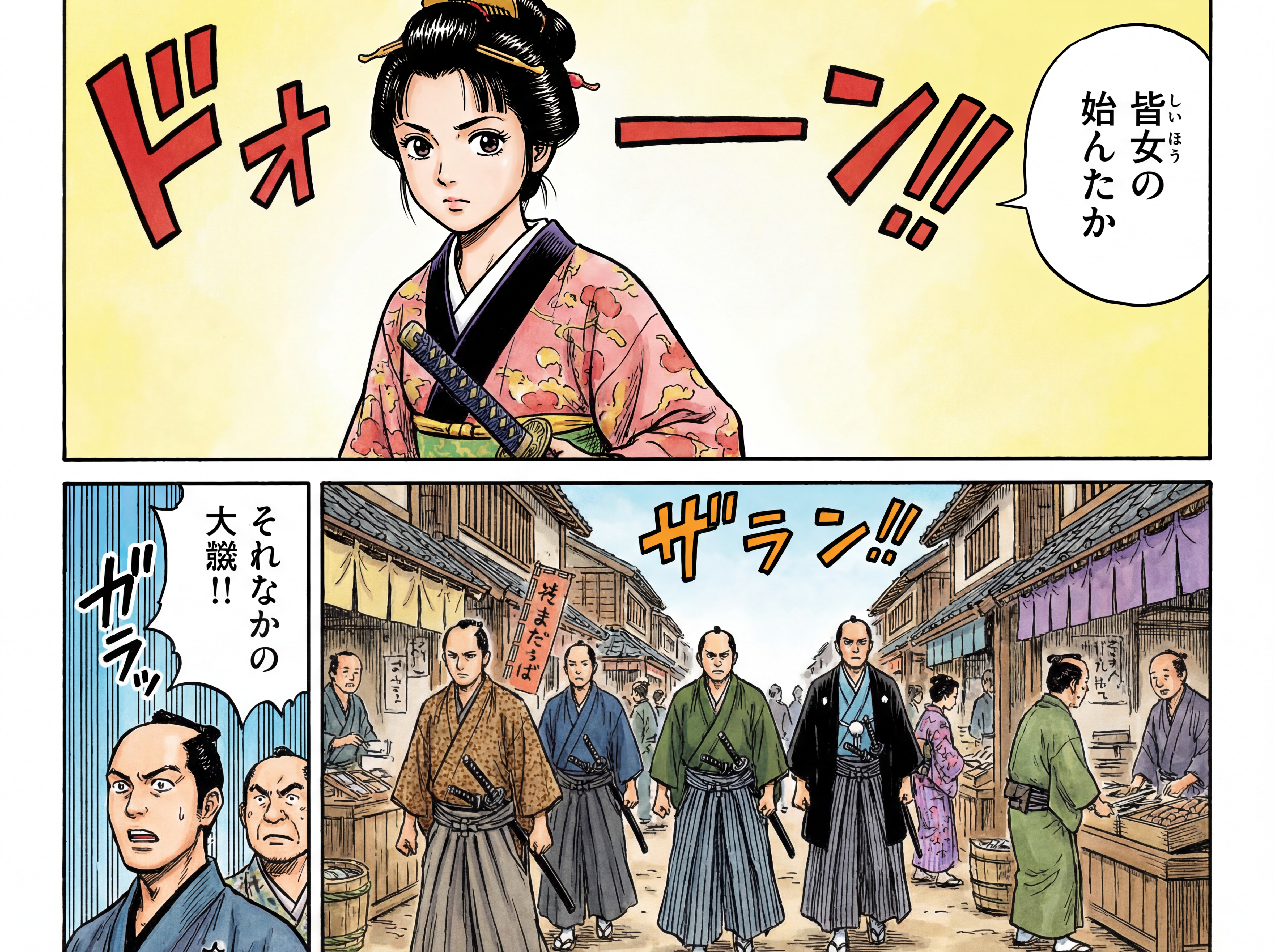

Nano Banana Pro — Photography & Multilingual Strengths

Nano Banana Pro (Gemini 3.0 Pro Image) focuses on photographic realism, multilingual text control, and semantic editing, making it more flexible across creative use cases.

Key characteristics:

- Photographic realism with camera-style parameters (lens, DOF, lighting)

- Multilingual on-image text, automatically adapted to perspective and style

- Semantic editing through natural-language instructions

- Character and style consistency, suitable for multi-image narratives

- Highly flexible aspect ratios, from 1:1 to 21:9+

👉 Nano Banana Pro excels at realistic imagery, multilingual text, and flexible scene generation.

When to Choose Seedream 4.5

Use Seedream 4.5 when your task requires:

- Clean, sharp typography

- Structured visual layout

- Branding, campaign, or marketing assets

- Poster-style designs with text hierarchy

- High-resolution visuals for print or digital ads

It is especially strong for:

- KV campaigns

- E-commerce hero images

- Logos + Titles + Subtitles

- UI-style mockups

- Landing page banners

When to Choose Nano Banana Pro

Use Nano Banana Pro when you need:

- Photographic realism

- Camera-style control: lens, angle, DOF

- Multilingual on-image text

- Scene-level semantic edits

- Strong consistency across multiple images

Perfect for:

- Product photography mockups

- Social media content

- Storyboards & concept art

- Multilingual advertising

- Lifestyle visuals

Conclusion

Seedream 4.5 and Nano Banana Pro excel in different areas—Seedream in layout-driven design and typography, Nano Banana Pro in realistic imagery and multilingual text control. Together, they cover a wide spectrum of creative needs.

WaveSpeedAI makes it effortless to run both models with instant access, no cold starts, and stable, production-ready performance.

Try them today on WaveSpeedAI → https://wavespeed.ai