Where to Try HappyHorse-1.0: Access and Availability

Looking to try HappyHorse-1.0? Here's every access option by availability — demo, API, self-hosting, and what's not live yet.

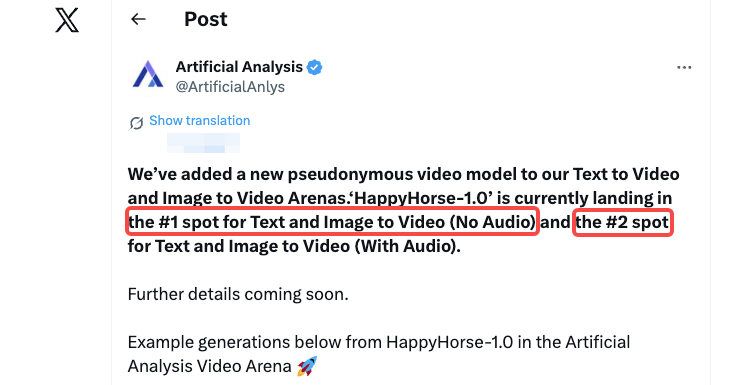

I nearly choked on my coffee when I saw the Artificial Analysis leaderboard last weekend.

A model I’d never heard of (no company name, no launch event, no press release) had just taken the top spot. Artificial Analysis confirmed that HappyHorse-1.0 landed at #1 for both Text-to-Video and Image-to-Video (No Audio) in their Arena. My immediate reaction wasn’t excitement. It was: who on earth is this?

So I did what I always do when something doesn’t add up: I mapped every single access path. Not what the marketing copy claims — what actually works right now.

Hi, I’m Dora! This article is that map.

Access Status at a Glance

Before getting into specific options, here’s the honest summary: HappyHorse-1.0 is partially accessible today, but the developer-facing infrastructure is still incomplete.

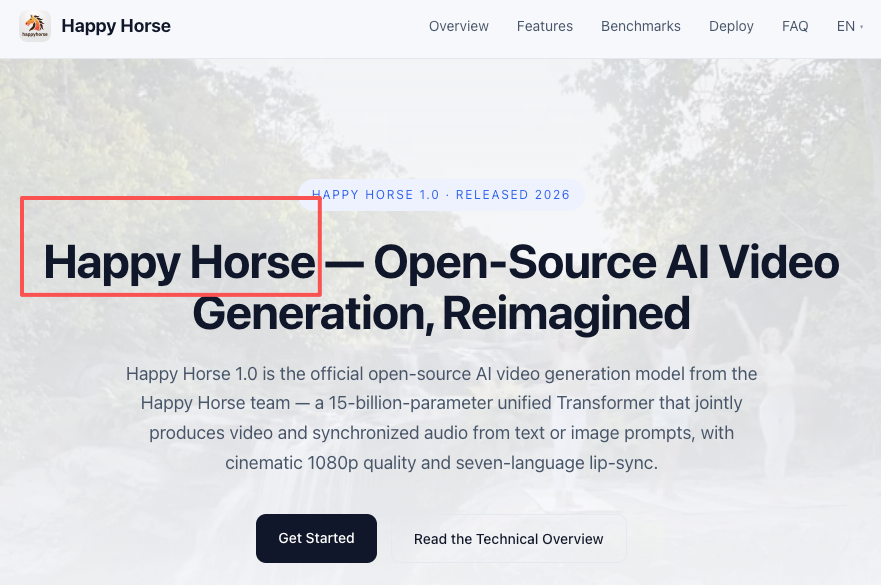

The official site at happyhorses.io has a Live Demo button, but both the GitHub repository and Model Hub are marked as “coming soon” — not accessible as of publication. That gap matters a lot depending on what you’re trying to do.

On the Artificial Analysis leaderboard, HappyHorse-1.0 currently sits at Elo 1333 for text-to-video (no audio) and Elo 1392 for image-to-video (no audio) — numbers that made a lot of people suddenly very interested in getting their hands on it. The problem is that leaderboard performance and practical access are two completely different questions right now.

What’s live vs. what’s still coming

| Access Path | Status |
|---|---|
| Official demo (happyhorses.io) | ✅ Live |
| Official API | ❌ Not announced |
| GitHub / model weights | ⏳ Coming soon |
| Third-party API (Replicate, fal.ai) | ❌ Not confirmed |
| HuggingFace Spaces demo | ❌ Not confirmed |

Why access is more complicated than a typical model launch

Most model releases follow a predictable pattern: paper → weights → API → third-party integrations, usually over a few weeks. HappyHorse-1.0 skipped that entirely. The model appeared pseudonymously on the Artificial Analysis Video Arena with no clear developer identity — community speculation suggests it may originate from Asia, possibly connected to an existing model lineage, but nothing has been officially confirmed. That opacity makes the normal “check the docs” approach useless here. You’re working with what’s actually observable.

Option 1: Official Demo (happyhorses.io)

This is the only confirmed path to try HappyHorse-1.0 today.

What it offers

The official site describes HappyHorse-1.0 as a 40-layer Transformer that processes text, video, and audio via self-attention only — no cross-attention — and covers expressive facial performance, natural speech coordination, and multilingual support across Chinese, English, Japanese, Korean, German, and French.

The live demo lets you generate from text prompts and observe the model’s behavior directly. On the Artificial Analysis Video Arena, HappyHorse-1.0 scored around Elo 1333 for no-audio text-to-video, with users noting strong camera drift, body movement, and atmospheric consistency.

Limitations

Here’s where I want to be direct: I can confirm the demo exists and is accessible. What I can’t confirm with certainty — because the official site doesn’t specify — are the exact session limits, whether outputs carry watermarks on the free tier, and what resolution the public demo runs at. Verify these yourself before building any workflow assumptions around them. The demo is a useful evaluation tool, not a production pipeline.

Who this is actually for

The live demo is the right starting point if you want to form your own opinion about HappyHorse-1.0’s motion quality before the infrastructure matures. It’s not sufficient for production workflow testing — no rate limits are published, no SLA exists, and the backend could change at any time.

Option 2 — API Access

This is the question most developers are actually asking, and the honest answer is: there is no confirmed official API as of publication.

Is there an official API?

No public API endpoint has been announced. The official site links to the demo and marks developer resources as coming soon. Without a published API, there’s no authentication model, no pricing, no rate limits, and no stability guarantee — which means you can’t build anything against it yet.

Third-party aggregators: any platforms carrying HappyHorse-1.0?

I checked Replicate, fal.ai, and HuggingFace Spaces for any HappyHorse-1.0 integration. As of publication, none of these platforms has confirmed support. This isn’t surprising: platforms like fal.ai and Replicate integrate models through an inference-provider ecosystem, which requires model weights to be publicly available first. Since the weights haven’t been released yet, aggregator support can’t arrive before they are.

If you see third-party platforms claiming HappyHorse-1.0 API access right now, approach them with caution and verify independently.

What signals to watch for an official API announcement

Given that the GitHub and Model Hub are explicitly listed as “coming soon” on the official site, those are the clearest indicators. When those pages go live, API access and third-party integrations typically follow within days to weeks. Watch the Artificial Analysis Video Arena for model status updates, and the official site for infrastructure announcements.
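If you would rather not refresh the page by hand, the "coming soon" signal can be checked with a few lines of Python. This is only a sketch under assumptions: the `https://` scheme, the literal marker text "coming soon", and the idea that the marker appears in the raw HTML (rather than being rendered client-side) are all guesses on my part, not documented behavior of the site.

```python
import urllib.request

# Domain taken from this article; the https scheme is an assumption.
OFFICIAL_SITE = "https://happyhorses.io"
# Assumed marker text; the real page may word or render it differently.
MARKER = "coming soon"


def still_coming_soon(page_html: str, marker: str = MARKER) -> bool:
    """Return True if the page text still contains the placeholder marker."""
    return marker in page_html.lower()


def fetch_page(url: str, timeout: float = 10.0) -> str:
    """Download a page and decode it leniently as UTF-8."""
    with urllib.request.urlopen(url, timeout=timeout) as resp:
        return resp.read().decode("utf-8", errors="replace")
```

Calling `still_coming_soon(fetch_page(OFFICIAL_SITE))` once a day is plenty. A `False` result only means the marker text is gone, so confirm by opening the site yourself before drawing conclusions.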

Option 3 — Self-Hosting (Pending Weight Release)

GitHub and HuggingFace: marked “coming soon”

Both the GitHub repository and Model Hub are listed as “coming soon” on the official HappyHorse-1.0 site — they exist as placeholders but aren’t accessible. This means there is currently no legitimate path to self-host HappyHorse-1.0. Anyone offering “local weights” before an official release should be treated with significant skepticism.

Hardware estimates when weights drop

This is what I can help you prepare for. Based on what’s been described about the architecture — a 40-layer unified Transformer processing text, video, and audio via self-attention, with first and last 4 layers using modality-specific projections and the middle 32 layers sharing parameters across modalities — this is a substantial model. For reference, video generation models of comparable architectural complexity (like SkyReels-V2 at 14B parameters) typically require at minimum an RTX 4090 with aggressive quantization and offloading enabled, or multiple A100s for comfortable inference. Expect similar requirements here — though exact VRAM needs can’t be confirmed until weights are public.
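To make that concrete, here is the back-of-envelope arithmetic behind those hardware tiers. It is a rough sketch, not a spec: HappyHorse-1.0's parameter count has not been published, so the 14B figure below is borrowed from the SkyReels-V2 comparison purely as a reference point, and the estimate covers weights only (activations, text encoders, and the VAE add more on top).

```python
def weight_memory_gib(num_params: float, bits_per_param: int) -> float:
    """Memory needed just to hold the model weights, in GiB."""
    bytes_total = num_params * bits_per_param / 8
    return bytes_total / (1024 ** 3)


# Reference scale only: HappyHorse-1.0's real size is unknown.
PARAMS_14B = 14e9

for label, bits in [("fp16/bf16", 16), ("int8", 8), ("4-bit", 4)]:
    print(f"{label:>9}: {weight_memory_gib(PARAMS_14B, bits):.1f} GiB")
```

At 16-bit precision a 14B model needs about 26 GiB for weights alone, which already exceeds the 24 GB on an RTX 4090. That is why a single consumer card only works with quantization and offloading.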

Community mirrors: how to evaluate trust

If community-hosted versions appear before official weights drop, here’s a quick evaluation framework before using them: Does the mirror link back to a verifiable official release? Is the file hash published and checkable? Does the repository have meaningful commit history? Anonymous mirrors with no provenance chain are not worth the risk.
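The hash check is the one step here you can fully automate. A minimal sketch, assuming the publisher lists a SHA-256 digest (the algorithm is my assumption; use whichever one the official release actually publishes):

```python
import hashlib
from pathlib import Path


def sha256_of_file(path: Path, chunk_size: int = 1 << 20) -> str:
    """Hash a file in chunks so multi-gigabyte weights don't fill RAM."""
    digest = hashlib.sha256()
    with path.open("rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def matches_published_hash(path: Path, published_hex: str) -> bool:
    """Compare the local file's digest with the published hex string."""
    return sha256_of_file(path) == published_hex.strip().lower()
```

A matching hash only proves the file is the one the publisher listed. It says nothing about whether that publisher is trustworthy, so the provenance questions above still apply.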

While You Wait: Alternatives You Can Access Today

This section is the most practically useful part of the article, because these three models sit at or near HappyHorse-1.0 on the leaderboard and each offers something you can use today. How much access you get differs by model, so read the caveats under each one.

Seedance 2.0 via Dreamina — strong leaderboard performance, public consumer access

Dreamina Seedance 2.0 currently sits at Elo 1273 for no-audio text-to-video and Elo 1355 for no-audio image-to-video, making it the closest competitor to HappyHorse-1.0 in blind voting. The consumer access path is live through dreamina.capcut.com, where new accounts receive free daily generation credits.
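How big is that gap in practice? Under the standard Elo formula (400-point logistic scale), a rating difference converts into an expected head-to-head preference rate. Whether Artificial Analysis uses exactly this scale is an assumption on my part, so treat the output as a rough guide. The HappyHorse-1.0 ratings are the 1333 and 1392 figures quoted earlier in this article.

```python
def elo_expected_score(rating_a: float, rating_b: float) -> float:
    """Expected share of matchups A wins against B under standard Elo."""
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / 400))


# HappyHorse-1.0 vs. Seedance 2.0, ratings as quoted in this article.
t2v = elo_expected_score(1333, 1273)  # text-to-video, no audio
i2v = elo_expected_score(1392, 1355)  # image-to-video, no audio

print(f"T2V expected preference: {t2v:.1%}")
print(f"I2V expected preference: {i2v:.1%}")
```

That works out to roughly 59% for text-to-video and 55% for image-to-video: a real lead in blind voting, but not one where Seedance 2.0 loses most matchups by a wide margin.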

One important caveat: the access situation is complex. The official BytePlus API remains paused as of April 2026 following copyright disputes with major Hollywood studios, so there’s no clean developer API path right now. Consumer access through Dreamina and CapCut is operational, but if you need programmatic integration, check third-party providers like PiAPI for current status before assuming API availability. Dreamina itself is a web-based UI only — it does not expose a direct API, so UI-based testing is your confirmed path today.

Kling 3.0 via API — stable, documented, production-grade

If you need something you can actually ship against today, Kling 3.0 is the most straightforward option. API access is aimed at teams who want to embed Kling 3.0 into internal tools or custom pipelines, and multiple providers — including PiAPI, Kie AI, and the official KlingAI developer platform — offer documented endpoints with published pricing.

Kling 3 supports text-to-video and image-to-video, multi-shot mode with up to 6 scenes, first and end frame image control, and flexible durations from 3 to 15 seconds. It’s not the #1 model on the leaderboard, but it’s the model with a production-ready API you can start using today.

SkyReels V4 — #3 on T2V leaderboard, check current API status

SkyReels V4, announced April 3, 2026, co-synthesizes 1080p/32FPS video with semantically aligned audio using a dual-stream Multimodal Diffusion Transformer. It currently holds the #3 spot on the Artificial Analysis text-to-video leaderboard with audio.

The weight situation here mirrors HappyHorse-1.0. SkyworkAI has consistently open-sourced previous SkyReels versions (V1 through V3 all shipped weights on HuggingFace), but V4 remains report-only for now with no published release date for weights or code. Atlas Cloud has announced upcoming integration. If you need SkyReels access today, V3 weights are available on the SkyworkAI GitHub — useful for understanding the model family while V4 infrastructure catches up.

FAQ

Is there a free trial for HappyHorse-1.0?

The official demo at happyhorses.io is publicly accessible. Whether it requires account creation or has session limits isn’t currently documented — verify this directly on the site before assuming unlimited free access.

Can I access HappyHorse-1.0 through any existing API provider?

Not as of publication. Replicate, fal.ai, and HuggingFace Spaces don’t show confirmed HappyHorse-1.0 support. API aggregators depend on model weights being publicly available first, and those haven’t been released yet.

When will the HappyHorse-1.0 API be available?

No timeline has been announced. The clearest signal will be when the GitHub repository and Model Hub — both currently marked “coming soon” on the official site — go live. That’s the trigger to watch.

What hardware is needed to self-host HappyHorse-1.0?

Weights aren’t public yet, so exact requirements can’t be confirmed. Based on the described architecture (40-layer unified Transformer with shared middle layers), expect requirements similar to other large-scale video models: a minimum of one high-VRAM GPU (24GB+) with quantization enabled, or multi-GPU setups for comfortable inference. Plan for this, but don’t spec hardware until official weight documentation is published.

Is the live demo representative of full model quality?

Demos sometimes run at reduced resolution or with rate limiting that affects generation quality. The Artificial Analysis leaderboard scores are based on blind user votes in the Video Arena, which is a separate environment from the public demo. Treat demo outputs as directional, not definitively representative of production quality.

What I’d Actually Do Right Now

If you’re a developer or AI video team trying to decide what to do with HappyHorse-1.0 today: test it through the official demo to form your own quality opinion, then use Kling 3.0 for anything that needs to ship. Keep the official site bookmarked: when HappyHorse-1.0’s GitHub goes from “coming soon” to live, the access picture will change fast.

I’m watching it. But I’m not holding production workflows for it yet.
