MaxClaw vs OpenClaw: Which Should You Actually Use?

Hello, I’m Dora. Over a week, I tried MaxClaw and OpenClaw side by side on two real tasks: a support summarizer that writes internal notes, and a tiny research aide that pulls quotes into a brief. Nothing fancy, you know. I kept a short log of setup time, hiccups, and where my shoulders finally dropped. This is me sorting out MaxClaw vs OpenClaw in plain terms, not hype.

The One-Sentence Difference

MaxClaw is a managed cloud service that handles the plumbing for you; OpenClaw is the same idea, but self-hosted, so you’re responsible for the pipes. That’s the real fork in the road: convenience and constraints vs control and chores.

MaxClaw — The Managed Cloud Option

Setup time: under 20 seconds vs hours

I timed it twice. From account to first working endpoint in MaxClaw: 18 seconds on my second run (28 on the first while I hesitated over a naming field). I dropped in an API key, picked a starter template for message routing, and I was done. If you want to see the exact onboarding flow step by step, this guide on how to set up MaxClaw walks through it in under five minutes. No ports. No env files. I pointed my small support summarizer at it and it just… worked. There’s a small relief in not touching Docker on a Tuesday.

With OpenClaw, this same path took me a couple of hours, mostly because I poked at defaults I didn’t need yet. That’s on me, but it’s also the self-hosted tax: you’ll tinker even when you don’t have to.

Cost: subscription vs unpredictable API bills

MaxClaw is a subscription. You can see the ceiling before you start. For teams, that predictability matters more than the theoretical savings of self-hosting. The hidden win wasn’t dollars: it was fewer tabs and fewer places to monitor. Consolidation is a kind of savings.

OpenClaw rides directly on model APIs you bring (or local models you run). On paper, that can be cheaper at low volume. In practice, I saw small spikes: a few long-context calls on GPT-4 ran hotter than I expected. Nothing dramatic, but classic “why is this endpoint suddenly expensive?” energy. If you’re disciplined with rate limits and caching, you can tame it. If not, costs wander.
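On the self-hosted side, “disciplined with rate limits and caching” can start as small as this. It’s a minimal sketch, not OpenClaw’s API: it assumes a hypothetical `callModel` function of your own that returns the reply text and the tokens used, serves repeated prompts from an in-memory cache, and refuses new calls once a daily token budget is spent. The class name and budget figure are my own illustration, and it’s kept synchronous for brevity; a real provider call would be async.

```typescript
// Hypothetical signature for your own model-calling function: returns the reply
// text and the number of tokens the call consumed. Synchronous for brevity.
type ModelCall = (model: string, prompt: string) => { text: string; tokens: number };

// Serves repeated prompts from memory and refuses new calls once the daily token
// budget is used up. The check runs before each call, so the last call can overshoot.
class BudgetedCache {
  private readonly cache = new Map<string, string>();
  private spentToday = 0;
  private readonly callModel: ModelCall;
  private readonly dailyTokenBudget: number;

  constructor(callModel: ModelCall, dailyTokenBudget: number) {
    this.callModel = callModel;
    this.dailyTokenBudget = dailyTokenBudget;
  }

  ask(model: string, prompt: string): string {
    // JSON-encode the pair so different (model, prompt) combinations never collide.
    const key = JSON.stringify([model, prompt]);
    const hit = this.cache.get(key);
    if (hit !== undefined) return hit; // cache hit: no new spend

    if (this.spentToday >= this.dailyTokenBudget) {
      throw new Error(`Daily token budget of ${this.dailyTokenBudget} reached`);
    }
    const { text, tokens } = this.callModel(model, prompt);
    this.spentToday += tokens;
    this.cache.set(key, text);
    return text;
  }

  // Call this once a day from whatever scheduler you use (not shown).
  resetDay(): void {
    this.spentToday = 0;
  }

  get tokensSpent(): number {
    return this.spentToday;
  }
}
```

An in-memory cache disappears on restart and isn’t shared between processes; that’s fine for a tiny aide, but a busier setup would want something persistent.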

What you trade off (model flexibility, full control)

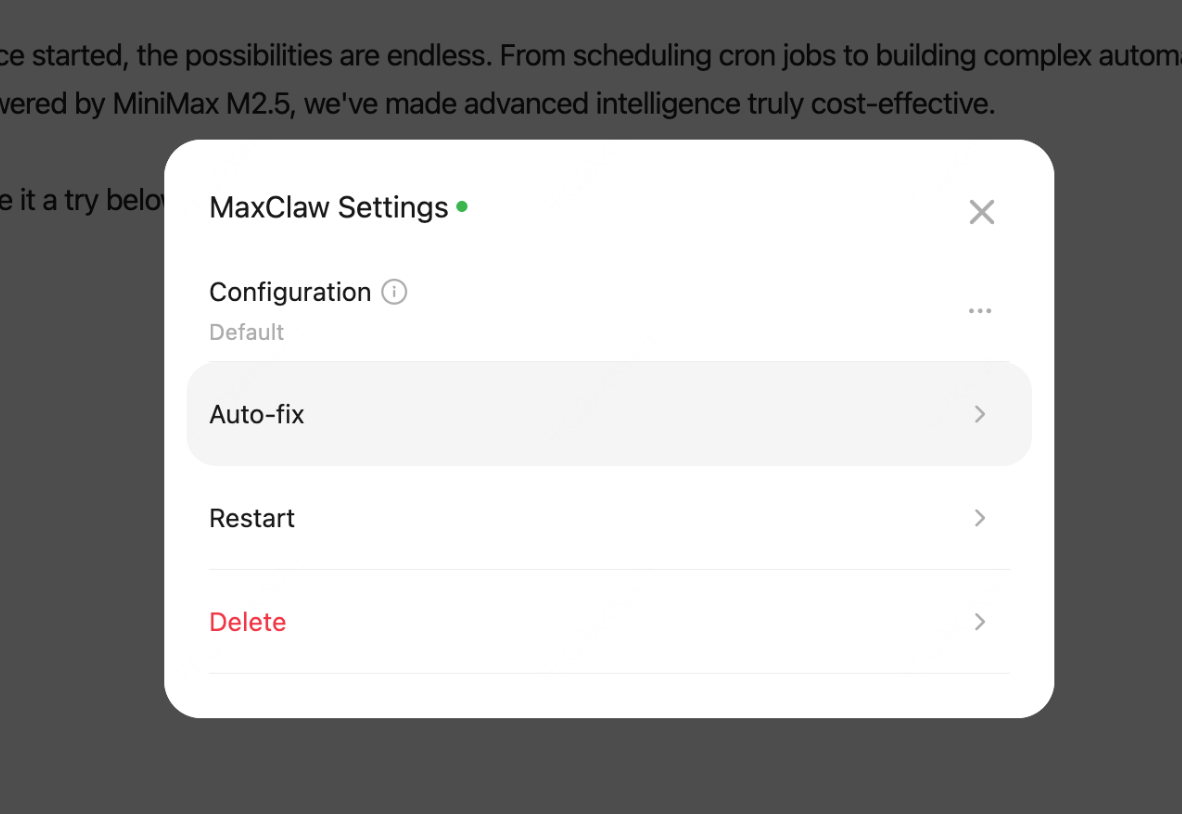

MaxClaw gave me speed and fewer decisions. The trade-off is obvious: it curates models and features. You accept their menu, their observability layer, and their rollout pace. When I tried swapping the summarizer from GPT-4 to Claude mid-week, I had to follow MaxClaw’s lane for that switch. It was fine, just not as open-ended as my own stack.

Control matters when you care about edge behavior. I couldn’t patch a weird tokenization edge case the way I would in my own code. On the other hand, I didn’t have to maintain a queue worker or a retry policy. Less power, fewer papercuts. Pick your poison.
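For a sense of what “a retry policy” means when it’s yours to maintain, here is a minimal sketch of retries with exponential backoff and full jitter. The `withRetry` and `backoffDelayMs` names, the defaults, and the injectable `sleep` are my own illustration, not something either product ships.

```typescript
// Delay before the retry that follows failed attempt n (0-based):
// a random value between 0 and min(cap, base * 2^n) ("full jitter").
// The defaults are illustrative, not tuned for any provider.
function backoffDelayMs(
  attempt: number,
  baseMs = 500,
  capMs = 8000,
  random: () => number = Math.random,
): number {
  const ceiling = Math.min(capMs, baseMs * 2 ** attempt);
  return Math.floor(random() * ceiling);
}

// Runs an async operation up to maxAttempts times, sleeping between failures,
// and rethrows the last error if every attempt fails.
async function withRetry<T>(
  op: () => Promise<T>,
  maxAttempts = 4,
  sleep: (ms: number) => Promise<void> = (ms) => new Promise<void>((r) => setTimeout(r, ms)),
): Promise<T> {
  let lastError: unknown;
  for (let attempt = 0; attempt < maxAttempts; attempt++) {
    try {
      return await op();
    } catch (err) {
      lastError = err;
      // No sleep after the final attempt; we are about to give up.
      if (attempt < maxAttempts - 1) await sleep(backoffDelayMs(attempt));
    }
  }
  throw lastError;
}
```

A real policy would also check whether an error is worth retrying (rate limits, timeouts) before trying again, and that judgment is exactly the upkeep MaxClaw took off my plate.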

OpenClaw — The Self-Hosted Option

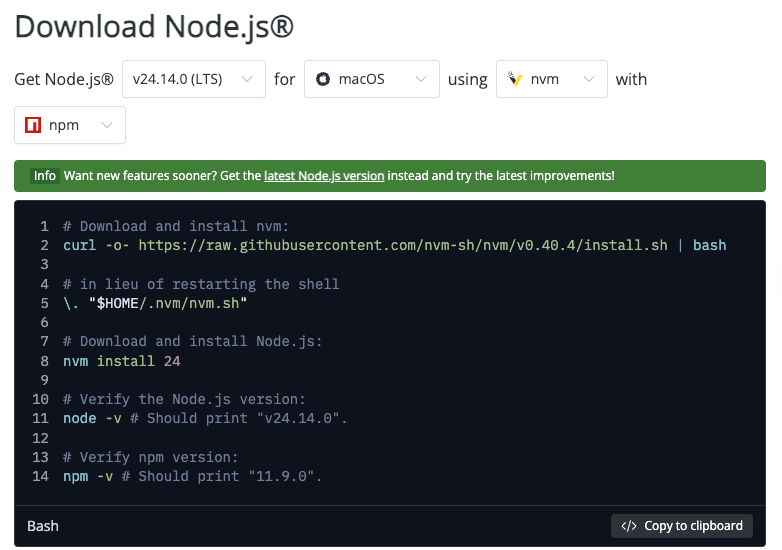

What you actually need: Node.js, 1.5GB RAM, a server

I set this up on a small Ubuntu VM with 2 vCPU and 2GB RAM. You’ll need Node.js (I used v20, from the official Node.js downloads page), about 1.5GB of free memory to be comfortable, and somewhere to run it (a basic cloud instance is fine). Add environment variables, a reverse proxy if you want TLS, and a process manager. I used PM2. Nothing wild, just work.

Two snags from my notes: I forgot to open the healthcheck path on the firewall (5 minutes lost), and I mixed up an environment variable name (10 minutes reading logs). Not deal-breakers, but real.
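The environment-variable mix-up is the kind of snag a startup check catches in seconds instead of ten minutes of log reading. Below is a minimal sketch that reports each missing required variable and suggests near-miss names it did find, using edit distance. The function names are my own, and I’m not quoting OpenClaw’s actual configuration keys; any variable names you pass in are yours.

```typescript
// Classic dynamic-programming (Levenshtein) edit distance between two strings.
function editDistance(a: string, b: string): number {
  const dp: number[][] = Array.from({ length: a.length + 1 }, (_, i) =>
    Array.from({ length: b.length + 1 }, (_, j) => (i === 0 ? j : j === 0 ? i : 0)),
  );
  for (let i = 1; i <= a.length; i++) {
    for (let j = 1; j <= b.length; j++) {
      const cost = a[i - 1] === b[j - 1] ? 0 : 1;
      dp[i][j] = Math.min(dp[i - 1][j] + 1, dp[i][j - 1] + 1, dp[i - 1][j - 1] + cost);
    }
  }
  return dp[a.length][b.length];
}

// Returns one message per missing (or empty) required variable, with a
// "did you mean" hint for set variables within maxDistance edits of the name.
function checkEnv(
  required: string[],
  env: Record<string, string | undefined>,
  maxDistance = 3,
): string[] {
  const present = Object.keys(env).filter((k) => env[k] !== undefined && env[k] !== "");
  const problems: string[] = [];
  for (const name of required) {
    if (present.includes(name)) continue;
    const near = present.filter((k) => editDistance(k, name) <= maxDistance);
    problems.push(
      near.length > 0 ? `${name} is missing (did you mean ${near.join(", ")}?)` : `${name} is missing`,
    );
  }
  return problems;
}
```

At startup you’d call `checkEnv([...your required names], process.env)` and exit with the messages if the list isn’t empty. Short names will pick up noisy suggestions at a distance of 3, so you may want a smaller threshold.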

Full model flexibility (Claude, GPT-4, etc.)

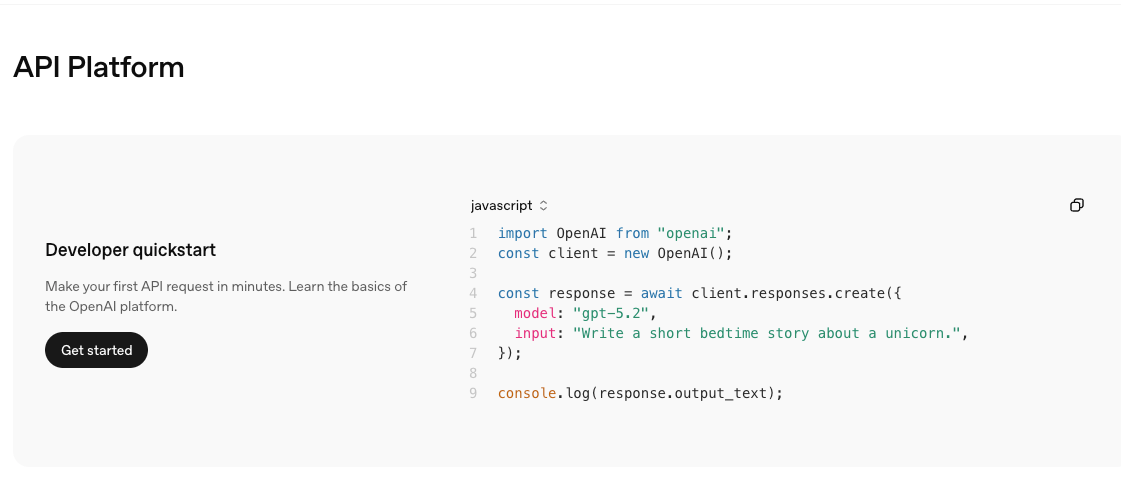

Once running, OpenClaw let me wire in whichever model made sense. For the research aide, I switched between Claude 3.5 Sonnet (snappy, strong on quoting) and GPT-4 Turbo (steadier formatting). If you live in multiple model worlds, this freedom feels normal and necessary. You just point keys at the router and go. For docs, Anthropic’s API reference and OpenAI’s API docs covered the edge cases I hit.
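“Pointing keys at the router” can be as simple as a table of per-task model preferences with a fallback. This is a minimal sketch under my own assumptions: the subtask names mirror how I split the research aide (quoting vs formatting), a provider counts as available only if its key is set, and the model labels are plain strings, not necessarily exact API model IDs.

```typescript
type Provider = "anthropic" | "openai";

interface ModelChoice {
  provider: Provider;
  model: string; // illustrative label, not necessarily a valid API model ID
}

// Hypothetical routing table: preferred models per task, in order.
const routes: Record<string, ModelChoice[]> = {
  "extract-quotes": [
    { provider: "anthropic", model: "claude-3.5-sonnet" },
    { provider: "openai", model: "gpt-4-turbo" },
  ],
  "format-brief": [
    { provider: "openai", model: "gpt-4-turbo" },
    { provider: "anthropic", model: "claude-3.5-sonnet" },
  ],
};

// Picks the first preferred model whose provider has a key configured.
function pickModel(task: string, keys: Partial<Record<Provider, string>>): ModelChoice {
  const candidates = routes[task];
  if (!candidates) throw new Error(`No route for task "${task}"`);
  const choice = candidates.find((c) => Boolean(keys[c.provider]));
  if (!choice) throw new Error(`No configured provider for task "${task}"`);
  return choice;
}
```

Swapping models then means editing one table entry rather than touching call sites.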

Who genuinely benefits from self-hosting

- Developers who want to instrument every step: their own logging, their own retries, their own redaction.

- Teams whose compliance rules simply favor owning the box.

- People who enjoy tuning prompt pipelines and caching at the router layer.
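Owning redaction can start as a pre-processing pass that runs before any text leaves your server. A minimal sketch, assuming regex masking is good enough for your data: the two patterns below are my own loose approximations for emails and phone-like numbers, they will miss plenty and also hit things like dates, so treat this as a starting point rather than a compliance control.

```typescript
// Each rule pairs a placeholder label with a global pattern whose matches it replaces.
const redactionRules: Array<{ label: string; pattern: RegExp }> = [
  { label: "[EMAIL]", pattern: /[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}/g },
  // Loose "phone" match: optional +, then a run of at least 8 digits, spaces, dots,
  // or dashes that starts and ends with a digit (this also catches dates and IDs).
  { label: "[PHONE]", pattern: /\+?\d[\d\s.-]{6,}\d/g },
];

// Applies the rules in order and reports how many spans were masked.
function redact(text: string): { text: string; count: number } {
  let count = 0;
  let out = text;
  for (const { label, pattern } of redactionRules) {
    out = out.replace(pattern, () => {
      count++;
      return label;
    });
  }
  return { text: out, count };
}
```

Logging the `count` alongside each request gives you a cheap signal for how often sensitive-looking text shows up at all.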

If you only need “one endpoint that behaves,” self-hosting can be overkill. It shines when you’ll keep evolving the stack and want the freedom to swap pieces without waiting on a vendor roadmap.

Side-by-Side Comparison Table

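Here’s the quick view I wish I had before I started.

| | MaxClaw | OpenClaw |
|---|---|---|
| Hosting | Managed cloud | Self-hosted on your own server |
| Setup time (my runs) | 18 to 28 seconds to a first working endpoint | A couple of hours |
| What you need | An account and an API key | Node.js (v20 in my test), about 1.5GB free RAM, a server, env vars, a reverse proxy for TLS, a process manager |
| Cost model | Subscription with a visible ceiling | Model APIs you bring (or local models); can spike |
| Model choice | Curated menu | Any provider you wire in (Claude, GPT-4, others) |
| Control | Limited; no patching edge cases yourself | Full: your own logging, retries, redaction |
| Upkeep | Handled for you | Logs, dependency updates, cost monitoring |
| Ops playbooks | A few dashboard clicks | Scripts and shell aliases |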

I wrote two tiny playbooks during testing: one for incident checks (what to look at when outputs drift) and one for cost sanity (logs to sample weekly). With MaxClaw, those playbooks shrank to a few dashboard clicks. With OpenClaw, they’re scripts and shell aliases. Neither is wrong. It’s just where the time goes.
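On the OpenClaw side, a cost-sanity script boils down to something like this: read a week of request logs, total the estimated spend per endpoint, and flag calls far above that endpoint’s median. It’s a minimal sketch that assumes a hypothetical JSON-lines log with `endpoint`, `model`, `inputTokens`, and `outputTokens` fields; the model name and per-token prices are placeholders, not real rates, so you’d fill in your provider’s current pricing.

```typescript
interface LogEntry {
  endpoint: string;
  model: string;
  inputTokens: number;
  outputTokens: number;
}

// Placeholder prices in USD per 1M tokens. NOT real rates; replace with your provider's pricing.
const pricePerMillion: Record<string, { input: number; output: number }> = {
  "model-a": { input: 1, output: 4 },
};

function costOf(e: LogEntry): number {
  const p = pricePerMillion[e.model];
  if (!p) throw new Error(`No price configured for ${e.model}`);
  return (e.inputTokens * p.input + e.outputTokens * p.output) / 1_000_000;
}

function median(xs: number[]): number {
  const s = [...xs].sort((a, b) => a - b);
  const mid = Math.floor(s.length / 2);
  return s.length % 2 ? s[mid] : (s[mid - 1] + s[mid]) / 2;
}

// Totals per endpoint, plus every call costing more than `factor` times
// the median call for the same endpoint.
function weeklyCostReport(jsonLines: string, factor = 5) {
  const entries: LogEntry[] = jsonLines
    .split("\n")
    .filter((line) => line.trim() !== "")
    .map((line) => JSON.parse(line) as LogEntry);

  const byEndpoint = new Map<string, LogEntry[]>();
  for (const e of entries) {
    byEndpoint.set(e.endpoint, [...(byEndpoint.get(e.endpoint) ?? []), e]);
  }

  const totals: Record<string, number> = {};
  const outliers: LogEntry[] = [];
  byEndpoint.forEach((list, endpoint) => {
    const costs = list.map(costOf);
    totals[endpoint] = costs.reduce((a, b) => a + b, 0);
    const typical = median(costs);
    list.forEach((e, i) => {
      if (costs[i] > factor * typical) outliers.push(e);
    });
  });
  return { totals, outliers };
}
```

Comparing against the median rather than the mean keeps one runaway long-context call from hiding itself by inflating the baseline.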

Real Decision Guide

Choose MaxClaw if…

- You want a working endpoint in minutes, not this afternoon.

- Predictable billing matters more than squeezing every API dollar.

- You’d rather trade some model flexibility for fewer moving parts.

- Your use case is steady (summaries, routing, light agents) and you value built-in observability over custom metrics.

- You don’t have a person who enjoys maintaining infra, or you are that person and you’d like your weekends back.

Choose OpenClaw if…

- You need full control over model selection, token limits, and retries.

- Compliance or data residency nudges you toward your own server.

- You’re iterating fast and want to own the pipeline: caching, guards, evals, the lot.

- You have the time (and temperament) to keep logs, update dependencies, and monitor costs.

- You plan to experiment with multiple providers (Claude, GPT-4, others) and don’t want a vendor’s menu deciding your options.

The Hybrid Approach (Best of Both Worlds?)

What actually stuck for me was a split. I kept MaxClaw for the support summarizer: it’s predictable and low-drama, and the managed logs helped me catch a prompt drift in under five minutes. I moved the research aide to OpenClaw so I could hop between models without waiting on anyone. The boundary is simple: stable tasks go managed, experimental ones live on my box.
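That boundary is simple enough to write down as a rule. A minimal sketch, assuming you tag each task as stable or experimental yourself; the base URLs are hypothetical placeholders, not real MaxClaw or OpenClaw addresses.

```typescript
type Stability = "stable" | "experimental";

interface Task {
  name: string;
  stability: Stability;
}

// Hypothetical base URLs; substitute your managed endpoint and self-hosted host.
const backends: Record<Stability, string> = {
  stable: "https://managed.example.com/v1",
  experimental: "http://localhost:3000",
};

// Stable tasks go to the managed service; experimental ones stay on the self-hosted box.
function backendFor(task: Task): string {
  return backends[task.stability];
}
```

Promoting a task from experimental to stable is then a one-word change in its tag.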

Does this add another place to check? Yes. But it also lowers pressure. If one side needs maintenance, the other keeps humming. I don’t think hybrid is “best”; it’s just calm. And calm tends to age well.

Last note from the week: the tools faded into the background once the routes were set. That’s my quiet test for fit. If I forget which one I’m using while I’m getting work done, it’s probably the right choice for that job.