Is HappyHorse-1.0 Open Source? What We Can Verify

HappyHorse-1.0 claims to be open source, but its GitHub and HuggingFace links aren't live yet. Here's what's verified and what to watch.

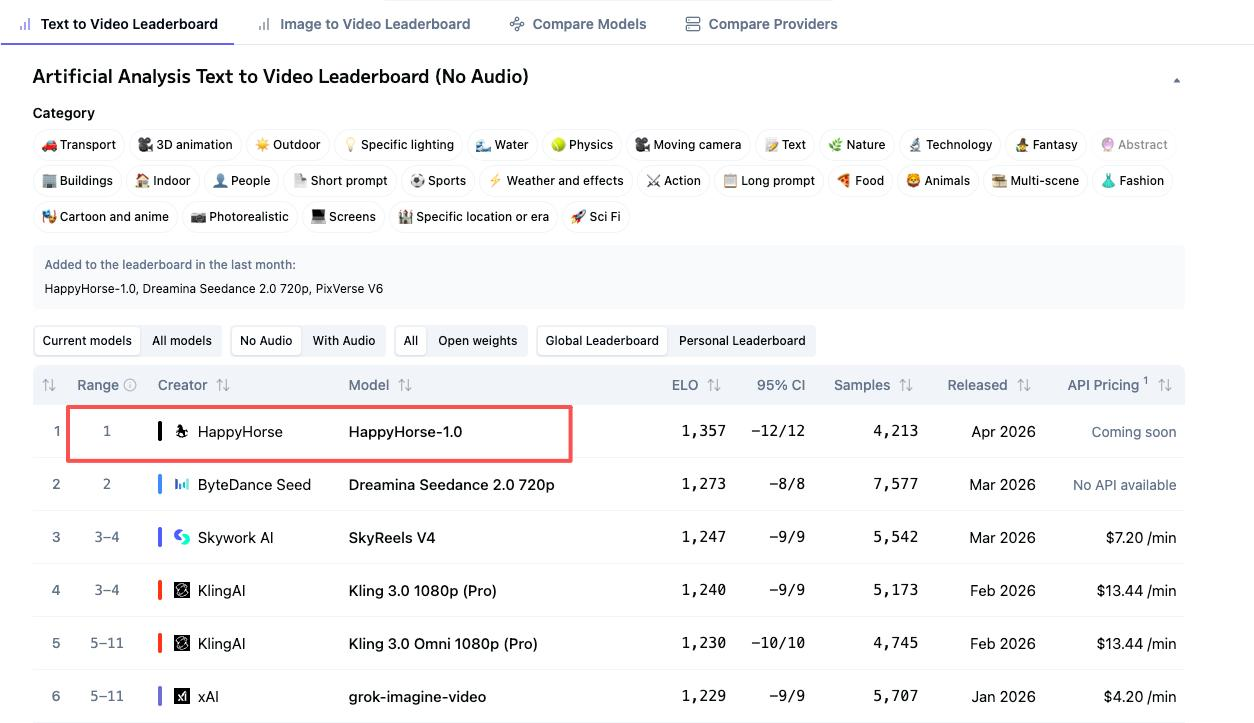

I was halfway through setting up a local WAN 2.2 pipeline last Tuesday when a name I didn’t recognize climbed to the top of the Artificial Analysis Video Arena. HappyHorse-1.0. Elo 1333. Ahead of Seedance 2.0, ahead of Kling 3.0, ahead of everything I’d been testing for the past month.

My first thought wasn’t “wow.” It was “where’s the repo?”

Someone in a Discord channel I follow tagged me — “Dora, have you seen this one?” — and linked the official site. I clicked through. Saw the phrase “fully open source” in bold. Saw “GitHub — coming soon.” And that tiny gap between the claim and the link is the kind of thing that sticks with me. I’ve spent enough time chasing download buttons that don’t exist to know the difference between a model you can use and a model you can read about.

So I spent the next few hours doing what I usually do: pulling at threads, checking HuggingFace, scanning X for anyone who’d actually gotten weights onto a local machine.

Here’s what I can confirm — and what I can’t — as of April 8, 2026.

What HappyHorse-1.0 Claims About Open Source

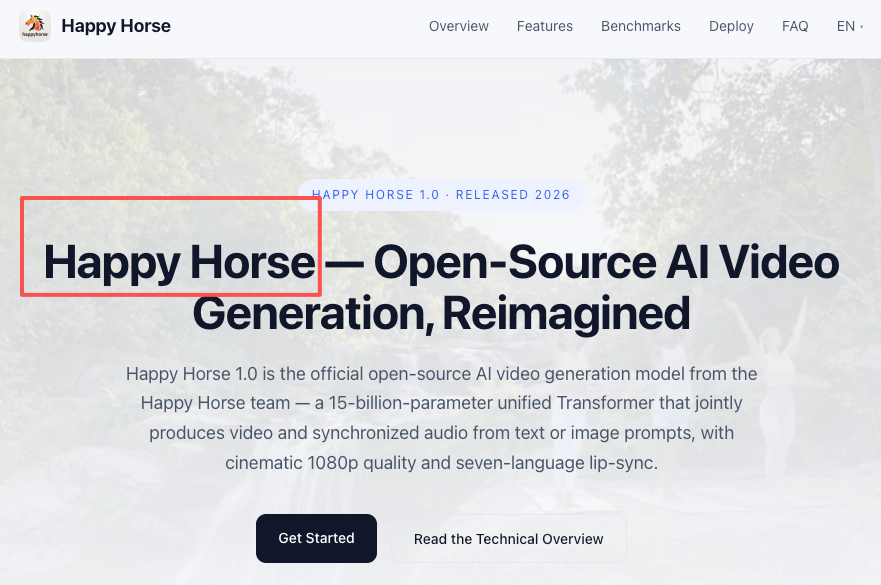

The language on happyhorses.io is confident. The site describes the model as “fully open” and states that the base model, the distilled model, the super-resolution model, and the inference code have all been released. The claimed architecture is a 15B-parameter, 40-layer unified self-attention Transformer (no cross-attention) that generates video and audio jointly from text. It reportedly supports lip-sync in seven languages and uses DMD-2 distillation to cut denoising down to eight steps.
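For builders, the 15B figure translates directly into hardware. If it holds, the weights alone set a floor on what a local machine needs. Here is a rough sketch. It assumes the parameter count on the site is accurate and counts only the weights, not activations or any auxiliary components the site doesn't break out:

```python
# Back-of-envelope weight storage for a model of a given parameter count.
# Assumption: 15B is the site's claim, not independently verified.
# Covers weights only: no activations, caches, or auxiliary models.

BYTES_PER_PARAM = {
    "fp32": 4.0,
    "bf16": 2.0,
    "fp16": 2.0,
    "int8": 1.0,
    "int4": 0.5,
}


def weight_gb(num_params: float, dtype: str) -> float:
    """Approximate weight size in decimal gigabytes (1 GB = 1e9 bytes)."""
    return num_params * BYTES_PER_PARAM[dtype] / 1e9


if __name__ == "__main__":
    claimed_params = 15e9  # claimed on happyhorses.io
    for dtype in ("fp32", "bf16", "int8", "int4"):
        print(f"{dtype}: ~{weight_gb(claimed_params, dtype):.1f} GB")
```

At bf16 that comes to about 30 GB before anything else is loaded. Quantized community builds would shrink it, but those only exist once weights do.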

Those are strong claims. But here’s the thing: claims and availability are two separate questions. And the gap between them is exactly where builders get burned.

It’s also worth noting that nobody has publicly confirmed who built HappyHorse-1.0. Artificial Analysis itself described it as a “pseudonymous” model when adding it to their arena. Community speculation on X has ranged from it being a WAN variant to a Seedance competitor, but none of it has been verified. One affiliated site mentions ByteDance infrastructure, though I couldn’t independently confirm that either.

What’s Actually Verifiable Right Now

This is where the story gets thin.

GitHub: The happyhorses.io site has a GitHub link. It says “coming soon.” As of today, there is no public repository — no code, no README, no license file. I searched GitHub for any related org or fork. Nothing.

HuggingFace: Same situation. The “Model Hub” link on the site also reads “coming soon.” No model card exists on HuggingFace, no weight files are listed, no community discussion threads.

Demo: There is a live demo available through the website. It generates video clips from text prompts. The outputs I saw looked genuinely impressive — clean motion, decent coherence. But a demo tells you the model works. It doesn’t tell you whether you can run it yourself, fine-tune it, or build on it.

Bottom line: as of this writing, you can’t download HappyHorse-1.0. You can’t inspect its weights, read its license, or run it locally. The “fully open source” label currently exists only as a stated intention.

Why This Gap Matters for Builders

If you’re a developer evaluating whether to integrate an open model into a production pipeline, the label matters less than the artifact. And there are three terms that get mixed up constantly in this space:

Open source means the full package — weights, training code, architecture details, and a license that permits modification and redistribution. The Open Source Initiative has been vocal about this distinction: releasing weights alone doesn’t qualify.

Open weights means the trained parameters are downloadable and usable, but the training data, code, and methodology may stay private. You can run inference and fine-tune, but you can’t fully reproduce or audit the model.

Open access means you can use the model through an API or demo, but nothing is downloadable. You’re a tenant, not an owner.

Right now, HappyHorse-1.0 falls into the third category. Maybe that changes tomorrow. But building a workflow around an unreleased model is a risk, especially if your timeline depends on local deployment or custom fine-tuning.
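The three definitions above can be written down as a small decision function. This is a simplification for illustration. The field names are hypothetical, and real licensing questions (redistribution terms, use restrictions) don't reduce cleanly to a boolean:

```python
from dataclasses import dataclass


@dataclass
class ReleaseArtifacts:
    """What a release actually ships. Hypothetical fields, not a standard schema."""
    weights_downloadable: bool
    training_code_public: bool
    architecture_documented: bool
    license_allows_redistribution: bool  # modification and redistribution
    hosted_access: bool  # an API or web demo


def openness_tier(r: ReleaseArtifacts) -> str:
    """Map shipped artifacts onto the open source / open weights / open access tiers."""
    if (r.weights_downloadable and r.training_code_public
            and r.architecture_documented
            and r.license_allows_redistribution):
        return "open source"
    if r.weights_downloadable:
        return "open weights"
    if r.hosted_access:
        return "open access"
    return "unavailable"


# HappyHorse-1.0 as of April 8, 2026: a live demo, nothing downloadable.
happyhorse = ReleaseArtifacts(
    weights_downloadable=False,
    training_code_public=False,
    architecture_documented=True,  # described on the site, no model card yet
    license_allows_redistribution=False,
    hosted_access=True,
)
print(openness_tier(happyhorse))  # open access
```

The order of the checks matters. Downloadable weights are the line between owning and renting, so they're tested before hosted access.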

How to Track Release Status

If you’re watching this space — and I think it’s worth watching — here’s where to look:

GitHub: Keep an eye out for a new organization page. When (or if) the code drops, that’s where it’ll land. No repo currently exists, but the site suggests one is planned.

HuggingFace and model hubs: The model card will be the clearest signal. Once weights are published there with a license file, you can start evaluating for real. HuggingFace’s model card documentation outlines what a proper release looks like.

Community signals: The discussion on X has been active. Researchers and AI video creators are already comparing HappyHorse outputs to other arena entries. Reddit and Discord channels around AI video generation tend to surface forks or unofficial uploads quickly when they appear.

Artificial Analysis: Their video leaderboard is probably the most objective place to track performance over time. Elo scores shift as more votes come in, so today’s ranking may not hold.
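If you'd rather not check by hand, a small polling script can query the public search endpoints of the HuggingFace Hub and GitHub. Here is a sketch, with its assumptions stated plainly. The keyword `happyhorse` is a guess, since no official org or repo name has been announced. A keyword hit could just as easily be an unofficial upload or an unrelated project, so treat it as a prompt to look, not a confirmation. Unauthenticated GitHub search requests are also rate-limited.

```python
import json
import urllib.parse
import urllib.request

HF_SEARCH = "https://huggingface.co/api/models?search={q}"
GH_SEARCH = "https://api.github.com/search/repositories?q={q}"


def fetch_json(url: str, timeout: float = 10.0):
    """GET a URL and decode the JSON body."""
    req = urllib.request.Request(url, headers={"User-Agent": "release-watch-sketch"})
    with urllib.request.urlopen(req, timeout=timeout) as resp:
        return json.load(resp)


def hf_model_ids(payload: list, keyword: str) -> list:
    """Model IDs from a Hub search response whose ID contains the keyword."""
    kw = keyword.lower()
    return [m["id"] for m in payload if kw in m.get("id", "").lower()]


def gh_repo_names(payload: dict, keyword: str) -> list:
    """Repo full names from a GitHub search response that contain the keyword."""
    kw = keyword.lower()
    return [r["full_name"] for r in payload.get("items", [])
            if kw in r.get("full_name", "").lower()]


def check(keyword: str = "happyhorse") -> dict:
    """Hypothetical helper: one snapshot of candidate repos on both platforms."""
    q = urllib.parse.quote(keyword)
    return {
        "huggingface": hf_model_ids(fetch_json(HF_SEARCH.format(q=q)), keyword),
        "github": gh_repo_names(fetch_json(GH_SEARCH.format(q=q)), keyword),
    }
```

To catch a first upload, call `check()` from a daily scheduled job and diff the result against the previous run. The filter functions are kept separate from the network calls so they can be tested offline.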

Open Source Track Record in AI Video: A Reference Frame

I find it helpful to compare against models that have delivered on their open-weight promises. It gives you a baseline for what “real” looks like.

LTX-2 from Lightricks is a solid reference. They announced open source, then followed through: full weights on HuggingFace, a GitHub repo with inference code, ComfyUI integration, a training framework, and a clear license. You can download it, run it locally, fine-tune with LoRAs, and build production workflows around it. That’s what a credible open-weight release looks like in practice.

WAN 2.2 is another. Alibaba’s team released multiple model variants on HuggingFace — text-to-video, image-to-video, a lightweight 5B version — along with full inference code on GitHub, Diffusers integration, and Apache 2.0 licensing. Community contributions followed quickly: ComfyUI wrappers, quantized versions, distillation experiments.

What to look for in any release to confirm it’s actually usable:

- Weights available for download (not behind a waitlist or “coming soon” page)

- A license file — Apache 2.0 is the clearest green light for commercial use, but even a custom license is better than nothing

- Inference code that runs without proprietary dependencies

- A model card with architecture details, limitations, and intended use
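Most of that checklist can be run mechanically against a repo's file listing. Here is a minimal sketch. It assumes the weights ship in common checkpoint formats and that the model card is the repo's `README.md`, which is the HuggingFace convention. The function name and suffix list are my own. The sketch can't tell whether the inference code depends on anything proprietary; that still takes reading the code.

```python
from pathlib import PurePosixPath

# Common checkpoint extensions; an assumption, not an exhaustive list.
WEIGHT_SUFFIXES = {".safetensors", ".bin", ".ckpt", ".pt", ".pth", ".gguf"}
LICENSE_NAMES = {"license", "license.md", "license.txt"}


def release_checklist(files: list[str]) -> dict[str, bool]:
    """Presence checks over a list of repo-relative file paths."""
    paths = [PurePosixPath(f) for f in files]
    return {
        "weights": any(p.suffix.lower() in WEIGHT_SUFFIXES for p in paths),
        "license": any(p.name.lower() in LICENSE_NAMES for p in paths),
        # Presence of Python files only; says nothing about their dependencies.
        "inference_code": any(p.suffix == ".py" for p in paths),
        "model_card": any(p.name.lower() == "readme.md" for p in paths),
    }
```

On the Hub, `huggingface_hub.list_repo_files(repo_id)` returns this kind of file listing. For HappyHorse-1.0 today there's no repo to list, so every check comes back `False`.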

HappyHorse-1.0 has none of these today. That’s not a judgment on the model’s quality — the arena results suggest it’s genuinely strong. It’s a judgment on its current accessibility.

FAQ

Can I download HappyHorse-1.0 weights right now?

No. As of April 8, 2026, no weights are publicly available. Both the GitHub and Model Hub links on the official site display “coming soon.” There are no third-party uploads or community forks that I’ve been able to find.

Is HappyHorse-1.0 on HuggingFace?

Not yet. There’s no model card, no weight files, and no organizational page on HuggingFace associated with HappyHorse. This could change at any time, so it’s worth checking periodically.

What license will HappyHorse-1.0 use?

Unknown. The affiliated site mentions “commercial usage rights” in the context of their hosted platform, but no standalone model license has been published. Until a license file ships with the weights, there’s no way to assess terms for self-hosted or commercial deployment.

Is it safe to build commercial workflows around HappyHorse-1.0 right now?

I’d wait. Without downloadable weights, a published license, or even a confirmed developer identity, there’s too much uncertainty for production use. If you need an open model for commercial video generation today, LTX-2 and WAN 2.2 are both available with clear licensing.

How does HappyHorse-1.0 compare to LTX-2 or WAN 2.2 on openness?

On output quality, HappyHorse is competitive — possibly ahead in some arena metrics. On openness, it’s not in the same category right now. LTX-2 and WAN 2.2 both have published weights, public repos, inference code, and defined licenses. HappyHorse has a demo and a promise. That gap may close, but it hasn’t yet.

I’ll probably revisit this once (or if) the GitHub repo goes live. The model itself looks interesting — the arena performance is hard to ignore. But I’ve been around long enough to know that impressive demos and downloadable weights are two very different things.

For now, I’m watching. Not building on it yet.
