WAN 2.7 vs WAN 2.6: Feature Diff & Upgrade Decision

Hello, everyone, I’m Dora. I’ve been watching the WAN model family move through its version cycles quietly — not with excitement, but with the attention you pay to infrastructure decisions that are hard to undo. WAN 2.7 is planned for March 2026 and the feature list is notable enough that it’s worth laying out exactly what changes, what stays the same, and where the uncertainty still lives before you touch anything in production.

30-Second Decision (Read This First)

Upgrade now if you need:

- First-frame and last-frame control in a single clip (structural scene control, not just animation anchor)

- Multi-image input via 9-grid layout for richer I2V composition

- Natural language instruction editing on existing videos — change background, lighting, or wardrobe without re-generating from scratch

- Up to 5 simultaneous video references (2.6 caps at fewer; 2.7 expands this significantly)

- Combined subject + voice reference in a single pass (R2V enhanced)

Stay on 2.6 if you need:

- A stable, documented API with tested production behavior

- Self-hosted deployments — WAN 2.7’s open-weight status is not yet confirmed

- Budget clarity — 2.7 pricing hasn’t been published as of this writing

Feature Comparison Table

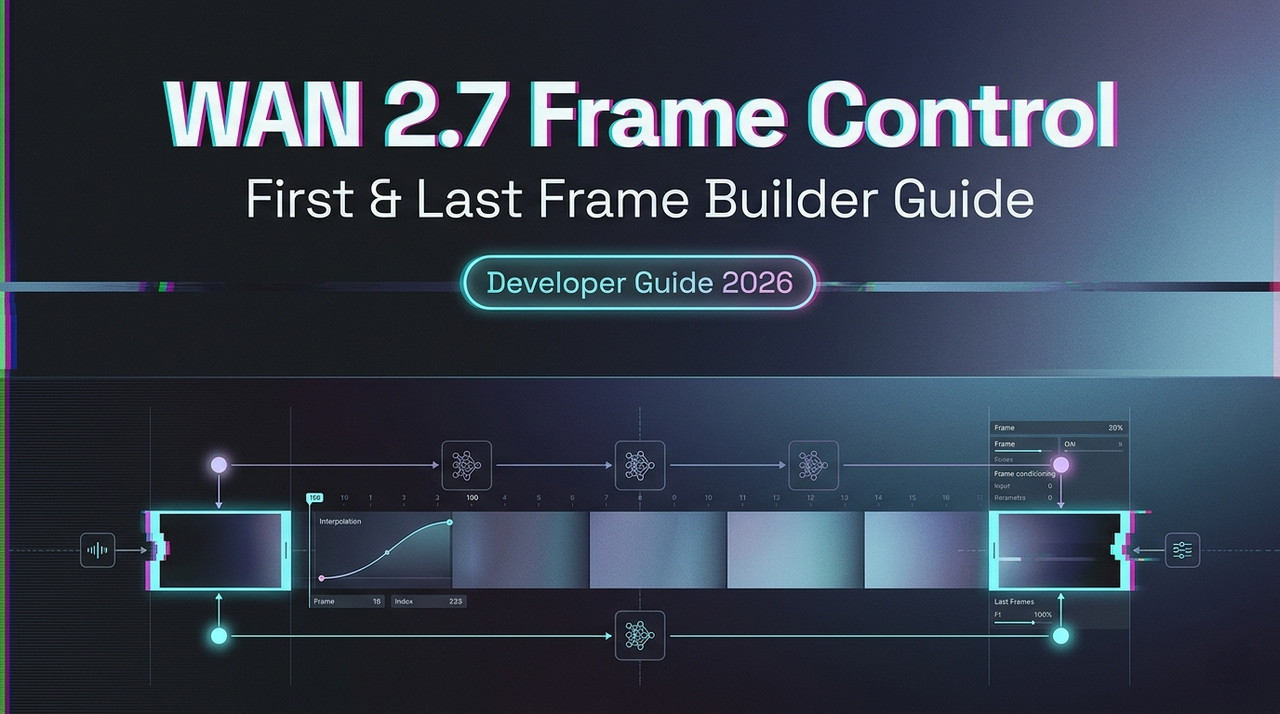

First/Last Frame Control: 2.6 vs 2.7

WAN 2.6 introduced basic first-frame anchoring for I2V. WAN 2.7 adds last-frame control alongside it, meaning you can define both endpoints of a clip. For teams building narrative sequences or looping content, this is the difference between describing motion and actually composing it. The model infers the trajectory between your two keyframes.

This has real workflow implications: instead of generating multiple candidates and hoping one lands on your intended ending, you constrain the output space from both ends.

Multi-Input I2V (9-Grid): New in 2.7

This is the most structurally novel feature in 2.7. Rather than a single reference image, the 9-grid layout accepts a 3×3 arrangement of images — allowing you to feed multi-angle references, sequential poses, or scene variants into a single I2V generation. The model uses this structured visual input to improve scene composition and reduce drift.

Whether this meaningfully outperforms well-prompted single-image I2V in practice is something I’d want to test directly. The architecture is interesting. The real-world delta needs measurement.

Voice Reference: R2V in 2.6 → Enhanced in 2.7

WAN 2.6 introduced Reference-to-Video with voice input. WAN 2.7 refines this into a combined subject + voice reference — a single workflow that anchors both character appearance and voice direction simultaneously. For teams building virtual presenters or character-led content at scale, this reduces the number of pipeline steps considerably. You can read about the broader audio-visual sync architecture underpinning this family in Alibaba’s Wan model research on Hugging Face.

Instruction-Based Editing: New in 2.7

This is the feature that makes 2.7 feel qualitatively different from a pure generation model. You can pass an existing video alongside a natural language instruction (“change the background to a rain-soaked street,” “swap the jacket to red”) and receive an edited output rather than a new generation.

This matters operationally: iteration cycles that previously required re-generating from scratch can now be handled as lightweight edits. It also means your prompt strategy shifts — you’ll be writing edit instructions, not generation prompts.

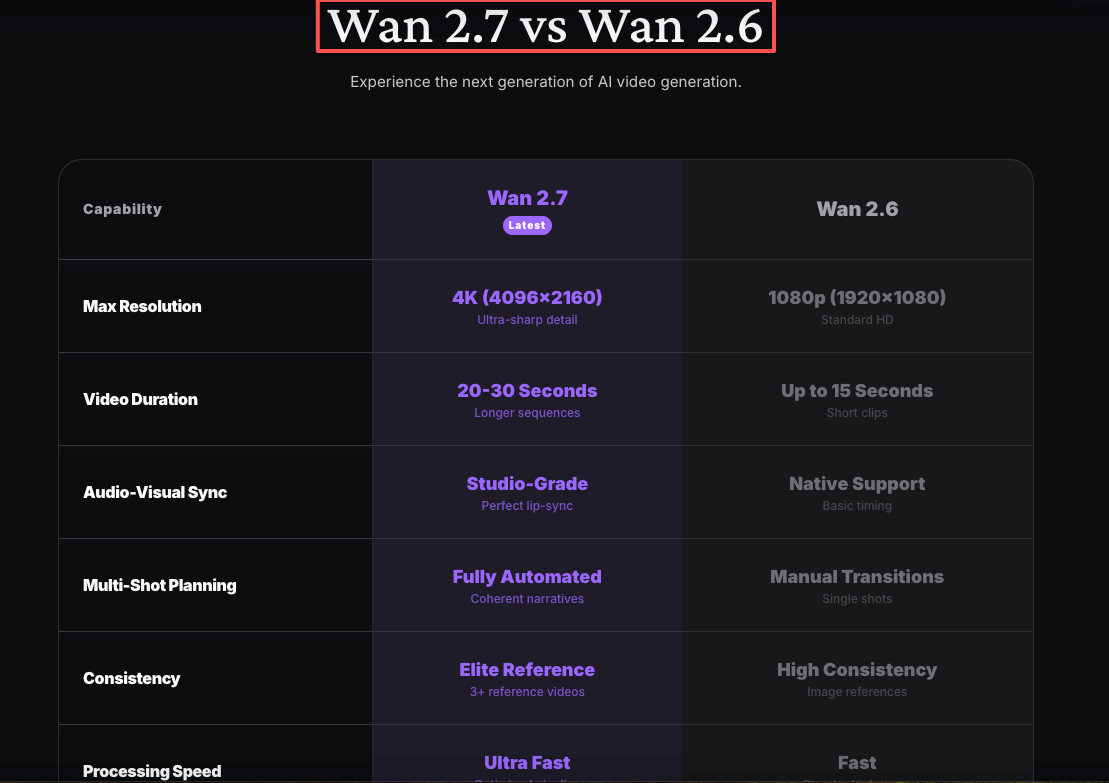

Output Resolution & Duration

Both 2.6 and 2.7 support up to 1080P and up to 15 seconds. No change here. If resolution or duration were your primary constraint, this version doesn’t expand those limits.

Video Reference Count (Up to 5 in 2.7)

WAN 2.6 supports single or dual video references for subject consistency. WAN 2.7 raises this to five simultaneous references, useful for multi-character scenes or production workflows requiring strict brand consistency across reference assets.

API-Level Changes for Developers

New Parameters / Payload Structure

The 9-grid input and instruction-based editing almost certainly require new payload fields — image array structures, an edit_instruction parameter, and possibly a distinct endpoint or mode flag. Until the official API docs drop, treat any third-party parameter speculation as provisional. The WAN model GitHub repository has historically been the first place Alibaba’s team documents schema changes for open-weight releases.

Endpoint and Model ID Changes

Expect a new model ID (e.g., wan-2.7-i2v, wan-2.7-edit) distinct from wan-2.6-i2v. Platforms like fal.ai that provide hosted inference typically publish endpoint availability within days of an official release — worth monitoring their changelog directly.

Backward Compatibility with WAN 2.6 Workflows

Standard I2V and T2V payloads (single image input, text prompt, resolution, duration) should be structurally compatible. The new features appear additive rather than breaking. That said, don’t assume prompt behavior is identical — instruction-following tuning shifts mean prompts calibrated for 2.6 may produce different results in 2.7 even with no payload changes.

Quality & Performance: What the Evidence Shows

Visual Fidelity Claims

Pre-release materials describe improvements to sharpness, color accuracy, and detail preservation. I’m not going to restate those as facts — that’s exactly the kind of claim that needs benchmark data. Once official benchmarks are published, cross-reference against your own representative prompts. Aggregate scores rarely capture edge-case failure modes that matter most to specific workflows.

Audio Sync Improvements

WAN 2.5 introduced native audio generation. WAN 2.6 refined it. WAN 2.7 claims further improvement in audio-visual synchronization. The fal.ai blog on WAN 2.5’s audio architecture gives useful context for how the sync pipeline has evolved — worth reading before evaluating 2.7’s claims with your own test audio.

Motion Consistency

Described as smoother and more physically plausible than 2.6. This is the hardest quality claim to evaluate without running your own clips. Motion consistency degrades unpredictably on edge cases — unusual camera angles, fast motion, complex backgrounds. Run your specific use cases, not generic demos.

Cost Implications of Upgrading

New Feature Cost Structure

9-grid I2V and instruction-based editing will almost certainly carry different cost profiles than standard I2V generation. Multi-input inference is computationally heavier. Budget accordingly, but don’t finalize projections until pricing is live.

Compute Cost: 9-Grid vs Single I2V

Nine reference images versus one is a meaningful increase in input processing. If you’re running high-volume automated pipelines, model this assumption into your cost estimates before migrating: 9-grid likely costs more per generation than single-image I2V at equivalent resolution and duration.

Migration Checklist for Teams Already on WAN 2.5/2.6

- Audit existing payloads for hardcoded model IDs — update to 2.7 endpoint when available

- Re-test your 10 most-used prompts against 2.7 before full migration

- Evaluate instruction-based editing for workflows currently using re-generation for iteration

- Check 9-grid input format against your existing image pipeline

- Hold off on ComfyUI node migration until community-verified 2.7 nodes are published

- Confirm pricing with your inference provider before scaling new feature usage

- Do not deprecate 2.6 workflows until 2.7 API stability is confirmed in production

FAQ

- Can I call WAN 2.7 and WAN 2.6 with the same API key? Almost certainly yes if you’re using a hosted inference provider — model selection is per-request. Confirm with your specific provider.

- Are WAN 2.6 prompts compatible with 2.7? Structurally, likely yes. Behaviorally, not guaranteed. Instruction-following tuning shifts between versions. Treat 2.6 prompts as starting points, not finished assets.

- Does 2.7 change how I structure image inputs for I2V? Standard single-image I2V: probably no change. 9-grid: entirely new structure. Document both paths separately in your codebase.

- What happens to my WAN 2.5 ComfyUI workflows? WAN 2.7 nodes won’t exist until community contributors publish them post-release. The ComfyUI blog has historically been the fastest place to find verified partner nodes for new Wan releases.

- Is WAN 2.7 available to self-host? Unknown at the time of writing. The Wan family has varied — some versions released under Apache 2.0 as open weights, others only via proprietary API. Confirm before building a self-hosting plan around 2.7.

Conclusion

WAN 2.7 is a meaningful version if your work involves iteration, character consistency, or multi-input composition. Instruction-based editing shifts the model from a generation tool into something closer to a video editing pipeline — which changes how you’d structure workflows, not just what prompts you write.

What it’s not: a reason to migrate immediately. API details aren’t finalized, pricing isn’t published, and quality claims need validation against your actual production content. Build 2.7 evaluation into your sprint once documentation drops, run it in parallel with 2.6, and make the migration decision with data rather than release-day enthusiasm.

I’ll be following up with a WAN 2.7 API quickstart once official documentation is live — covering payload structure, the 9-grid input format, and a working instruction-editing example for teams already running 2.6 in production.

Previous Posts:

- See how Sora compares to other video generation models in real workflows

- Explore practical use cases for SkyReels V4 in production pipelines

- Understand how to build consistent characters across AI video generations

- Learn how to turn product images into full AI-generated video ads

- Get a breakdown of Z-Image reference workflows for multi-image generation