WAN 2.7: New Features, API Access & Upgrade Path

Hello, everyone. Dora is here! I’ve been watching the Wan model family closely since 2.1, mostly because once a model starts getting open-source traction in production pipelines, it tends to move faster than the coverage does. WAN 2.7 is still landing — planned to launch within March 2026 — so this isn’t a release review. It’s a pre-flight checklist for teams already running 2.5 who need to decide whether to re-route infrastructure or wait.

No hype. Just what’s confirmed, what’s still fuzzy, and what it means for your build queue. If you’re still mapping where models like Wan actually fit in a production stack, this breakdown of how AI video generation tools are used in real workflows helps ground the decision before you go deeper into specs.

WAN 2.7 in 60 Seconds (The Short Version)

If you’re evaluating from a product or engineering perspective: WAN 2.7 is shaping up to be a functional expansion more than a pure quality jump. The headline isn’t “better video” — it’s “more control surfaces.” Improvements span visual quality, audio generation, and motion dynamics, but the more interesting additions are structural: first/last frame control, 9-grid multi-input I2V, subject-plus-voice reference, and instruction-based editing.

The delta over 2.5 isn’t primarily resolution. It’s the number of anchoring inputs the model now accepts in a single call.

What’s New in WAN 2.7

First-Frame & Last-Frame Video Control

The first-and-last-frame approach lets users define both the beginning and end of a video using two images, with the model automatically generating the intermediate content. This has existed as a standalone model in earlier Wan series releases (see Wan2.1-FLF2V-14B), but 2.7 brings it in-model rather than as a separate checkpoint.

For production teams: this matters most when you have defined keyframes — a product shot at rest, a product shot in motion — and want the model to interpolate the transition without a full manual animation pass. It’s deterministic on the endpoints. What happens in between is still stochastic, but your compositional guardrails are there.
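To make the two-endpoint idea concrete, here is a minimal sketch of how a first/last-frame request body might be assembled. Everything in it is an assumption for illustration: the model name is a placeholder, and the field names (`first_frame_url`, `last_frame_url`) are hypothetical, since the 2.7 schema hasn’t been published. Treat it as a shape to adapt, not the actual API.

```python
def build_flf_request(first_frame_url, last_frame_url, prompt,
                      model="wan2.7-placeholder"):
    """Assemble a hypothetical first/last-frame request body.

    The model name and field names are illustrative assumptions,
    not a published WAN 2.7 schema.
    """
    # Both endpoints are required: the model only fills in the middle.
    if not first_frame_url or not last_frame_url:
        raise ValueError("both the first and last frame are required")
    return {
        "model": model,  # placeholder, replace with the real model ID at launch
        "input": {
            "prompt": prompt,
            "first_frame_url": first_frame_url,  # hypothetical field name
            "last_frame_url": last_frame_url,    # hypothetical field name
        },
    }


request_body = build_flf_request(
    "https://example.com/product_rest.png",
    "https://example.com/product_motion.png",
    "Smooth transition from the resting shot to the in-motion shot",
)
```

Validating the endpoints before the call is cheap, and it keeps failed jobs out of a paid batch queue.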

9-Grid Image-to-Video (3×3 Multi-Input)

WAN 2.7 supports a 3×3 grid synthesis approach, meaning up to nine reference images can be submitted as a structured input for a single video generation task. This is meaningfully different from single-frame I2V.

The practical application: if you’re generating character-consistent content across varied angles or lighting conditions, you can now provide a reference grid rather than a single anchor. That reduces the one-shot luck factor that makes I2V batch jobs expensive to quality-check. If you’re comparing how different tools handle multi-reference consistency, this guide to best video face swap tools with multi-face and multi-input support shows where current solutions still struggle. Confirm the exact API parameter structure at launch: the endpoint schema for grid inputs hasn’t been formally published as of this writing.
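While the schema is pending, you can still prepare reference sets locally. The sketch below assigns up to nine reference images to row-major cells of a 3×3 grid. The function name and the slot format are my own assumptions, and whether the API will want one composited grid image or nine separate inputs is not yet known.

```python
def assign_grid_slots(image_paths, rows=3, cols=3):
    """Map reference images to row-major grid cells.

    Hypothetical pre-processing helper: the real grid input format
    for WAN 2.7 has not been published.
    """
    capacity = rows * cols
    if not 1 <= len(image_paths) <= capacity:
        raise ValueError(f"expected 1 to {capacity} images, got {len(image_paths)}")
    # Cell i sits at row i // cols, column i % cols.
    return [
        {"row": i // cols, "col": i % cols, "image": path}
        for i, path in enumerate(image_paths)
    ]


slots = assign_grid_slots([f"ref_{i}.png" for i in range(9)])
center = slots[4]  # {"row": 1, "col": 1, "image": "ref_4.png"}
```

Keeping the slot assignment explicit makes it easier to reorder references later if the published schema turns out to care about cell position.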

Subject + Voice Reference

WAN 2.7 lets you combine a visual subject reference with a voice reference to generate videos where both the appearance and voice of the character are consistent with your inputs. This is an extension of what Wan 2.6 introduced with its R2V model — which enabled users to upload a character reference video with both appearance and voice, utilizing text prompts to generate vivid new scenes starring that same character.

In 2.7, the expectation is that this becomes more tightly integrated rather than a separate model endpoint. For teams building character-driven short-form content or localization pipelines, this is the feature with the clearest production ROI, provided the voice reference handling is stable under repeated batch calls. For context, this is also where current AI video face swap pipelines start to break down at scale, especially when identity and voice consistency are both required.

Instruction-Based Video Editing

WAN 2.7 supports editing existing videos using natural language instructions — changing backgrounds, modifying lighting, or altering a character’s outfit by describing the change and letting the model handle the rest.

This is a meaningful shift from generation to editing. For product teams running post-production correction loops, it opens up a lighter-weight alternative to manual roto and compositing work for small changes. The key unknown is how the model handles temporal consistency across longer clips when edits affect motion-adjacent elements (clothing on a moving subject, for instance). That’s where similar tools have historically degraded.

The official Alibaba Cloud Model Studio documentation already covers related editing endpoints in the current Wan lineup, which gives some baseline for the API pattern you’ll likely see in 2.7.

Video Recreation / Replication [Needs Verification]

This feature — recreating or replicating existing videos with style or subject changes while preserving motion structure — has appeared in third-party summaries of WAN 2.7. It has not been independently confirmed in official Alibaba documentation as of this writing. Do not build workflow dependencies on this capability until Alibaba’s formal release notes confirm it. Flag it as exploratory if you’re scoping an upgrade project now.
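One lightweight way to enforce that rule while scoping is a small status table that your planning scripts check before a workflow is approved. This is a sketch under assumptions: the feature keys and status labels are hypothetical, and the statuses simply mirror how this article frames each feature rather than any official list.

```python
# Statuses mirror this article's framing, not an official Alibaba list.
FEATURE_STATUS = {
    "first_last_frame": "announced",
    "nine_grid_i2v": "announced",
    "subject_voice_reference": "announced",
    "instruction_editing": "announced",
    "video_recreation": "unverified",  # third-party summaries only
}


def unverified_dependencies(required_features):
    """Return the required features that are not safe to depend on yet.

    Unknown feature names are treated as unverified as well.
    """
    return [
        name for name in required_features
        if FEATURE_STATUS.get(name) != "announced"
    ]


blockers = unverified_dependencies(["first_last_frame", "video_recreation"])
if blockers:
    print("Exploratory only, do not build on:", ", ".join(blockers))
```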

What Stays the Same (Architecture Continuity from 2.6)

The underlying DiT (Diffusion Transformer) architecture carries forward. The Wan series uses a Transformer component based on the mainstream video DiT architecture, employing a Full Attention mechanism to accurately capture long-range spatiotemporal dependencies, ensuring high temporal and spatial consistency.

This matters for teams running local inference: the GPU memory profile and quantization approach won’t change drastically. If your 2.6 inference stack is optimized (FP8 ops, multi-GPU sharding), that investment carries forward. Don’t expect a completely new deployment architecture.

API Access & Availability — What’s Confirmed, What’s TBD

Official Access Paths (DashScope / Wan.video)

The two confirmed official channels for prior Wan versions have been Alibaba Cloud’s DashScope platform — which handles API key provisioning and model access — and Wan.video for creator-facing workflows. Both are expected to carry 2.7 at launch, though regional availability (particularly for international accounts vs. mainland China) should be confirmed at release.

The DashScope API key is loaded from the DASHSCOPE_API_KEY environment variable, with international users pointing to dashscope-intl.aliyuncs.com — the same pattern will almost certainly apply for 2.7 given the established infrastructure.
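Here is a minimal sketch of that pattern: read the key from `DASHSCOPE_API_KEY` and pick a host by region. The international host comes from the paragraph above. The mainland host, the Bearer header format, and the helper name are assumptions on my part, so check them against the DashScope docs before relying on them.

```python
import os

DASHSCOPE_HOSTS = {
    "intl": "dashscope-intl.aliyuncs.com",  # international host, as described above
    "cn": "dashscope.aliyuncs.com",         # assumed mainland host, verify in the docs
}


def load_dashscope_config(region="intl", env=None):
    """Build a base URL and auth header from the environment.

    `env` defaults to os.environ; passing a dict makes this testable.
    """
    env = os.environ if env is None else env
    api_key = env.get("DASHSCOPE_API_KEY")
    if not api_key:
        raise RuntimeError("DASHSCOPE_API_KEY is not set")
    if region not in DASHSCOPE_HOSTS:
        raise ValueError(f"unknown region: {region!r}")
    return {
        "base_url": f"https://{DASHSCOPE_HOSTS[region]}",
        # Bearer token header is an assumption about the auth scheme.
        "headers": {"Authorization": f"Bearer {api_key}"},
    }
```

Keeping the region as an explicit parameter means a later move between international and mainland accounts is a config change, not a code change.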

Third-Party API Availability

At the time of this writing, WAN 2.7 is not yet confirmed on third-party API aggregators. Based on the pattern from 2.2 onward — where ComfyUI native support arrived on launch day for open-source model weights — expect community integrations to follow quickly if 2.7 ships with public weights.

Whether 2.7 ships as open-source is itself unconfirmed. See FAQ below.

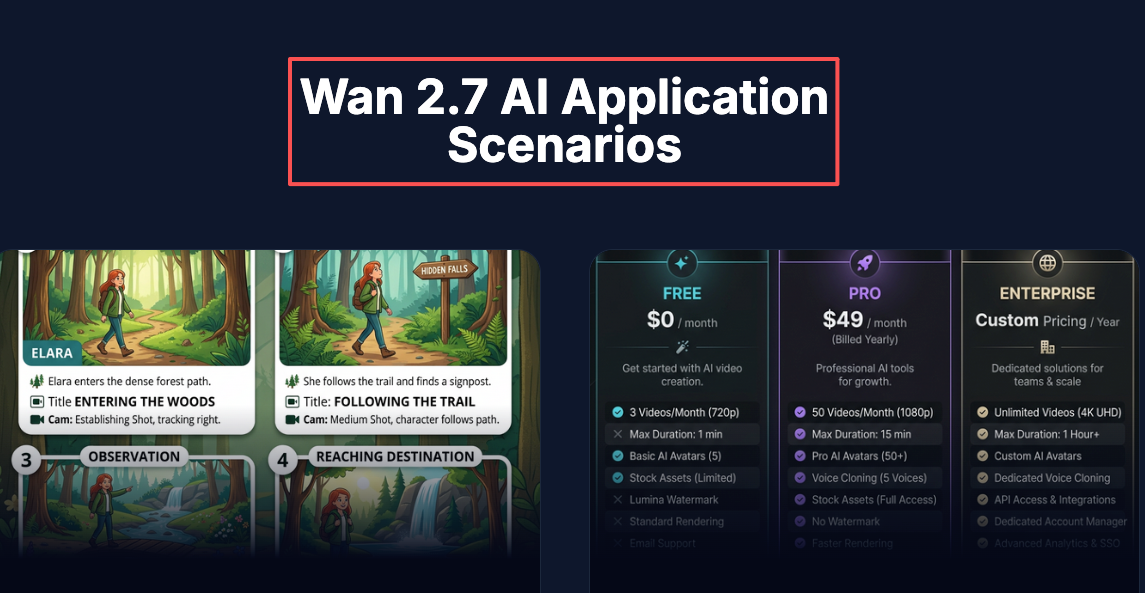

Who Should Prioritize WAN 2.7 Right Now

Teams Doing Reference-Heavy Video Production

If your pipeline already relies on character consistency across shots — and you’ve been compensating with multiple generation passes or ControlNet workarounds — the subject + voice reference and multi-frame grid input are the two features that most directly reduce that overhead. Worth scoping an upgrade.

Builders Who Need Multi-Input I2V

Single-image I2V works until you need consistency. The 9-grid input changes the reference surface area significantly. If you’ve been batching workarounds to simulate this, 2.7 may consolidate multiple API calls into one.

Creative Teams Who Can Stay on WAN 2.5 for Now

If your 2.5 workflows are stable and the new features don’t map to active production bottlenecks, there’s no urgency. The model is still launching. Let the first two weeks of community feedback surface the edge cases before re-plumbing anything.

FAQ

- When is WAN 2.7 officially available via API? The launch is planned within March 2026. Exact API availability date per region: not yet confirmed.

- Is WAN 2.7 open-source like WAN 2.2? Unconfirmed. Wan 2.2 shipped under Apache 2.0, which enabled commercial use and ComfyUI integration. Whether 2.7 follows the same license has not been stated in pre-launch materials.

- Does WAN 2.7 support ComfyUI? No confirmed integration yet. Given prior releases — Wan 2.1 and 2.2 both had ComfyUI support on or near launch day — assume community nodes will follow quickly if model weights are public.

- What’s the pricing compared to WAN 2.5? No pricing has been announced. The Wan model series has typically been billed per-second or per-video through DashScope. Check Alibaba Cloud Model Studio pricing at launch for updated rates.

- Can I access WAN 2.7 from third-party API providers today? Not yet. The model hasn’t launched. Monitor the official Wan GitHub and DashScope docs for release confirmation, then check platform support from there.
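On the pricing question above: until rates are published, you can still wire up a budget estimate and plug the real number in later. The sketch assumes per-second billing, which is how the series has typically been billed, and the rate in the usage line is a made-up placeholder, not a WAN 2.7 price.

```python
from decimal import Decimal


def estimate_batch_cost(clip_seconds, clip_count, rate_per_second):
    """Estimate spend for a batch billed per second of generated video.

    `rate_per_second` is whatever rate Alibaba publishes at launch;
    pass it as a string or Decimal to avoid float rounding.
    """
    if clip_seconds <= 0 or clip_count <= 0:
        raise ValueError("clip_seconds and clip_count must be positive")
    total = Decimal(str(rate_per_second)) * clip_seconds * clip_count
    return total.quantize(Decimal("0.01"))


# Placeholder rate for illustration only, not an announced price.
budget = estimate_batch_cost(clip_seconds=5, clip_count=200, rate_per_second="0.10")
```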

What to Watch After Launch

A few things worth tracking as 2.7 stabilizes in the first weeks:

- Temporal consistency on instruction edits: Instruction-based editing is the feature most likely to behave differently in practice versus the preview framing. Watch community outputs closely.

- Open-source weight release: If weights ship publicly, expect ComfyUI workflows, Diffusers integration, and quantized variants within days. If not, the DashScope API remains the primary access path, and regional latency becomes a real workflow variable.

- Video recreation feature status: This one needs an official source. Don’t spec it into anything until Alibaba confirms it explicitly.