SkyReels V4 vs SkyReels V2: How Much Has the Model Actually Improved?

Hi, I’m Dora. I didn’t plan to compare SkyReels this week. I just wanted a looping background clip for a landing page mock, and my usual setup felt heavier than it should. That small weight (clicking through old nodes, waiting on previews, guessing at audio timing) made me pause. So I set V2 and V4 next to each other and ran the same prompts through both. Not to crown a winner. Just to see where the work felt lighter.

If you’re here for a simple verdict, you won’t find it. SkyReels V2 and V4 solve different pieces of the puzzle. This is my field note version of “skyreels v4 vs v2,” written after a handful of real runs between Feb–Mar 2026.

A Quick SkyReels Family Timeline

V1 (human-centric, Feb 2025) → V2 (infinite length) → V3 (audio experiments) → V4

I first touched SkyReels around V1 in early 2025. It felt like a careful project, human-in-the-loop, slower but steady. V2 arrived and quietly changed the center of gravity: “infinite” video via diffusion forcing. Not infinite in the poetic sense, but literally unbounded: sequences you could keep extending for as long as you let them run.

V3 played with audio more seriously. I remember decent alignment on speech beats, but it still felt like two trains sharing a track: audio on one, video on the other, waving across the gap.

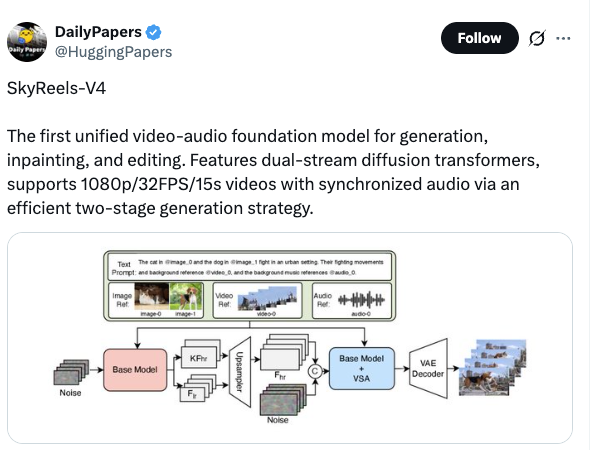

V4 tightens it. Different priorities, different defaults. It isn’t a linear upgrade so much as a reset of what “a unit of output” means. With V4, a clip becomes a cohesive artifact, audio and video produced together, at higher native quality, with a ceiling on length. That ceiling is an intentional trade.

What V2 Did Really Well

Diffusion Forcing for infinite video

The first time I used V2’s diffusion forcing for long-form, I overshot. I let it run during lunch and came back to four minutes of eerily consistent motion, like a music visualizer that forgot to stop. That was both the thrill and the risk: you could go on and on. In practice, I learned to treat it like a camera rolling until I had enough natural motion to cut.

For looping backgrounds, textures, abstract motion, V2 carried the weight. The mental relief came from not juggling restarts or timestamps. I’d set a direction, then keep or trim as needed. When I wanted a 45–60 second backdrop for an event page last month, V2 got me there in one pass. No stitching, no scene boundaries.

Open-source, ComfyUI compatible

I also appreciated how V2 slotted into my existing graph. With ComfyUI nodes, community snippets, and a few tiny custom tweaks, I could keep my house plants in place while rearranging furniture. If you have a mishmash rig (I do) and sometimes collaborate with folks who bring their own graphs (also me), V2 plays well. That matters more than it sounds. The time saved isn’t just minutes; it’s fewer mental branches. Less “where did that converter node go?”

I noticed V2 was forgiving on hardware, too. Not cheap to run, but I could scale down without everything collapsing. If someone sent me a preset, it usually “just worked” after minor nudges. That’s a boring strength. I like boring strengths.

What V4 Changes Fundamentally

Audio becomes a first-class citizen

In V4, audio isn’t an afterthought. It’s baked in. I tested this by generating a short promo clip for a podcast trailer on Feb 27 and again on Mar 2 with a slightly different voice bed. V4 synced visual emphasis to the kick and snare more cleanly than any V2 pipeline I’ve assembled. Not perfect, but natural enough that I didn’t reach for keyframes.

The simple version: V2 could attach audio; V4 composes with it. If your work depends on beat-matched visuals or voice-guided pacing, V4 reduces the elbow grease.

Unified architecture vs separate pipelines

What this felt like: fewer switches in my head. In V2 land, I’d think “audio world” and “video world” and spend time gluing decisions between them. In V4, I give a single brief and let the model carry context across both streams. When I adjusted the voiceover emphasis (one line softer, one line sharper), V4 rebalanced cuts and motion to match. With V2, that would’ve meant a partial rebuild.

Less visible benefit: fewer fragile handoffs. The number of files I passed between steps dropped. My project folder looked calmer, fewer temp exports, fewer naming rituals. It’s small, but those small things signal whether a tool respects the way people actually work.

Resolution and quality jump

The visual jump in V4 showed up most in edges and motion consistency. Thin details (signage, fabric textures, hair against a window) held longer before smearing. On my runs, native clarity at 1080p felt reliable; 4K upscales held together better than my old V2 stack. I still saw minor shimmer on fine diagonals, but fewer of those “oil painting” frames that slip into long V2 sequences.

Two caveats I wrote down:

- The first frame quality in V4 is strong, but early micro-jitter can appear on complex scenes. It usually settles by second three or four.

- Color holds better in V4, yet aggressive grade shifts mid-clip can confuse the model. I got cleaner results grading after export rather than mid-prompt.

Overall, if your deliverable is a short, polished piece with sound baked in, V4’s defaults point you there with fewer detours.

What V2 Still Wins At

Video length (V4 = 15s max, V2 = infinite)

This is the obvious one. V4 caps at 15 seconds right now. For social teasers, intros, or product loops, that’s fine. For ambient canvases, long explainers, or gallery walls, it isn’t. V2’s “let it run” mode still makes more sense for anything over half a minute. I don’t have to pre-plan scene boundaries. I can discover the moment in the middle and trim outward.

I tried to fake length in V4 by chaining outputs. It worked, technically, but I could feel the seam. The flow changed at each hop, like splicing two songs in the same key but different drummers.
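To put a number on those seams, here is a back-of-envelope sketch. It is only arithmetic, not a SkyReels call: `plan_chain` is a hypothetical helper I made up for illustration, and the 15-second cap is the one described above. It works out how many V4-length clips a target duration needs, and therefore how many hops you would have to hide.

```python
import math

# Assumption: the 15-second V4 cap described in this post.
V4_MAX_CLIP_SECONDS = 15


def plan_chain(target_seconds, clip_seconds=V4_MAX_CLIP_SECONDS):
    """Return (start, end) times for each clip needed to cover target_seconds.

    Hypothetical planning helper; it does not generate any video.
    """
    if target_seconds <= 0 or clip_seconds <= 0:
        raise ValueError("durations must be positive")

    clip_count = math.ceil(target_seconds / clip_seconds)
    segments = []
    for index in range(clip_count):
        start = index * clip_seconds
        # The last clip may be shorter than the cap.
        end = min(start + clip_seconds, target_seconds)
        segments.append((start, end))
    return segments


segments = plan_chain(60)
seams = len(segments) - 1  # one seam between each pair of adjacent clips
print(f"{len(segments)} clips, {seams} seams")
```

For a 60-second backdrop that comes out to four clips and three seams, which matches how it felt: every hop is a place where the flow can change.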

Wider hardware/integration support today

V2 has a longer tail in the wild. More examples, more community nodes, more posts from people solving edge cases you’ll also hit. If you’re running mixed machines (I sometimes bounce between a studio box and a travel laptop), V2’s tolerance for variation helps. I loaded a teammate’s V2 graph last week and it ran after one patch. The equivalent V4 workflow felt pickier about environment and versions.

If your stack relies on ComfyUI-plus-random-helpers, V2 asks fewer questions. That can be the difference between shipping today and poking a dependency chain for an afternoon.

Decision Guide: V2 or V4?

Here’s how I’d frame it after a week of back-and-forth runs and a few real deliverables.

Choose V4 if:

- Your output lives under 15 seconds and needs to feel finished out of the box.

- Audio matters: beat sync, voice-led pacing, or music-driven motion.

- You value fewer moving parts, even if it means less room for long-form experiments.

Choose V2 if:

- You need sequences longer than 15 seconds without obvious seams.

- Your workflow is already ComfyUI-heavy and you trade presets with collaborators.

- You’re okay shouldering more manual polish in exchange for open-ended length and wider compatibility.
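If it helps to see the two lists as one rule of thumb, here is a minimal sketch. The function name, its parameters, and especially the order of the checks are my own assumptions, not anything official from SkyReels; I put length first because the 15-second cap is the one constraint you cannot polish around.

```python
# Assumption: the 15-second V4 cap described in this post.
V4_MAX_CLIP_SECONDS = 15


def pick_skyreels_version(duration_seconds, needs_synced_audio, comfyui_heavy):
    """Rough rule of thumb based on the lists above (hypothetical helper)."""
    # Anything past the cap would need chained clips, so V2 is the default.
    if duration_seconds > V4_MAX_CLIP_SECONDS:
        return "V2"
    # Short clip where sound drives the visuals: V4 composes both together.
    if needs_synced_audio:
        return "V4"
    # Short clip, no audio needs, existing ComfyUI graph: stay with V2.
    if comfyui_heavy:
        return "V2"
    # Short clip with nothing pulling toward V2: take the polished defaults.
    return "V4"


print(pick_skyreels_version(60, needs_synced_audio=True, comfyui_heavy=False))
print(pick_skyreels_version(10, needs_synced_audio=True, comfyui_heavy=True))
```

The first call picks V2 because of length alone; the second picks V4 because the clip is short and audio matters. Real projects are messier than three inputs, so treat this as a starting point.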

What surprised me

- V4 reduced my project sprawl. Fewer temp files, fewer half-baked stems. That’s a different kind of speed: less context switching.

- V2 still felt more like clay. I could push and stretch it without the model nudging me back into a “short clip” mindset.

Why this matters

Most of us don’t need another tool. We need fewer steps and steadier outcomes. V4 steers toward done. V2 steers toward open. Neither is universally better. It’s about the shape of your day.

If you’re on deadlines with short formats, V4 is the calmer path. If you’re building ambient canvases, live visuals, or anything that breathes past 15 seconds, V2 keeps your hands free.

This worked for me; your mileage may vary. I’ll probably keep both installed. One for finishing with sound, one for when I just want the camera to keep rolling. The small question I’m sitting with: will V4 ever lift the cap without losing its composure? I’d like that. But I’m not in a hurry.