Introducing Runway Gen4 Aleph on WaveSpeedAI

Video editing is changing fundamentally. Work that once required expensive software, specialized skills, and hours of painstaking frame-by-frame effort can now be driven by a simple text prompt. Runway Gen4 Aleph is a state-of-the-art video-to-video model that brings professional-grade editing to anyone who can describe an edit in natural language.

WaveSpeedAI is thrilled to offer Runway Gen4 Aleph through our inference platform, making this groundbreaking technology accessible with no cold starts, reliable performance, and straightforward per-second pricing.

What is Runway Gen4 Aleph?

Runway Gen4 Aleph is an in-context video editing model that understands and transforms your footage based on text instructions. Unlike traditional video editing tools that require manual manipulation of every element, or earlier AI models that regenerate videos from scratch, Aleph works directly with your existing footage—understanding the scene’s structure, lighting, depth, and motion to apply intelligent edits while maintaining visual coherence.

Released in July 2025, Aleph emerged from Runway’s pioneering research in visual AI. The company behind tools used in Oscar-winning productions like “Everything Everywhere All at Once” has distilled years of advancement into a model that democratizes what was previously the domain of VFX studios with million-dollar budgets.

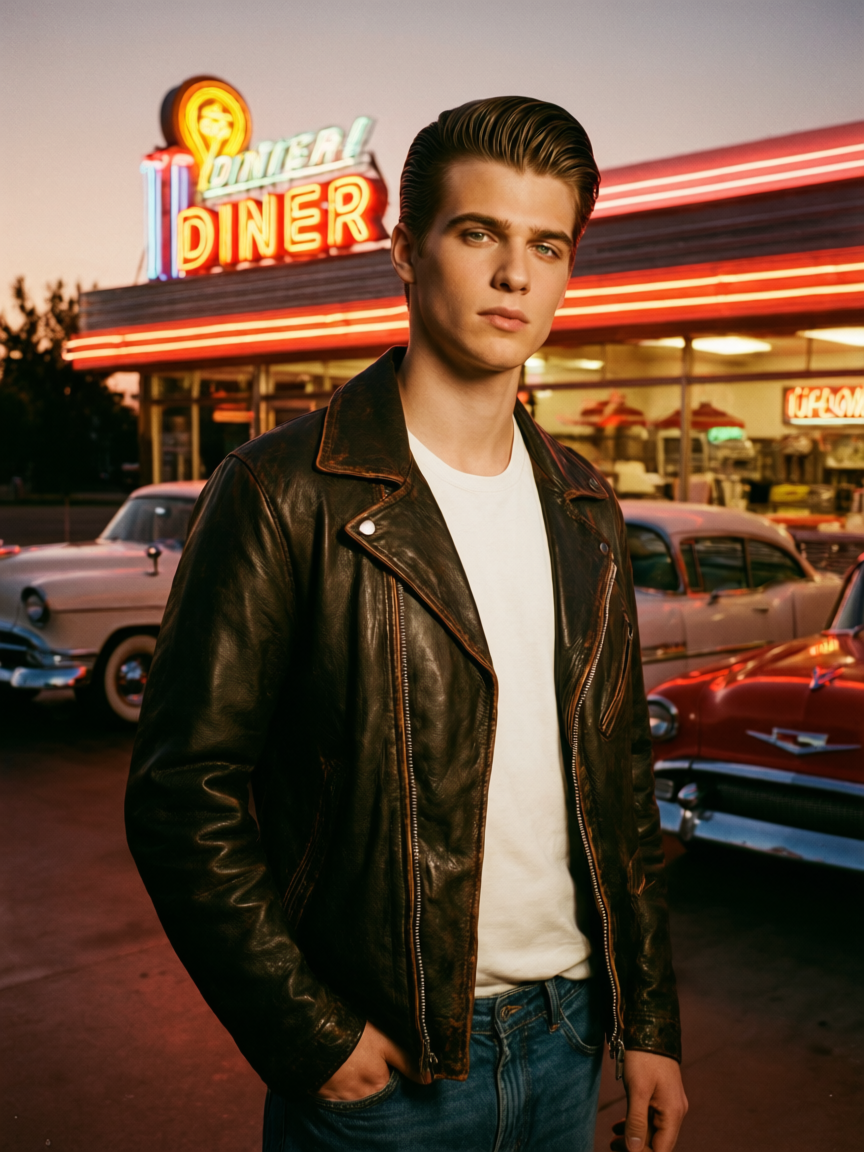

The core principle is simple: upload a video, describe what you want changed, and Aleph handles the rest. Want to remove a person from your footage? Change the time of day? Transform your video into an anime style? Just tell it what you want.

Key Features

Natural Language Editing: Describe your edits in plain English. No need to learn complex software interfaces or spend hours masking and tracking. Simply write “Remove the people walking in the background” or “Make the sky look like a dramatic sunset” and Aleph interprets and executes your vision.

Context-Aware Intelligence: Aleph doesn’t just apply filters; it understands your footage. It recognizes what’s in focus, where light sources are positioned, and who’s moving and how, and it maintains this understanding throughout the transformation. This context awareness ensures edits look natural rather than artificially composited.

Reference Image Guidance: For style transfers and visual transformations, you can provide a reference image that guides the target aesthetic. Want your corporate video to have the color palette of a specific film? Upload a still as reference and let Aleph match that look across your footage.

Flexible Output Formats: Support for multiple aspect ratios (16:9, 4:3, 1:1, 3:4, 9:16) means you can optimize output for any platform, from widescreen YouTube videos to vertical TikTok content, without awkward cropping or black bars.

Temporal Consistency: Unlike image-based editing applied frame-by-frame, Aleph maintains smooth, stable results across all frames. Objects that are added stay consistent. Removed elements don’t flicker back. Style changes remain uniform throughout the clip.

Built-in Prompt Enhancer: Not sure how to phrase your edit request for optimal results? The integrated Prompt Enhancer helps refine vague instructions into precise prompts that yield better transformations.
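
If your source footage does not match one of the aspect ratios listed under Flexible Output Formats, a small helper can suggest the closest one. The sketch below is illustrative only: the function name `nearest_supported_ratio` is hypothetical, and comparing ratios on a log scale is our own choice, not something the model or platform prescribes.

```python
import math

# Aspect ratios listed in this article for Gen4 Aleph output.
SUPPORTED_RATIOS = ["16:9", "4:3", "1:1", "3:4", "9:16"]


def nearest_supported_ratio(width: int, height: int) -> str:
    """Return the listed ratio closest to width/height.

    Ratios are compared on a log scale so that "twice as wide" and
    "twice as tall" count as equally far from square.
    """
    if width <= 0 or height <= 0:
        raise ValueError("width and height must be positive")
    target = math.log(width / height)

    def distance(label: str) -> float:
        w, h = (int(part) for part in label.split(":"))
        return abs(math.log(w / h) - target)

    return min(SUPPORTED_RATIOS, key=distance)


print(nearest_supported_ratio(1920, 1080))  # 16:9
print(nearest_supported_ratio(1080, 1920))  # 9:16
```

Picking the nearest ratio up front makes it easier to predict how the output will be framed on the target platform.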

Real-World Use Cases

Content Creators and Social Media

Transform your existing content library without reshooting. A travel vlogger can change overcast skies to golden hour in old footage. A product reviewer can remove distracting background elements. A fitness influencer can add atmospheric effects to workout videos—all without leaving the editing timeline.

Marketing and Advertising Teams

Test different visual concepts rapidly. Generate multiple versions of the same ad with different color grades, environments, or visual styles. Adapt existing campaigns for different markets or seasons without commissioning new shoots. An e-commerce team can place products in various settings from a single studio shoot.

Independent Filmmakers

Achieve visual effects that previously required dedicated VFX artists. Remove unwanted elements from location shoots. Add atmospheric effects like rain, fog, or snow. Transform contemporary settings into period pieces. Runway’s tools have already proven their worth in award-winning productions, and Aleph extends these capabilities further.

Agencies and Production Houses

Prototype visual concepts rapidly for client pitches. Iterate quickly on style and mood before committing to expensive production. Handle post-production cleanup without sending footage to specialized facilities.

Educational and Corporate Video

Clean up training videos without reshooting. Update branding elements across legacy content. Add visual polish to internal communications that previously looked like afterthoughts.

Getting Started on WaveSpeedAI

Using Runway Gen4 Aleph through WaveSpeedAI is straightforward:

- Prepare your video: Have your source video ready—either upload it directly or provide a public URL

- Write your prompt: Describe the transformation you want in natural language

- Select your aspect ratio: Choose the output format that fits your needs

- Add a reference image (optional): Provide visual guidance for style-based edits

- Run the transformation: WaveSpeedAI handles the processing with no cold starts
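
For teams scripting these steps, the inputs can be gathered and validated before anything is submitted. The sketch below only assembles a request body and does not call any service. The field names (`video`, `prompt`, `aspect_ratio`, `reference_image`) and the function `build_edit_request` are assumptions made for illustration, so check the model page for the actual parameter names. It also covers only the public-URL path, not direct upload.

```python
import json
from typing import Optional

# Aspect ratios listed in this article for Gen4 Aleph output.
SUPPORTED_RATIOS = {"16:9", "4:3", "1:1", "3:4", "9:16"}


def build_edit_request(
    video_url: str,
    prompt: str,
    aspect_ratio: str = "16:9",
    reference_image_url: Optional[str] = None,
) -> dict:
    """Assemble a request body for a video edit.

    All field names here are hypothetical placeholders, not a
    confirmed API schema.
    """
    if not video_url.startswith(("http://", "https://")):
        raise ValueError("video_url must be a public http(s) URL")
    if not prompt.strip():
        raise ValueError("prompt must not be empty")
    if aspect_ratio not in SUPPORTED_RATIOS:
        raise ValueError(f"unsupported aspect ratio: {aspect_ratio}")

    body = {
        "video": video_url,
        "prompt": prompt.strip(),
        "aspect_ratio": aspect_ratio,
    }
    # The reference image is optional, so include it only when given.
    if reference_image_url is not None:
        body["reference_image"] = reference_image_url
    return body


if __name__ == "__main__":
    request = build_edit_request(
        "https://example.com/clip.mp4",
        "Remove the people walking in the background",
        aspect_ratio="9:16",
    )
    print(json.dumps(request, indent=2))
```

Validating locally catches an empty prompt or an unsupported aspect ratio before any processing is billed.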

The pricing is transparent at $0.18 per second of input video—a 5-second clip costs $0.90, while a 30-second video runs $5.40. No subscriptions required, no credits to manage, just pay for what you use.
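
The arithmetic behind those figures is easy to script when budgeting a batch of clips. This is a minimal sketch under one assumption: cost scales linearly with input duration, with no minimum charge and no rounding up to whole seconds. The article does not state how fractional seconds are billed, and the name `estimate_cost` is ours.

```python
from decimal import Decimal, ROUND_HALF_UP

# USD per second of input video, as quoted in this article.
PRICE_PER_SECOND = Decimal("0.18")


def estimate_cost(duration_seconds) -> Decimal:
    """Estimate the cost in USD for a clip of the given length.

    Assumes simple linear billing; the rounding to cents is
    illustrative, not a statement of actual billing behavior.
    """
    seconds = Decimal(str(duration_seconds))
    if seconds < 0:
        raise ValueError("duration must not be negative")
    return (seconds * PRICE_PER_SECOND).quantize(
        Decimal("0.01"), rounding=ROUND_HALF_UP
    )


print(estimate_cost(5))   # 0.90
print(estimate_cost(30))  # 5.40
```

Under that assumption, a 5-second trial run costs one sixth of a full 30-second pass.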

Access the model directly at https://wavespeed.ai/models/runwayml/gen4-aleph.

Tips for Best Results

Start with clear, specific prompts. “Remove the person in the red shirt walking from left to right” will yield better results than “clean up the background.” Be explicit about what you want changed and how.

For style transfers, provide reference images that capture your target aesthetic. The model can match color palettes, visual textures, and artistic styles more accurately when given visual guidance rather than relying solely on text descriptions.

Test with shorter clips first. Before processing a full 30-second video, try your prompt on a 5-second segment to verify the transformation matches your vision. This approach saves both time and cost.

Combine operations when it makes sense. A single prompt like “Remove the people in the background and change the sky to sunset” can achieve complex edits in one pass.

Transform Your Video Workflow Today

The gap between professional VFX capabilities and accessible video editing has never been smaller. Runway Gen4 Aleph represents a genuine shift in what’s possible for creators at every level—from solo content creators to production studios.

WaveSpeedAI makes accessing this technology simple: reliable infrastructure, no cold starts, and pricing that scales with your actual usage. Whether you’re cleaning up a single clip or processing entire content libraries, the model is ready when you are.

Try Runway Gen4 Aleph on WaveSpeedAI and discover what natural language video editing can do for your projects.