LTX-2 License & Commercial Use: What You Can Ship With Open Weights

I opened a repo last week to try a new model and saw “Open Weights” in big, friendly letters. Then, three lines down, a smaller note: “Released under the LTX-2 license.” I paused. I’ve learned that those two phrases don’t always mean the same thing. So I set my coffee down and went looking for the fine print.

This isn’t a takedown. I like open weights. I rely on them. But “open” has taken on a lot of meanings lately, and the LTX-2 license sits in that slippery space between helpful and vague. Here’s what I found, what I tested in January 2026, and how I’m handling it in my own work.

“Open Weights” ≠ Unlimited Commercial Use

I’ve fallen for this before: the weights are downloadable, so my brain assumes “go build anything.” That’s not always true. With LTX-2, the promise is access, but access isn’t the same as permission for every scenario. Trust me, it’s a classic trap.

When I scanned a few projects using the LTX-2 license, “open weights” meant you can:

- Pull the model weights locally

- Run evaluations and experiments

- Build internal prototypes

But it didn’t automatically mean:

- You can resell the model itself

- You can offer it as a hosted API to the public without conditions

- You can embed it in a product that breaches usage caps, attribution rules, or data constraints

The gap between “I can try this” and “I can ship this” is where teams get burned. I’ve seen a prototype slip into a client pilot because the weights were easy to run. Two months later, legal had to unwind the whole thing. Nobody liked that week.

So my default now: I treat “open weights” like a lab key, not a retail license. I check the actual terms before a single user touches it.

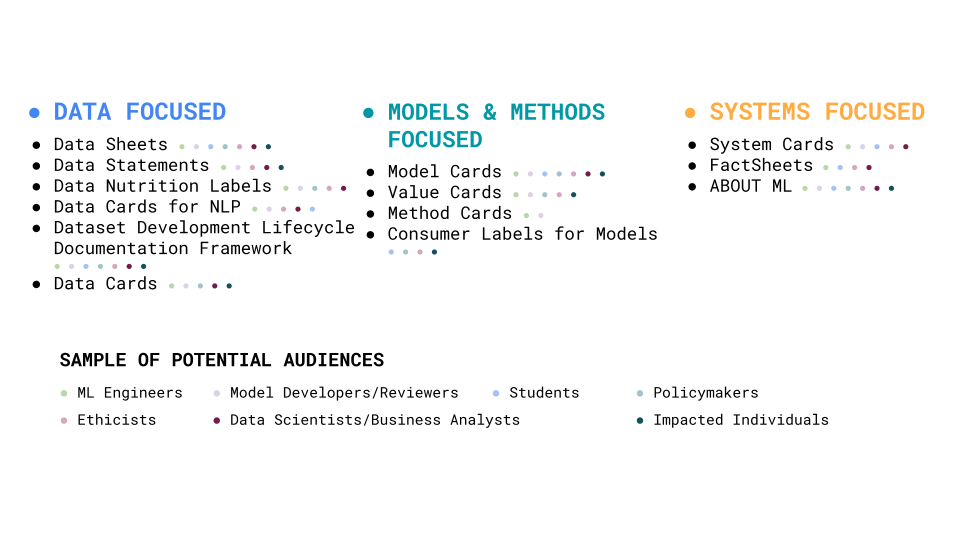

Key Files to Read (License / Model Card)

I know this sounds obvious, but with LTX-2 I’ve found the details scattered between two places:

- LICENSE (or LICENSE.md): The binding terms. This is where you’ll see conditions for distribution, hosting, attribution, and trademark. If anything conflicts with the README, I defer to the license file.

- Model Card (MODEL_CARD.md or docs/): The practical context. Intended use, out-of-scope uses, training data notes, evaluation metrics, known risks. Sometimes it sneaks in de facto rules (e.g., “not for biometric identification”), which usually echo the license or policy.

What I look for first:

- Can I offer a paid service powered by this model? If yes, what’s restricted?

- Am I allowed to fine-tune and distribute the fine-tuned weights?

- Are there attribution or notice requirements in UX or docs?

- Any field-of-use limits (e.g., medical, surveillance, political)?

- Data restrictions for training, fine-tuning, or evaluation logs?

Tiny habit that helps: I copy the key clauses into a one-page note and add my interpretation in plain language. Then I ask a teammate to challenge it. Honestly, if they can poke holes, we slow down — better safe than sorry.

Allowed Commercial Scenarios

I’m cautious with blanket statements, but across projects I reviewed under LTX-2, a few patterns showed up. These were usually okay when terms were followed:

- Internal tools and pilots: Running LTX-2 models inside a company to support employees. No public exposure, no model redistribution. This is the least controversial lane.

- Feature integration with guardrails: Embedding the model in a product as one of several components, with proper attribution and without exposing the raw weights. Think: a text feature inside a helpdesk tool, processed server-side.

- Fine-tuning for a client with private deployment: You tune on the client’s data and deploy to their VPC. You don’t hand over the derived weights unless the license explicitly allows redistribution.

- Evaluation-as-a-service: Benchmarking or red-teaming a client’s models using your LTX-2 instance, without giving them the model.

Even in these, I keep an eye on:

- User count or usage caps (some licenses set thresholds)

- Required notices in product docs or UI

- Export controls if you’re deploying across regions

What surprised me most: a few repos allowed revenue-generating use but drew a hard line at “model-as-a-service” to third parties. So, you could sell a product feature powered by the model, but not sell the model’s API as your product. Subtle, but important — ignore it and oops.

Restrictions to Watch (distribution / trademark / data)

This is where most of the friction lives.

Distribution

- Many LTX-2 terms block redistributing the original or modified weights outside specific channels. Shipping a Docker image that contains the weights can count as redistribution. I’ve seen teams accidentally violate this with CI artifacts.

Trademark & Naming

- You can use the model, but you can’t name your product after it or imply endorsement. A small “Powered by X (LTX-2)” note is usually fine under nominative fair use principles; a brand-forward landing page often isn’t.

Hosted Access

- Offering an external API can be treated as distribution, depending on the wording. Some clauses allow hosted inference if the weights aren’t exposed; others treat any external access as redistribution. I don’t assume.

Data Use

- Look for prohibitions on training the model with certain datasets (e.g., biometric, sensitive personal data) and for requirements to respect training data licenses. If you fine-tune, you own your weights only as far as the license allows.

Compliance Hooks

- Some LTX-2 variants require keeping logs, passing along notices to downstream users, or providing a copy of the license with your software. It’s clerical, but skipping it can void permissions.

If I can’t find clear text for one of these, I consider the scenario restricted until I get written clarification from the maintainers.

Team Compliance Workflow

This is the simple loop I’ve been using since January 2026. It’s not fancy, but it saves drama.

1. Intake

- Capture: repo link, commit hash, LICENSE, model card, and release date.

- Snapshot the files into our knowledge base so terms don’t “move” later.

2. Triage

- Tag intended use: internal, client pilot, public feature, or service.

- Flag risky zones: redistribution, external API, fine-tuned weights, regional exports.

3. Interpret

- Summarize the LTX-2 clauses in one page, plain language.

- Map to our use: yes/no/maybe. “Maybe” means we pause and ask.

4. Controls

- Add attribution to UI/docs if required.

- Configure inference to prevent raw weight downloads.

- Restrict logs to non-sensitive data. Set retention by policy.

5. Sign-off

- Product lead and legal check the one-pager. If the change is minor (e.g., internal-only), PM sign-off may be enough.

6. Monitor

- Set a monthly check for license updates or model card changes.

- Track usage metrics against any thresholds the license mentions.
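Steps 1 and 6 pair up naturally: if Intake records a hash of each license-relevant file, the monthly check becomes a comparison rather than a re-read. Here is a minimal sketch of that idea. The names (`LicenseSnapshot`, `take_snapshot`, `changed_files`) and the example URL are my own placeholders, not part of any LTX-2 tooling, and how you fetch the file bytes is left out.

```python
import hashlib
from dataclasses import dataclass


@dataclass(frozen=True)
class LicenseSnapshot:
    repo_url: str
    commit: str
    file_hashes: dict  # filename -> SHA-256 hex digest of the file's bytes


def take_snapshot(repo_url: str, commit: str, files: dict) -> LicenseSnapshot:
    """Record hashes for the license-relevant files at one commit.

    `files` maps a filename (e.g. "LICENSE") to that file's raw bytes.
    """
    hashes = {name: hashlib.sha256(data).hexdigest() for name, data in files.items()}
    return LicenseSnapshot(repo_url, commit, hashes)


def changed_files(old: LicenseSnapshot, new: LicenseSnapshot) -> list:
    """Names of files that were edited, added, or removed between snapshots."""
    names = set(old.file_hashes) | set(new.file_hashes)
    return sorted(n for n in names if old.file_hashes.get(n) != new.file_hashes.get(n))


# Hypothetical example: the LICENSE text changed between two commits.
intake = take_snapshot("https://example.invalid/repo", "abc123",
                       {"LICENSE": b"terms v1", "MODEL_CARD.md": b"card"})
monthly = take_snapshot("https://example.invalid/repo", "def456",
                        {"LICENSE": b"terms v2", "MODEL_CARD.md": b"card"})
print(changed_files(intake, monthly))
```

A non-empty result doesn't say whether the change matters; it only tells me it's time to re-read the file and update the one-pager.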

It’s boring in the right way. The key is writing down the interpretation before shipping. Future-you will thank you.
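The yes/no/maybe mapping from Triage and Interpret can also live in code, so that "maybe" actually blocks something. This is a rough sketch under my own assumptions: the flag names mirror the risky zones listed above, and `clause_reading` is a hypothetical dictionary holding our written interpretation of the license, not anything parsed from the license itself.

```python
from enum import Enum


class Verdict(Enum):
    YES = "yes"
    NO = "no"
    MAYBE = "maybe"  # pause and ask the maintainers


def triage(flags: set, clause_reading: dict) -> Verdict:
    """Map a use case's risky zones to a verdict.

    `flags` is the set of risky zones the use case touches, e.g.
    {"external_api", "redistribution"}.
    `clause_reading` maps a flag to True (our reading: allowed) or
    False (our reading: prohibited). A missing flag means we found
    no clear text, which is treated as "maybe".
    """
    verdict = Verdict.YES
    for flag in flags:
        allowed = clause_reading.get(flag)
        if allowed is False:
            return Verdict.NO
        if allowed is None:
            verdict = Verdict.MAYBE
    return verdict


# Hypothetical reading: hosted inference looked fine, nothing clear on exports.
reading = {"external_api": True, "redistribution": False}
print(triage({"external_api", "regional_export"}, reading).value)
```

The design choice that matters is the default: anything without a written reading falls to "maybe" rather than "yes".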

UGC & Client Project Risks

User-generated content is where licenses meet reality.

- Unintended redistribution: If your app lets users export models, embeddings, or system files, make sure the LTX-2 weights aren’t in the bundle. I saw a plug-in auto-attach checkpoints to “project exports.” That counted as redistribution.

- Output claims: Some licenses restrict certain outputs (e.g., biometric classification). If users can prompt anything, you need usage policies, filters, and a way to act on abuse reports.

- Client handoffs: In agency work, a client might expect “all deliverables,” including the tuned model. If LTX-2 blocks transferring derived weights, manage that expectation upfront. Offer hosted deployment instead of a file handoff.

- Attribution drift: UGC templates and white-label deployments are where notices disappear. I’ve started baking attribution into the feature’s settings page so it travels with the component.
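For the unintended-redistribution case, a cheap guard is to inspect the export bundle before it leaves the app. The sketch below assumes exports are zip archives and that weights can be recognized by file extension; both are assumptions on my part, and the suffix list is illustrative rather than exhaustive.

```python
import io
import zipfile
from pathlib import PurePosixPath

# Illustrative list of common checkpoint extensions; adjust to your stack.
WEIGHT_SUFFIXES = {".safetensors", ".ckpt", ".pt", ".pth", ".bin", ".gguf"}


def weight_files_in_export(zip_bytes: bytes) -> list:
    """Return archive member names that look like model weight files."""
    with zipfile.ZipFile(io.BytesIO(zip_bytes)) as archive:
        return sorted(
            name for name in archive.namelist()
            if PurePosixPath(name).suffix.lower() in WEIGHT_SUFFIXES
        )


# Build a small in-memory export to show the check in action.
buffer = io.BytesIO()
with zipfile.ZipFile(buffer, "w") as archive:
    archive.writestr("project.json", "{}")
    archive.writestr("checkpoints/model.safetensors", b"\x00")

print(weight_files_in_export(buffer.getvalue()))
```

Extension matching will miss renamed files, so I treat this as a tripwire, not proof that a bundle is clean.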

A small note on risk-sharing: Put the license name and key constraints in SOWs. Plain text. No scare tactics, just clarity. It sets a boundary before everyone is tired and behind schedule.

Compliance on WaveSpeed (logs / permissions / export)

WaveSpeed is the workspace my team uses to run and compare models. It’s not special, just the place where these habits live. Here’s how I set it up for LTX-2 projects.

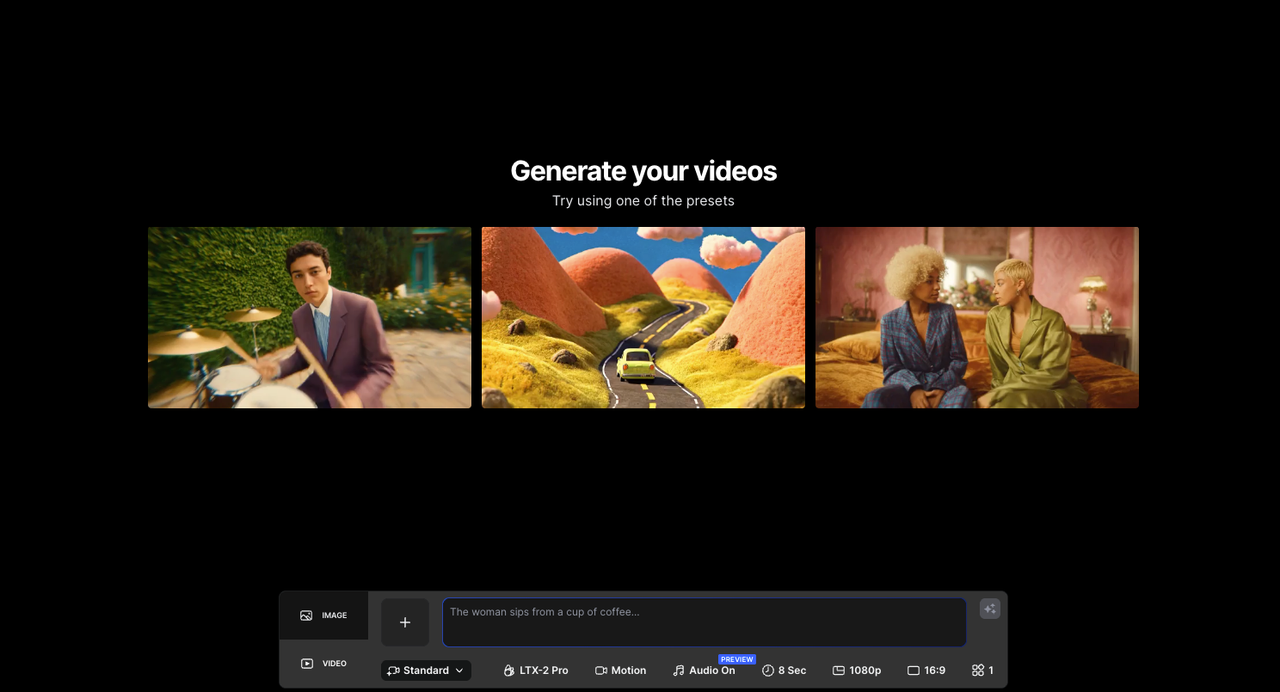

We built WaveSpeed for exactly this kind of careful, controlled workflow.

Logs

- I turn on inference logs only for prompts, latency, and token counts; no payload content is recorded unless a debug flag is toggled. Retention is 14 days by default. The goal is to prove responsible use without hoarding data we don’t need.
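To make the logging rule concrete, here is a small sketch of the shape I have in mind. It is not WaveSpeed's API; `build_log_record` and `purge_expired` are hypothetical helpers, and I'm assuming "payload" means the request or response body beyond the prompt text.

```python
from datetime import datetime, timedelta, timezone

RETENTION = timedelta(days=14)  # default retention from the policy above


def build_log_record(prompt, latency_ms, token_count,
                     payload=None, debug=False, now=None):
    """Log prompt, latency, and token count; keep payload only in debug mode."""
    record = {
        "ts": now or datetime.now(timezone.utc),
        "prompt": prompt,
        "latency_ms": latency_ms,
        "token_count": token_count,
    }
    if debug and payload is not None:
        record["payload"] = payload
    return record


def purge_expired(records, now):
    """Keep only records that are still inside the retention window."""
    return [r for r in records if now - r["ts"] <= RETENTION]


now = datetime(2026, 1, 31, tzinfo=timezone.utc)
fresh = build_log_record("a short prompt", 120, 42, payload=b"...", now=now)
stale = build_log_record("an old prompt", 95, 17, now=now - timedelta(days=15))
print("payload" in fresh, len(purge_expired([fresh, stale], now)))
```

The payload is dropped at write time rather than filtered at read time, so there is nothing sensitive sitting in storage waiting for a cleanup job.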

Permissions

- Roles: Viewer (no downloads), Operator (run jobs, no weight access), Maintainer (can update containers but not export weights), Admin (rare).

- API keys are scoped per model and environment. Staging keys can’t touch production weights. It stops “quick tests” from becoming quiet incidents.
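The role and key rules above boil down to two lookups. The sketch below is illustrative only: the permission names are mine, I'm assuming each role includes the abilities of the one before it, and I'm assuming Admin is the only role that may export weights, which the list above implies but doesn't state.

```python
from dataclasses import dataclass

# Assumed permission sets; only the "no weight export" limits come from the roles above.
ROLE_PERMISSIONS = {
    "viewer": frozenset({"view"}),
    "operator": frozenset({"view", "run_jobs"}),
    "maintainer": frozenset({"view", "run_jobs", "update_containers"}),
    "admin": frozenset({"view", "run_jobs", "update_containers", "export_weights"}),
}


def can(role: str, action: str) -> bool:
    """True if the role grants the action; unknown roles get nothing."""
    return action in ROLE_PERMISSIONS.get(role, frozenset())


@dataclass(frozen=True)
class ApiKey:
    model: str
    environment: str  # e.g. "staging" or "production"


def key_allows(key: ApiKey, model: str, environment: str) -> bool:
    """A key works only for the exact model and environment it was issued for."""
    return key.model == model and key.environment == environment


staging_key = ApiKey(model="ltx-2", environment="staging")
print(can("maintainer", "export_weights"),
      key_allows(staging_key, "ltx-2", "production"))
```

Both checks deny by default: an unknown role or a mismatched scope gets nothing.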

Export

- Artifact builds exclude weight files by default. If someone tries to push a container with embedded weights, CI fails with a clear message referencing the LTX-2 clause we annotated in Intake.

- Model cards and licenses are bundled into the app’s About panel and the docs site. It’s boring, but it keeps attribution in what we ship.
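The CI gate for embedded weights can be as simple as a script that walks the build directory and returns a non-zero exit code. This is a sketch of the approach, not our actual pipeline: the suffix list is an assumption, and `clause_note` stands in for whatever annotation your Intake step recorded.

```python
import sys
from pathlib import Path

# Illustrative list of checkpoint extensions; extend for your formats.
WEIGHT_SUFFIXES = {".safetensors", ".ckpt", ".pt", ".pth", ".bin", ".gguf"}


def find_weight_files(root) -> list:
    """Relative POSIX paths of files under `root` that look like weights."""
    root = Path(root)
    return sorted(
        p.relative_to(root).as_posix()
        for p in root.rglob("*")
        if p.is_file() and p.suffix.lower() in WEIGHT_SUFFIXES
    )


def check_artifact(root, clause_note: str) -> int:
    """Return 0 if the artifact is clean, 1 (with a message) otherwise."""
    hits = find_weight_files(root)
    if hits:
        print(
            f"Build blocked: weight files found in artifact: {', '.join(hits)}. "
            f"See license note: {clause_note}",
            file=sys.stderr,
        )
        return 1
    return 0


if __name__ == "__main__" and len(sys.argv) == 3:
    # Usage: python check_artifact.py <build_dir> "<clause note>"
    sys.exit(check_artifact(sys.argv[1], sys.argv[2]))
```

Putting the clause note in the failure message means whoever hits the error sees why the rule exists, not only that it does.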

Audits

- Once a quarter, I run a dry-run “license swap” exercise: could we replace this model in a week if terms changed? If the answer is no, we’re too attached.

This might sound heavy for small teams. In practice, it’s a few checkboxes and a habit of writing things down. It’s lighter than a rollback after launch.

A quiet reminder I keep taped to my monitor: open weights are an invitation, not a blank check. The LTX-2 license, in particular, asks you to be a careful guest.

If you’re working under similar constraints, this setup has been steady for me. If you’re building a fully public API or a model marketplace, you’ll want a closer read of redistribution clauses, and probably an email to the maintainers. I’ve found most are happy to clarify when asked.

I’m still curious about one thing: how many of us read model cards before README files. I didn’t, for years. Now it’s the first click. Old habits die hard, right?