GPT-5.4 vs GPT-5.3: What Might Actually Change

Hi, I’m Dora. I caught myself babysitting a long-running agent loop. Nothing dramatic, just that slow, jittery feeling when a model keeps asking for one more tool call, then another. It reminded me how much of my day lives in the edges: the pauses, the retries, the “did it actually read the document?” moments.

So I spent the afternoon revisiting my notes on GPT-5.3, and then skimming the early GPT-5.4 chatter. Some of the early leak discussions around the model architecture and latency hints are summarized in this breakdown of the GPT-5.4 leak. Not to chase the next big thing, more to answer a smaller question: would any of this reduce the fidgety parts of my workflow? This is my running log of GPT 5.4 vs GPT 5.3**, with what I’ve measured, what seems credible, and where I’m still unconvinced.

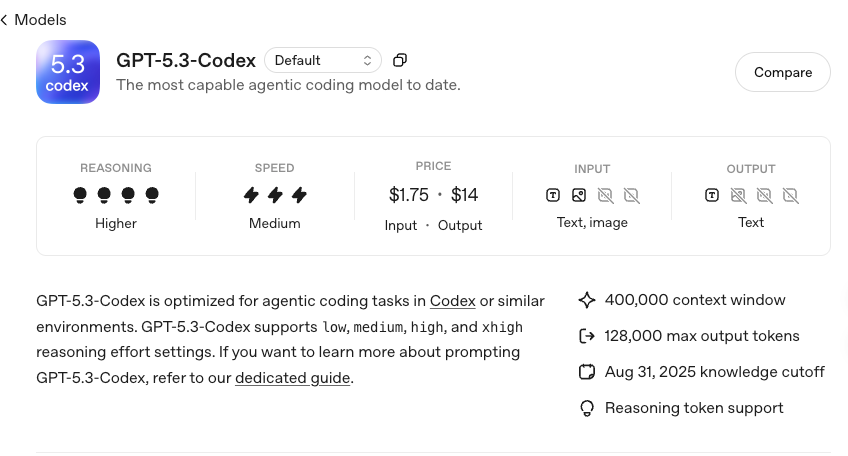

GPT-5.3 Capabilities: The Current Baseline

Reasoning and tool-use performance

I’ve been using GPT-5.3 since mid-January 2026 for three steady jobs: summarizing product research, triaging support threads, and scaffolding small scripts. In short: it handles multistep reasoning well if I give it a clean structure. When I’m explicit about roles, state, and termination conditions, it follows through without wandering.

For tool use, function-calling has been stable. I lean on OpenAI’s function calling patterns and standard tool schemas, no surprises there. With well-defined tools (search, retrieval, a simple vector lookup), 5.3 keeps calling tidy. On a 20-email triage run, it averaged 1.7 tool calls per thread, down from 2.4 with my older setup. That cut the small “what now?” gaps. The catch: if my tool descriptions get vague, it tries to compensate with more calls.

What I notice most is its tolerance for partial context. If I only pass the relevant chunk and a slim state summary, it still reasons fine. But if I throw in lots of loosely related notes, it starts hedging.

Coding and agent workflow support

For code, 5.3 is steady at small-to-medium refactors. It’s good at generating diffs with clear explanations and can keep a consistent style if I seed a brief style guide. Where it slows is cross-file changes that need tight dependency awareness. I usually switch to a two-pass pattern: first pass asks it to outline the edits: second pass applies them file-by-file. That keeps it from overconfidently touching things it shouldn’t.

In agent workflows, 5.3 behaves best when I cap recursion and log every decision. I’ve settled on a three-step loop: plan → call tool → reflect. More than that and it gets chatty. I also nudge it to emit compact JSON for state, which reduces parsing errors. None of this is magical, it’s just guardrails that make the loop less needy.

Known limitations

- It can double-handle instructions when I mix system rules with long user tasks: I’ve learned to restate the key constraints near the bottom of the prompt.

- It sometimes insists on re-summarizing inputs I’ve already summarized, which pads tokens and time.

- On vision tasks (screenshots, UI mocks), it’s decent at labeling and describing, but misses small text and fine layout logic. I’ve had it mistake toggles for buttons more than once.

- Under pressure (tight tokens), it prefers safe generalities over precise edges. I see this when evaluating error logs: it names likely causes, but hesitates to commit without more context.

That’s my working picture of 5.3: reliable when I’m explicit, slightly anxious when I’m not.

What GPT-5.4 Signals Suggest Has Changed

I haven’t had direct access to 5.4 as of March 5, 2026. What follows is from early leak threads, a few credible developer notes in private forums, and patterns I’ve learned to watch for when a model family inches forward. I’ll flag each point as observation-friendly, leak-based, or speculative.

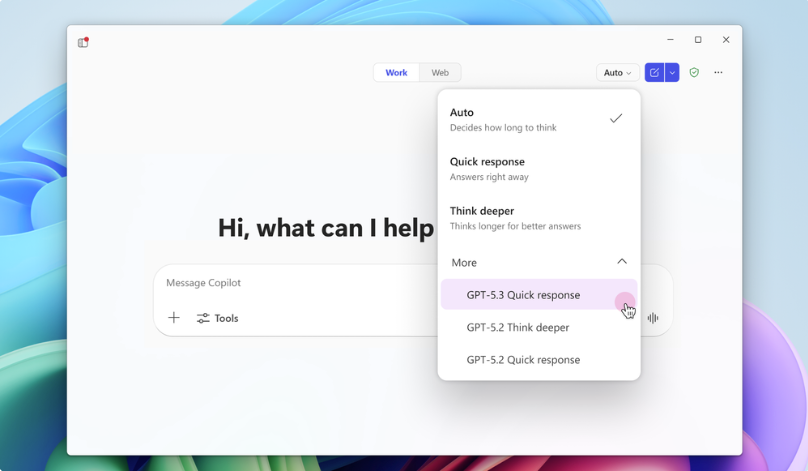

Inference speed, fast mode implications

Leak-based: several accounts mention a “fast mode” or low-latency tier for short-form reasoning. If true, that matters less for raw throughput and more for agent tempo. A 20–30% cut in first-token latency shifts the feel of a loop from lumbering to responsive. Benchmarks comparing GPT-5 with models like DeepSeek and GLM show how much latency and cost can shape developer workflows in practice. On my 5.3 setup, first-token latency hovers around 600–900 ms on average prompts: shaving even 150–200 ms would make tool chains less stop-start. I’d expect this fast mode to trade off some depth, useful for routing, classification, or quick validation before a heavier pass.

Observation-friendly: if 5.4 really adds a speed tier, I’ll likely split workflows: quick classify → route → deep pass. That’s already a common pattern: the speed just makes it smoother.

Vision input handling improvements

Leak-based: better small-text OCR and more stable layout reasoning. The hints point to improved recognition for low-contrast UI text and finer bounding box logic. If accurate, this would fix two of my 5.3 friction points: tiny copy in screenshots and differentiating UI controls.

Observation-friendly: this would save the back-and-forth I do when validating interface wireframes. Right now, I run screenshots through a separate OCR step when 5.3 shrugs. If 5.4 reduces those detours, I’ll drop one tool from the chain.

Potential context window expansion

Speculative: small bump in usable context or better retention across long prompts. I don’t mean headline numbers: I mean practical recall in the back half of a long conversation. If 5.4 holds task constraints more tightly without me re-stating them, it changes how I structure state. Fewer reminders, fewer token taxes. If it’s only a raw window increase without better recall, the benefit is smaller.

I’ll believe this one when I see fewer “re-interpretations” late in runs. Until then, I’m cautious.

Side-by-Side Comparison Table

I prefer to separate what I’ve measured from what I’ve only heard. Three quick tables, same lens each time.

Confirmed capabilities

| Area | GPT-5.3 | GPT-5.4 |

|---|---|---|

| Tool use / function calling | Stable with clear schemas: 1–3 calls per task typical in my runs | Not confirmed |

| Reasoning under token pressure | Degrades into generalities: benefits from restated constraints | Not confirmed |

| Vision (UI screenshots) | Misses small text: confuses some controls | Not confirmed |

| Agent loop behavior | Works best with 2–3 step loops and explicit stop conditions | Not confirmed |

| Coding across files | Needs two-pass strategy for safety: good diff explanations | Not confirmed |

References: I follow the patterns in OpenAI’s function calling docs and tool definitions in the API reference. If you’re curious, the official docs are a good anchor: OpenAI API: function calling and tool usage.

Leak-based signals

| Area | GPT-5.3 | GPT-5.4 (leak-based) |

|---|---|---|

| Inference speed tier | Standard modes only | Adds a faster, shallower tier for low-latency responses |

| Vision OCR | Adequate, struggles with tiny/low-contrast text | Improved small-text accuracy and layout handling |

| Cost per token | Current published rates | Slight reduction in fast tier (unverified) |

Source quality: mixed. Some details align with patterns from prior releases: none are confirmed.

| Area | GPT-5.3 | GPT-5.4 (speculative) |

|---|---|---|

| Context retention | Needs frequent reminders of constraints | Holds constraints longer with fewer restatements |

| Tool-use efficiency | Sometimes over-calls when schema is vague | Better call parsimony with similar prompts |

| Long-horizon planning | Hesitates to commit past 3–4 steps | Slightly steadier multistep planning |

Speculative improvements

Why These Changes Matter for Developers

Impact on agent loop design

If the “fast mode” exists, I’d redesign loops to front-load cheap certainty. Quick classify, then branch: simple tasks complete in fast mode: complex ones escalate to the full-depth model. That alone can cut human babysitting. In my current 5.3 stack, I spend energy preventing loops from spiraling. A speed tier could shift that energy into clearer routing instead.

Better vision handling would simplify my UI analysis pipeline. Right now, I use a three-step chain for mocks: basic caption → OCR pass → layout check. If 5.4 merges the first two, I’ll retire the OCR hop and just keep the layout validator. That’s one fewer tool to maintain, and fewer places for errors.

If context retention improves, I’ll reduce the drumbeat of reminders in prompts. I’d keep a small, immutable rules block and trust the model to carry it further into the run. Less scaffolding, fewer tokens, same outcomes.

Cost-performance tradeoffs

A speed tier usually comes with a quality tax. I treat that as a feature, not a bug. Use it for:

- routing and lightweight validation (did we parse the date, yes/no?),

- early exits (is this a known FAQ?),

- health checks on retrieved context (does this chunk even mention the entity?).

For everything else, reasoning that shapes outputs, you pay for depth. If 5.4’s fast tier is cheaper per token, I’d expect small savings across high-volume tasks, but the real gain is latency. Cost per task may fall a little: perceived speed may improve a lot.

If nothing changes on pricing, I’d still split the work. Even with 5.3, using a smaller/cheaper model for routing often pays off. A native fast tier would just reduce the glue code.

Migration considerations

- Start with shadow tests. Run the same prompts through 5.3 and 5.4 (when available) and diff outcomes. Don’t switch the live path until you’ve seen a few dozen edge cases.

- Keep your tool schemas strict. Vague descriptions inflate call counts on 5.3: they’ll likely do the same on 5.4, fast or not.

- Log token pressure. Many “regressions” are just tighter prompts. Track window usage and prune boilerplate.

- Version prompts. I keep a tiny changelog in my system messages. If 5.4 behaves better with leaner reminders, you’ll want a paper trail of what you removed.

- Watch vision quietly. If you rely on screenshots, test with low-contrast text, cramped UI, and odd fonts. One good test set beats a dozen anecdotes.

If you’re a small team, the safest move is phased: pilot a narrow workflow (routing, triage), then expand.

For solo builders, I’d try one habit change: add a “fast or full?” gate at the top of your prompt chain. Even if 5.4 doesn’t ship a fast mode, the discipline helps.

Important Caveat (comparison based on leak signals)

Everything about GPT-5.4 here is secondhand until there’s an official release or docs. The 5.4 parts are a mix of leak-based signals and careful guessing from past updates. If and when 5.4 is real, I’ll rerun the same tasks and update this. For now, consider this a map drawn in pencil, not ink.

One last thought: even small speed bumps can unclench a workflow. If that’s all 5.4 brings, I’ll take it.