GPT-5.4 Release Date: What the Signals Say

Hello, everyone! I’m Dora. A small thing set this off. I opened my notes last week to update a script that leans on GPT for summarizing user interviews. In my inbox: three messages asking if I’d “switched to GPT 5.4 yet.” I hadn’t even seen an announcement. That quiet disconnect, the inbox hype vs. the docs, sent me to do what I usually do: close the tabs with predictions, open the changelog, and trace what’s real.

Here’s what I found, and how I’m thinking about a GPT 5.4 release date as of 2026. No drama, just signals that tend to matter when you’re trying to plan work around moving targets.

Why There’s No Official Date Yet

Leak vs official announcement

I’ve learned the hard way: leaks lead to calendar whiplash. Screenshots float around, a name like “GPT 5.4” appears in a dropdown, and everyone starts talking like it’s out next Tuesday. Then I check the OpenAI blog and the API changelog and find silence. When that gap appears, I stick to the official side. If it’s not in the docs, in a signed blog post, or in the API model list, I treat it as noise.
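That last check is easy to make mechanical. Here is a minimal sketch, assuming you have already pulled the model IDs your account can see into a plain list (for example from the API's model-listing endpoint). The function name and the IDs below are made up for illustration, not real model names.

```python
def is_callable_model(candidate: str, listed_ids: list[str]) -> bool:
    """Return True only if the exact model ID appears in the fetched list."""
    normalized = {model_id.strip().lower() for model_id in listed_ids}
    return candidate.strip().lower() in normalized


# Hypothetical snapshot of model IDs; in practice this would come from the
# provider's model list, not be typed by hand.
listed = ["example-model-5", "example-model-5-mini"]

print(is_callable_model("example-model-5", listed))    # True
print(is_callable_model("example-model-5.4", listed))  # False
```

An exact match is deliberate here: a rumored name that merely resembles a listed ID should still count as "not shipped".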

A simple rule that’s saved me from scrambling: model names in marketing slides are not the same thing as production endpoints you can call at scale. If procurement, privacy, or rate limits are in your world, this difference matters a lot.

What internal testing signals usually mean

Sometimes we see hints: private preview mentions in conference talks, a stray reference in an eval paper, or a partner demo that feels slightly… ahead. In my experience (2024–2026), those signals usually translate to one of three timelines:

- Weeks: If docs quietly add a “beta” flag and a limited-availability note, GA may be close.

- Months: If only partners have it and there’s no doc artifact yet, we’re likely several cycles out.

- Indefinite: If it shows up in slideware or third-party benchmarks without OpenAI citations, it might never ship as-named.
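The three buckets above can be written down as a tiny decision function. This is only a sketch of my own heuristic, not anything official: the `Signals` fields and the `"unknown"` fallback are names I've invented for illustration.

```python
from dataclasses import dataclass


@dataclass
class Signals:
    docs_beta_flag: bool      # docs show a "beta" flag or limited-availability note
    partner_only: bool        # only partners appear to have access
    official_citation: bool   # the vendor itself references the model somewhere


def estimate_timeline(s: Signals) -> str:
    """Map observed signals to the rough buckets from the list above."""
    if s.docs_beta_flag:
        return "weeks"
    if s.partner_only:
        return "months"
    if not s.official_citation:
        return "indefinite"
    # Cited officially, but no beta flag and no partner access: the list
    # above doesn't cover this case, so the sketch declines to guess.
    return "unknown"


print(estimate_timeline(Signals(docs_beta_flag=False, partner_only=True, official_citation=False)))
# months
```

The order of the checks matters: a doc artifact outranks partner chatter, which outranks slideware.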

I don’t say this to be cynical. I just prefer building project plans around things with URLs and version numbers.

OpenAI Release Cadence: Pattern Analysis

GPT-4 to GPT-5 timeline

Looking back helps. From GPT‑3.5 to GPT‑4, we saw a big step-change, then a long tail of iteration. After GPT‑4, progress arrived less as a single “leap day” and more as steady capability layers: vision, function calling improvements, tool use, cost/latency trims. The lesson for me: the headline model number is rarely the day-to-day pivot. The supporting releases and reliability gains change my workflows more.

GPT-5 incremental version releases

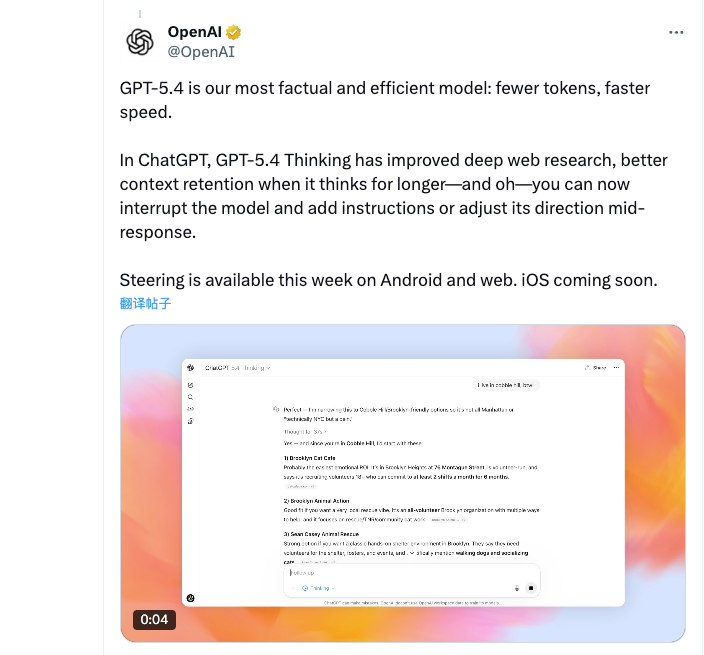

OpenAI tends to ship in increments: point releases, specialized variants (reasoning-focused, multimodal-tuned), and new context window options. Even when a “5.x” label appears, it often arrives alongside:

- New tokens-per-minute limits or pricing tiers that enable (or block) real adoption

- Tooling upgrades (structured output, improved function-calling semantics)

- Safety/eval updates that shift what’s allowed by default

So if a GPT 5.4 appears, I expect it to land as part of a cluster (docs, pricing tables, SDK nudges), not as a lone flag in the wind. That cluster is what flips my roadmap.

Comparison to Anthropic and Google release cadence

Competitors matter for context, not prediction. Benchmarks comparing GPT models with alternatives like DeepSeek and GLM show how quickly performance and cost dynamics can shift across model generations. Anthropic and Google have followed similar arcs: a marquee family release, then rapid sub-versions that harden reliability and reduce latency. Names differ; the tempo doesn’t. For planning, I assume:

- Major families: 9–18 months apart

- Notable increments: every 2–4 months, sometimes faster when safety and infra align
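To turn the 2–4 month assumption into calendar dates, a little month arithmetic is enough. This is a rough sketch: `add_months` and `increment_window` are helper names of my own, and the input date is arbitrary, chosen only to exercise the arithmetic, not a real release date.

```python
import calendar
from datetime import date


def add_months(d: date, months: int) -> date:
    """Shift a date by whole months, clamping the day to the month's length."""
    index = d.month - 1 + months
    year = d.year + index // 12
    month = index % 12 + 1
    day = min(d.day, calendar.monthrange(year, month)[1])
    return date(year, month, day)


def increment_window(last_release: date) -> tuple[date, date]:
    """Earliest and latest planning dates for the next notable increment."""
    return add_months(last_release, 2), add_months(last_release, 4)


# Arbitrary example date, not a claim about any actual release.
start, end = increment_window(date(2026, 1, 31))
print(start, end)  # 2026-03-31 2026-05-31
```

I treat the result as a planning range to pencil in, not a forecast.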

When timelines slip, it’s usually for the same reasons: eval thresholds, model behavior at edge cases, and infrastructure scaling. None of that shows well in a screenshot, but it’s what actually gates release dates.

Realistic Launch Window Scenarios

Scenario A: possible Q2 2026 release

If you forced me to circle a window on the calendar for a potential GPT 5.4, I’d pick late Q2 2026. Not because of any secret, but because the cadence of past point releases plus the current pacing of partner events makes that window plausible. A few things I’d watch for in April–June:

- A beta mention in the API changelog with a concrete model name

- Updated pricing tables in the docs the same week

- Early references in OpenAI eval write-ups linking to method notes

If those don’t appear by mid-June, I’d slide my expectations to late summer.

Scenario B: skip directly to GPT-5.5

OpenAI has skipped tidy numbering before. If internal evals suggest the upgrade is more than incremental (say, materially better reasoning traces or tool orchestration), it could get a 5.5 label instead. Practically, that would mean a bigger doc rewrite, likely fresh safety cards, and a migration note telling us what to change in prompts and function schemas. For teams, it’s a different kind of work: less toggling a model name, more retesting assumptions and guardrails.

Factors that could delay launch

When releases drift, I usually see one (or more) of these in the background:

- Reliability under load: Great in lab, fussy at scale. Spiky latencies, odd timeouts.

- Safety regressions: Edge behaviors failing red-team tests, especially with tool use.

- Cost curves: Model’s too expensive to run at planned tiers; pricing needs another pass.

- Docs debt: New behaviors without clear developer guidance slow responsible rollout.

None of these are glamorous. All of them are good reasons to wait a few weeks.

What Developers Should Watch For

OpenAI developer announcements

I keep two tabs pinned:

- The official OpenAI blog for product posts.

- The Developer forum when I need to see what breaks or surprises people first.

If a release is real, both light up within a day or two of each other. When only the forum buzzes, I stay put.

API changelog signals

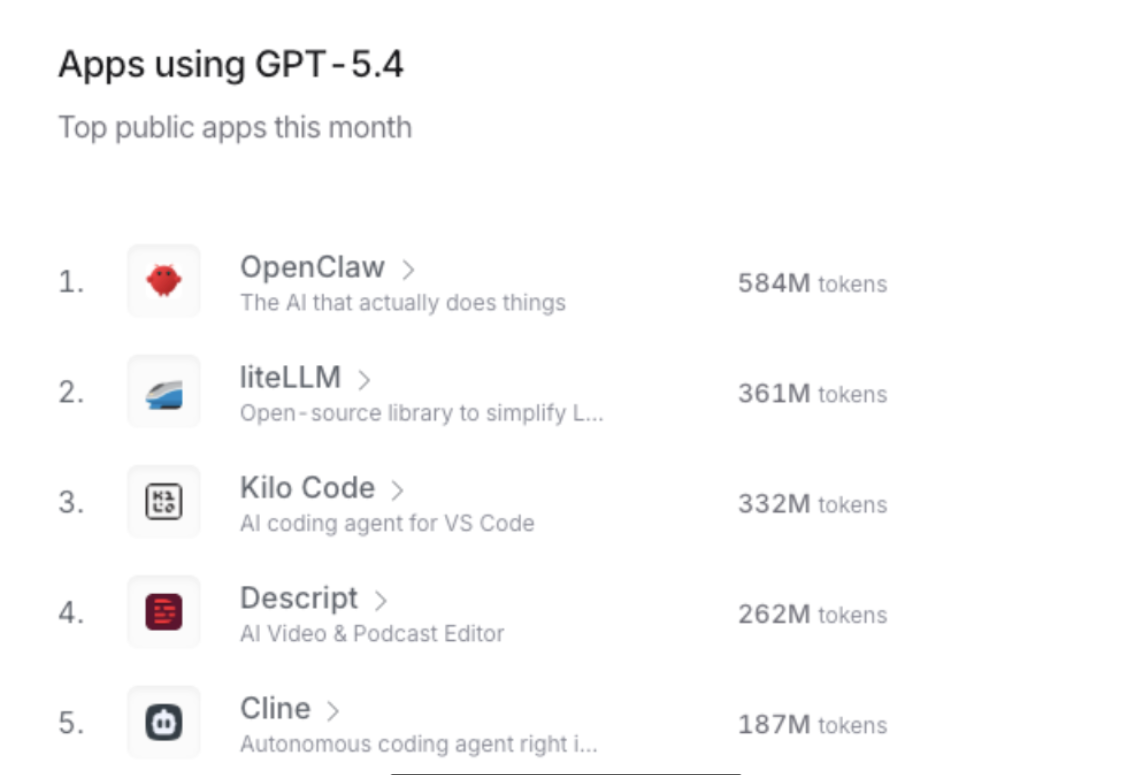

The changelog is the most honest artifact. A few tells I look for:

- Model alias updates: when a stable alias (like “-latest”) quietly points to a new family

- Beta tags that change to GA without fanfare

- Pricing and rate limit lines landing the same day, a sign the infra is ready
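The alias tell in particular is easy to track if you keep dated snapshots. A minimal sketch, assuming you record an alias-to-target mapping by hand (or from whatever metadata you trust) each time you read the changelog. The function name, alias names, and targets below are placeholders, not real model identifiers.

```python
def diff_aliases(before: dict[str, str], after: dict[str, str]) -> dict[str, list]:
    """Compare two alias -> target snapshots and report what moved."""
    added = sorted(set(after) - set(before))
    removed = sorted(set(before) - set(after))
    repointed = sorted(
        (alias, before[alias], after[alias])
        for alias in set(before) & set(after)
        if before[alias] != after[alias]
    )
    return {"added": added, "removed": removed, "repointed": repointed}


# Placeholder snapshots taken a week apart.
last_week = {"example-latest": "example-5.0"}
this_week = {"example-latest": "example-5.1", "example-5.1-beta": "example-5.1"}

print(diff_aliases(last_week, this_week))
# {'added': ['example-5.1-beta'], 'removed': [], 'repointed': [('example-latest', 'example-5.0', 'example-5.1')]}
```

A non-empty `repointed` list is the quiet alias change I'm describing; everything else is context.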

I also skim SDK release notes on GitHub. Maintainers sneak small but revealing lines into version bumps. If a client adds a constant for a new model name, that’s usually not an accident.

Early access community signals

I trust patterns more than pronouncements. When a few independent developers share:

- Reproducible latency numbers over a week (not a one-off)

- Prompt diffs that show consistent gains, not cherry-picked wins

- Notes on failure modes (e.g., tool-calling hallucinations down in specific schemas)

…then I start migrating small workloads. Not before. My rule of thumb this past year: two weeks of stable signals before touching production.
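The two-week rule can be checked against your own measurements. Here is a rough sketch, assuming one median-latency reading per day. The 15% tolerance is an arbitrary threshold I picked for illustration, and the function name is mine.

```python
from statistics import median


def stable_for_two_weeks(
    daily_p50_ms: list[float], tolerance: float = 0.15, days: int = 14
) -> bool:
    """True if the last `days` readings all sit within `tolerance` of their median."""
    if len(daily_p50_ms) < days:
        return False  # not enough history to judge yet
    window = daily_p50_ms[-days:]
    mid = median(window)
    return all(abs(x - mid) <= tolerance * mid for x in window)


# Made-up readings in milliseconds, one per day.
steady = [800.0 + (i % 3) * 10 for i in range(14)]
spiky = steady[:-1] + [2000.0]

print(stable_for_two_weeks(steady))  # True
print(stable_for_two_weeks(spiky))   # False
```

Using the median as the reference keeps a single bad day from shifting the baseline, while that same day still fails the check.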

Disclaimer (timeline speculative)

There’s no official GPT 5.4 release date at the time of writing. Everything above reflects my own testing habits and how I plan around moving targets. If your constraints are tighter (compliance, SLAs, or big user traffic), give yourself more buffer.

If you’re juggling the same question I was last week, “Should I wait for 5.4?”, my quiet answer is: ship with what’s reliable, and keep one eye on the changelog. If 5.4 lands soon, you’ll see it there first. And if it doesn’t, you haven’t paused your work for a rumor.

I’ll keep the tab pinned. You know the one.